* 23 entries including: mining infrastructure, alternative fuels compared, bringing tyrants to justice, dot xxx domains, intimidation cyberscams, Three Gorges dam, barcoding nature, biology modeling language, advertising & TiVo, flash memory everywhere, flash environments, bear mutts, $100 laptop, disease mongering, American Airlines, North Korean & Chinese authoritarianism, San Francisco versus earthquakes, pricetag for Iraq, energy availability, power from manure, my new car, business cards.

* NO DOT XXX: There has been lobbying over the last few years for a ".xxx" Internet domain name for online porn, or "adult entertainment" if you like. For example, under the proposed scheme a porn site might name itself "www.hotbabes.xxx". Proponents of the idea -- led by ICM Registry Inc. of Jupiter, Florida, an Internet registry company -- suggested that the ".xxx" domain name would allow porn sites to be easily screened out by those who objected to them, and reduce public pressure on pornsite operators.

By that argument, a ".xxx" domain might have been thought to make everybody happy. In reality, opinion ran against the idea. The Internet Corporation for Assigned Names and Numbers (ICANN) had given tentative approval to the ".xxx" domain name in June 2005, but after considerable protest it has now withdrawn its endorsement.

As it turned out, setting up a ".xxx" domain posed more problems than it solved. Pornsite operators worried that the ".xxx" domain would make them easier to shut down, and were particularly alarmed when a bill mandating a ".xxx" domain name was submitted to the US Congress. In addition, many pornsite operators are interested in adapting their offerings to appeal to a wider, mainstream public, and the ".xxx" domain name would have been an obstacle to doing so. Anti-porn activists worried that the pornsite operators would be free to use both ".com" and ".xxx" domains, extending their influence. They also felt that it would legitimize porn on the Internet. ICANN decided the whole thing was fearsome and best left alone, not even offering ".xxx" as an option. However, ICANN did approve a ".tel" domain name to support online reference to telephone numbers.

ICM had originally proposed the ".xxx" domain in 2000, only to see it shelved by ICANN in short order. ICM modified the proposal and resubmitted it to ICANN in 2004. Some of the critics of the ".xxx" domain name have suggested that ICM's real motives were to sell new domain names and drum up new business, one critic calling it a "land grab". ICM officials replied that a number of pornsite operators backed the proposal, and the company still wants to push the idea.

* INTIMIDATION: According to an article from CNET.com ("Your Money Or Your Files" by Joris Evers), writers of malicious software have taken a step up in malevolence by distributing "ransomware" that threatens to delete files from a victim's PC unless a payment is made. The malware, named "Ransom-A" by antivirus company Sophos, gets the user's attention with offensive imagery and text, then says that a file will be deleted every half hour, unless $10.99 USD is handed over. The victim is told to pay through Western Union money transfer, and that a disarming code will be provided once the money is paid.

This is the second malware program to take this approach. In March 2006, an earlier program encrypted all the user's files and then demanded $300 USD to decrypt them. Sophos officials worry that this is the start of a trend. I somewhat doubt it myself. I keep backups; if anyone pulled a stunt like this on me, I would assume that my PC was completely compromised and clean it out, accepting some marginal loss of materials. [ED: As of 2021, I was half wrong. Since I do keep backups, I wouldn't pay up for ransomware -- but organizations with sloppy data security turned out to be only too vulnerable to it.]

* THREE GORGES DAM ERECTED: According to an article from BBC.com, the structure of the controversial Three Gorges Dam across the Yangtze River in China is now complete, and the waters are beginning to fill up behind it. Turbines and other systems still need to be installed, but when it's fully operational in 2009, it will be the biggest hydroelectric dam ever built. It is 2,309 meters (1.4 miles) wide and 185 meters (607 feet) tall. The dam itself will have 26 power turbines providing a total of 18 gigawatts of power, with ten more turbines to be installed in underground channels after 2010. A lock system currently allows vessels to pass the dam, with a ship elevator planned for a 2009 opening.

Although the notion of damming the Yangtze was proposed by the founder of the Chinese Republic, Dr. Sun Yatsen, and considered by Mao Zedong, work didn't begin until 1993, when President Jiang Zemin began the project. Officially, the dam cost $25 billion USD, but most believe that is a substantial underestimate.

The lake behind the dam will be 660 kilometers (410 miles) long and will submerge 632 square kilometers (244 square miles) of land. About 1,300 towns and villages are being relocated. The government claims that the million or so citizens displaced will be given new homes, jobs, and compensation, but there have been complaints that corrupt officials have stolen the money provided for the job, and there have been prosecutions. Green activists protest that the reservoir will drown scenic areas and important historical sites, as well as accumulate pollutants. However, the government believes that the need for power and for flood control on the Yangtze outweighs these considerations. Floods on the Yangtze have killed hundreds of thousands in living memory. Government officials make no apologies for the dam and regard it as a monument to the power of the Chinese state.
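The metric and imperial figures in this item can be cross-checked with the standard conversion factors, and the turbine numbers give an average output per unit. A small Python sketch; the per-turbine figure is simply 18 gigawatts divided by 26 and says nothing about the actual rating of any individual turbine.

```python
# Standard conversion factors (the mile and foot are defined exactly in meters)
M_PER_MILE = 1609.344
M_PER_FOOT = 0.3048
KM2_PER_SQ_MILE = 1.609344 ** 2  # square kilometers in one square mile

def m_to_miles(m: float) -> float:
    return m / M_PER_MILE

def m_to_feet(m: float) -> float:
    return m / M_PER_FOOT

def km2_to_sq_miles(km2: float) -> float:
    return km2 / KM2_PER_SQ_MILE

# Figures quoted in the text
print(f"Dam width:    {m_to_miles(2309):.2f} mi")         # 2,309 m
print(f"Dam height:   {m_to_feet(185):.0f} ft")           # 185 m
print(f"Lake length:  {m_to_miles(660_000):.0f} mi")      # 660 km
print(f"Flooded area: {km2_to_sq_miles(632):.0f} sq mi")  # 632 km^2

# Average output per turbine if 18 GW is shared evenly among 26 turbines
avg_mw = 18_000 / 26
print(f"Average per turbine: {avg_mw:.0f} MW")
```

The results agree with the rounded imperial figures given in the text.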

* ALTERNATIVE FUELS COMPARED (1): As discussed in an article from POPULAR MECHANICS ("How Far Can You Drive On A Bushel Of Corn?" by Mike Allen, May 2006), the steep increase in fuel prices over the previous few years has led to a parallel surge of interest in alternative fuels, such as ethanol and biodiesel -- and, somewhere down the road, straight hydrogen, with the US Department of Energy (DOE) envisioning that America will eventually be a hydrogen economy. Although the Bush II Administration was slow to get on the bandwagon, President Bush now says he would like to see hydrogen-powered cars on the market by 2020.

Hydrogen is not here yet, but ethanol is already widely used as a gasoline additive, and use of biodiesel is increasing rapidly. Bush signed new energy regulations into law in the summer of 2005 and then announced the "Advanced Energy Initiative" early in 2006, establishing a target of national use of 28.5 billion liters (7.5 billion gallons) of ethanol and biodiesel by 2012, a level almost twice as great as now.

Many alternative fuels sound good on paper, but the question remains of how practical, cost-effective, and environmentally sound they are at present. A careful analysis of the options -- ethanol, methanol, synthetic fuel from coal, natural gas, biodiesel, electricity, and hydrogen -- gives some interesting results.

* Ethanol is just plain old grain alcohol, obtained by fermenting a grain mash and distilling the result. Corn is the favored feedstock, and the production method is conceptually the same as it is for producing moonshine corn liquor, if generally conducted on a much bigger scale.
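The chemistry of the fermentation step can be checked with a little arithmetic. The sketch below is a minimal Python example that assumes the corn starch has already been broken down into glucose and uses the textbook fermentation equation C6H12O6 -> 2 C2H5OH + 2 CO2; it computes the theoretical upper limit on ethanol yield from rounded standard atomic masses. Real plants get less than this, since the yeast diverts some of the sugar to its own growth.

```python
# Standard atomic masses (g/mol), rounded
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}

def molar_mass(formula: dict[str, int]) -> float:
    """Molar mass of a compound given as an element -> atom count mapping."""
    return sum(ATOMIC_MASS[el] * n for el, n in formula.items())

GLUCOSE = {"C": 6, "H": 12, "O": 6}
ETHANOL = {"C": 2, "H": 6, "O": 1}
CO2 = {"C": 1, "O": 2}

# Fermentation: C6H12O6 -> 2 C2H5OH + 2 CO2
glucose_mass = molar_mass(GLUCOSE)
ethanol_mass = 2 * molar_mass(ETHANOL)
co2_mass = 2 * molar_mass(CO2)

# Sanity check: mass balances on both sides of the reaction
assert abs(glucose_mass - (ethanol_mass + co2_mass)) < 1e-9

theoretical_yield = ethanol_mass / glucose_mass
print(f"Theoretical ethanol yield: {theoretical_yield:.3f} kg per kg of glucose")
```

The result, a little over half a kilogram of ethanol per kilogram of glucose, means that nearly half the mass of the sugar leaves the plant as carbon dioxide.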

Pure ethanol isn't used to drive cars because cars won't start on it on cold days. It is instead used as the main component of the "E85" blend, which is 85% ethanol and 15% gasoline. E85 has an "energy density" -- energy per volume -- only about 65% as great as pure gasoline, and so it takes a little more than half again as many liters of E85 as gasoline to drive the same distance.
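The "half again as many liters" figure follows directly from the 65% energy-density ratio. A minimal Python sketch, assuming (as the text implicitly does) that an engine gets the same distance out of a unit of energy from either fuel; the 40-liter trip is a hypothetical example.

```python
# Energy density of E85 relative to gasoline, per liter (figure from the text)
E85_RELATIVE_ENERGY = 0.65

def e85_liters_needed(gasoline_liters: float,
                      relative_energy: float = E85_RELATIVE_ENERGY) -> float:
    """Liters of E85 carrying the same energy as the given liters of gasoline.

    Assumes the engine converts fuel energy into distance equally well on both fuels.
    """
    return gasoline_liters / relative_energy

trip = 40.0  # hypothetical trip that burns 40 liters of gasoline
print(f"{trip:.0f} L of gasoline ~ {e85_liters_needed(trip):.1f} L of E85")
print(f"Extra volume needed: {e85_liters_needed(1.0) - 1.0:.0%}")
```

Dividing by 0.65 multiplies the volume by about 1.54, so E85 needs roughly 54% more fuel by volume for the same trip.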

Ethanol does burn well and cleanly, though. It is also added in much smaller proportions, usually about 10%, to gasoline in many states to reduce emissions. Its drawback is that high-concentration ethanol blends are corrosive, demanding stainless steel and corrosion-resistant plastics in the vehicles that use them. Such vehicles are known as "flex fuel" machines.

Ethanol also has the virtue, in principle, of being neutral as far as carbon emissions are concerned, since the carbon released to the atmosphere by burning ethanol was originally taken from the atmosphere by the plants that were used to produce ethanol. Critics have sniped at ethanol, charging that it takes more energy to produce than it provides, but the DOE has calculated that it does in fact provide a reasonable, though not generous, margin of net energy.

Farmers have seen ethanol as a potential way of improving their own access to fuel supplies and, more importantly, an opportunity to make more money, and so farm states have been big on the technology. The industry is going strong, with 95 ethanol plants turning out 16.3 billion liters (4.3 billion US gallons) in 2005, and 40 more plants coming online by mid-2007 that will provide about 50% more capacity.

Some critics have suggested that the ethanol lobby is drunk on its own high-proof product, with the economics made more attractive by the fact that corn is at the low part of its price cycle, while ethanol is in relatively high demand. In fact, ethanol's price is so high that the ethanol being used as a gas additive is contributing to current price pressures at the pump. Corn prices are likely to rise, while greater production of ethanol will likely mean cheaper ethanol due to competition, which is a setup for a profit squeeze. The ethanol boom could end up being an ethanol bubble.

The most optimistic think that ethanol could wean the US from gasoline completely, but that idea isn't credible at present. Even with the new capacity coming online, ethanol will only be able to fulfill 3% of US fuel requirements. According to some estimates, to produce enough ethanol to replace gasoline would require dedicating 71% of America's farmland to ethanol production. Work is under way to develop processes that can use "cellulosic" plants, like switchgrass, as an ethanol feedstock, which could improve the bottom line. [TO BE CONTINUED]

* INFRASTRUCTURE -- MINING (6): Dimension stone tends to be expensive as a building material, and in practice its use in construction is dwarfed by that of brick, which can be thought of as manufactured stone, and of concrete, which can be thought of as moldable stone. Of course, bricks and concrete have a hybrid offspring in the form of concrete block. Other forms of "synthetic" materials used in construction include the "blacktop" used to surface roadways, as well as, to a small but increasing degree, plastics.

* Brickmaking is an ancient technology, playing an important part in the story of the Israelite exile in Egypt, and the traditional process of brickmaking remains in service in underdeveloped parts of the world: clay is dug up with pick and shovel; kneaded by bare feet in a wood tub; pressed into wood frames to dry in the sun; and then fired in a wood-burning kiln to give the final product.

The modern industrialized method of brickmaking is actually little more than an automated approach to performing the same steps. Clay is dug up, or "won" as the industry puts it, with backhoes and other heavy machinery, to be dumped into grinding mills and turned into a dry powder. A mixer unit blends the powder with water, with the resulting material usually drawn out as a long ribbon that is sliced into bricks by a fine wire, though sometimes the output is still dumped into wooden forms.

The damp or "green" bricks are loaded onto carts and then drawn through a kiln in the form of a long tunnel with a staged series of temperature zones. The bricks are dried for a day or two at low heat, fired for two or three days at high heat, then allowed to cool off for a few days. Bricks tend to be nonstandardized, varying between locales not merely because of the variation in clays available, but because of local differences in taste.

* Concrete has also been around for a long time, the material having been used to build the dome of the Pantheon in Rome. The Roman formula was misplaced during the Dark Ages, to be reinvented in Britain in the early 19th century.

People tend to confuse "concrete" and "cement", but they're not the same thing. Cement is just a sort of glue, a powder made from limestone and clay that have been roasted together in a kiln. Mix it with water and sand, and the result is "mortar" or "grout", used to set bricks and tiles. Add crushed stone or "aggregate" to mortar, and that's concrete.
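Since each material in this chain is the previous one plus more ingredients, the definitions nest neatly. A toy Python sketch of that nesting, using only the ingredient names given in the text; real mix designs are specified by proportions, which this ignores.

```python
# Ingredient sets for each material, following the definitions in the text.
# Proportions are ignored: this only records which ingredients are present.
RECIPES: dict[str, frozenset[str]] = {
    "cement": frozenset({"cement"}),
    "mortar/grout": frozenset({"cement", "water", "sand"}),
    "concrete": frozenset({"cement", "water", "sand", "aggregate"}),
}

def identify_mix(ingredients: set[str]) -> str:
    """Return the material whose ingredient set matches exactly, if any."""
    for name, recipe in RECIPES.items():
        if frozenset(ingredients) == recipe:
            return name
    return "not covered by these definitions"

print(identify_mix({"cement", "water", "sand"}))               # mortar/grout
print(identify_mix({"cement", "water", "sand", "aggregate"}))  # concrete

# Each material is a strict subset of the next one up the chain
print(RECIPES["cement"] < RECIPES["mortar/grout"] < RECIPES["concrete"])  # True
```

The strict-subset check captures the point of the paragraph: concrete contains mortar, and mortar contains cement, but not the other way around.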

Everyone is familiar with the "ready-mix" or "transit-mix" truck, more generally known as a concrete truck, with its spinning top-shaped drum on the rear. The raw materials are stored in heaps at the concrete plant, to be loaded into hoppers that measure them out into the drum of a truck. The loading process may be performed by a single operator under semi-automated control. The drum is set to spinning rapidly to mix the materials; once they're mixed, the drum is rotated more slowly just to keep the mix from settling out. There's a corkscrew blade inside the drum that promotes mixing when the drum is spun one way, but draws out the concrete when spun the other.

Concrete plants are necessarily local affairs, since concrete sets in about an hour and a half. A driver whose truck breaks down with a full load of concrete is in big trouble, since the end result will be a drum full of solid concrete. After being poured, concrete has to set or "cure". This is not merely a drying process; it involves an irreversible chemical reaction that results in a matrix of the material with water bound into it.
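The hour-and-a-half setting time is what keeps concrete plants local. A back-of-envelope Python sketch of the delivery radius; the 30 minutes for loading and pouring and the 40 km/h average truck speed are hypothetical assumptions for illustration, not industry figures.

```python
# Setting time from the text; the other two figures are hypothetical assumptions.
SET_TIME_MIN = 90.0    # "about an hour and a half"
HANDLING_MIN = 30.0    # assumed: batching at the plant plus discharge on site
AVG_SPEED_KMH = 40.0   # assumed: average truck speed on local roads

def max_haul_km(set_time_min: float = SET_TIME_MIN,
                handling_min: float = HANDLING_MIN,
                speed_kmh: float = AVG_SPEED_KMH) -> float:
    """One-way distance a loaded truck can cover before the concrete risks setting."""
    travel_min = max(set_time_min - handling_min, 0.0)  # time left for driving
    return speed_kmh * travel_min / 60.0

print(f"Delivery radius: about {max_haul_km():.0f} km")
print(f"With a 45-minute traffic delay: about {max_haul_km(handling_min=75.0):.0f} km")
```

Under these assumptions the radius is a few tens of kilometers, and any delay eats directly into it, which is why a breakdown with a full drum is such a problem.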

The reaction releases heat, and massive concrete structures sometimes feature temporary refrigeration systems to help cure the concrete more rapidly. Hoover Dam featured such systems; incidentally, the stories that workers were sucked into concrete during the construction of Hoover Dam are just urban folktales, since the concrete was only laid down in slabs that might come part of the way to a worker's boot-tops.

Concrete is poured into forms that generally consist of panels of thick plywood that's been oiled to keep the concrete from sticking, with the form held together by external braces and, for walls, internal rods whose ends are snapped off when the form is removed. The concrete may be reinforced by steel bars or "rebar", tied together with wire and sometimes bent to fit together. Rebar is generally ribbed to keep it in place, and often fitted with plastic caps to prevent its sharp ends from cutting up workers.

Stainless steel rebar or epoxy-coated rebar is used in environments where corrosion is a problem. Heavy steel mesh is sometimes used for reinforcement as well, and plastic reinforcements are becoming more common -- plastic's relatively expensive, but it resists corrosion and so is better suited for, say, traffic bridges in northern climates where roads are salted during the winter. Concrete-block structures can also be reinforced.

Forms for "artistic" concrete structures, with soaring smooth roofs and the like -- think the Sydney Opera House -- can be very elaborate. The moldable nature of concrete is almost uniquely suited to building such elegant structures. Dumping concrete into forms well off the ground requires use of a "pumper truck", which carries a long boom with a hose through which concrete is pumped. The pumper truck deploys supporting struts to keep it from falling over. For really tall structures, a crane carries a concrete bucket to the appropriate level for pouring.

Concrete elements can be prefabricated at a central plant and then hauled out to be set in place at the construction sites. In many cases, such prefabricated concrete elements are "prestressed", with the forms strung with hefty cables that are installed under tension. When the concrete element has cured, the cables are released from their anchors; as they try to shrink back, they squeeze the concrete into compression. Any tension load on the element must first cancel out that built-in compression, giving the element strength under tension forces, which is not otherwise an attribute associated with concrete structural elements.
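A simplified worked example of why prestressing helps, as a Python sketch. It assumes a member loaded along its axis, with the cable force acting through the center of the section and no prestress losses; the 300 x 300 mm section and the forces are hypothetical numbers chosen for round results. Real prestressed beams are designed mainly against bending, which this ignores.

```python
def net_axial_stress_mpa(prestress_kn: float, area_mm2: float,
                         applied_tension_kn: float) -> float:
    """Net axial stress in MPa (N/mm^2); positive is tension, negative is compression.

    Simplified model: cable force acts through the centroid, no prestress losses.
    """
    precompression = -prestress_kn * 1000.0 / area_mm2  # kN -> N, then N/mm^2
    applied = applied_tension_kn * 1000.0 / area_mm2
    return precompression + applied

AREA = 300.0 * 300.0  # hypothetical 300 mm x 300 mm section, in mm^2

print(net_axial_stress_mpa(0.0, AREA, 450.0))    # no cables: 5.0 (tension)
print(net_axial_stress_mpa(900.0, AREA, 450.0))  # prestressed: -5.0 (still compression)
```

Without the cables, the same load puts the concrete in 5 MPa of tension, at or beyond the tensile strength of typical plain concrete (roughly 2 to 5 MPa); with them, the section stays in compression.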

* Producing the cement for concrete is a big business in itself, requiring use of a "cement kiln", which is a long steel tube 3 meters (10 feet) or more in diameter, with a length of 30 to 150 meters (100 to 500 feet). The kiln is lined with firebrick to allow it to be heated to high temperature, and is set at a slight angle, with clay and limestone dumped into the high end. The tube is rotated at a rate of one revolution every minute or two, with the hot materials gradually flowing down to the low end.

The output is in the form of dried-out "clinkers", which are then crushed into cement powder in rotating bins full of steel balls. The kiln features exhaust flues and smokestacks, with "baghouses" to trap dust. The cement kiln may be one of the most interesting items in a cement plant, but it is generally dwarfed by the bins for raw materials and silos for finished product.

* Blacktop is sometimes referred to as "asphalt" or "macadam", though purists insist that all these terms mean slightly different things; the pros tend to call it "hot mix". The term "blacktop" is generally a safe bet, in any case meaning a material composed of aggregate stone mixed with hot "bitumen", a kind of tar that's obtained as the "bottom of the barrel" in petroleum refining. Blacktop is dumped in a hot state on a roadway, to spread out, cool, and harden.

A blacktop plant is a bit like a concrete plant, with various bins (or one internally segregated bin) containing different grades of aggregate. The aggregate is heated and dried before being stored in the bins. The stones are then mixed with hot bitumen, and the mix is dumped into a truck for delivery.

* Construction design tends to be conservative, for the simple reason that safety is a major concern, and it's taken time to incorporate plastics as major elements in construction projects. They are now being used for footbridges and light traffic bridges, mostly in Europe. Plastic bridges are cheap, often made with recycled plastics; easy to set up with minimal labor; and durable, with long lifetimes. They are manufactured in a prefabricated fashion and delivered to the installation site. Computer-aided design systems can be used to mix and match predefined components as requested by the customer. [TO BE CONTINUED]

* GIMMICKS & GADGETS: In the gimmicks and gadgets category this month, a company named TiroGage has introduced a tire pressure gauge that's screwed onto the valve stem of a tire and left there indefinitely, allowing tire pressure to be determined at a glance. This is the sort of gimmick I would definitely pick up, as long as I had some assurance that it was reliable -- I wouldn't want the gauge to blow out at freeway speeds -- and the price wasn't too steep. Right now, they're asking $25 USD for it; I think it would have to be at about $10 USD for me to bite.

* An issue of WIRED NEWS from a year and a half back had pictures of a fun gimmick: a set of 4.1 kilogram (9 pound) carbon-fiber wings that a skydiver can strap to his or her back to allow high-speed maneuvering during free fall. The "Skyray" was developed by Munich inventor Alban Geissler and is in the form of swept wings about a meter and a half in span, with little vertical "winglets" (or if you prefer, "finlets") on the wingtips to ensure stability; the skydiver grabs onto two handles to maintain control. The parachute is attached to the back of the Skyray, and once the fun's over the skydiver opens the parachute and lands normally, with the Skyray still strapped on.

More recent news indicates the design has evolved, with a powered version named the "Gryphon" propelled by twin small turbojets being prepared for testing. That might be real fun, but it is unclear if anyone is going to bite on it.

* A Georgia Tech materials research team has come up with another interesting gimmick: zinc oxide "nanowires" that generate electricity using a piezoelectric effect as they vibrate back and forth. Arrays of such nanowires could be grown on polymer substrates using chemical deposition processes, with scaling effects making the nanowires very sturdy and hard to break. Such arrays could be incorporated into pocket devices such as cellphones to make them self-powering, or be used to power medical implants.

* An article from CNET.com reports that a Silicon Valley startup named Ageia Technologies has introduced a "physics chip" for computer games and simulations. The "PhysX" coprocessor provides the details for properly simulating everything from smoke to stones, making everything from explosions to the fluttering of a flag much more realistic. Demonstrations display an awesome level of detail, but the PhysX chip doesn't come cheap: Ageia is asking $300 USD for it.
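To give a sense of the workload involved, here is a generic Python sketch of the kind of per-object update a game physics engine repeats every frame, in this case for falling debris bouncing off the ground. It uses semi-implicit Euler integration and an assumed bounce factor; it is not a description of how Ageia's PhysX chip works internally, only an illustration of the arithmetic such a coprocessor is meant to take off the main CPU.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2, acting downward

@dataclass
class Particle:
    y: float   # height above the ground, m
    vy: float  # vertical velocity, m/s (positive is up)

def step(particles: list[Particle], dt: float, restitution: float = 0.5) -> None:
    """Advance every particle by dt seconds using semi-implicit Euler integration."""
    for p in particles:
        p.vy += GRAVITY * dt   # update velocity first...
        p.y += p.vy * dt       # ...then position, using the new velocity
        if p.y < 0.0:          # went through the floor: clamp and bounce
            p.y = 0.0
            p.vy = -p.vy * restitution  # lose some speed on each bounce

# 1,000 bits of debris dropped from 10 m, simulated for 5 s at 60 frames per second
debris = [Particle(y=10.0, vy=0.0) for _ in range(1000)]
for _ in range(5 * 60):
    step(debris, dt=1 / 60)
print(f"Highest piece after 5 s: {max(p.y for p in debris):.2f} m")
```

Even this toy performs 300,000 particle updates for five seconds of action; realistic smoke, cloth, or rubble adds collisions between objects, which is where the computing cost really climbs.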

* In semi-related news, nanotube arrays made of titanium dioxide are being used to split water into hydrogen and oxygen, using sunlight as an energy source. A team at Pennsylvania State University has developed arrays with a sun-to-hydrogen conversion efficiency of 12% -- though only for the ultraviolet (UV) range of the electromagnetic spectrum. Although a thin film of titanium dioxide will split water when exposed to sunlight, the "forest" of nanotubes, each a few microns long, provides much more surface area for catalytic action, greatly increasing the conversion rate.

The problem is that UV is a relatively small component of the sunlight we receive on the ground. The Penn State researchers have linked up with another group at the University of Texas to build arrays that dope the titanium dioxide nanotubes with carbon, extending their sensitivity into the visible range. The researchers believe they will be able to exceed the US Department of Energy's goal of 10% conversion efficiency.
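The conversion efficiencies quoted here compare the chemical energy stored in the hydrogen with the light energy falling on the device. A minimal Python sketch of that ratio, assuming the standard Gibbs free energy for splitting water (about 237 kJ per mole of hydrogen) as the energy stored, and a hypothetical 1,000 watts per square meter of incident light. The denominator is the key detail: an efficiency measured against UV light alone, like the 12% figure for the Penn State arrays, is not directly comparable with one measured against full sunlight.

```python
# Energy stored per mole of H2 when water is split (standard Gibbs free energy)
DELTA_G_J_PER_MOL = 237_130.0
H2_G_PER_MOL = 2.016  # molar mass of hydrogen gas

def sth_efficiency(h2_mol_per_s: float, light_w_per_m2: float, area_m2: float) -> float:
    """Fraction of incident light power stored as chemical energy in hydrogen."""
    return h2_mol_per_s * DELTA_G_J_PER_MOL / (light_w_per_m2 * area_m2)

def h2_rate_for(efficiency: float, light_w_per_m2: float, area_m2: float) -> float:
    """Inverse of sth_efficiency: moles of H2 per second at a given efficiency."""
    return efficiency * light_w_per_m2 * area_m2 / DELTA_G_J_PER_MOL

# Hydrogen from one square meter at the DOE's 10% goal under an assumed 1,000 W/m^2
rate = h2_rate_for(0.10, 1000.0, 1.0)
grams_per_hour = rate * H2_G_PER_MOL * 3600
print(f"{rate:.2e} mol/s, or about {grams_per_hour:.1f} g of hydrogen per hour")
```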

Titanium dioxide nanotube arrays have also been covered with dyes to allow them to produce electricity directly from sunlight. I am beginning to think that by 2050, people will read about the energy insecurity of our present times and wonder what we were so worried about. The thing is that, with an ever-increasing array of tools to manipulate materials and organisms, we are likely to end up with "breakthrough" technologies that are now impossible to predict. I don't find people always as pleasant or conscientious as they like to claim, but they are capable of being extremely resourceful.

Unfortunately, that reasoning has a catch. The fact that such breakthroughs are impossible to predict means they can't be a factor in our planning. Moore's Law aside, could anybody have predicted the explosion of computing power and capability in the last two decades of the twentieth century? The optimistic view of the future is encouraging, but we can't take it to the bank.

* BARCODING NATURE: A short article from WIRED.com ("Mark Of The Beasts" by Jonathan Keats) described a new effort to bring plant and animal taxonomy into the 21st century. Traditionally, taxonomists interested in examining species of plants and animals from collections have relied on old-fashioned ledgers and card catalogs. How cumbersome this is can be seen from the fact that over 600,000 specimens are stored in the back rooms of the Smithsonian Museum of Natural History in Washington DC, and there are comparable collections in the great museums of other nations.

The current system would have been perfectly familiar to Charles Darwin. Now the Smithsonian is driving a global effort to introduce a new system that would have completely astounded Darwin. Three years ago, the museum began a "Consortium For The Barcode Of Life", the goal of which is to sequence the same segment of DNA from every species on Earth, which may number ten million or more, with the results accessible in a worldwide Internet database.

The target DNA sequence is a stretch of mitochondrial DNA coding for part of an enzyme named "cytochrome c oxidase subunit I (COI)". In 2003, this particular DNA segment was proposed as a useful "barcode of life" by geneticist Paul Hebert of the University of Guelph in Ontario, on the grounds that it is shared across species and varies widely between species, while remaining nearly constant within a particular species.
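Matching a specimen against a barcode library comes down to comparing sequences. A minimal Python sketch using the simplest measure, the fraction of positions at which two aligned sequences differ (the "p-distance"). The 20-base sequences and species names are made-up placeholders (real COI barcodes are several hundred bases long), and production systems use more sophisticated methods. The approach relies on the property described above: small differences within a species, large ones between species.

```python
def p_distance(a: str, b: str) -> float:
    """Fraction of positions at which two aligned, equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to the same length")
    return sum(x != y for x, y in zip(a, b)) / len(a)

def closest_species(query: str, library: dict[str, str]) -> tuple[str, float]:
    """Return the library entry nearest to the query, with its distance."""
    return min(((name, p_distance(query, seq)) for name, seq in library.items()),
               key=lambda pair: pair[1])

# Hypothetical reference barcodes (placeholders, not real COI sequences)
library = {
    "species_A": "ACTTTATATTTTATTTTTGG",
    "species_B": "ACGCTAGGTCTCATCTTCGG",
}

specimen = "ACTTTATATTTTATTTTCGG"  # one difference from species_A
print(closest_species(specimen, library))  # ('species_A', 0.05)
```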

The Smithsonian is now working on "barcoding" 10,000 bird species by 2010. This effort wouldn't be possible without the latest gene sequencing technology, and even with that it's still not cheap. Says a researcher involved with the exercise: "On three [gene sequencing] machines, we can process 6,000 samples a week for about two dollars apiece, but the total cost of the machines is over half a million dollars. Without the high tech robotics, it could cost up to five dollars a sample, and you can do only hundreds a week."
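The quoted figures allow a rough estimate of how quickly the robotic machines pay for themselves. A crude Python sketch: it takes "over half a million dollars" as exactly $500,000 and "up to five dollars" as exactly $5, and it ignores labor, maintenance, and the fact that the manual approach could not reach this throughput in the first place.

```python
# Figures from the quote; both bounds are treated as exact for this estimate.
MACHINE_COST_USD = 500_000.0  # "over half a million dollars" for three machines
COST_ROBOTIC_USD = 2.0        # per sample, with robotics
COST_MANUAL_USD = 5.0         # per sample, without ("up to five dollars")
SAMPLES_PER_WEEK = 6_000

savings_per_sample = COST_MANUAL_USD - COST_ROBOTIC_USD
break_even_samples = MACHINE_COST_USD / savings_per_sample
break_even_weeks = break_even_samples / SAMPLES_PER_WEEK

print(f"Break-even after ~{break_even_samples:,.0f} samples, "
      f"or ~{break_even_weeks:.0f} weeks at full throughput")
```

On these assumptions the machines pay for themselves in roughly half a year of full-time operation.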

Enthusiasts see "handheld" barcoders not too far down the road, and point out that the effort is not merely one of intellectual curiosity. For example, it would help track species of mosquitoes linked to specific diseases. The US Air Force is interested in the idea to permit identification of the remains of birds struck by aircraft, helping to map out bird migration routes to be avoided. Other applications include examination of imports to identify endangered or restricted species.

COI is of little use as a barcode for plants, whose mitochondrial DNA changes too slowly to tell species apart, but plant researchers have identified alternate "barcodes" that work. A complete "Barcode Of Life" is expected to cost over a billion USD. Taxonomists are excited by the program, but even they admit that they don't completely understand the real potential of the knowledge. It also raises the worry that they may be working themselves out of a job, providing such a complete listing of species that few will be inclined to go into the field in the future.

* BIOLOGY BY COMPUTER: As reported by an article in AAAS SCIENCE ("Life In Silico: A Different Kind Of Intelligent Design" by Kim Krieger, 14 April 2006), the unraveling of the basic mechanisms of life during the 20th century holds out the promise of engineering organisms at a fundamental level in the 21st. Given how complicated even simple organisms are, it's obvious that computer power will have to be called in to support such work. That's the dream of a team of computer researchers at Harvard University under mathematician Jeremy Gunawardena. The group is close to an initial release of a "biology language" they call "Little b", an oblique reference to the popular C programming language.

The idea of modeling organisms and biological processes on computer is not new, having been used by pharmaceutical companies and researchers investigating complicated diseases. In fact, a library of fifty-plus biological models is now available from "BioModels Database" at the European Bioinformatics Institute (EBI) in Hinxton, UK. However, so far it has proven difficult to reuse parts of one biomodel to help build another. The Harvard group is working to produce a tool in the form of Little b that will standardize biological computer models in such a way that parts or "modules" of one model could be easily imported into another. It will be sort of like a biological "computer aided design (CAD)" package. CAD systems are common in machine design, with users able to select parts from the CAD system database and see how they fit together without even having physical parts; Little b will provide a system that will allow biologists to engineer organisms in a similar way.
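The modular idea can be illustrated with a toy. The Python sketch below is hypothetical and is not how Little b works (Little b is built on LISP); it simply treats a model as a set of chemical species plus named reactions, and "importing" a module merges its species by name and copies its reactions in under a prefixed ID so they don't collide.

```python
from dataclasses import dataclass, field

# A reaction is (reactants, products), each a tuple of species names.
Reaction = tuple[tuple[str, ...], tuple[str, ...]]

@dataclass
class Model:
    """A toy biomodel: named species plus named reactions (hypothetical structure)."""
    name: str
    species: set[str] = field(default_factory=set)
    reactions: dict[str, Reaction] = field(default_factory=dict)

    def import_module(self, module: "Model") -> None:
        """Merge another model's parts into this one."""
        self.species |= module.species  # shared species (e.g. ATP) merge by name
        for rid, rxn in module.reactions.items():
            # Prefix reaction IDs with the module name to avoid clashes
            self.reactions[f"{module.name}.{rid}"] = rxn

# Two hypothetical modules that both involve ATP and ADP
kinase = Model("kinase", {"S", "S_p", "ATP", "ADP"},
               {"phosphorylate": (("S", "ATP"), ("S_p", "ADP"))})
recycler = Model("recycler", {"ADP", "ATP"},
                 {"regenerate": (("ADP",), ("ATP",))})

cell = Model("cell")
cell.import_module(kinase)
cell.import_module(recycler)
print(sorted(cell.species))    # ['ADP', 'ATP', 'S', 'S_p']
print(sorted(cell.reactions))  # ['kinase.phosphorylate', 'recycler.regenerate']
```

Merging species by name is the simplest possible design choice; a real tool has to decide when two models' "ATP" genuinely refer to the same thing, which is the kind of problem a standardized modeling language has to address.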

Little b builds on an existing standard for biomodels known as the "Systems Biology Markup Language (SBML)", which was developed by computer scientist Michael Hucka of the California Institute of Technology and software engineer / systems biologist Herbert Sauro of the Keck Graduate Institute in California. The acronym "SBML" is a deliberate nod to the "HyperText Markup Language (HTML)" used to format web pages. Just as any web page formatted using HTML will display in any Web browser, any biomodel formatted using SBML will run in any SBML-compatible modeling environment. The biomodels in the EBI BioModels Database use SBML, and now many scientific journals such as NATURE require that biomodels submitted to them use SBML as well.
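To show what "any tool can read it" means in practice, here is a minimal Python sketch that reads a hand-written SBML Level 2 model with nothing but the standard library's XML parser and lists its species and reactions. The one-reaction model (A converting to B) is made up for illustration and omits kinetic rate laws, so it is not a complete simulation model; serious work would use a dedicated SBML library.

```python
import xml.etree.ElementTree as ET

# Hypothetical one-reaction model in SBML Level 2 Version 1 syntax
SBML_TEXT = """
<sbml xmlns="http://www.sbml.org/sbml/level2" level="2" version="1">
  <model id="toy_model">
    <listOfCompartments>
      <compartment id="cell" size="1"/>
    </listOfCompartments>
    <listOfSpecies>
      <species id="A" compartment="cell" initialConcentration="1"/>
      <species id="B" compartment="cell" initialConcentration="0"/>
    </listOfSpecies>
    <listOfReactions>
      <reaction id="A_to_B" reversible="false">
        <listOfReactants><speciesReference species="A"/></listOfReactants>
        <listOfProducts><speciesReference species="B"/></listOfProducts>
      </reaction>
    </listOfReactions>
  </model>
</sbml>
"""

NS = {"sbml": "http://www.sbml.org/sbml/level2"}

def summarize(sbml_text: str) -> dict[str, list[str]]:
    """List species IDs and reactions (as 'reactants -> products') in an SBML model."""
    model = ET.fromstring(sbml_text.strip()).find("sbml:model", NS)
    species = [s.get("id")
               for s in model.iterfind("sbml:listOfSpecies/sbml:species", NS)]
    reactions = []
    for r in model.iterfind("sbml:listOfReactions/sbml:reaction", NS):
        ins = [ref.get("species") for ref in
               r.iterfind("sbml:listOfReactants/sbml:speciesReference", NS)]
        outs = [ref.get("species") for ref in
                r.iterfind("sbml:listOfProducts/sbml:speciesReference", NS)]
        reactions.append(f"{' + '.join(ins)} -> {' + '.join(outs)}")
    return {"species": species, "reactions": reactions}

print(summarize(SBML_TEXT))
# {'species': ['A', 'B'], 'reactions': ['A -> B']}
```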

SBML is a fine thing, but Little b is a big step forward. Hucka admiringly says of the Harvard team: "They're ahead of the curve." Sauro sees it as a solution to the "huge waste of grant money" due to the "chronic reinvention" of biomodels. Little b does seem capable of rationalizing biomodel design; although it isn't ready for general use yet, as part of their testing it has been used to build modular biomodels of embryonic cell development and even the entire Drosophila melanogaster fruitfly.

Not everyone is impressed. One difficulty is that Little b is based on a long-standing programming language named LISP, which was originally designed for artificial intelligence research. Few people familiar with LISP are neutral on it: it has its fanatical devotees, as well as an opposing faction that finds it obscure and clumsy. Sauro suggests that a sophisticated graphical user interface could be designed to protect users from having to worry about the details of Little b, though he adds that he is not aware of any such effort at present.

The Harvard team has also been criticized for not obtaining more inputs from the biomodeling community. Gunawardena believes that feedback will occur as Little b is introduced into the outside world, and that future versions will incorporate changes as required by experience.

* STOP LOOK & LISTEN: TV advertisers have been dreading the spread of digital video recorders (DVRs) like the popular TiVo system. While it was possible to skip over commercials with a VCR, most people didn't bother, but it's much easier to do so with a DVR. Now, according to a BUSINESS WEEK article ("Learning To Love The Dreaded TiVo", by David Kiley, 17 April 2006), advertisers are starting to stop worrying and love the DVR.

Sony Corporation is preparing to run TV ads for the company's Bravia flat panel TVs that will allow TiVo users to select different endings, even if they're watching the commercial live. Five seconds into the commercial, menu options pop up (one for women, two for men), allowing viewers to choose among 12 possible endings.

The issue with advertising is that, within limits, viewers don't mind ads all that much as long as the ads pitch something the viewers are interested in. Viewers will actually be engaged by ads for things they really want to buy. Targeting ads to an audience on a person-by-person basis was impossible with old-time broadcast TV, but the coming digital video age is a new frontier for advertisers. About 18% of US households will have a DVR by the end of 2006, with that number more than doubling by 2010. TiVo is now working on an "ads on demand" system for its product, using a broadband link to provide targeted ads.

Experiments are being performed with other schemes. Kentucky Fried Chicken has run a commercial that, when played back in slow motion, revealed a hidden puzzle message that yielded a coupon for a free Buffalo Snacker sandwich. Response to the ad was highly positive. This may be the sort of gimmick that dies out quickly, but the age of the traditional commercial spot is clearly coming to an end. If the new age that is coming represents a threat to advertisers, it is also an opportunity for those who are quick enough on their feet.

* TRYING THE TYRANTS (3): One of the most high-profile of the courts trying deposed dictators and similar criminals is the "International Criminal Court (ICC)", which was set up in The Hague in 2002 as a neighbor to the ICTY and the long-standing UN International Court of Justice, which rules on disputes between states. The ICC is the world's very first permanent war-crimes tribunal. It is not directly backed by the UN. Its charter is to pursue justice against those who have committed crimes against humanity in a cheaper and more efficient way than the current courts. The Bush II Administration has not been a big fan of the ICC, perceiving that its charter implicitly undermines American sovereignty, though the administration now seems to be coming around to the ICC's necessity.

The ICC's first indictment was against Joseph Kony of the "Lord's Resistance Army (LRA)", which terrorizes Northern Uganda, and four of his henchmen. The LRA is infamous for kidnapping children -- turning the boys into whimsically sadistic soldiers and the girls into sex slaves -- as well as for grotesque mutilations of civilians. The ICC is also focusing on the atrocities committed in the struggle for the Congo, the Darfur region of the Sudan, the Ivory Coast, and the Central African Republic. However, the ICC's mandate is limited.

* Indeed, there is much criticism of the ICC and the other war-crimes courts, the critics arguing that they are politicized; selective; and in practice counterproductive, pressuring war criminals to keep on fighting since they won't be granted amnesty. Developing-world governments tend to see the courts as a front by which the big powers impose, often with double standards, their own concepts of justice on small countries.

Defenders counter that providing a forum for justice actually helps end the cycle of atrocity and reprisal by providing a sense of closure, and that punishment of the guilty provides a degree of deterrence against future atrocities. True, so far the butchers don't seem to be much deterred anywhere, but the defenders say that improved enforcement should have an effect. As far as the negative effects of eliminating amnesty go, peace accords have been signed in the Balkans and Afghanistan that have no formal amnesty provisions. Besides, amnesties are not popular, with countries that have granted them often backtracking later. The cry for justice is much too loud to be suppressed.

In fact, the South African Truth & Reconciliation Commission that investigated apartheid crimes, though widely associated with amnesty, did not provide blanket amnesty even for those who came forward, and absolutely did not provide amnesty for those who didn't. The South African government is now finally beginning to talk about prosecutions.

Advocates of international courts say that punitive justice and truth & reconciliation are not opposites but complements. Exactly how they play out depends on local circumstances. The history of justice and law is very old, but these are all new chapters, and it will take some time for them to be written, establishing legal precedents for future generations. [END OF SERIES]

* INFRASTRUCTURE -- MINING (5): If coal mining is as big as all other forms of mining combined, it is dwarfed in turn by quarrying. The typical vision of quarrying is extraction of granite and other "dimension stone" for floors, tables, and tombstones, but the reality is that the quarrying of crushed stone is much more significant. Crushed stone is a fundamental component of road and railroad building, building foundations, even filtration beds in water-treatment plants.

Crushed stone quarries tend to be local affairs, built on the outskirts of towns, for the simple reason that crushed stone is such a cheap material that it doesn't make economic sense to transport it very far. Crushed stone is basically just rock, and it's easy to find reasonable sources of rock almost anywhere. Such a quarry is really a kind of open-pit mine, with the stone in the "working face" drilled, blasted, and then mucked out by power shovels or front-end loaders.

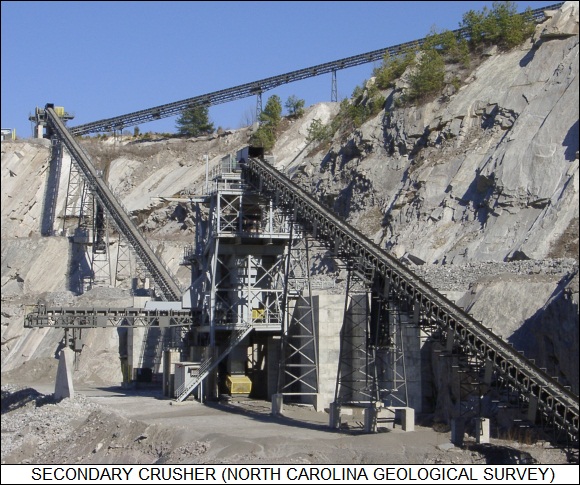

The broken stone is then dumped into a "primary crusher", the most prominent and expensive piece of gear on the quarry site, and the main bottleneck for quarry output. A primary crusher takes the big chunks of stone and breaks them down to football size. The crusher can take the form of a machine with giant jaws, or a giant spinning steel drum, tumbling around noisily like an enormous out-of-balance washing machine. The stones from the primary crusher are carried by conveyor to one or more "secondary crushers", which break the stones down into gravel ranging in size from a prune to a hen's egg. There may also be "tertiary crushers" to produce even smaller grades of gravel. The output is sorted by size using screens, to be dumped into trucks for delivery to end users, or stockpiled in heaps for use later.
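The crush-then-screen sequence can be sketched as a toy Python model. It is an idealisation under stated assumptions: each crusher is treated as shrinking a piece by a fixed reduction ratio (real crushers turn out a spread of sizes), and the screen apertures and grade names in `SCREENS` are hypothetical, not industry specifications.

```python
"""Toy crush-and-screen line; every size and ratio here is hypothetical."""

# Hypothetical screen apertures (mm), largest first, with the grade of stone
# retained on each screen. Real quarries work to their own specifications.
SCREENS = [
    (60.0, "oversize"),
    (40.0, "coarse gravel"),
    (20.0, "medium gravel"),
    (5.0, "fine gravel"),
]
FINES = "fines"  # whatever passes through every screen


def screen(piece_mm: float) -> str:
    """A piece stops on the first (largest) screen it is too big to pass."""
    for aperture_mm, grade in SCREENS:
        if piece_mm >= aperture_mm:
            return grade
    return FINES


def crush(piece_mm: float, reduction_ratio: float) -> float:
    """Idealised crusher: divide the piece size by a fixed reduction ratio."""
    return piece_mm / reduction_ratio


def process(piece_mm: float, ratios: tuple[float, ...] = (4.0, 5.0)) -> tuple[float, str]:
    """Run a piece through crushers in series (primary, secondary, ...), then screen it."""
    size = piece_mm
    for ratio in ratios:
        size = crush(size, ratio)
    return size, screen(size)


if __name__ == "__main__":
    # A 1,000 mm block: primary crusher -> 250 mm, secondary -> 50 mm.
    print(process(1000.0))                   # (50.0, 'coarse gravel')
    # Adding a hypothetical tertiary stage yields a finer grade.
    print(process(1000.0, (4.0, 5.0, 5.0)))  # (10.0, 'fine gravel')
```

Adding stages to `ratios` plays the role of the optional tertiary crushers: each extra stage shifts the output toward the finer screens.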

Most crushed stone used in the USA is limestone, but some is granite, as well as "traprock", which is actually a variety of different kinds of stone. When used for road paving, the crushed stone must meet specifications for skid resistance.

* A quarry for dimension stone is a very different beast from a quarry for crushed stone. Dimension stone is produced in relatively nice neat blocks, and so the quarry walls are in the form of irregular straight-sided steps. The lip of the quarry pit is surrounded by several derricks or "jib cranes" to pull out the stone and to transfer workers and their tools. The jib cranes have to handle a good deal of weight, so they are thoroughly guyed down by cables.

Slabs can't simply be blasted out without shattering them, and so a slab has to be cut out using more laborious means. Traditionally, a slab was cut out from a bench by drilling boreholes around the back and sides; this was once done with a steel bit and sledgehammers, but pneumatic and hydraulic drills provided a less agonizing means of accomplishing the task. A set of widely spaced holes was then drilled at the base of the slab, with a gimmick known as a "plug & feathers" inserted in each hole. A plug & feathers consisted of two tapered steel bars with a wedge between them, with the wedge pounded in by sledgehammer until the slab finally broke loose.

Nowadays, a wire saw, consisting of a power-driven loop of wire a few millimeters thick and studded with abrasive diamonds, is used to cut dimension stone. Boreholes still have to be drilled in the stone so the wire can be looped through them. Some types of stone can be cut by a high-temperature torch system called a "channeling machine", with the flame driven by fuel oil and pure oxygen. The flame doesn't melt the stone, instead causing it to "spall" or shatter under thermal shock. The channeling machine runs the torch back and forth repeatedly, deepening the cut on each pass; it is said to be an impressively noisy process. Drilling hasn't been completely abandoned, however, and in fact seems to be reviving a bit, thanks to new drilling machines with diamond-tipped bits.

The bottom of a cut slab still has to be drilled and split out, but the plug & feathers approach has disappeared. These days the preferred splitting tools are hydraulic wedges, inflating airbags, or small and precise explosive charges. The process of quarrying out a slab is, as it always has been, time-consuming: it may take weeks of hard work to obtain one slab. Once the slab is brought to the surface, it then needs to be cut and, if need be, polished for end use, which is still more work. [TO BE CONTINUED]

* FLASH MEMORY EVERYWHERE: As discussed in an article in THE ECONOMIST ("Not Just A Flash In The Pan", 11 March 2006), the impact of nonvolatile "flash" memory technology could hardly have been predicted. Today flash memory is used in digital music players, digital cameras, and cellphones, with hundreds of megabytes of capacity available at commodity prices. The global market in 2006 was $19 billion USD.

The basic concept was developed by two Bell Laboratories researchers, Simon Sze and Dawon Kahng, who came up with a variation on the classic field-effect transistor (FET). A normal FET has three connections: a "source", "drain", and "gate". The gate is effectively a plate whose voltage can be controlled to turn current between source and drain on and off. In effect, the FET is an electronically controlled switch.

The two researchers came up with an idea to add a second "floating" gate underneath the regular "control" gate. Activation of the control gate would cause the floating gate to acquire or lose a charge. Once the floating gate was charged or discharged in this fashion, the transistor would stay switched on or off indefinitely. In other words, the device was "nonvolatile" -- it didn't lose its memory the instant the power was turned off. Sze's boss asked him: "Simon, tell me, what use can you think of for this device?" He later admitted: "I couldn't think of anything." It took a lot of power to charge or discharge the floating gate, and so the memory was power-hungry. It was also slow and expensive, had a limited lifetime, and at the time seemed like a marginal technology at best.

Then, in 1980, a Toshiba researcher named Masuoka Fujio came up with a modified scheme in which entire blocks of a floating-gate memory were erased at one time. The result was cheaper and less power-hungry. His bosses weren't impressed either, but he saw potential in his "flash" memories, recognizing that they could occupy a niche between fast, cheap, but volatile computer RAM and hard disks. It was a perceptive insight, and Masuoka was eventually able to sell Toshiba management on the idea.
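Masuoka's simplification, erasing a whole block at once, can be illustrated with a toy model. The sketch relies on a well-established property of flash that the text doesn't spell out: erased cells read as 1, programming can only clear bits to 0, and the only way to turn a 0 back into a 1 is to erase the entire block. The names `FlashBlock` and `rewrite_byte` and the 16-byte block size are hypothetical; real erase blocks are far larger.

```python
"""Toy model of flash-memory block erasure (names and sizes are hypothetical)."""


class FlashBlock:
    """One erase block: bits can be cleared byte by byte, but set only en masse."""

    def __init__(self, size_bytes: int = 16) -> None:
        self.cells = bytearray(b"\xff" * size_bytes)  # erased state: every bit is 1
        self.erase_count = 0

    def program(self, addr: int, value: int) -> None:
        """Write one byte; this can only change bits from 1 to 0."""
        if value & ~self.cells[addr] & 0xFF:
            raise ValueError("need a block erase to turn a 0 bit back into 1")
        self.cells[addr] = value

    def erase(self) -> None:
        """Reset the whole block to all 1s in a single operation."""
        self.cells[:] = b"\xff" * len(self.cells)
        self.erase_count += 1


def rewrite_byte(block: FlashBlock, addr: int, value: int) -> None:
    """Change one byte 'the flash way': save the block, erase it, reprogram it."""
    saved = bytearray(block.cells)
    saved[addr] = value
    block.erase()
    for i, byte in enumerate(saved):
        if byte != 0xFF:  # erased bytes are already 0xFF, so skip them
            block.program(i, byte)


if __name__ == "__main__":
    blk = FlashBlock()
    blk.program(0, 0x0F)      # allowed: only clears bits
    try:
        blk.program(0, 0xF0)  # refused: would need to set bits back to 1
    except ValueError as err:
        print("refused:", err)
    rewrite_byte(blk, 0, 0xF0)
    print(hex(blk.cells[0]), blk.erase_count)  # 0xf0 1
```

The design point is the asymmetry: clearing bits is cheap and local, while setting them costs a whole-block erase, which is why changing even one byte means rewriting its block.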

By 1986, Toshiba was producing flash memories in pilot quantities, and two years later Intel of the US bought a license to produce flash as well. In 1987, Masuoka rethought the architecture of his original "NOR" flash to come up with a cheaper, denser "NAND" flash architecture. The flash revolution had begun. Since that time, other improvements have been added -- for example "multilevel" flash memories, in which each memory cell doesn't just have an ON or OFF state -- it also has a "1/3 ON" and "2/3 ON" state, allowing twice the bit capacity with the same number of cells on a chip. Another innovation has been to stack flash ROM chips on top of each other.
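The arithmetic behind multilevel flash is that a cell with n distinguishable charge levels stores log2(n) bits, so going from two levels to the four described above doubles the capacity. A minimal Python sketch, assuming a simple straight-binary mapping of 2-bit values onto the four charge levels (real devices use their own encodings and sensing thresholds):

```python
"""Sketch of 2-bit-per-cell ("multilevel") storage; the level mapping is assumed."""
import math

# Nominal charge of each cell state, as a fraction of full charge:
# OFF, "1/3 ON", "2/3 ON", ON. Symbol s is stored at LEVELS[s].
LEVELS = [0.0, 1 / 3, 2 / 3, 1.0]


def bits_per_cell(levels: int) -> int:
    """A cell with 2**k distinguishable levels holds k bits."""
    bits = int(math.log2(levels))
    if 2 ** bits != levels:
        raise ValueError("level count must be a power of two")
    return bits


def encode(data: bytes) -> list[int]:
    """Split each byte into four 2-bit symbols (most significant first), one per cell."""
    return [(byte >> shift) & 0b11 for byte in data for shift in (6, 4, 2, 0)]


def decode(symbols: list[int]) -> bytes:
    """Reassemble bytes from groups of four 2-bit symbols."""
    out = bytearray()
    for i in range(0, len(symbols), 4):
        byte = 0
        for sym in symbols[i:i + 4]:
            byte = (byte << 2) | sym
        out.append(byte)
    return bytes(out)


def sense(charge: float) -> int:
    """Read a cell by picking the nominal level nearest the measured charge."""
    return min(range(len(LEVELS)), key=lambda s: abs(LEVELS[s] - charge))


if __name__ == "__main__":
    print(bits_per_cell(2), bits_per_cell(4))  # 1 2
    cells = encode(b"Hi")
    print(len(cells))  # 8 cells for 16 bits, versus 16 cells at one bit per cell
    noisy = [LEVELS[s] + 0.05 for s in cells]  # slightly drifted charges
    print(decode([sense(c) for c in noisy]))   # b'Hi'
```

The `sense` step hints at the cost of the trick: with four levels packed into the same charge range, the margin for drift between neighboring states is much smaller than with two.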

* Flash memories have been getting more powerful every year, with gigabyte flash modules now available. As Masuoka perceived, flash memories have their particular niche: they can't compete in price per bit with disk drives, and they have neither the speed nor the lifetime to compete with RAM -- a flash memory will wear out after about a million write cycles, which would kill it off almost immediately if it were used for RAM.

However, a hard disk drive has a certain minimum cost no matter how little it stores, so for modest capacities flash can be cheaper, and flash memories can operate on far lower power than a hard disk drive. A million write cycles is not much of a problem with a digital camera, since the memory is written only when a picture is taken or deleted, not all the time as with RAM. As a replacement for a floppy disk, worn on a pendant, flash memory is almost ideal.
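The endurance argument comes down to simple division: lifetime equals the rated number of write cycles divided by how often a given cell is rewritten. A back-of-the-envelope sketch using the text's figure of about a million cycles; the two write rates are hypothetical round numbers chosen only to show the contrast, and the sketch assumes the same cell is rewritten every time.

```python
"""Back-of-the-envelope flash lifetime; the write rates below are hypothetical."""

ENDURANCE_CYCLES = 1_000_000  # rough figure quoted in the text
SECONDS_PER_DAY = 24 * 3600
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY


def lifetime_seconds(writes_per_second: float, cycles: int = ENDURANCE_CYCLES) -> float:
    """Time until one cell, rewritten at a steady rate, uses up its cycles."""
    return cycles / writes_per_second


if __name__ == "__main__":
    # Hypothetical RAM-style use: the same cell rewritten a million times a second.
    print(lifetime_seconds(1_000_000))  # 1.0 (seconds)
    # Hypothetical camera use: each cell rewritten 10 times a day.
    camera = lifetime_seconds(10 / SECONDS_PER_DAY)
    print(round(camera / SECONDS_PER_YEAR))  # 274 (years)
```

The same million cycles that would last about a second under RAM-style use stretch to centuries at camera-style write rates, which is the niche the text describes.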

There's a continuously moving border between the utility of hard disks and flash memory. The new XO "$100 PC" will have a flash drive, not a hard disk, to keep down costs -- but the problem is that operating systems and application software keep getting more and more storage-hungry.

Flash memory still has technological room for growth and hard disk drives are not likely to crowd it to extinction any time soon. New technologies for solid-state memories, such as "magnetic RAM" and "ferroelectric RAM (FRAM)" may replace the current flash technology in a decade or two, but they will merely move into the profitable niche already carved out by flash memory.

* FLASH ENVIRONMENTS: The spread of flash ROM technology has gone off in some surprising directions. According to an article by well-known computer systems reviewer Matt Roush from TECHNOLOGYREVIEW.com ("Your World On A Flash Drive"), one of the latest tricks is putting an entire computer operating environment on a USB-based flash drive.

PCs are becoming more and more widespread, and it's not hard to see a day when they will become fixtures in hotel rooms. Now imagine that, instead of lugging a laptop computer around on a trip, we have a pocket flash drive that we can plug into the USB port of any computer we find, and then boot the PC off the flash drive. The computer then really is the flash drive, the PC being reduced to little more than a console, used to access the operating environment and data files on the flash drive.

There are various ways to implement such a scheme, with some workers developing miniature Linux operating systems to store on a flash drive and others building operating environments that run as a layer on top of Microsoft Windows. Elements are already in place: flash drive manufacturers M-Systems and SanDisk offer a system named "U3" that can be used to run applications, such as web browsers, off a flash drive. This allows flash drive users to access a web browser configured with all their bookmarks and other preferences.

It's not a big jump from that to a full operating environment. A company named InfoEther is now in beta with its "indi" software, which can be loaded onto a flash drive to provide a set of applications -- calendar, address book, memo pad, and instant messenger program. The drive is plugged into a PC USB port and takes control when the PC is booted. The indi apps can hook up over the Internet to other indi users for file sharing and multiplayer games; new apps can be purchased to plug into the indi framework.

Some think the idea is misguided, arguing that there are now Internet-based operating environments -- think of My_Yahoo as a crude example -- that give all the portability without lugging a flash drive around: anyone who can get onto the Internet has everything needed anyway. Advocates reply that it's not always easy or convenient to connect to the Internet, while a flash drive can be kept handy at all times.

[ED: I just bought a 1GB necklace-type flash drive for going on trips. My road tours are focused on picture-taking, and at the end of every day I dump my digital-camera images to my laptop, where I sort them out and discard the obvious junk. It makes me a bit nervous, though, since the laptop could be stolen or lost, or could malfunction. It would be an expense to replace it, but it would be more distressing to lose all the pictures, because that would tank the entire trip. On future road trips, I intend to dump the pix to the flash drive and then wear it. It would likely survive an accident that would kill me, which gives me a sense of security -- sort of.

Maybe one of these days I won't even need the laptop, just a flash drive with a few gigs of storage to handle all the utilities I like -- a mini-set of UNIX-type tools would be nice. It would really help if I could just plug a flash drive into a camera USB port and dump the images directly. I think some of my cameras might actually be able to do this, but I haven't investigated yet.]

[ED: In 2021, I bought a set of 8GB flash drives, just for giveaways. It's hard to find any smaller drives any more. As far as cameras go, I'm focused on smartphone cameras now, and the images get uploaded wirelessly to my PC via OneDrive.]

* MUTT FROM HELL: According to an article from BBC.com, sometime in April an American on a hunting trip in the Canadian Northwest Territories shot and killed a bear. It was an unusual animal, with patchy white and brown fur, the general physique of a polar bear, but the humped back and long claws of a grizzly. The guide on the trip, Roger Kuptana, suspected it was a cross between the two, and Canadian wildlife officials picked up the body for further examination.

Such hybrids are known in captivity -- they are sterile crosses -- but there is no record of one being spotted in the wild before. However, genetic tests on the dead bear showed it was the offspring of a male grizzly and female polar bear. The ranges of the two species overlap in the Northwest Territories, but they have distinct behaviors, in particular having different breeding seasons. The hybrid has been named a "grolar bear", "pizzly", or more elegantly "nanaluk", after the Inuit names for polar bear ("nanuk") and grizzly bear ("aklak").

[ED: Whatever they call it, I'm just glad I didn't run into it. When I was a kid in northern Idaho, I used to run into black bears in the woods. Black bears are timid and inclined to run away from humans, but I always went the other way anyway. I didn't see any valid reason to push my luck with a beast that could kill me without breathing hard if it decided it had a reason to. I've never seen a grizzly in the wild -- they're said to be much more aggressive, though that may be an exaggeration. I wouldn't want to find out.

I've never seen polar bears in the wild either, but I had an encounter some years back with one at the zoo in Portland, Oregon, that I haven't forgotten. The Portland zoo is not all that big, but it is very well laid out and highly regarded for its design. The polar bear enclosure consists of a pool up front with a rocky shore behind; visitors can drop down into a viewing area to watch the bears swim underwater, and then walk up to see them paddle around on the surface. A thick plexiglas wall keeps the bears caged in.

As I walked up from the underwater viewing area, I saw a polar bear swimming in the pool next to the plexiglas, very close to me. I made eye contact with it -- and it immediately shot out of the water at me, slamming hard into the plexiglas, to fall back into the pool. I wasn't particularly startled, the whole incident being over before I could react, but there was a muted realization in the back of my mind that if that plexiglas wall hadn't been there, I would have ended up as table scraps.]

* ONE LAPTOP PER CHILD: Nicholas Negroponte of MIT has made a splash with his "One Laptop Per Child (OLPC)" effort, which aims to provide mass-produced laptops with a cost of less than $100 USD for kids in third-world countries. According to an article from CNET.com ("Slimmer Linux Needed For $100 Laptop" by Steven Shankland), the OLPC group plans to provide five to ten million of the laptops to children in India, China, Brazil, Argentina, Thailand, Egypt, and Nigeria in early 2007, a slip from the original schedule of late 2006. Negroponte believes the laptops will help give these children a big boost in their educations.

As currently defined, the laptop will use a 500 MHz processor from Advanced Micro Devices, with 128 MB of RAM and 512 MB of flash memory as mass storage. It will not have a hard drive. The laptop's display, which is a major part of the cost, will be dual mode, providing an 1110x830 B&W image in sunlight, and a 640x489 color mode otherwise. Negroponte has emphasized to display vendors that the display doesn't have to be perfect -- it can be missing a pixel or two, it doesn't have to be bright, it just has to be cheap and workable.

Negroponte originally insisted on a hand crank on the side of the laptop to provide power, but that stressed the machine's frame, and so the current concept envisions an external foot pedal attached through a power cord. The laptop will draw two watts, one watt just for the display. There will be no networking capability at the outset, but Negroponte sees the OLPC machine as forming a "mesh" wireless network a few years down the road, with $100 USD servers containing small hard disk drives housed at schools to provide data resources.

Although the plan has been to use the open-source Linux operating system (OS), Negroponte has been critical of the way Linux has, as software tends to do over time, suffered from "code bloat". Microsoft's Windows OS has become famously bigger and bigger with every release, and Negroponte states: "Linux has gotten fat, too."

The OLPC association plans to sell $135 USD laptops in 2007, then cut the price to $100 USD by 2008, and to $50 USD by 2010. Microsoft Chairman Bill Gates has criticized the OLPC laptop as inadequate. He has since backtracked on his judgement somewhat, but Negroponte remains annoyed, particularly since Microsoft has been connected with the OLPC effort. Negroponte complains: "It's not about a weak computer. It's about a thin, slim, trim, fast computer ... We are also talking to Microsoft constantly ... So jeez, why criticize me in public?"

* The OLPC effort is interesting in itself, but it also opens the possibility of cheap little PCs everywhere. Kids would take them to school, configured appropriately to help their studies. They could also be configured as other specialized tools.

A number of companies are moving towards cheap little PCs. Negroponte isn't the only one trying to come up with cheap PCs for the developing world: AMD has a partnership with HCL in India to sell a $200 USD PC, Via of Taiwan is working on a laptop driven by car batteries or solar cells in a ruggedized case, and Intel has announced a series of low-cost PCs. It does not seem unreasonable to think that, within a decade or so, we could have $100 PCs with much more CPU power, memory, mass storage, and IO capability than a PC that was seen as perfectly usable in 1990. [ED: Looking back from 2017, the OLPC was a dud. It turned out that cheap tablets were more of the future.]

* TRYING THE TYRANTS (2): Although the "truth and reconciliation" approach to dealing with crimes against humanity has its merits, now the drift is towards international justice that can override national concerns. In 1993, the United Nations' "International Criminal Tribunal For Ex-Yugoslavia (UN ICTY)" was set up in The Hague, the Netherlands, to become the first international war crimes tribunal set up since the aftermath of World War II. The court's jurisdiction was completely under international law, with all the judges from countries other than the former Yugoslavia. It was followed in 1994 by the similar UN tribunal for Rwanda, set up in Arusha, Tanzania, with five more war crimes tribunals being set up since then to deal with atrocities in Sierra Leone, Cambodia, Timor-Leste, Iraq, and Afghanistan. Lebanon is talking with the UN about setting up such a court to investigate the assassination of Lebanese ex-prime minister Rafik Hariri, who was murdered with a huge car bomb in 2005.

The Special Court for Sierra Leone is a "hybrid" court, the first of its kind, financed by voluntary contributions from UN member states and operating under both local and international judges. It is also innovative in being run "in theatre", the country where the crimes were committed.

The crimes committed during the 11-year civil war in Sierra Leone are almost beyond belief. The main rebel group, the "Revolutionary United Front (RUF)", was fond of hacking off both hands or arms of its victims, and burning families in their homes. That was when they were being unimaginative; a detailed list of some of their brutalities reads like the script of a particularly gruesome horror movie. Tens of thousands to hundreds of thousands of people were killed -- there is no solid count -- and six million forced to flee. The conflict went on until the British, Sierra Leone's old colonial master, finally sent in troops. The professionals immediately suppressed the ragtag rebels.

Charles Taylor, the warlord of neighboring Liberia who was forced into exile in Nigeria in 2003, is alleged to have armed the RUF in exchange for diamonds. Nigeria, the US, and Britain had negotiated the deal that deposed Taylor; he had been living in a comfortable seaside villa since that time, but demands that he be extradited to face judgement gradually rose in volume until he was finally sent back home in March 2006. He attempted to flee, but the Nigerians arrested him trying to leave the country. Given that he has supporters in the region who might try to rescue him, the trial was moved to The Hague, with Britain volunteering to provide prison facilities for Taylor if he is found guilty and sentenced to incarceration.

Besides Taylor, nine others indicted by the Special Court are in custody, with three each from the two rebel groups and three from the pro-government "Civil Defense Force (CDF)". However, some big fish have escaped the court, notably the vicious head of the RUF, Foday Sankoh, who died in custody in 2003 before he could be tried. The failure to bring in the major criminals has led critics to charge that the court is a joke. On the other side of the coin, critics have claimed that indicting Chief Samuel Hinga Norman, boss of the CDF, is outrageous, since many Sierra Leonans see him as having been a force working for stability in a land reduced to chaos. Replies the court's chief prosecutor, Desmond de Silva: "You can fight on the same side as the angels and still commit crimes against humanity."

The international nature of the court is seen as a safeguard against miscarriages of justice. In fact, the Sierra Leone court is seen as something of a model, with two foreign judges for every local judge; an aggressive "outreach" (public relations) effort; strong victim-protection programs; a tight schedule, with the work to be completed in five years, as opposed to 17 for the Yugoslav trials; and a fairly modest but effective budget of $30 million USD.

The Rwandan and Yugoslav tribunals are respected, though regarded as slow, costly, and remote. The Cambodian and Iraqi tribunals, which feature mostly or completely local staff, are regarded as bad examples, being neither impartial nor competent. [TO BE CONTINUED]

* INFRASTRUCTURE -- MINING (4): Coal mining is the most important category of mining, the business being roughly as big as all other forms of mining combined. A century or so ago, coal was used everywhere, with an extensive infrastructure set up to deliver it to residential and industrial uses. This infrastructure has long since disappeared, but ironically more coal is now being mined than ever before. The range of users is now much narrower, however: about three-quarters of the coal mined goes to the electric utilities, with the rest going to a few big classes of customers such as the iron and steel industry.

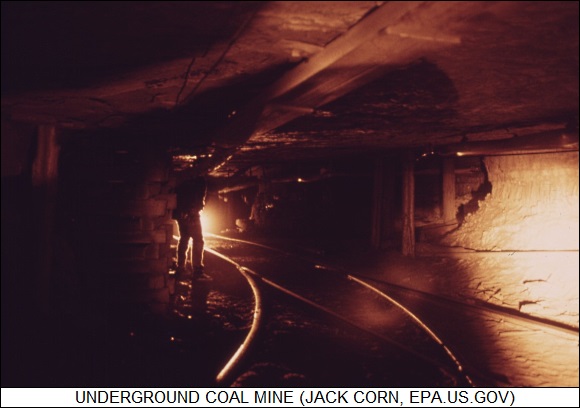

As mentioned in a previous installment, coal is often produced from strip mines, but much is also dug out of underground mines. Coal is soft and, by that measure, fairly easy to dig out of a mine. However, its softness also makes underground coal mines highly vulnerable to cave-ins. Coal also has the disadvantage, as far as underground mines are concerned, of being a source of methane gas and coal dust that can lead to explosions, and of course being flammable in itself. As discussed here last year, sometimes underground coal deposits can catch on fire, burning for decades while smoke oozes out of the ground. The ground will subside after the slow wave of burning passes by, with houses and the like swallowed up into the earth. Putting out such creeping fires is in the range of "difficult" to "impossible".

In any case, underground coal mines have long used a "room and pillar" system. Continuous-mining machines -- squat vehicles with augers or cutting wheels up front and conveyors rolling the coal out the back -- chew through the seam of coal, gouging out the rooms and leaving large "pillars" of coal behind to support the roof. The predominant modern approach in Europe is called "long-wall mining", in which a cutting machine shuttles back and forth along the side of a coal seam, gradually shaving it down. The machine is protected by hydraulic shields as it cuts along, with the roof simply caving in after its passage. Long-wall mining is now catching on in the US.

Traditionally, after the coal was brought to the surface, cars containing the coal were winched up to a "breaker" or "tippling house" and then tipped over ("tippled") into the top of the structure. The tippling house was highly distinctive -- high at the end where the coal was dumped in, then descending by stages to sections with lower and lower roofs. In the first stage, the coal was sorted from shale and other waste rock by "breaker boys", who actually were usually children. The coal then fell down through the sequence of stages, being sorted by grates and screens into pieces of decreasing size, ranging from "lump" down through "stoker"; "nut"; "egg"; and finally fragmentary debris called "slack". The different grades were dumped into railroad cars for transport to appropriate customers.

A modern coal processing facility may still be referred to as a breaker or tippling house, but officially it's called a "coal preparation plant". Sorting by size is no longer particularly important, since end users such as utilities simply want bulk coal -- but the coal still needs to be separated from the waste material, a procedure referred to as "washing". There are several ways of doing this.

One approach is the "cyclone", a funnel-shaped device in which a slurry of raw coal is dumped to spin around, with the heavy slate and waste being pushed to the side and discarded while the lighter coal falls down the center. (Cyclones, incidentally, are common to a wide range of industries; my family long owned a cabinet shop with a cyclone that trapped sawdust picked up by a vacuum cleaner network linked to the saws, planers, and other power woodworking tools -- the dust being unhealthy to inhale.) A "spiral concentrator" works in much the same way, but has the form of a helical water slide.

One of the most interesting ways of washing coal is to dump it into a shallow agitating tank containing a slurry of water and a pulverized iron ore known as "magnetite". The heavy slate and waste sinks, while the mix of coal and magnetite slurry is drawn off. Not too surprisingly given its name, magnetite is magnetic; the mix flows under a magnetized drum that draws off the magnetite, leaving a slurry of coal and water that can then be dried out. Another ingenious scheme is "froth flotation", in which bubbles are forced up through a slurry of raw coal, with the bubbles carrying up the coal while the slate and other waste simply sink to the bottom.
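The magnetite method works because the slurry behaves like a liquid denser than water: its density is set between that of coal and that of the waste rock, so coal floats and rock sinks. Treating the slurry as a simple volume-weighted mix, its density is rho = phi * rho_magnetite + (1 - phi) * rho_water, so the magnetite volume fraction needed for a target density is phi = (rho - rho_water) / (rho_magnetite - rho_water). A worked sketch under assumed round-number densities (water 1.0, magnetite about 5.2, coal about 1.3, shale about 2.5 g/cm³; actual values vary by deposit, and the 1.5 g/cm³ cut point is an arbitrary example):

```python
"""Dense-medium (magnetite slurry) separation arithmetic; all densities are approximate."""

RHO_WATER = 1.0      # g/cm^3
RHO_MAGNETITE = 5.2  # g/cm^3, approximate


def slurry_density(magnetite_fraction: float) -> float:
    """Density of a water/magnetite mix, weighting by volume fraction."""
    return magnetite_fraction * RHO_MAGNETITE + (1 - magnetite_fraction) * RHO_WATER


def fraction_for(target_density: float) -> float:
    """Magnetite volume fraction needed to reach a target slurry density."""
    return (target_density - RHO_WATER) / (RHO_MAGNETITE - RHO_WATER)


def sinks(particle_density: float, medium_density: float) -> bool:
    """A particle denser than the medium sinks; a lighter one floats."""
    return particle_density > medium_density


if __name__ == "__main__":
    target = 1.5  # example cut point between coal and rock
    phi = fraction_for(target)
    print(f"{phi:.3f}")  # 0.119 (about 12% magnetite by volume)
    print(sinks(1.3, target), sinks(2.5, target))  # False True: coal floats, shale sinks
```

Because magnetite is magnetic, the drum described in the text can pull it back out of the coal slurry, which is what makes such a heavy medium practical.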

The slurry of coal produced by all these methods is transported through pipes or troughs to be dried in a big cylindrical centrifuge, which drives out the water in much the same way that a washing machine spins its load of clothes at the end of its cycle. The washing is required not merely to get rid of materials like slate that don't burn, but also materials that could generate pollutants, particularly sulfur compounds, when the coal is used.

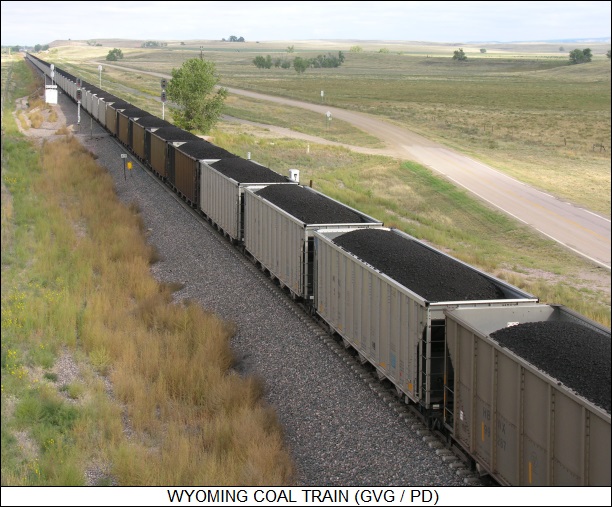

* Strip mining of coal tends to take place mostly in the western US, where the terrain tends to be flat, the overburden thin, and the coal seams thick. In fact, most of the coal mined in the US these days is from the west.

Coal from the western US tends to provide less energy per unit than coal from the east, but western coal has lower sulfur content, meaning that it costs less to control the pollution from burning it. Coal from the big western strip mines is loaded into long trains and carted off to a particular power plant, with the train then coming back over the same dedicated rail route for another load. Such coal trains are a common sight when driving through the state of Wyoming, and their association with the power plants that can be seen here and there on the badlands is obvious. In some cases, the power plants are built right on site with the strip mine, eliminating the need to haul the coal over long distances.

Some surface mining is actually done back east as well, but the approach is a bit different. Coal tends to be found in hills in the Appalachian Mountains, and so one strategy is to simply dig the hill down to its base and use the spoils to fill up a valley. Coal mine operators like to point out that this actually increases the amount of usable land in the area, but not everyone is happy with the process, particularly those who are displaced when their valley is filled up.

As petroleum becomes more expensive, coal may become an even more important energy source. There is an enormous amount of coal still in the ground; processes to convert coal to liquid fuels have been around for a century, and with the rise in petroleum prices, the economics of fuel-from-coal become more attractive. [ED: As of 2021, coal is in steep decline, and fuel-from-coal is a nonstarter.] [TO BE CONTINUED]

* HYPOCHONDRIA INC: As reported in BUSINESS WEEK ("Hey, You Don't Look So Good" by Catherine Arnst, 8 May 2006), the efforts of pharmaceutical companies to market new drugs have created a chorus of critics who claim that in some cases the drugs are solutions to problems that don't really exist. For example, a recent article in the prestigious NEW ENGLAND JOURNAL OF MEDICINE (NEJM) described a syndrome known as "prehypertension", an affliction in which people have blood pressures close to the threshold used to define high blood pressure. Luckily, AstraZeneca Pharmaceuticals sells a drug named Atacand that can deal with it.

The critics call this "disease mongering", asserting that treating the risk of a risk is absurd. One points out that if "you make a cutoff for blood pressure that's close to the normal range, then just about everyone can be diagnosed." AstraZeneca officials reply that the fact that the article was accepted by NEJM gives it credibility.

The critics are not impressed. They point to other examples: estimates of the supposed incidence of erectile dysfunction increased dramatically following the introduction of Pfizer's Viagra. Similarly, the number of sufferers of "social anxiety disorder" -- essentially, apprehension about social interactions -- shot up after GlaxoSmithKline PLC's Paxil was put on the market. Bipolar disorder, a severe mental affliction once estimated to have an incidence of 1 in 1,000, is now believed by some to have an incidence of 1 in 10 -- and of course new mood-stabilizer drugs are available to treat it.

Critics of disease mongering do not place the blame entirely on the pharmaceutical companies, saying that some doctors are inclined to over-prescribe, and that there is a popular attraction to the idea of solving all one's problems just by popping a pill. Some of the critics are also careful to point out that drugs like Viagra and Paxil do some patients a great deal of good, but worry that overtreatment leads pharmaceutical companies down the wrong path: "None of these companies is coming up with a cure for TB."

* As a footnote, SCIENTIFIC AMERICAN commented that the BRITISH MEDICAL JOURNAL published a report in its 1 April 2006 issue, describing how Australian scientists had discovered that people who seemed lazy were actually suffering from "motivational deficiency disorder (MoDeD)". A drug named "Strivor" had been developed to deal with MoDeD, and the article reported that "one young man who could not leave his sofa is now working as an investment adviser." Of course, the date of the issue was the giveaway, though the article was apparently so deadpan that it was cited elsewhere at face value.

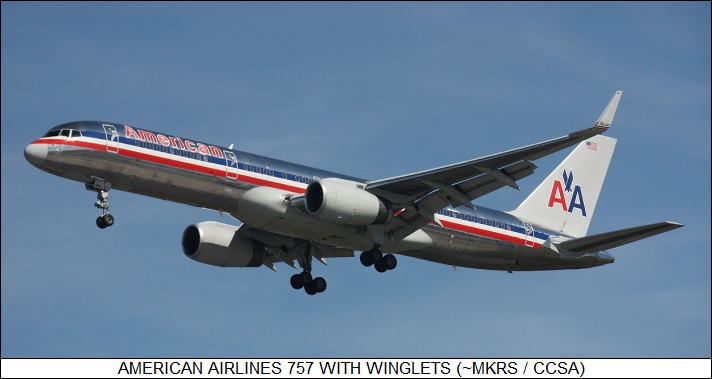

* AA FLIES HIGH: The rising costs of fuel have hit US airlines hard, but as pointed out in a BUSINESS WEEK article ("Making Every Gallon Count" by Dean Foust, 8 May 2006), American Airlines has demonstrated a certain flair in adapting to the new reality. American, which is based out of Fort Worth, Texas, doesn't generally have the latest fuel-efficient jetliners -- half are old McDonnell-Douglas MD-80s, with relatively uneconomical turbofans -- or whizzy technology, but has taken a wide range of simple measures that have had a big positive influence on the company's competitiveness.

American hopes to reduce fuel consumption by 3% compared to 2004. That might seem modest, but on the bottom line it amounts to $220 million USD a year at current fuel prices. One of the first measures was to retrofit "winglets" to all of the company's Boeing 737s and some of its 757s. Winglets are small upturned fins at the wingtips that reduce the "vortex drag" generated there, improving fuel economy. The focus then moved on to eliminating unnecessary aircraft facilities, for example tearing out a redundant galley and replacing it with seats. Analysis also showed that the amount of water carried on flights was excessive, and it was gradually cut by half.
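As a rough back-of-the-envelope check, and assuming the $220 million figure is simply 3% of a year's fuel spending at current prices (the article's exact baseline may differ), the implied annual fuel bill works out as:

```latex
\text{annual fuel bill} \approx \frac{\$220 \times 10^{6}}{0.03} \approx \$7.3 \times 10^{9}
```

In other words, a seemingly small percentage is being applied to a fuel bill in the billions of dollars.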

Another line of effort focused on simply flying more efficiently. New routing software was written that used meteorological data to take as much advantage of tailwinds as possible. New, shorter routes were defined, for example cutting across the Gulf of Mexico instead of hugging the US Gulf coast; the over-water flights required carrying life vests, but the savings in fuel and time were more than worth it.
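The article gives no details of American's routing software, but the basic idea of exploiting tailwinds can be sketched as a toy model. This is a hypothetical illustration, not the airline's method: each candidate route is a list of legs with a length and an along-track wind component, and the route with the shortest total flight time (a crude proxy for fuel burn) wins. The route names and numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class Leg:
    distance_nm: float  # leg length in nautical miles
    wind_kt: float      # along-track wind: positive = tailwind, negative = headwind


def flight_time_hours(legs, true_airspeed_kt):
    """Total time for a route; ground speed per leg is airspeed plus wind component."""
    total = 0.0
    for leg in legs:
        ground_speed = true_airspeed_kt + leg.wind_kt
        if ground_speed <= 0:
            raise ValueError("headwind exceeds airspeed")
        total += leg.distance_nm / ground_speed
    return total


def best_route(routes, true_airspeed_kt):
    """Return the name of the route with the shortest total flight time."""
    return min(routes, key=lambda name: flight_time_hours(routes[name], true_airspeed_kt))


# Hypothetical routes: a shorter path into a headwind versus a longer one with tailwinds.
routes = {
    "direct": [Leg(900, -20)],
    "northern": [Leg(500, 40), Leg(480, 30)],
}

if __name__ == "__main__":
    print(best_route(routes, 450))
```

With a 450-knot airspeed, the longer "northern" route (980 nm) beats the shorter "direct" route (900 nm) because its tailwinds raise the ground speed. Real flight planning also weighs altitude, aircraft weight, and airspace restrictions, which this sketch ignores.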

American is profitable, but not entirely healthy, with a big pension-fund burden and aging jetliners, none of which the company currently plans to replace before 2013. However, American seems willing and able to confront the challenges, with involvement from top to bottom. Says one American pilot who is involved in the flight-efficiency effort: "The reward for me is the survival of this airline."

* Another interesting consequence of rising fuel prices for the airline industry is a resurgence in sales of fuel-efficient turboprops, which for a time were being eclipsed by jets. There is considerable interest in fitting turboprop airliners and air freighters with new high-efficiency composite props with eight or so curved blades -- clearly not your father's propellers, even at first glance.

The irony is that these next-generation props are more or less an outgrowth of 1980s technology: advanced turboprops known as "propfans", which were developed in response to the first energy crunch of the 1970s. The propfans never went anywhere, since conventional turbofan technology, partly drawing on data acquired in propfan development, became efficient enough to render the propfan irrelevant once fuel prices dropped again. Only now is the prop technology developed for the propfans starting to become widespread.

* MAD BAD & CRAZY: The 29 April 2006 issue of THE ECONOMIST had an obituary for Shin Sang-Ok, who died on 11 April 2006 at age 79. Shin was a South Korean movie-maker who had made a series of highly-regarded films in the 1950s and 1960s, which generally starred his beautiful wife, Choi Eun-Hee. His luck took a turn for the worse in the 1970s, with a series of box-office flops, a divorce, and the then-authoritarian government of South Korea shutting down his movie studio when he became too outspoken against censorship.

That was only the beginning of his problems: his life was about to descend into something like a mad black fantasy. It began when his ex-wife disappeared during a trip to Hong Kong. Shin went to Hong Kong to find out what had happened to her -- and was kidnapped by North Korean agents, to be presented to Kim Jong-Il, the heir to the Communist throne of Kim Il-Sung's North Korea. The younger Kim, it seems, was a fan of Shin's movies, and had decided that Shin should be given the "opportunity" to start making movies in the People's Paradise.

Shin's leash was loose until he tried to make a run for it. He was captured and thrown into a prison camp, where he went on a hunger strike. The guards force-fed him; one told him that he was the only prisoner the authorities there had ever bothered to save, a sign of his importance. After four years, and a series of groveling letters to Kim Il-Sung and Kim Jong-Il, he was finally released, to be reunited with his ex-wife, whose disappearance in Hong Kong had also been the work of North Korean agents. Like any good fan, Kim had not been happy with Shin and Choi's divorce, so he told them to remarry. They did so. They were also ordered to appear at a press conference and state that they were in North Korea of their own free will. They did so.

Kim Jong-Il showered Shin with luxuries and gave him, by North Korean standards, a considerable amount of artistic license. Kim, it seems, wanted Shin to make world-class movies for North Korea, not just propaganda potboilers. Although Shin and Choi had a remarkable level of comfort and affluence compared to ordinary North Korean citizens, a gilded cage was still a cage, and when they were on a promotional tour in Vienna in 1986, they went to the US embassy there and asked for asylum. No doubt Kim had thought that their public proclamation of loyalty to North Korea had burned their bridges back to South Korea, but they had, at terrifying risk, taped conversations with him that clearly demonstrated coercion was involved.

Shin eventually returned to South Korea and kept making movies right up to his death. Choi survives him. The tale of their misadventures in North Korea is full of lunatic black humor, but it also has a lesson of sorts. Analyses of international politics often attempt to determine the logic behind the actions of foreign states; but how seriously can such musings be taken when the people in charge seem to be perfectly mad, bad, crazy, and dangerous to know?

* UNMAPPED: While China is not in the same league of crazy as North Korea, THE ECONOMIST reports ("No Direction Home", 22 April 2006) that Chinese authorities have their own eccentricities, specifically in their determination to make sure that dangerous materials don't fall into the hands of the citizenry.

In this case, the dangerous materials are road maps. Chinese are buying more and more cars, and road building is proceeding rapidly as well. Given this fast-changing situation, the state's reluctance to release maps to the public means that people get lost far too often. Detailed topographical maps of the sort used by hikers in the West are flatly classified information. Cartographers employed by the central government were outraged some time back when a few local governments put topographical maps on their websites, claiming that releasing the maps was a threat to national security. Officials were far more shocked when they discovered that Chinese could access online satellite imagery databases, like Google Earth, and get pix of the buildings in the walled-off headquarters of the Chinese Communist Party, along with the exact geographical location of the site. The site does not even appear on Chinese maps.

In an increasingly capitalist China, map-making startups have of course arisen to fill the need. Not surprisingly, they have to get state approval for what they put on their maps. When asked what was forbidden, an official at one of the companies replied, apparently not with a straight face: "Even what is secret is secret."

* UNAUTHORIZED: In related news ... there are tens of thousands of characters in the Chinese writing system, but a good half of them or more are little used. In fact, many of the little-used characters are seen only in given names, which usually consist of one or two Chinese characters. According to a short article in THE ECONOMIST ("Farewell The Red Soldiers", 15 April 2006), parents have taken to making more use of unusual characters in their children's names to make them more distinctive.

The law has had a problem with this, since some of these characters can't be entered into a computer. Given the enormous size of the character set, no keyboard could cover them all, so Chinese PCs use a Roman keyboard: the user types the sound of a Chinese character in Roman letters (the pinyin romanization), then cycles through a list of characters with that sound -- there may be several -- to select the one desired. Some of the more obscure characters aren't in the character set supported by the PC, and end up displayed as zeroes or asterisks.
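The candidate-list input scheme described above can be sketched in a few lines of Python. This is a toy under stated assumptions: the lookup table `CANDIDATES` is hypothetical and tiny, the function names are invented for illustration, and the older GB2312 national character set (6,763 characters) stands in for whatever character set a given PC supports. Characters that set cannot encode are replaced with an asterisk, mimicking the problem the police ran into.

```python
# HYPOTHETICAL, tiny pinyin lookup table for illustration only;
# real input methods use dictionaries with many thousands of entries.
CANDIDATES = {
    "ma": ["妈", "麻", "马", "骂"],  # one syllable, different tones and meanings
    "shi": ["是", "十", "时", "事"],
}


def candidates(pinyin):
    """Return the characters that share this romanized sound (empty if unknown)."""
    return CANDIDATES.get(pinyin, [])


def choose(pinyin, index):
    """Simulate the user cycling through the candidate list and picking entry `index`."""
    options = candidates(pinyin)
    if not 0 <= index < len(options):
        raise IndexError(f"no candidate {index} for {pinyin!r}")
    return options[index]


def render_for_legacy_system(text, codec="gb2312", placeholder="*"):
    """Replace each character the target character set cannot encode with a placeholder."""
    out = []
    for ch in text:
        try:
            ch.encode(codec)
            out.append(ch)
        except UnicodeEncodeError:
            out.append(placeholder)
    return "".join(out)


if __name__ == "__main__":
    name = choose("ma", 2) + "\U00020000"  # a common character plus a rare one
    print(render_for_legacy_system(name))
```

Choosing entry 2 for "ma" returns 马 ("horse"), while a rare character outside GB2312, such as U+20000 from the CJK Extension B block, comes back as "*".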

This annoys the police. In 2004, things got worse when the state introduced microchip-based "smart card" ID cards, which also had problems with obscure characters. The police have come up with a solution: a senior police official has ordered that such characters will not be used. Public reaction has not been entirely positive, some newspaper editors having the audacity to suggest that the police exist to serve the public, not the other way around.

[ED: I have some familiarity with the Chinese character set through my tinkerings with Japanese. The Chinese-based characters of Japanese, the kanji, are in general exactly the same as the original Chinese characters, though there are a (very) few kanji that are unique to Japanese.

The formal base set of kanji for essential literacy as established in the Japanese educational curriculum runs to 1,945 characters. The total number of characters runs to over 20,000, it is said, but it is hard to believe that even half that number are in any general use. I have a dictionary that uses 8,000 characters, and it's both thick and terse -- few English-speakers would know all the words in an English dictionary of comparable size. As in Chinese, many of the obscure characters are used in names, and in the "hanko" hand stamps used to perform legal signatures -- a pot of hanko plays a role in Juzo Itami's excellent semi-comic movie A TAXING WOMAN.

Also as in Chinese, to type Japanese text into a computer, a user types in the sound of the kanji character and then cycles through a list of characters with the same sound to select the one desired. However, the sounds are converted into kana, the Japanese phonetic script, before the kanji are selected; users either type kana directly or, more commonly, type romanized syllables that the input software turns into kana as they go.

When I see kanji characters on tattoos and the like, I am now compelled to look them up and figure them out -- learning how to navigate a kanji dictionary isn't trivial, by the way. After reading this little ECONOMIST article, I just had to wonder what the characters were for "control freak".]

* TRYING THE TYRANTS (1): As reported by an article in THE ECONOMIST ("Bringing The Wicked To The Dock", 11 March 2006), any brief inspection of the daily news suggests that not all is right with the world, and some of what is wrong is deliberately created by leaders with malign agendas. Modern communications bring every atrocity into our households; not surprisingly, an international consensus of sorts has arisen that such criminals should be brought to judgement.

Brutal dictators like Josef Stalin, Mao Zedong, Pol Pot, and Idi Amin died natural deaths, but now those guilty of "crimes against humanity" are under increasing pressure. Serbian strongman Slobodan Milosevic did die a natural death, but he died in UN detention in The Hague before his war-crimes trial could reach a verdict, and the hunt for Bosnian Serb General Ratko Mladic, allegedly responsible for the massacre of Bosnian Muslims at Srebrenica, is intensifying. Chilean General Augusto Pinochet, whose rightist government liked to make opponents disappear, is finding the amnesty he engineered for himself eroding, and Hissene Habre, once the brutal president of Chad but now living in exile in Senegal, is facing extradition. Polish prosecutors are considering charges against Wojciech Jaruzelski, the country's last Communist leader. Iraq's Saddam Hussein is thrashing about in court, confronted with the near-certainty of his conviction and execution.