* 22 entries including: mining and waterworks infrastructure, another road trip, alternative fuels compared, Orbital Express space mission, holographic solar concentrators, combat armor, changes in US War on Terror policies, new digital camera features, gas prices, Chornobyl disaster at 20, automotive thermophotovoltaic systems, saving power in NYC, medical cost-effectiveness, death of Abu Musab al-Zarqawi in Iraq, self-powered sensors, smart sensors, synthetic diamonds, changes in Saudi society, voice-activated laptops for recuperating GIs.

* INFRASTRUCTURE -- WATERWORKS (2): A levee is much like a dam, being designed to hold back water, the difference being that a levee is intended to keep a river from overflowing its banks while a dam is intended to halt the river's flow. Levees are somewhat trickier to build than dams, however, since a dam can be sited at a carefully chosen, favorable location, while levees have to be built on the banks of a river, meaning that the levee's foundations generally rest on soft sediment deposits. Placing the levee is also tricky: if it's too close to the normal riverbanks, a flood will overwhelm it too easily, but if it's too far away, that means giving up otherwise useful land.

Traditionally, levees are simply big, wide embankments or "berms", with the material obtained by digging out "borrow pits" on the river side of the levee. The river tends to silt up the borrow pits in time. The earth berms are generally made of soils as sandy as possible; such soils tend to be more porous than ordinary soil, but wet sand tends to hold its shape better than mud -- think sand castles. The berms are usually planted with grass to prevent erosion. Tree seedlings that crop up are pulled out, however, since their roots will undermine a levee, and creatures such as rodents and rabbits that burrow into a levee are trapped for the same reason.

* Levees keep a river from flowing into the surrounding countryside, but of course they necessarily block streams from flowing back into the river. A small stream may be accommodated by a culvert pipe through the levee, with a gate or check valve closed to prevent a flooding river from flowing back through the culvert. Pumps may also be used to carry water from a small stream over the levee into a river.

Such approaches are impractical for larger tributaries, and so levees have to be extended well back up the tributaries as well. This has the effect of dividing the land along a major river into segments bounded by the tributaries and their levees: if the levee in one segment gives way, the flooding is restricted to that segment. These segments are often formally set up as administrative districts, supported by semi-public bodies known as "drainage districts" or "levee commissions" that maintain the system, with "ditch wardens" and "drainage commissioners" standing in local elections.

Incidentally, the breach of a levee in one segment of course relieves the pressure of the river on the other segments, a fact that has apparently led on occasion to acts of sabotage against neighbors, the principle being: Better you than me. Oddly, a levee that holds while the river crests may well break well after the crest has passed, just when the citizenry is beginning to think the crisis is over. There is always some seepage through a levee, but as the river rises it plasters silt against the levee, helping seal and reinforce it. As the river falls, it draws off the silt, and water seeping back out towards the river undermines the levee, leading to its collapse.

* Big earthen levees aren't practical where a river runs through a city, and so high concrete "floodwalls" are built instead. As with earthen levees, floodwalls cut off tributaries from the river, usually demanding an extensive system of pumping stations. Cables and pipes also have to cross the floodwall, which normally isn't much of a problem, since they can be safely routed through or under it, but ground traffic has to cross it as well. Huge doors are built to allow cars and trains to pass through the floodwall; they are normally left open and only closed in emergencies.

* Levees, like dams, can be politically controversial, if not for exactly the same reasons. Once levees are in place, people will feel free to build on areas traditionally seen as floodplains, with disastrous results if a levee breaks. Critics have suggested that more rational land-use policies would leave the floodplains unsettled to avoid such misfortunes. After the ghastly flooding in New Orleans in the wake of Hurricane Katrina in August 2005, there was considerable public comment on the wisdom, or lack thereof, of trying to protect districts of the city that were actually lower than sea level. The discussion was heated and highly politicized, there being a strong reluctance to give up significant parts of the city even though it could be argued that they cost more to protect than they were actually worth in dollars and cents.

Politics raises its head in other ways. Cities that build floodwalls may pressure other cities along the watercourse to build them as well, the protection provided by floodwalls in one town being negated if the town just upstream doesn't have them as well. The US Federal government has occasionally pressured towns that haven't built floodwalls to do so.

* The most spectacular example of levees is the system of "dikes" that the Netherlands uses to keep out the North Sea. The nation sits on the floodplain of the Rhine, meaning the country is flat and prone to flooding. The Dutch have been building dikes for a long time, since at least 1200 AD, both to prevent flooding and to create "polders" -- land that would otherwise be underwater but has been reclaimed for habitation, industry, and agriculture. The classic Dutch windmills were mostly used for water-pumping, not grinding grain, and many are still in use for that purpose.

The history of the modern Netherlands involves a running sequence of "conquests" of land from the sea. Modern Schiphol Airport lies on a polder reclaimed in the 1850s. The biggest single exercise in dike-building was the enclosure of the Zuider Zee, begun in 1918 and completed in 1932. The sea is blocked out of the area by a dike 24 kilometers (15 miles) long and 90 meters (300 feet) wide, made of clay and sand, with a four-lane highway on top plus space for a rail line. Final closure was apparently a frantic exercise, a race between the rate at which material could be dumped and the rate at which the tide could carry it away. Once the sea was shut out, fresh water accumulated in a lake, the IJsselmeer, with five tracts diked off in turn to create polders with a total area of 2,200 square kilometers (850 square miles). Polders start their lives below sea level, meaning they have to be pumped continuously, and as the drained soil dries out and compacts, their surface sinks even further. Such settling was a problem for New Orleans as well.

Although the Dutch exercise in dike-building is the most prominent, it is not unique. There is a large area of polders where the Sacramento and San Joaquin Rivers empty into San Francisco Bay, with the Californians having to deal with much the same difficulties as the Dutch. They also have to deal with an additional problem: earthquakes. The reclaimed soil tends to act something like jello during an earthquake, which doesn't do the structures built on it much good. [TO BE CONTINUED]

* ORBITAL EXPRESS ON THE PAD: According to an article in AVIATION WEEK ("Express Service" by Michael A. Dornheim, 5 June 2006), this fall the US Defense Advanced Research Projects Agency (DARPA) will put a satellite system named "Orbital Express" into orbit as a step towards the development of space robots to maintain, refuel, and repair satellites.

There have been previous steps towards space service robots. In 1997-1998, the Japanese "Engineering Test Satellite 7 (ETS-7)" performed a rendezvous with a cooperative target satellite and changed components on it using a remote manipulator arm. The US Air Force Research Laboratory (AFRL) flew the "XSS-10" smallsat in 2003, which autonomously maneuvered around its own Delta booster upper stage and relayed images back to Earth. XSS-10 was followed in 2005 by "XSS-11", which tested rendezvous systems technologies, using the upper stage of its Minotaur booster as a target. In the same year, the US National Aeronautics & Space Administration (NASA) flew a mission designated the "Demonstration of Autonomous Rendezvous Technology (DART)" to prove rendezvous technologies, but that experiment was a failure.

In 2002, DARPA, which conducts "future technology" studies for the military, awarded a contract to Boeing for the Orbital Express, which was intended as an experimental prototype for future operational space service robots. Orbital Express was defined as a complementary set of two spacecraft. Boeing built the servicing spacecraft, named the "Autonomous Space Transfer & Robotic Orbiter (ASTRO)", while a subcontract was issued to Ball Aerospace for a target spacecraft named "NextSat".

As it has emerged, ASTRO looks like an octagonal hatbox with two solar arrays sticking off to opposite sides of the base, and a remote manipulator arm. The on-orbit weight will be 1,090 kilograms (2,400 pounds); the octagonal bus will be 1.75 meters (69 inches) across and 1.78 meters (70 inches) long, with the solar arrays spanning 5.59 meters (220 inches). The solar arrays will provide 1,560 watts at the outset. The remote manipulator arm is built by MacDonald Dettwiler of Canada. The satellite also includes rendezvous targeting and navigation systems, plus a capture fixture. NextSat is much smaller than ASTRO, with an on-orbit mass of about 250 kilograms (550 pounds). It also has an octagonal bus, but only one solar array.

ASTRO carries two sets of sensors to keep an eye on NextSat for the rendezvous process, with the sensor inputs balanced by ASTRO's "guidance, navigation, & control (GN&C)" system as the two spacecraft draw together. One sensor set is the Boeing-built "autonomous rendezvous & capture sensor system (ARCSS)", which includes:

The inputs from ARCSS are assimilated by a program named "Vision-based Software for Track, Attitude, and Ranging (Vis-STAR)" that can perform pattern recognition of NextSat at short range, assisted by a visual target carried by NextSat.

The other sensor system is the "Advanced Video Guidance Sensor (AVGS)", built by NASA's Marshall Space Flight Center -- NASA is a minority partner in Orbital Express. AVGS was used on the ill-fated DART but had nothing to do with the failure of that mission. It uses infrared cameras to zero in on twin infrared-illuminated targets fixed to NextSat. The GN&C system uses ARCSS for long-range tracking and AVGS for the final rendezvous.
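The split between ARCSS at long range and AVGS for the final approach amounts to a sensor handoff. The Python sketch below illustrates the idea only; it is not the actual Orbital Express GN&C software, and the class name, the 100-meter handoff range, and the 20-meter hysteresis band are hypothetical, since the article gives no figures. The hysteresis keeps a jittery range estimate from flipping the selection back and forth near the handoff point.

```python
class SensorSelector:
    """Hypothetical handoff logic: ARCSS at long range, AVGS close in.

    The hysteresis band keeps the choice from flipping back and forth
    when the range estimate jitters around the handoff point.
    """

    def __init__(self, handoff_m: float = 100.0, hysteresis_m: float = 20.0):
        if handoff_m <= 0 or hysteresis_m < 0:
            raise ValueError("handoff must be positive, hysteresis non-negative")
        self.handoff_m = handoff_m        # assumed value, not from the article
        self.hysteresis_m = hysteresis_m  # assumed value, not from the article
        self.active = "ARCSS"             # start in long-range mode

    def update(self, range_m: float) -> str:
        """Feed one range estimate in meters; return the active sensor."""
        if self.active == "ARCSS" and range_m <= self.handoff_m:
            self.active = "AVGS"
        elif self.active == "AVGS" and range_m > self.handoff_m + self.hysteresis_m:
            self.active = "ARCSS"
        return self.active


if __name__ == "__main__":
    selector = SensorSelector()
    for r in [1000.0, 150.0, 100.0, 110.0, 90.0, 130.0]:
        print(f"{r:7.1f} m -> {selector.update(r)}")
```

In the sample run, the jitter out to 110 meters leaves AVGS in charge; only backing out past 120 meters returns control to ARCSS.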

ASTRO has 16 hydrazine thrusters for maneuvering and uses a set of reaction wheels for attitude control. NextSat does not have any propulsion, maintaining attitude using reaction wheels, though it does have a hydrazine tank for fuel transfer experiments. NextSat has a grapple fixture for ASTRO's robot arm, and a capture socket to accept ASTRO's three-pronged capture fixture. Both satellites have ground communications links as well as a crosslink to each other, allowing ASTRO to obtain status from NextSat. However, the crosslink is not actually part of the rendezvous system.

The two spacecraft will be launched mated together on an Atlas 5 booster into a 492 kilometer (306 mile) orbit at an inclination of 46 degrees, for three months of tests. The trials will include fuel transfer, transfer of components from ASTRO to NextSat, removal and replacement of NextSat components, and of course maneuvering and rendezvous tests. Overall cost of the mission is a bit more than a quarter of a billion USD, spread over six years.

* DARPA does not actually develop operational systems. If a DARPA demonstration works out well, the agency can usually find a military service to sponsor follow-on development. The logical owner for an operational system is the Air Force Space Command. However, one DARPA participant in Orbital Express suggests that there may be some lingering skepticism about the space service robot concept, noting that back in the 1990s there were comments that "zero-gravity space refueling is impossible" -- despite the fact that Soviet Progress freighter-tanker spacecraft had been doing it since the late 1970s.

There are also (more valid) concerns about the economic practicality of the idea: one of the rationales behind the NASA space shuttle was that it would be able to repair satellites in orbit, but though the shuttle did repair a number of spacecraft, it generally turned out to be cheaper to simply replace them. The cost-effectiveness of a space service robot will depend on the number of spacecraft a single robot can tend, and even a slight probability of badly damaging a target spacecraft would seriously degrade the bottom line. Of course, all target spacecraft will have to be designed for servicing, which will bump up their cost.
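The sensitivity to damage risk can be made concrete with a deliberately crude expected-value model. Everything here is an assumption for illustration: the function names, the per-target accounting, and the figures (in millions of USD) are hypothetical, not taken from DARPA or the article.

```python
import math
from typing import Optional


def per_target_margin(service_value: float, damage_prob: float,
                      target_value: float, design_premium: float) -> float:
    """Expected net gain from servicing one target spacecraft (toy model).

    A successful service (probability 1 - p) saves `service_value`;
    a botched one (probability p) writes off `target_value`; every target
    also carries `design_premium` for being built to be serviced.
    """
    return ((1.0 - damage_prob) * service_value
            - damage_prob * target_value
            - design_premium)


def break_even_targets(robot_cost: float, service_value: float, damage_prob: float,
                       target_value: float, design_premium: float) -> Optional[int]:
    """Smallest number of targets that pays for the robot, or None if none does."""
    margin = per_target_margin(service_value, damage_prob, target_value, design_premium)
    if margin <= 0:
        return None  # each service loses money on average
    return math.ceil(robot_cost / margin)


if __name__ == "__main__":
    # Hypothetical figures in millions of USD.
    for p in (0.0, 0.05, 0.10):
        n = break_even_targets(robot_cost=300, service_value=50, damage_prob=p,
                               target_value=500, design_premium=5)
        if n is None:
            print(f"damage risk {p:.0%}: never breaks even")
        else:
            print(f"damage risk {p:.0%}: break-even after {n} targets")
```

With these made-up figures, the robot pays for itself after 7 targets if it never damages anything, needs 18 targets at a 5% damage risk, and never breaks even at 10%, since a lost spacecraft costs ten times what a service saves.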

DARPA officials admit that a careful cost-benefit analysis of space service robots hasn't been performed yet, since potential end users who are in a position to make such an analysis relative to their operational needs are waiting to see how well Orbital Express works before digging into the numbers.

* HOLOGRAPHIC CONCENTRATORS: According to an article from TECHNOLOGYREVIEW.com ("Holographic Solar" by Prachi Patel-Predd), a company named Prism Solar has come up with a new approach for building "concentrators" to focus sunlight on solar cells, using holograms instead of lenses or mirrors.

There's a trade-off between cost and solar power conversion efficiency with solar cells. Cheap solar cells have low efficiencies, with the price rising sharply as efficiency improves. Carpeting a solar power panel with highly efficient solar cells is expensive. One way around this problem is to use fewer solar cells and incorporate concentrators to gather up the sunlight. Traditional concentrators use lenses and mirrors. They are efficient, but they require a mechanism to allow them to track the Sun, and they can heat up solar cells so much that an active cooling system is required.
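The cost trade-off can be sketched as a cost-per-watt calculation. This is a rough model under stated assumptions: 1,000 watts per square meter of peak sunlight (the usual rating condition), hypothetical cell and optic prices, and a guessed optical efficiency. It ignores tracking hardware, cooling, and the efficiency loss of hot cells.

```python
def cost_per_watt(aperture_m2: float, concentration: float,
                  cell_cost_per_m2: float, optic_cost_per_m2: float,
                  cell_efficiency: float, optical_efficiency: float = 1.0,
                  irradiance_w_m2: float = 1000.0) -> float:
    """Rough panel cost (USD) per peak watt, with or without a concentrator.

    The optic gathers sunlight over `aperture_m2` and focuses it onto a cell
    area `concentration` times smaller. Cell cost therefore falls as the
    concentration rises, while the optic adds its own cost and loses some light.
    All prices are hypothetical inputs, not Prism Solar figures.
    """
    if concentration < 1.0:
        raise ValueError("concentration must be at least 1")
    cell_area_m2 = aperture_m2 / concentration
    cost = cell_area_m2 * cell_cost_per_m2 + aperture_m2 * optic_cost_per_m2
    watts = aperture_m2 * irradiance_w_m2 * optical_efficiency * cell_efficiency
    return cost / watts


if __name__ == "__main__":
    # Flat panel: cells cover the whole square meter, no optic.
    print(f"flat panel:       ${cost_per_watt(1.0, 1.0, 1000.0, 0.0, 0.20):.2f}/W")
    # 10x concentrator: a tenth of the cell area plus a cheap optic
    # that is assumed to pass 80% of the light.
    print(f"10x concentrator: ${cost_per_watt(1.0, 10.0, 1000.0, 50.0, 0.20, optical_efficiency=0.8):.2f}/W")
```

With these invented prices, a 10x concentrator cuts the cost per watt from $5.00 to about $0.94, because it replaces 90% of the expensive cell area with cheap optics.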

A hologram is basically a film photograph that was exposed with a laser beam to fix an image of an object as an interference pattern in the film. One of the nice features of a hologram is that a continuous range of images can be fixed in the film just by varying the laser beam angle. The Prism Solar concentrator takes light falling on it over a range of angles and focuses it on a single spot.

A hologram can only concentrate sunlight by a factor of ten or so, while a lens or mirror system can achieve concentrations of a hundred or more. However, the hologram doesn't require a Sun-tracking system. In addition, the hologram can be designed to bend high frequencies of light, useful for generating electricity, and not bend low frequencies of light and the infrared, reducing heat load. Thanks to the relatively modest light concentration and selection against low frequencies, an active cooling system is not required. A solar panel incorporating holographic concentrators promises to be ideal for home installation.
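The reason the low-frequency end of the spectrum is not worth concentrating comes down to photon energy. A photon can only free an electron in a solar cell if its energy exceeds the semiconductor's bandgap; weaker photons cannot be converted and mostly end up as heat. The sketch assumes silicon cells with a bandgap of about 1.12 electron-volts (the article does not say what cells Prism Solar uses) and uses the relation E = hc / wavelength.

```python
HC_EV_NM = 1239.84         # Planck constant times speed of light, in eV*nm
SILICON_BANDGAP_EV = 1.12  # assumed cell material: crystalline silicon, room temperature


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of one photon, in electron-volts, from E = hc / wavelength."""
    return HC_EV_NM / wavelength_nm


def can_generate_carriers(wavelength_nm: float,
                          bandgap_ev: float = SILICON_BANDGAP_EV) -> bool:
    """True if a photon carries enough energy to free an electron across the bandgap."""
    return photon_energy_ev(wavelength_nm) >= bandgap_ev


def cutoff_wavelength_nm(bandgap_ev: float = SILICON_BANDGAP_EV) -> float:
    """Longest wavelength the cell can convert; longer waves cannot make current."""
    return HC_EV_NM / bandgap_ev


if __name__ == "__main__":
    print(f"cutoff: {cutoff_wavelength_nm():.0f} nm")
    for nm in (450.0, 650.0, 1000.0, 1500.0, 3000.0):
        verdict = "usable" if can_generate_carriers(nm) else "heat only"
        print(f"{nm:6.0f} nm  {photon_energy_ev(nm):.2f} eV  {verdict}")
```

Visible light, at wavelengths of roughly 400 to 700 nanometers, comfortably clears the silicon bandgap, as does near-infrared light out to about 1,100 nanometers; longer infrared wavelengths do not, which is why steering them away from the cells costs little power while reducing the heat load.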

Prism Solar's current device is just a demonstrator. The company is working towards solar panels consisting of solar cells sandwiched between two layers of glass containing holograms. Their price target is $1.50 USD per watt, which should bring solar power closer to parity with conventional power sources.

* ARMOR RACE: US soldiers arriving "in theater" in Iraq are given a quick orientation before being sent out into the field, with one element being a video captured from Iraqi insurgents that shows a Hummer light truck rolling down the road -- to be stopped dead by the blast of a mine. It gets the troops' attention. According to an article in SCIENTIFIC AMERICAN ("Enhanced Armor" by Steven Ashley), such attacks have also got the attention of weapon systems designers. One aspect of this is the development of improved armor.

The goal of armor design is to provide the maximum amount of protection for the minimum amount of weight. New "ultrahigh hardness (UHH)" steels are available that are 20% harder than the hardest conventional high-carbon steels, but UHH steels tend to be brittle and crack after being hit. A company named Armor Holdings in Jacksonville, Florida has come up with an armor steel designated "UH56" that provides a reasonable compromise between hardness and resistance to cracking. It is now being used in US military vehicles in Iraq.

Windows in combat vehicles have traditionally been made of thick layers of armor glass, and the usual reaction to enhanced threats is to add more layers. Of course, this adds more weight, making vehicles top-heavy and gas hogs. The US military has worked with industry to come up with a technically superior solution in the form of transparent "aluminum oxynitride (ALON)", a sapphire-like material that provides the same protection as armor glass with only half the thickness and weight.

ALON is actually nothing all that new, its use having been curtailed by its expense and the difficulty in making large plates of the stuff. New manufacturing processes promise to increase the size of ALON plates and reduce their cost, though it still remains about three to five times as expensive as armor glass.

Improvements are also being sought in body armor. Lightweight body armor vests use pads of woven Kevlar and other polymers, but the military prefers to use armor containing sets of ceramic plates due to its greater effectiveness. The drawback to ceramic-based body armor, of course, is the greater weight. One solution being developed is "liquid armor", which is Kevlar-type armor impregnated with a "shear-thickening fluid". The liquid consists of nanoparticles, typically silica or fine-grained sand, mixed into a nonvolatile liquid such as polyethylene glycol. When the armor is hit by a projectile, the liquid stiffens on impact, not only blunting the hit but dispersing the shock over a wider area. The liquid adds about 20% to the weight of the armor. The technology remains experimental for the moment.
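Shear-thickening behavior is often approximated with the power-law (Ostwald-de Waele) model, in which apparent viscosity scales as K times the shear rate raised to the power n - 1, with n greater than 1 for a thickening fluid. The sketch below uses that textbook model with hypothetical parameters; it is not a description of any particular liquid-armor formulation, and real shear-thickening fluids typically stiffen much more abruptly once a critical shear rate is passed.

```python
def apparent_viscosity(shear_rate_per_s: float, k: float, n: float) -> float:
    """Power-law (Ostwald-de Waele) apparent viscosity: eta = K * rate**(n - 1).

    n > 1 models a shear-thickening fluid, n == 1 a Newtonian fluid,
    and n < 1 a shear-thinning one. K is the consistency in Pa*s**n.
    """
    if shear_rate_per_s <= 0:
        raise ValueError("shear rate must be positive")
    return k * shear_rate_per_s ** (n - 1.0)


if __name__ == "__main__":
    # Hypothetical parameters, not a real armor formulation.
    K, N_THICKENING = 1.0, 1.5
    for rate in (1.0, 100.0, 10_000.0):
        thick = apparent_viscosity(rate, K, N_THICKENING)
        newtonian = apparent_viscosity(rate, K, 1.0)
        print(f"{rate:>8.0f} 1/s: thickening {thick:8.1f} Pa*s, Newtonian {newtonian:.1f} Pa*s")
```

With n = 1.5, a slow push at 1 per second sees a viscosity of 1 Pa*s, while an impact shearing the fluid at 10,000 per second sees 100 Pa*s, a hundredfold stiffening; the Newtonian fluid (n = 1) stays the same throughout.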

* GLOVES BACK ON: After the 9/11 terrorist attacks on the USA, the Bush II Administration took the attitude that an extraordinary threat required extraordinary measures. As Vice President Dick Cheney put it: "We will take off the gloves."

There was a certain logic to and justification for this attitude, but the results have not been inspiring: ugly headlines about mistreatment of prisoners, tales of secret CIA flights around Europe, and strained relations with long-time allies -- with the strain magnified by the administration's stated belief that America would unilaterally take whatever actions it deemed to be in the national interest, regardless of criticism by outsiders. It made many Americans on both the Right and Left uncomfortable to see the government compromise on principles regarded as being at the core of what America stood for, and it certainly did much to inspire greater hostility towards the USA around the world.

According to an article in THE ECONOMIST ("Straightening The Record", 13 May 2006), the Bush II Administration has been gradually acknowledging, sometimes implicitly and sometimes explicitly, that the brute-force approach hasn't worked very well and that a more sophisticated, less unilateral mindset is required. The abuses of prisoners are being reined in, and even the president has acknowledged in principle that the detention of terror suspects at Guantanamo Bay will eventually be ended -- though he has not specified when.

At hearings in Geneva in May on US compliance with the UN Convention Against Torture, the US State Department sent a delegation of 25 officials under the department's head legal counsel, John Bellinger, to present the American case. Bellinger took a good deal of heat at the hearings, but was direct and even conciliatory in his responses. He admitted that "shocking" errors had been made, but called them "isolated incidents" and insisted that effective measures had been taken to correct the problems. He said that the US is taking a hard line against torture; when asked about "waterboarding" -- simulating drowning by pouring water over a cloth covering a prisoner's face -- he stated that it had never been authorized, and would be specifically banned in the revised Army field manual on interrogations.

Bellinger went on to detail the issue, saying that out of the literally hundreds of thousands of American servicepeople rotated in and out of Iraq, about 800 have been investigated for abuses. Most were exonerated, but action has been taken against 250. 89 have been convicted by court-martial, with 19 sentenced to a year or more in prison; 100 more have been given non-judicial punishment, with 28 being dishonorably discharged. 170 more cases are pending. Bellinger insists that the claims by human-rights groups that the problems are being swept under the rug are not supported by the facts. He also disputes the stories about the "extraordinary rendition" flights around Europe, calling some of them "so hyperbolic as to be absurd." Although he acknowledged that the CIA has performed hundreds of secret flights around the continent, he insisted that "only an extremely small number" carried suspects, and never for the purpose of delivering them to secret jails where they could be tortured out of sight. He added that the last case of "rendition" was three years ago.

On another front, while the administration and the American Right have long opposed the UN-derived International Criminal Court (ICC) -- one prominent Republican sneered at it as "Kofi Annan's kangaroo court" -- the attitude seems to be shifting as well. Although there is still suspicion of the ICC in conservative circles, a consensus is emerging that it is necessary, if possibly a necessary evil, particularly to help deal with the crisis in Darfur.

With regards to Bellinger's comments, it should be remembered that the State Department disagreed with the gloves-off policy from day one, to be overruled by hard-liners such as Vice President Dick Cheney and his long-time associate, Defense Secretary Donald Rumsfeld. It does appear that State is now in the lead on the issue, but the department is confronted with an uphill fight to restore US credibility. [ED: As of 2021, the Bush Administration's "Global War On Terror" has pretty much proven a bust.]

* THERE & BACK AGAIN (2): On the Tuesday stint of my Northwest USA trip, I drove off from Spokane early. It's about a four-hour drive to Seattle, and I was trying to time myself to avoid the morning rush hour. There's not much in central Washington to obstruct traffic along Interstate 90, and it was a smooth drive -- of course taking me through the small town of George, Washington; it seems the town's founder had a corny sense of humor.

My first target was the Seattle Museum of Flight Restoration Center in Everett, north of Seattle, on Paine Field next to the main Boeing plant. I wasn't sure what to expect, and what I found in hindsight wasn't surprising: aircraft in various states of repair crammed into a workshop. I did get some nice shots of a US Coast Guard Sikorsky S-62 / H-52, essentially a scaled-down single-engine version of the well-known twin-engine Sikorsky S-61 Sea King helicopter.

There was a nice French Fouga Magister trainer -- something like a tidy sailplane with two little jet engines and a vee tail -- inside, but the shop was too cluttered to make shooting worthwhile. I think old Magisters are now being passed on to the civilian warbird circuit, and I should get a shot of one at an airshow one of these days. There was also an old BOAC Comet jetliner being restored; I got into the cockpit, and was amused to hear a British old-timer back in the fuselage directing the restoration.

Paine Field supports Boeing air operations out of the Everett plant, and I tried to get a shot of a KAL 747 jetliner, but security said "no pictures!" That was annoying, since such machines could have been snapped with no problem at any airport they operated from. Security said I could get pictures from the Boeing visitor center down the highway to the south, and I went there, only to be annoyed further. It was a very pretty installation that looked like a nice air museum from the outside and in the lobby, but I ended up paying nine bucks to get what mostly amounted to Boeing promotional exhibits. I did get a few nice pix of jet engines, but unless you want to take a factory tour, I recommend you save your money. Paine Field was much too far away to make taking pictures from the visitor center worthwhile.

Next I drove south into Seattle, to the Woodland Park Zoo north of downtown. It's a fairly typical zoo, on the high end of the scale in size and sophistication, and a good place to get nice pix. Like most places on the Northwest coast, it was lush and overgrown with green. That done, of course I had to go downtown to get pictures of the Space Needle for my photo archive. I got a set from Queen Anne Hill north of the tower, then went down to the mall at the base and got shots upward and of the elevators.

There was a "Science Fiction Hall Of Fame" in the mall complex. I thought it sounded like a tacky tourist-trap sort of thing, but I was on a vacation of sorts and indulging in something like that is part of the game. It was more or less what I expected, but I was pleased anyway because they did a fairly enthusiastic job. For example, they had a display of almost every prop sidearm ever made for science-fiction film and TV, from phasers to blasters to pulse rifles and so on.

Final stop for the day was the main Seattle Museum Of Flight at Boeing Field at the south end of town. The Museum of Flight is one of the better air museums, but I'd visited several times before and all I wanted to do was take a quick trip through to nail specific targets. The first time I was there I had a much less capable camera, now in the possession of my niece, and I wanted to duplicate some of the low-resolution pix I had in high resolution.

Since I got down there a bit ahead of schedule, I did a circuit past Boeing Field first to get a fairly nice set of shots of business jets sitting on the tarmac, then got my pix at the museum. And so, on to the motel at Federal Way -- yes, that is the name of the town -- between Seattle and Tacoma. Everything had gone pretty much on schedule, with my timing managing to generally keep me out of traffic jams. [TO BE CONTINUED]

* INFRASTRUCTURE -- WATERWORKS (1): Chapter 2 of Brian Hayes' excellent book INFRASTRUCTURE focuses on waterworks, including the network of dams and levees that (hopefully) control watercourses; the distribution system that brings water to end users; and the means by which waste water is handled.

* The Earth's surface is about two-thirds covered with water. Of all that water, 97% is salt water. The ice sheets of the polar regions account for 2%, meaning that liquid fresh water only accounts for 1%. That's still plenty to go around for humans and the Earth's other land creatures, but that fresh water is not evenly distributed. Controlling the flow of that fresh water is one of the reasons humans build dams and the like, both to collect the water when it is scarce and to keep it from becoming a flood threat when it is overabundant. The flow of water can also be used to generate electric power.

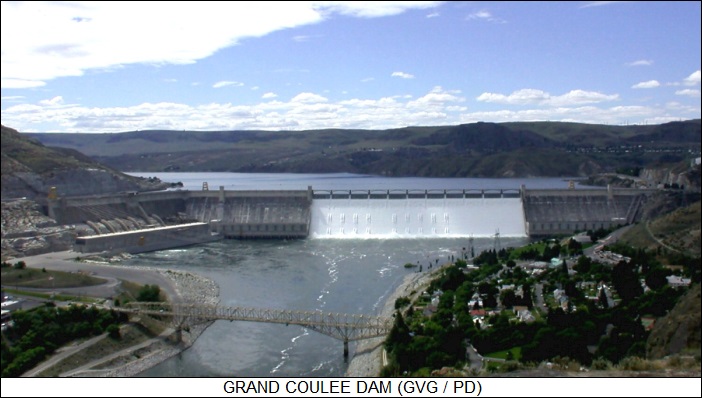

Dams are magnificent structures, some of the biggest works of humankind. There are two basic types: "gravity" dams and "arch" dams. A gravity dam is simply a big wall whose mass holds back the water, while an arch dam is curved in plan, bowing upstream toward the reservoir, so that the water pressure is carried sideways into the walls of a narrow canyon. Grand Coulee Dam in the Northwest USA is a classic example of a gravity dam, while Hoover Dam near Las Vegas is a classic arch dam. Actually, Hoover Dam is something of a hybrid, since it gains strength not only from its arch construction but also from its sheer mass.

Gravity dams may have a sloping outer face and a vertical inner face; or sloping outer and inner faces; or a buttressed outer face and a vertical inner face. In all of these cases, the structure provides bracing against the pressure of the water behind the dam. Incidentally, that pressure depends only on the depth of the water; it doesn't matter how far back the reservoir extends behind the dam.
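The point that only depth matters follows from hydrostatics: the pressure at depth h is rho * g * h, so on a vertical upstream face it rises linearly from zero at the surface to rho * g * H at the base, and the total thrust on each meter of dam width is the area of that pressure triangle, one-half rho * g * H squared, acting one-third of the way up. The sketch assumes fresh water and a vertical upstream face, and ignores uplift under the dam, silt, ice, and earthquake loads; note that reservoir length appears nowhere in it.

```python
RHO_WATER = 1000.0  # kg/m^3, fresh water (assumed)
G = 9.81            # m/s^2


def pressure_at_depth(depth_m: float) -> float:
    """Gauge pressure (Pa) at a given depth below the reservoir surface."""
    return RHO_WATER * G * depth_m


def thrust_per_meter(water_depth_m: float) -> float:
    """Total horizontal water force (N) on one meter of dam width.

    Pressure rises linearly from zero at the surface to rho*g*H at the base,
    so the force is the area of that triangle: 0.5 * rho * g * H**2.
    """
    return 0.5 * RHO_WATER * G * water_depth_m ** 2


def overturning_moment_per_meter(water_depth_m: float) -> float:
    """Moment (N*m per meter of width) about the base; the resultant acts at H/3."""
    return thrust_per_meter(water_depth_m) * water_depth_m / 3.0


if __name__ == "__main__":
    for depth in (50.0, 100.0, 200.0):
        print(f"H = {depth:5.0f} m: base pressure {pressure_at_depth(depth) / 1e6:.2f} MPa, "
              f"thrust {thrust_per_meter(depth) / 1e6:.1f} MN/m, "
              f"moment {overturning_moment_per_meter(depth) / 1e9:.2f} GN*m/m")
```

For a 100-meter depth this gives about 0.98 megapascals at the base and roughly 49 meganewtons of thrust per meter of dam width; doubling the depth quadruples the thrust.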

Gravity dams can be further subdivided into concrete gravity dams or earth gravity dams. Concrete dams are poured in segments glued together with a special grout, since if they were poured as a unit they would crack as they cured. All but the smallest concrete dams are penetrated by tunnels and galleries, and are loaded with instrumentation to keep track of the condition of the dam. One of the simplest instruments used is just a plumbline hanging into a vertical shaft; if the plumbline shifts off center, that means the dam is shifting as well. Strain gauges and temperature sensors are also fitted in a grid over the dam, and seismometers are used to check for earthquakes. The face of the dam may have surveyor's targets to check for shifting; these days, reflectors for laser measurement systems are also fitted to dams.

Earth dams usually look like nothing more than a long hillock of dirt from the outside, but they are carefully designed, with a core of impermeable clay, sometimes overlaying a grouted trench, to keep the water from seeping through and gradually undermining the dam; a layer of sand and rock to add mass; and a surface layer of broken stone called "riprap" on the inner face to resist wave erosion. The outer face may be planted with grass to resist rain and wind erosion. The earth dam does soak up some water, so it has an internal network of drainage pipes to keep the liquid from building up.

* Dams generally have a "spillway", like a set of giant water slides, to handle overflow conditions; of course, for an earth dam, the spillway will be built as a concrete element. The watercourses have a smooth curve to reduce turbulence and erosion, and are sometimes built with a "ski jump" at the end to ensure that the water pouring off the spillway doesn't undermine the bottom of the river downstream. The deep pool that accepts the water is called a "stilling basin".

The water flowing over the spillway is controlled by "floodgates". One traditional form is the "tainter gate", a curved steel face, shaped like a slice of a big cylinder and carried on radial arms, that swings up about a horizontal pivot to let water pass underneath. Smaller dams tend to have "lift gates", in which a simple rectangular plate is pulled up and down like a window sash. Each gate may have its own winch, which can be electrically driven or hand-cranked, while some dams have a traveling hoist that moves from gate to gate to set each as needed.

Not all dams rely on spillways as described above. Some actually have alternate dams known as "saddle dams" linked to the same reservoir to handle the job, while others have "morning glory spillways", which are funnel-shaped vertical tubes behind the dam that divert the water when it reaches the level of the intakes.
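The capacity of a morning glory spillway can be estimated with the standard weir relation Q = C * L * H^1.5, where H is the head of water over the lip of the funnel and L is the length of the crest, here the circumference of the funnel. The discharge coefficient of 2.0 (SI units) is an assumed round number; real values depend on the crest shape and are usually established from design charts or model tests. The formula also only holds at modest heads: once the water rises high enough, the funnel's throat fills and limits the flow instead.

```python
import math

C_WEIR = 2.0  # assumed crest discharge coefficient, SI units (m^0.5/s)


def morning_glory_discharge(reservoir_level_m: float, crest_level_m: float,
                            crest_radius_m: float, c: float = C_WEIR) -> float:
    """Approximate overflow (m^3/s) into a circular morning-glory intake.

    Uses the standard weir relation Q = C * L * H**1.5, with the crest length L
    taken as the circumference of the funnel lip. Valid only at modest heads.
    """
    head_m = max(0.0, reservoir_level_m - crest_level_m)  # no flow below the lip
    crest_length_m = 2.0 * math.pi * crest_radius_m
    return c * crest_length_m * head_m ** 1.5


if __name__ == "__main__":
    # Hypothetical funnel: lip at elevation 100 m, radius 5 m.
    for level in (99.5, 100.5, 101.0, 102.0):
        q = morning_glory_discharge(level, 100.0, 5.0)
        print(f"reservoir at {level:6.1f} m: {q:6.1f} m^3/s")
```

With a 5-meter crest radius, a 1-meter head passes about 63 cubic meters per second and a 2-meter head about 178, since flow grows with the 1.5 power of the head; below the lip, the flow is zero.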

Dams may have a number of other interesting features, such as tubes known as "penstocks" that feed a bank of hydropower turbines, and "locks" to shift vessels between the front and back of the dam. Both these systems are discussed in more detail in later installments. Dams may also have "fish ladders", in which the water cascades downward from back to front in small steps, with fish jumping from one step to the next. Some dams actually have locks to allow the fish to move upward, while others catch fish and truck them upwards.

The rear face of the dam is shielded by booms or the like to keep floating objects, including boaters, from coming too close to the dam. There is also a set of depth gauges to determine the current reservoir depth, with the gauges traditionally simply being marked poles that can be read visually. More modern gauges are automated, with devices stored in a shed built over a well connected to the reservoir.

Dams have a tendency to silt up over time, with the rate of siltation, not surprisingly, depending on how dirty the water is. Some dams have settling basins where the silt accumulates, to be dredged out periodically; others have "scour sluices" that are opened every few weeks to carry off sediments in a fast flow.

Building dams can be complicated, and there may be remnants of the construction infrastructure in their vicinity long after they are completed. Towns tend to spring up around big dams when they are constructed and remain there afterward, even sometimes growing when industries and businesses move in. There may be hills of spoils in the area, or (for earth dams) pits where the materials for the dam were obtained.

Big concrete dams have concrete plants installed nearby during construction. The plant is usually dismantled after completion of the dam, but there may be remnants of the conveyors or tramways used to transport the concrete. As mentioned in a previous installment in this series, concrete gets warm when it cures, and so refrigeration plants were often built to assist the construction of big concrete dams, with a set of cold-water pipes chilling the concrete pours. The refrigeration plant is also usually removed when a dam is completed, but some of the piping system may remain.

Dams are controversial, since they have a major environmental impact and often displace many communities and historical sites that have the bad luck to be sited where a reservoir is going to form. It is now much more difficult to build a dam, but they are still built: they're spectacular items and governments tend to see them as symbols of capability and power -- and to an extent, as generous opportunities for pork and graft. [TO BE CONTINUED]

* DIGITAL CAMERAS EVOLVE: Digital cameras have been evolving rapidly over the past decade. Up to now, the main emphasis in the digital camera market was more and more megapixels. Now the resolution of digital cameras is approaching a plateau: a pocket camera with 4 or 5 megapixels produces excellent snapshot-sized printouts, while a semi-professional camera with 8 megapixels does the job for full-sized glossies. There's no general need to go to more megapixels, particularly when the big images strain camera storage capacity.

According to an article from WIRED.com ("Looking Beyond Megapixels"), digital camera vendors are starting to push features other than sheer numbers of pixels. For example, anybody who takes pictures under low-light conditions knows how tricky it can be to get a good shot. A camera can compensate for low light by opening the aperture wider, though at the expense of a shallower depth of field (a narrower range of focus), or by leaving the shutter open longer, which generally results in blurring.

One approach to fixing this problem is to increase the light sensitivity of the camera, which is measured in ISO units. Up until now, most digital cameras stopped at ISO 400, but new cameras may go higher. The Sony CyberShot DSC-W100, for example, goes to ISO 1250. However, the problem is that simply pushing up the sensitivity of an image sensor also pushes up its sensitivity to its own internal noise, resulting in a grainy image. Those who take long-exposure tripod-mounted digital camera shots in dark environments will be familiar with this graininess, as well as with the spattering of "saturated" pixels that speckle the image with bright colorful "stars".
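The ISO trade-off is easy to put in numbers. Under the usual simplifying assumption that exposure scales with sensitivity times exposure time (aperture and scene brightness held fixed), each doubling of ISO is one photographic "stop" and halves the shutter time needed. A minimal sketch; the function names are made up for illustration:

```python
import math


def stops_gained(iso_old: float, iso_new: float) -> float:
    """Each doubling of ISO sensitivity counts as one photographic stop."""
    return math.log2(iso_new / iso_old)


def equivalent_shutter(shutter_s: float, iso_old: float, iso_new: float) -> float:
    """Shutter time giving the same exposure at the new ISO, assuming exposure
    is proportional to sensitivity x time with aperture and light held fixed."""
    return shutter_s * iso_old / iso_new


for iso in (1250, 3200):
    print(f"ISO 400 -> {iso}: {stops_gained(400, iso):.2f} stops; "
          f"a 1/8 s shot becomes {equivalent_shutter(1 / 8, 400, iso):.4f} s")
```

Going from ISO 400 to ISO 3200 is three stops, so a blur-prone 1/8 second exposure shrinks to 1/64 second; the catch, as noted above, is the noise.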

This leads to a need to develop imaging sensors that have lower noise levels. FujiFilm is introducing a new camera, the "FinePix F30", which will have ISO 3200 sensitivity. It uses a thinner sensor, which reduces the mass in which the internal noise can arise. Olympus has come up with an alternate scheme, in which the sensor is organized as clusters of three pixels, with the pixels averaged in the final image.
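Why averaging a cluster of pixels helps: if each pixel's noise is random and independent of its neighbors, averaging N readings cuts the noise by a factor of the square root of N, at the cost of resolution. The sketch below checks that with a quick simulation; it assumes independent Gaussian noise and says nothing about how Olympus actually implements its scheme.

```python
import math
import random
import statistics


def averaged_noise(sigma: float, n_pixels: int, trials: int = 20000, seed: int = 1) -> float:
    """Std. deviation of the average of n_pixels readings, each carrying
    independent Gaussian noise of std. deviation sigma (simulated)."""
    rng = random.Random(seed)
    means = [statistics.fmean(rng.gauss(0.0, sigma) for _ in range(n_pixels))
             for _ in range(trials)]
    return statistics.pstdev(means)


sigma = 10.0  # hypothetical per-pixel noise level, arbitrary units
print(f"theory:    {sigma / math.sqrt(3):.2f}")
print(f"simulated: {averaged_noise(sigma, 3):.2f}")
```

For a three-pixel cluster the noise drops to about 58% of the single-pixel level; real sensor noise is not perfectly independent from pixel to pixel, so this is a best case.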

Flash photography can be used to take pictures in dark places at short range, but the glare of a flash often creates unnatural-looking images. Casio is now offering a "Soft Flash" capability on its new cameras, such as the EX-Z850, that reduces the glare. The flash setting is manual at present, but Casio engineers are working on an automatic flash setting system. FujiFilm is introducing such an automatically adjusting flash on the FinePix F30.

Zoom is another nice feature. Most pocket cameras have a 3x zoom, but some new small cameras use clever technology to go well beyond that. The Canon PowerShot A700 has a 6x zoom, while the Panasonic Lumix DMC-TZ1K obtains a 10x zoom using a folded-optics system. Kodak is taking another approach to 10x zoom on its thin EasyShare V610, using twin 5x zoom lenses, each with its own 6.1 megapixel sensor; the output of the two optical systems is interleaved to give the full 10x zoom.

High zoom, just like long exposure times, makes blurred images more likely. Most digital camera vendors are now offering "image stabilization" on some of their cameras. A traditional image stabilization system uses accelerometer sensors to detect camera motion and drives a motor to shift the lens to compensate, but it's also possible to shift the image sensor instead. Another approach is to track the camera's movement and build up the image in segments, shifting each segment to cancel the motion of the camera and then combining it with the earlier segments.
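That last approach, tracking the motion and re-registering short exposures, is essentially a "shift-and-add". A toy sketch in plain Python, assuming the motion sensors report exact whole-pixel offsets for each segment (real cameras must cope with sub-pixel shifts and rotation); all names here are illustrative:

```python
from typing import List, Tuple

Image = List[List[float]]


def blank(h: int, w: int) -> Image:
    return [[0.0] * w for _ in range(h)]


def shift(img: Image, dx: int, dy: int) -> Image:
    """Move img by (dx, dy) pixels; pixels shifted in from outside are 0."""
    h, w = len(img), len(img[0])
    out = blank(h, w)
    for y in range(h):
        for x in range(w):
            sx, sy = x - dx, y - dy
            if 0 <= sx < w and 0 <= sy < h:
                out[y][x] = img[sy][sx]
    return out


def add(a: Image, b: Image) -> Image:
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def shift_and_add(frames: List[Image], motion: List[Tuple[int, int]]) -> Image:
    """Undo each segment's measured camera offset, then sum the segments."""
    total = blank(len(frames[0]), len(frames[0][0]))
    for frame, (dx, dy) in zip(frames, motion):
        total = add(total, shift(frame, -dx, -dy))
    return total


# A 5x5 scene with one bright point, captured in three short segments while
# the camera drifts by the (hypothetical) measured offsets below.
scene = blank(5, 5)
scene[2][2] = 1.0
motion = [(0, 0), (1, 0), (1, 1)]
frames = [shift(scene, dx, dy) for dx, dy in motion]

naive = frames[0]
for f in frames[1:]:
    naive = add(naive, f)
stabilized = shift_and_add(frames, motion)
print("naive sum, point pixel:", naive[2][2])            # light smeared over 3 pixels
print("shift-and-add, point pixel:", stabilized[2][2])   # light piled back onto 1
```

Simply summing the segments smears the point of light across three pixels, while undoing each measured offset first piles all of it back onto one.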

Other snazzy features in the latest digital cameras include smart autofocusing systems that can pick out faces and focus on them; a "critique" mode that looks over pictures and suggests how the shot should have been taken; and automatic fixes for common problems, such as brightening elements of photos that are too dark. The modern digital camera is not quite "idiot proof", but it allows even novices to get good results, and vendors are working toward the day when almost anybody can.

* GAS UP & DOWN: In my recent trip from Colorado to Washington State, I tracked gasoline prices and was somewhat surprised at the wide variation. Around here, I can get it for as low as $2.72 USD per gallon; in the Seattle area, some stations were selling it for as much as $3.24 USD a gallon, though it was more typically about $3.09 USD.

An article in THE ECONOMIST ("Much Ado About Pumping", 3 June 2006) offered some background on the erratic price fluctuations of gasoline, plus cynical comments about attempts by politicians to cash in on energy insecurity. The Right has been pointing fingers at the Left for pushing environmental regulations that have forced up the price of gasoline, while the Left has pointed its fingers back at the Right, saying that the Bush II Administration has given cronies in Big Oil a license to loot the nation. In fact, to the extent that either of these issues matters, it matters much less than the dependence of the price of gasoline at the pump on the price of crude on the international markets. The US government has little ability to control that price; President Bush can call Saudi King Abdullah and ask for relief, but that can only help so much.

Local factors still do have an influence on prices. To compensate for the loss of 10% of America's refining capacity after last fall's hurricanes, undamaged refineries were working overtime. However, they couldn't sustain that level of output indefinitely, and many refineries have had to be shut down temporarily to perform maintenance that had been deferred. Even if everything were working, there still wouldn't be the capacity to keep up with demand. It will take years to get existing infrastructure working properly and add new refineries to bring production up to the needed level.

Another factor, partly backing up the accusations of the Right about the impact of environmental regulation on prices, was that Texas and a number of states back East banned a gasoline additive named "methyl tertiary-butyl ether (MTBE)". It reduced smog emissions but was judged a potential carcinogen and banned, with ethanol to be used in its place. Unfortunately, while MTBE could be mixed with gasoline and shipped through a pipeline, ethanol separates from gasoline in a pipeline, so the two have to be mixed at or near the point of sale. It's not all that hard to do, but it did require setting up a new piece of infrastructure, which meant a temporary bump in costs.

On the positive side, high prices are attracting more fuel imports, and resulting in conservation by end users -- with the administration starting to slowly warm to the idea even if it smells like Jimmy Carter. As in the 1970s, sales of economy cars are booming. There are, overall, some reasons for confidence that prices will not rise much further and may well fall over the next few years, though not down to the levels enjoyed before the surge in gas prices. Those days are gone for good.

* One of the ironies of the gasoline situation in the USA is that the volatility of the pricing is partly due to the fact that gasoline taxes are so low -- about 18% of the price of a gallon of gas. In the UK, it's more like 67%, which means that a gallon of gas in Britain costs a bit under the equivalent of $7 USD these days. Changes in the price of crude would be less noticeable if US gas taxes were as high, but not even the greenest American politician would consider that to be anything resembling a sensible fix.
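The dampening effect is simple arithmetic. Suppose, as a simplification, that tax is a fixed amount per gallon and that a rise in crude adds the same amount per gallon in both countries. The per-gallon prices and the 50-cent rise below are hypothetical round numbers built from the shares quoted above; in reality part of the UK tax is VAT, which scales with price.

```python
def percent_rise(pretax: float, tax: float, pretax_rise: float) -> float:
    """Percent change in the pump price when only the pre-tax part goes up."""
    return 100.0 * pretax_rise / (pretax + tax)


PRICES = {"US": (3.00, 0.18), "UK": (7.00, 0.67)}  # (USD per gallon, tax share)
RISE = 0.50  # hypothetical crude-driven increase, USD per gallon

for country, (price, tax_share) in PRICES.items():
    tax = price * tax_share
    print(f"{country}: +{percent_rise(price - tax, tax, RISE):.1f}% at the pump")
```

The same 50-cent jump shows up as roughly 17% at an American pump but only about 7% at a British one.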

On the other side of the coin, American politicians can't get many points by cutting gasoline taxes, since it would have such a small effect. The alternative is showmanship, with bills in Congress to:

To hammer in the irony, high tariffs are being maintained against cheap Brazilian sugar-cane ethanol. The goal of American energy independence seems to be one that continually recedes over the horizon, no matter how far we drive down this highway.

* In the same issue, another article ("Arabian Alchemy") had an interesting note on "gas to liquid (GTL)" technology, a scheme that involves converting natural gas to a diesel-type liquid fuel. Qatar in the Persian Gulf has some of the world's biggest known deposits of natural gas. This is something of a mixed blessing; natural gas is very useful as long as the source is not too far from end users, but that's not the case with Qatar, which has to go through the troublesome and expensive process of cooling the gas to a liquid and shipping it in special tankers. As of this June, however, Qatar Petroleum -- in partnership with South Africa's Sasol company, the world leader in synthetic petroleum technology -- is shipping GTL diesel instead.

It's something of a gamble. The advantage of GTL diesel is that it can be shipped in normal tankers and pumped through normal pipelines; it requires no new infrastructure except for the conversion plant. GTL diesel also burns cleaner than ordinary diesel. GTL is, however, a relatively expensive process, and if prices of petroleum fall, Qatar is going to lose big on the exercise.

Sasol officials claim they have refined the process to make it highly cost-effective in today's fuel pricing climate, and the company is planning or considering GTL plants elsewhere. Some other oil companies are also setting up GTL plants in Qatar. If Sasol finds the effort profitable, the company wants to export its technology for converting coal to diesel-type fuel in the same way. [ED: It was a nonstarter.]

* CHORNOBYL AT 20: As discussed in an article in AAAS SCIENCE ("Return To The Inferno: Chornobyl After 20 Years" by Richard Stone, 14 April 2006), early in the morning of 26 April 1986, technicians at the nuclear plant at Chornobyl in the Ukraine botched a safety test, ironically sending Reactor Number Four into a supercritical state that led to an explosion. Two technicians were killed outright, and 28 other workers and firemen suffered lethal doses of radiation inflicted on them as they desperately tried to get the disaster under control. The reactor burned for ten days and dumped 400 times the radioactivity released by the atomic bomb dropped on Hiroshima into the environment.

The Soviet military dispatched over 600,000 "liquidators" to get the runaway reactor under control. The liquidators dampened the fire, consolidated the radioactive debris in sets of dumps, and hastily built a concrete-and-steel sarcophagus over Reactor Number Four. A third of the liquidators were in the area during the first dangerous month; their health was monitored, and those whose white blood cell counts fell too low were sent home. Civilians were evacuated from the fallout-contaminated areas.

Twenty years have passed since the Chornobyl disaster. In the town of Homyel, Belarus, radiologists still go through the town marketplace to check foods for radiation. The region is contaminated with pockets of radioactive cesium-137 and strontium-90, which will remain "hot" for decades more. Most of the food in the market is safe to eat, but the inspectors once got their hands on a slaughtered wild boar that was actually unsafe to handle, much less cook for dinner.
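How long is "decades more"? Cesium-137 and strontium-90 both have half-lives of roughly 30 years (about 30.1 and 28.8 years respectively), so ordinary exponential decay gives a feel for it. This sketch covers physical decay only; it ignores the way isotopes leach into soil and move through the food chain, which can make the practical picture better or worse.

```python
import math

# Approximate physical half-lives in years.
HALF_LIFE_YEARS = {"cesium-137": 30.1, "strontium-90": 28.8}


def fraction_remaining(years: float, half_life: float) -> float:
    """Fraction of an isotope left after `years` of radioactive decay."""
    return 0.5 ** (years / half_life)


def years_until(fraction: float, half_life: float) -> float:
    """Years needed for the isotope to decay down to `fraction` of its start."""
    return half_life * math.log2(1.0 / fraction)


for isotope, t_half in HALF_LIFE_YEARS.items():
    print(f"{isotope}: {fraction_remaining(20, t_half):.0%} left after 20 years; "
          f"{years_until(0.1, t_half):.0f} years to fall to 10%")
```

Twenty years on, roughly 60% of the original cesium and strontium is still there, and it takes about a century for either to fall to a tenth.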

Just outside the Chornobyl plant, within the "Exclusion Zone" that surrounds the plant for tens of kilometers, is a pine forest that's still too dangerous to enter without protective gear. In front of it is a grassy field parked with 2,000 vehicles, including trucks, fire engines, armored bulldozers, and helicopters. They were simply discarded there because they were too hot to deal with otherwise. The town of Pripyat -- discussed here last year -- also lies within the Exclusion Zone; it was heavily contaminated and abandoned, with its famous Ferris wheel now rusting away among the weeds.

Despite all this, life in general in the area is slowly returning to normal. Fields in the region are fertilized and limed to expel or bind radionuclides so crops can be sown. Old folks are coming back to their homes. Work is now being planned to create a structure to decisively isolate Reactor Number Four, so it can be dismantled and properly disposed of.

* The damage lingers, however. A UN report estimates that 4,000 of those who survived the accident will have their lives cut short by cancers, and there are critics who insist that this is an underestimate by at least an order of magnitude. Other health effects have been noted, including a high incidence of cataracts, birth defects, stroke, and digestive disorders, and, not surprisingly, depression and other emotional difficulties.

Researchers have been keeping an eye on the health of those affected by the accident, one of them calling the area "one big laboratory". The liquidators got the worst of it, and they have been given the most attention. The level of residual radiation still lingering in their bodies has been tracked by the simple method of collecting teeth extracted by dentists. Over 6,000 teeth have been collected in the Ukraine since 1990, and, much to everyone's relief, they show that the levels of residual radiation are much lower than feared.

The actual level of health problems among the group remains unclear, though it's not for any lack of effort on the part of data gatherers. The problem is sorting out the signal of maladies caused by radiation from those caused by smoking, alcoholism, and bad nutrition, which are common factors among the population. It's even more difficult to determine the impact of the accident on the health of citizens who were simply caught in the plume, since their exposure was much less than that of most of the liquidators.

There is one case where the impact is obvious: an epidemic of thyroid cancer among children. Growing kids absorb radioactive iodine-131 into their thyroid glands like a sponge; heavy supplements of stable iodine can block the absorption of iodine-131, but the authorities were caught flat-footed when Reactor Number Four blew, and iodine pills weren't distributed until weeks later. Over 4,000 children came down with thyroid cancer, with nine of them dying. Beyond that, it's almost anyone's guess just how much suffering the population will endure in the coming decades from the radioactive isotopes spewed out by the ruined plant.

* Engineers are still keeping a close eye on Reactor Number Four. Sensors have been placed throughout the structure; there are still places in it that could dish out a lethal dose of radiation in minutes. The reactor building and the sarcophagus, now just called the "shelter", are slowly decaying. With that in mind, work on an $800 million USD "New Safe Confinement (NSC)" structure will begin later in 2006. It will look like a steel shelter with a curved roof, and will be 150 meters long and 100 meters high (500 x 330 feet). It will be built to one side of the reactor and then rolled over it.

Construction of the structure in this unique and unpleasant environment will be a challenge. Radioactive debris was hastily buried in a haphazard fashion all over the site, and there's no telling when workers setting up the foundations for the NSC might stumble onto a cache. There's also the general problem of the radiation still lingering in the area, and so the NSC will not be built from the ground up on the site, since that could trap radiation inside the structure along with the workers. Instead, segments will be assembled in the open and then hoisted into place.

When completed in 2010, the NSC will be slid on rails to cover the reactor. This will be tricky in itself, since nobody wants to stir up radioactive dust while doing it, and it will also be important to keep the interior dry to prevent corrosion after it's sealed in place. Once sealed, the reactor will be gradually disassembled using remote-controlled cranes, with the debris removed for proper disposal. That technology is not adequate for handling parts of the reactor contaminated with radioactive fuel, and in fact nobody has any clear idea of how to do that particular job. However, the NSC is designed to easily last for a century, giving a breathing space to think over the task.

* Within a generation or so, the Chornobyl disaster will be generally forgotten by most of the inhabitants of the region. The Exclusion Zone will shrink; most of the "ghost town" structures within it have already been dismantled and buried. The only reminders will be the big NSC shelter over the ruined Reactor Number Four, and the memories of a fading generation of old folks.

* I just got back from a week-long road trip -- Sunday, drive from Loveland, Colorado, to Spokane, Washington; Monday, visit my folks and brothers; then three days in the Seattle area, taking pictures; another day in Spokane on Friday; and then back home to Colorado again on Saturday.

It was the last long-range mission of my 1983 Toyota Tercel hatchback; my new Toyota Yaris will have arrived by the time I take another long trip. It was a bit dodgy at times driving such an old car, with every odd sound that I heard or strange smell I inhaled making my heartbeat step up a bit. But, faithful beast that it has been, I got back home with no particular problems.

I did update it slightly for the trip. I found a battery-operated portable MP3 CD player for $25 USD and, at that price, couldn't pass it up: it meant I could listen to a handful of MP3 CDs instead of a pile of tapes while I was driving. I thought I'd use headphones, but then I found another gimmick that eliminated the need for them. It was basically a cassette tape casing with a lead back to the player's headphone jack and a magnetic "write head" on the output end; I just snapped it into the old car's cassette deck and could then listen to MP3 CDs on the regular speakers. It had a high background noise level, but still lower than the regular operating noise of the car itself, so no problem. It was such a simple gimmick that I suspected it had been around for a good number of years -- probably about as long as portable CD players have been around.

This was also the first trip on which I carried my new SanDisk 1 GB Cruzer USB flash drive, hung around my neck on a cord. It was straightforward to transfer pictures to it, and it also came in handy when I realized that I needed to read some PDF files on my laptop but hadn't installed the Adobe Acrobat reader to do so. I went over to my brothers' office, got into the Adobe site, downloaded all 20 MB of the Acrobat setup file into the Cruzer over a high-speed line, then went back and installed it.

* As for the details of the trip itself, they're generally dull. Spokane, I'm afraid, is like that. However, my brother Terry was having some excitement he could've done without. Not long before, a woman in a 4WD Subaru was trying to park in the multistory garage at Riverpark Square in central downtown and got a bit confused; she drove through the guardrail and tumbled five floors onto the street to her death. The one lucky thing was that she didn't land on anyone.

My brothers run the family construction firm, and they had built Riverpark Square. The unfortunate woman's family was suing the owners of the square, saying the guardrails should have held, and so Terry was a witness in the case. He believed they would settle out of court. Spokane may be dull, but bizarre things do happen there; it should be noted that it's not too far away from the fictional town of Twin Peaks. The news had a report of a pack of pit bulls roaming the suburbs, killing cats and making people fear for their small kids. David Lynch couldn't top that. I did visit a few places worth reporting, and I'll talk about them in the weekly installments that follow. [TO BE CONTINUED]

* INFRASTRUCTURE -- MINING (9): Aluminum is much superior to steel in several respects, particularly strength-to-weight ratio and electrical conductivity, and its ore, "bauxite", is also common. Smelting it is tricky, however. Aluminum was isolated early in the 19th century, but its oxide is so tightly bound that extracting the pure metal involved a difficult chemical process -- so difficult that aluminum was priced as a precious metal and regarded accordingly.

A much more effective electrolytic process for smelting aluminum was patented in 1886 by an American named Charles Hall; effectively the same process was patented at the same time by a Frenchman named Paul Heroult. After some litigation, the two men came to an agreement in which Hall had the rights to the process in North America and Heroult had the rights in Europe. The process is sometimes called the "Hall process" in the US, but technically it's really the "Hall-Heroult" process, or in Europe the "Heroult-Hall" process.

In any case, the bauxite ore is first refined into alumina, or aluminum oxide. Alumina had been known for a long time before aluminum itself was isolated, and it is a useful material on its own, being used for sandpaper grit and the like. Rubies and sapphires are basically large alumina crystals.

To extract the metal, the alumina is dissolved in hot molten salts in a big carbon-lined iron pot, about the size of a backyard swimming pool. One or more big carbon electrodes are dipped into the melt, with huge electrical currents, tens of thousands of amperes, driven through the solution. The molten aluminum metal accumulates on the bottom and is tapped out through a port.
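Why the currents are so huge: Faraday's law of electrolysis ties the mass of metal deposited directly to the charge pushed through the cell, and each aluminum ion needs three electrons. A minimal sketch; the 50,000 ampere cell and the 90% "current efficiency" (the share of the current that actually produces metal) are assumed round figures, not data for any particular smelter.

```python
FARADAY = 96485.332      # coulombs per mole of electrons
MOLAR_MASS_AL = 26.982   # grams per mole
ELECTRONS_PER_ATOM = 3   # Al(3+) + 3 e- -> Al


def aluminum_kg(current_a: float, hours: float, current_efficiency: float = 1.0) -> float:
    """Aluminum deposited by one cell, from Faraday's law of electrolysis."""
    charge = current_a * hours * 3600.0                      # coulombs
    moles = current_efficiency * charge / (ELECTRONS_PER_ATOM * FARADAY)
    return moles * MOLAR_MASS_AL / 1000.0


print(f"ideal:   {aluminum_kg(50_000, 24):.0f} kg per cell per day")
print(f"at 90%:  {aluminum_kg(50_000, 24, 0.9):.0f} kg per cell per day")
```

Even at 50,000 amperes a cell yields only about 400 kilograms of metal a day, so a big smelter needs many cells running at once.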

An aluminum smelter contains rows of such "cells", with the rows called "potlines". The smelter is fitted with a heavy-duty ventilation system to draw off fumes from the cells, with the exhaust filtered through scrubbers. The fumes include hydrogen fluoride, which is a nasty pollutant that produces hydrofluoric acid, leading to acid rain with a real attitude, and so the hydrogen fluoride has to be almost completely eliminated.

An aluminum smelter will of course include a rolling mill to provide finished product. The smelter will also have a distinctively heavy-duty electric power substation to provide the torrents of electricity required for the Hall-Heroult process, and in fact aluminum smelters tend to be located where there is plenty of cheap power available. The fact that smelting aluminum is so energy intensive makes it inherently more expensive than ordinary steels to produce, and so aluminum is heavily recycled.
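How energy-hungry is it? By Faraday's law of electrolysis, each kilogram of aluminum needs a fixed amount of electric charge (three electrons per atom), and the electrical energy is that charge times the cell voltage. The 4.2 volt cell voltage and 92% current efficiency below are assumed illustrative values; real cells vary, and the figure leaves out the power needed for the rest of the plant.

```python
FARADAY = 96485.332      # coulombs per mole of electrons
MOLAR_MASS_AL = 26.982   # grams per mole


def kwh_per_kg(cell_voltage: float, current_efficiency: float = 1.0) -> float:
    """Electrical energy to smelt 1 kg of aluminum at a given cell voltage."""
    coulombs_per_kg = 3 * FARADAY / current_efficiency * (1000.0 / MOLAR_MASS_AL)
    return cell_voltage * coulombs_per_kg / 3.6e6   # joules -> kilowatt-hours


print(f"{kwh_per_kg(4.2, 0.92):.1f} kWh per kg of aluminum")
```

Under these assumptions it comes to roughly 13 to 14 kilowatt-hours for every kilogram of metal.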

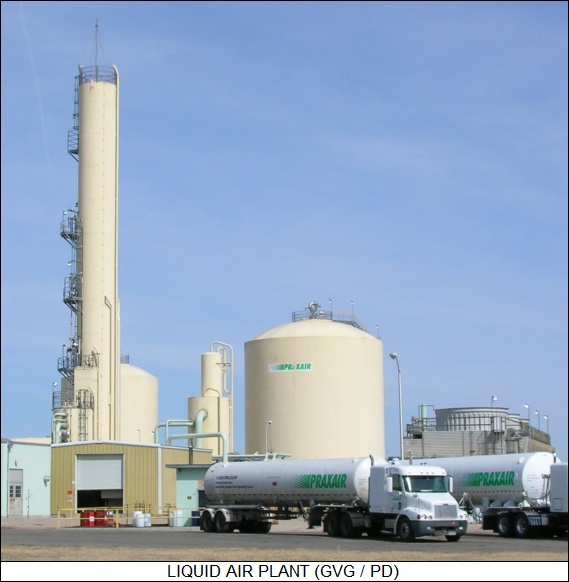

* As a final shot on the subject of mining, consider the production of gases such as oxygen and nitrogen. These gases can be "mined" from the atmosphere itself by a cooling process. Any city of reasonable size has such a "liquid air" plant, since it's not cost-effective to haul gases long distances.

The core of a liquid air plant is the "coldbox", which includes a big refrigeration unit and a tall "distillation tower" to separate the gases. The refrigeration unit operates on general principles no different from that of a household refrigerator -- compressing a gas, allowing it to cool to ambient temperature in a heat exchanger, then allowing it to expand to produce an abrupt drop in temperature -- except for scale and elaboration. The operation of the distillation tower is based on the fact that the boiling point of nitrogen is lower than that of oxygen: the liquefied gas is allowed to evaporate at the base of the distillation tower, filtering upward through layers of cooled, perforated metal trays.

The holes in the trays feed through short vertical pipes that are fitted with caps in the form of wide, upside-down open cans; the vapor flows through the pipes, hits the cap, and has to flow down its sides to escape and rise further. If the temperature at that level is low enough, the appropriate component of the vapor will liquefy inside the cap, dripping down its sides onto the tray to be collected. The lower trays capture and draw off oxygen, while the upper trays take the nitrogen.
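The principle can be caricatured in a few lines: a component liquefies on the first tray, going up, that is colder than its boiling point. This is a deliberately crude sketch, assuming ideal all-or-nothing condensation at atmospheric-pressure boiling points (nitrogen about 77.4 K, oxygen about 90.2 K) and made-up tray temperatures; a real column works at elevated pressure with mixtures in equilibrium on every tray, and the minor gases in air are ignored.

```python
BOILING_POINT_K = {"nitrogen": 77.36, "oxygen": 90.19}  # at 1 atmosphere


def toy_tower(tray_temps_k):
    """Walk up the trays (bottom first). Whatever is still vapor and has a
    boiling point above a tray's temperature liquefies on that tray."""
    vapor = set(BOILING_POINT_K)
    collected = []
    for temp in tray_temps_k:
        liquid = sorted(g for g in vapor if BOILING_POINT_K[g] > temp)
        vapor -= set(liquid)
        collected.append((temp, liquid))
    return collected, vapor


trays, leftover = toy_tower([91.0, 89.0, 83.0, 76.0])  # hypothetical temperatures
for temp, liquid in trays:
    print(f"tray at {temp:5.1f} K collects: {', '.join(liquid) or 'nothing'}")
print("still vapor at the top:", leftover or "nothing")
```

As in the description above, the oxygen drops out on a lower, warmer tray and the nitrogen only near the cold top.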

Of course, the liquid air plant is dotted with storage tanks for the end products: spherical pressure tanks, "bullet" pressure tanks in the form of a cylinder with rounded ends, and large insulated storage tanks. Trucks hauling bullet tank trailers deliver the gases to end users. A liquid air plant is something that is easily taken for granted, but once it is seen for what it is, it becomes an exotic piece of local technology. [END OF SET 1]

* THERMOPHOTOVOLTAICS FOR YOUR CAR: After being stranded in Des Moines, Iowa, for a few days in 2004 when the alternator in my Toyota went south, I was very interested to read an article from TECHNOLOGYREVIEW.com ("An Alternative To Your Alternator" by Kevin Bullis) that involved work at MIT on using "thermophotovoltaic (TPV)" technology to provide a no-moving-parts replacement for the alternator.

TPV technology involves heating up a light-emitting material and using photovoltaic (PV) cells to convert the light emission into electricity. The idea has been around since the 1960s, but it's taken a long time to develop TPV devices that combine good efficiencies with low cost. The MIT scheme involves burning gasoline to heat a tungsten emitter. The emitter is etched to cover its surface with nanoscale pits that act as resonant chambers, producing light at the proper wavelengths for use by the surrounding PV cells. An optical filter separates the emitter from the PV cells, passing wavelengths useful to the PV cells while reflecting other wavelengths back to the emitter, helping to keep it hot.
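A rough calculation shows why the filter and the tailored emitter matter. A PV cell can only use photons with wavelengths shorter than a cutoff set by its bandgap, and a hot emitter puts most of its energy out at longer wavelengths. The sketch below treats the emitter as an ideal blackbody (bare tungsten is not one, and the etched pits are meant to reshape its spectrum) and assumes a 1500 K emitter and a gallium antimonide cell with a bandgap of about 0.72 eV; neither figure comes from the MIT work.

```python
import math

C2 = 1.4388e-2  # second radiation constant hc/k, metre-kelvins


def fraction_below(cutoff_m: float, temp_k: float, steps: int = 20000) -> float:
    """Share of an ideal blackbody's emitted power at wavelengths below cutoff_m.

    With x = hc / (wavelength * k * T), that share is
    (15 / pi^4) * integral from x_c to infinity of x^3 / (e^x - 1) dx.
    Integrated with Simpson's rule; the tail beyond x_c + 60 is negligible.
    """
    x_c = C2 / (cutoff_m * temp_k)
    upper = x_c + 60.0
    h = (upper - x_c) / steps  # steps must be even for Simpson's rule

    def f(x: float) -> float:
        return x ** 3 / math.expm1(x)

    total = f(x_c) + f(upper)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * f(x_c + i * h)
    return 15.0 / math.pi ** 4 * total * h / 3.0


cutoff = 1.2398e-6 / 0.72  # cutoff wavelength in metres for a 0.72 eV bandgap
print(f"usable share at 1500 K: {fraction_below(cutoff, 1500):.0%}")
```

Under these assumptions only about a fifth of the blackbody output falls at wavelengths the cell can use; the rest would be wasted unless, as in the MIT design, a filter returns it to the emitter.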

A TPV system might sound inefficient, but the conventional arrangement isn't much better: an internal combustion engine is only about 30% efficient in converting fuel energy into motion to run an alternator, and the alternator is only about 50% efficient in converting the motion into electricity, giving an overall efficiency of only 15%. The lack of moving parts is another potential plus. This automotive TPV system is strictly a lab demonstration at present, and finding out if the idea flies will take a few more years' work.
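The 15% figure is just the product of the stage efficiencies, and the same bookkeeping gives a target for a TPV unit. The 60% heat-to-usable-light figure below is a made-up placeholder to show the break-even calculation, not a number from the MIT work.

```python
from math import prod


def chain_efficiency(*stages: float) -> float:
    """Overall efficiency of energy-conversion stages connected in series."""
    return prod(stages)


def break_even_stage(target: float, *other_stages: float) -> float:
    """Efficiency the remaining stage needs for the whole chain to hit target."""
    return target / chain_efficiency(*other_stages)


engine_plus_alternator = chain_efficiency(0.30, 0.50)
print(f"engine + alternator: {engine_plus_alternator:.0%}")
# Hypothetical: 60% of the fuel's heat ends up as light the PV cells can use.
print(f"PV stage needed to match it: {break_even_stage(engine_plus_alternator, 0.60):.0%}")
```

With that placeholder, the PV cells would need to convert about 25% of the light they receive just to break even with the engine and alternator.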

* MONEY FOR NOTHING: In other energy news, an IEEE SPECTRUM article from January 2006 ("Get-Rich-Quick Scheme" by William Sweet) outlined the clever and effective business strategy of a New York City (NYC) company named ConsumerPowerline (CP), founded by Michael B. Gordon and Vinay Gupta.

CP was the result of a 2001 ruling from the US Federal Energy Regulatory Commission (FERC) that, among other things, promoted paying electricity end-users if they cut power usage during declared power crunch times. Gordon and Gupta saw the opportunity very quickly and formed CP to take advantage of it.

CP targets major electricity users in NYC, for example the operator of an office building. CP's sales pitch is to suggest to the operator that he or she can save energy and make money through the New York Independent System Operator's Emergency Demand Response Program (EDRP). If the operator likes the idea, CP then inspects the operator's account with Consolidated Edison, the NYC power utility, and shows how much profit the operator can make if CP's operational recommendations are followed. The data analysis is actually done at a center in New Delhi. Once the operator signs up, CP installs a special metering system to link the office building to the power grid. The metering system figures out the power savings and reports them so the operator can be paid appropriately by the EDRP. The real beauty of CP's pitch is that it's all gravy to the operator: CP doesn't take a penny up front, instead taking a cut of the EDRP payments.

As of the beginning of 2006, CP had about 400 accounts with 40 clients, including the prestigious Macy's department store. Overall, CP monitors about 450 megawatts of load, and about 10% of that can be curtailed on demand. CP officials believe they will eventually be monitoring most of the power load of New York State.
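The economics are easy to sketch. The payment rate, the length of the curtailment event, and the aggregator's share below are all hypothetical placeholders, since the program's actual payment terms and CP's actual cut are not given here; only the 450 megawatt and 10% figures come from the article.

```python
from typing import Tuple


def event_payment(curtailed_mw: float, hours: float, usd_per_mwh: float) -> float:
    """Payment for load shed during one declared emergency event."""
    return curtailed_mw * hours * usd_per_mwh


def split_payment(payment: float, aggregator_share: float) -> Tuple[float, float]:
    """Divide a payment between the building operators and the aggregator."""
    fee = payment * aggregator_share
    return payment - fee, fee


curtailable_mw = 450 * 0.10  # figures from the article
payment = event_payment(curtailable_mw, hours=4, usd_per_mwh=500.0)  # hypothetical terms
clients, aggregator = split_payment(payment, aggregator_share=0.30)  # hypothetical cut
print(f"event pays ${payment:,.0f}: ${clients:,.0f} to clients, ${aggregator:,.0f} to CP")
```

Because CP's income is a share of payments that only exist when load is actually shed, the operators risk nothing up front, which is the point of the pitch.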

* BLIND MAN'S BLUFF: Modern medical practice is the result of centuries of scientific evolution and is armed with an impressive array of modern technologies. The obvious question is: how much does it all accomplish? According to an article in BUSINESS WEEK ("Medical Guesswork" by John Carey, 29 May 2006), a medical researcher named Dr. David Eddy from Aspen, Colorado, has the answer: not nearly as much as it should.

For example, in a presentation to the board of the Kaiser Permanente Care Management Institute, Eddy showed that conventional treatments for diabetes were not particularly effective in reducing incidence of heart attacks and strokes that are consequences of the disease, and that a simpler, cheaper regimen of aspirin and generic drugs to lower blood pressure did a better job. The board took Eddy's recommendations to heart and is now implementing the changes he has suggested.

Eddy doesn't think this was an isolated example, either, claiming that there is no evidence that the majority of treatments being given to patients are effective. He has demonstrated that annual chest X-rays are worthless, and that the high rate of cesarean sections is not based on any scientific rationale. He has his critics but also his defenders, one claiming that the rate of treatments honestly known to be effective is no higher than 25%. The 64-year-old Eddy's prescription is simply an emphasis on basic science, what he calls "evidence-based medicine", a phrase he invented in the 1980s. He has actually implemented some of his thinking in a computer program named Archimedes that integrates established scientific findings about diabetes to determine the most effective treatments, factoring in costs.
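Weighing treatments on both cost and effect is the core of this kind of analysis. A minimal sketch of the standard comparison, using the incremental cost-effectiveness ratio; the two options and their numbers are invented for illustration, are not Eddy's or Kaiser's figures, and say nothing about how Archimedes itself works.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    cost: float    # cost per patient, USD
    effect: float  # e.g. heart attacks and strokes prevented per 1,000 patients


def compare(new: Option, old: Option) -> str:
    """Classify `new` against `old`, or give its incremental cost-effectiveness ratio."""
    d_cost = new.cost - old.cost
    d_effect = new.effect - old.effect
    if d_cost <= 0 and d_effect >= 0:
        return f"{new.name} dominates: no more costly and at least as effective"
    if d_cost >= 0 and d_effect <= 0:
        return f"{new.name} is dominated: no cheaper and no more effective"
    return f"{new.name}: {d_cost / d_effect:,.0f} USD per extra unit of effect"


usual = Option("usual regimen", cost=5000.0, effect=20.0)                # hypothetical
simple = Option("aspirin + generic BP drugs", cost=1500.0, effect=30.0)  # hypothetical
print(compare(simple, usual))
```

When the cheaper option also does more good, as Eddy found with the diabetes regimens, no ratio is needed: it simply dominates.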

* With three generations of doctors in his family before him, there was no doubt about what Eddy would do for a career, and in the 1970s he was working in cardiac surgery at the Stanford Medical Center. However, he soon came to the conclusion that the treatments doctors were giving patients seemed to be based more on instinct than science. That ended up drawing him toward the rigor of math: he took advanced courses in the field and then got a job with the Xerox Palo Alto Research Center (PARC), a famous home of "blue-sky" technology research.

The result of this roundabout excursion was his PhD thesis, which performed a statistical analysis of cancer screening that showed annual chest X-rays and pap smears for low-risk patients were worthless, a simple waste of money. He received awards for his work, obtained a full professorship at Stanford, and then became chairman of the Center for Health Policy Research & Education at Duke University. He acquired a following, with one of his admirers calling him the "father of health economics".

Other people had different names for him. When a preprint of a paper he had written, criticizing conventional treatments for glaucoma as ineffective and even possibly counterproductive, was distributed to ophthalmologists, he was attacked so bitterly that, as he puts it, he "felt like Salman Rushdie." Unintimidated, he challenged the community to come up with proof that their treatment actually worked, and the research was then done.

In 1985, he gave up his organizational work and took on a more advisory role, lecturing doctors and challenging their assumptions. He liked to give doctors a scenario for a patient and a typical treatment, then ask what the probability of success was. The answers usually ranged from 0% to 100%. Eddy points out that this fuzziness of opinion means that expert medical testimony in court cases may be completely sincere but still totally untrustworthy: "You don't have to hire an expert to lie. You can just find one who truly believes the number you want."

The results can be ineffective medicine at high cost, or worse. In one notorious case, the use of "autologous bone marrow transplants (ABMT)" to treat women with advanced breast cancer -- discussed here some months back -- Eddy testified that the treatment was expensive, drastic in every sense of the word, and that there was no hard evidence that it was effective. The tide was against him, and insurers were pressured to go along with funding ABMTs on a large scale. Finally, studies conclusively showed that ABMT did nothing to improve the survival rate of breast-cancer patients. It was a massive waste of time and money, and worse, it forced patients who ended up dying anyway to suffer the agonies of the damned before they did so. Eddy says that physicians who pushed the procedure "owe this country an apology."

* Eddy sees the tendency to recommend ineffective treatments as due to a number of factors. Sometimes doctors will push a treatment that pays them better than an alternative; and sometimes they will push a treatment simply to make sure they have covered all the bases, in hopes of avoiding a malpractice suit, even when the treatment is not very useful. There is also the old saying: "When all you have is a hammer, all you see is nails." -- and specialists will tend to push their own pet treatments without considering alternatives that might be cheaper or work better. By the same logic, clinics with expensive new medical gear will want patients to use it, not merely to help pay it off but because it's the hammer they have.

Patients are also caught up in the game. Americans are inclined to the belief that "when all else fails, get a hammer -- if that doesn't work, get a bigger hammer." In other words, a more aggressive and expensive treatment may well be assumed to be more effective than a simple and cheap treatment, whether the facts bear that out or not. Patients who are fighting for their lives may also press for funding of dubious treatments -- ABMT for breast cancer was a particularly ugly example -- and politicians who have no strong background in science or medical practice may back them up.

The simplistic answer to sorting out the maze is more and better clinical trials, but the reality is that clinical trials are difficult, expensive, and can be lengthy -- in some cases, lasting generations. Trials can be and are conducted, of course, but the more sophisticated answer is to integrate education into the therapeutic process. Organizations like the "Foundation For Informed Medical Decision Making" provide booklets and videos to give patients the proper information on potential treatments. Says a Kaiser Permanente official: "The popular version of evidence-based medicine is about proving things, but it is really about transparency -- being clear about what we know and don't know." Studies comparing patients given clear data about their options with a control group that simply accepted their doctors' recommendations showed that the first group opted for the more aggressive treatments much less often than the control group did, and had more realistic expectations of the results.

Eddy's computer simulation Archimedes offers a third path to clearing the air. He hired top-grade computer programmers to put the program together, with the goal of providing the most accurate model of the progression of diabetes available at the time. Archimedes was then used to conduct simulated long-range clinical trials of various treatments. Kaiser Permanente adopted the recommendations provided by Archimedes, and they seem to be working much the same way in the real world as they did in the virtual one.

There was no absolute guarantee that would be the case, of course: simulations are no better than the assumptions on which they are based, or as the old saying goes: "garbage in, garbage out". Eddy admits that Archimedes is crude, "like the Wright brothers' plane", but it seems to have worked, and there's a lot of room for refinement of the technology, with Eddy saying that it won't be too long before "we're offering transcontinental flights with movies." Whatever its limitations, simulation may help break the logjam and take medical practice out of its dark age of guesswork.

* DEATH OF A TERRORIST: On the evening of 7 June 2006, the Iraqi government and the US military jointly announced with evident satisfaction that Abu Musab al-Zarqawi -- the Jordanian-born terrorist who had acquired a reputation for extreme viciousness in his actions in Iraq and earned himself the top place on the American "most wanted" list -- had been killed in an airstrike, with his body positively identified. An al-Qaeda website later conceded his death. There are hints that Jordanian intelligence discreetly assisted in the operation and helped identify the corpse: he was also on the "most wanted" list in Jordan after carrying out a set of ghastly hotel bombings there last November. Iraqi police, who have been particular targets of terrorist attacks, were videotaped dancing in the streets and firing their weapons in the air in celebration.

It was Abu Musab al-Zarqawi's liking for making videos that was his undoing. US intelligence obtained a video he had made, publicized "outtakes" of him fumbling with a Minimi light machine gun, and also managed to determine where it had been shot, leading to arrests in the area. This led to a tipoff to the whereabouts of his "spiritual adviser" Sheik Abd-al-Rahman, who was then located and tracked. When a meeting of senior terrorist leaders was called at a "safe house" in the town of Baquba, north of Baghdad, which had been staked out for several weeks, two F-16 fighters on standby alert were dispatched, each dropping a 225 kilogram (500 pound) GPS-guided bomb on the house, with the targeting video footage released along with the briefing. Six people were killed in the strike.

Iraqi police moved in to inspect the ruins, where they pulled the mortally injured terrorist from the rubble -- he died soon afterward -- while 17 security raids were immediately conducted to snatch up the elements of the terrorist network that had been under observation up to the strike. Dozens more raids were performed the following night. The operation was a serious blow to the al-Qaeda network in Iraq, not merely cutting off its head but doing damage to the body as well. There was a $25 million USD price on his head; it is unclear if anyone will collect.

Anybody who had followed the bloody career of Abu Musab al-Zarqawi knew that he would inevitably die a violent death sooner or later -- his own brother was quoted on Arabic news TV as saying as much -- and how much this will affect the wretched security situation in Iraq is arguable. It would be pleasant and by no means out of the question to find the tide flowing increasingly against the insurgency from now on. One of the features of a protracted war of attrition is that the "tipping point" in the battle may not be obvious at all until years after the fact: if you're hurting, it may not be apparent that the other guy is hurting a lot worse until he falls over.

However, absolutely nobody is expressing any faith in that happening. It is significant that a player whom nobody would believe could ever be interested in negotiation has been taken off the game board, but US intelligence has already identified candidates to replace him. Abu Musab al-Zarqawi's control over the organization had been on the decline anyway, with many questioning his taste for the gruesome and in particular his outspoken and violent bigotry against Shiites, which is not shared by all Sunnis.

The foreign al-Qaeda insurgents were always a small minority, with the real fury taking place between Sunni and Shiite. Baghdad has been suffering through a particularly nasty series of sectarian bombings and shootings, with officials announcing that over 6,000 citizens of the city had been killed between 1 January and 31 May of this year. Still, on a more encouraging note, the Iraqi government announced that Defense and Interior ministers had finally been appointed, after an extended and frustrating session of haggling.

The main beneficiary of the killing of Abu Musab al-Zarqawi will likely be the US military, which had been reeling under the impact of the revelation that a group of Marines lost control after losing one of their own to a roadside bomb in Haditha last November and indiscriminately shot down several dozen civilians. That issue is not going to just go away, but it is certainly a relief for the brass to have some high-profile positive news to pass on to the public and regain some lost faith in American military professionalism. The news was even enough to cause a small if temporary drop in oil prices.

* As if to compensate for good news, the Bush II Administration also had to confront a report issued by the Council of Europe describing secret CIA flights that performed "extraordinary rendition" of terror suspects to prisons in European countries, with the report insisting that there was clear evidence of collusion with European governments on the matter. The State Department's chief legal counsel, John Bellinger, derided the report in a BBC interview, saying it consisted of "rumor and innuendo", contained "clear inaccuracies", and read like an article in a "supermarket tabloid".

In other news of the War On Terror, on 3 June Canadian law enforcement swept down on a suspected cell of Islamic militants in the Toronto area, arresting seventeen, including five youths. One of the suspects had shown the bad judgement of trying to obtain tonnes of ammonium nitrate for a bomb from a Canadian undercover agent. Alleged details of the plot indicated plans to blow up the Canadian Parliament building in Ottawa as well as other structures, with one of the suspects saying he planned to behead Canadian Prime Minister Stephen Harper. Related arrests were made in Europe and Bangladesh.

A day earlier, British authorities had descended on the residence of two young London men after being tipped off that the duo were building a chemical weapon of some sort. Some of the police involved in the raid wore chemical protection suits. One of the suspects was shot in the shoulder during the operation. However, since that time there has been no report of any chemical weapon being found, leading to protests. British Prime Minister Tony Blair backed the police, saying that they had acted on "reasonable intelligence", and pointed out that if they had failed to act under such circumstances and not stopped an actual terrorist plot, that "you could only imagine ... what the outcry would be then ... "

* ALTERNATIVE FUELS COMPARED (3): The term "biodiesel" actually covers almost any fuel derived from biological sources that can be used to run a diesel engine, including vegetable oil, cooking oil, and rendered chicken fat. Diesels are very tolerant of the fuels they burn; Rudolf Diesel actually ran his engine on peanut oil at the Paris Exposition in 1900. Nowadays, an outfit in Missouri named Greasel Conversions sells a kit that can be installed in a diesel truck to allow it to burn waste cooking oil from fast-food restaurants as fuel.

Commercially available biodiesel fuels are often made from soybean oil or sometimes cottonseed oil. Whatever the source, the oil is processed through a chemical reaction called "transesterification", which separates out glycerin, and the result is purified of other contaminants. Biodiesel has its attractions: its energy density is about the same as that of gasoline, and it burns much cleaner than petrodiesel. A "B20" blend of 20% biodiesel and 80% petrodiesel is about as efficient as pure petrodiesel and has noticeably lower emissions.
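The "Bxx" naming is simply the biodiesel percentage of the blend, so B20 is 20% biodiesel and 80% petrodiesel. A minimal sketch of what the label means for a tank of fuel, assuming the percentage is by volume; the function name `blend_volumes` is hypothetical and only for illustration:

```python
import re


def blend_volumes(label: str, total_liters: float) -> tuple[float, float]:
    """Split a tank of blended fuel into (biodiesel, petrodiesel) liters.

    Assumption: the number after "B" is the biodiesel share as a
    volume percentage, e.g. "B20" = 20% biodiesel, 80% petrodiesel.
    """
    match = re.fullmatch(r"B(\d{1,3})", label.strip().upper())
    if match is None:
        raise ValueError(f"not a blend label: {label!r}")
    percent = int(match.group(1))
    if percent > 100:
        raise ValueError(f"blend percentage out of range: {percent}")
    biodiesel = total_liters * percent / 100
    # Whatever is not biodiesel is conventional petrodiesel.
    return biodiesel, total_liters - biodiesel


for label in ("B20", "B30", "B100"):
    bio, petro = blend_volumes(label, 100.0)
    print(f"{label}: {bio:.0f} L biodiesel + {petro:.0f} L petrodiesel")
```

For a 100 liter tank this gives 20 liters of biodiesel for B20 and a full 100 liters for B100, the neat fuel that, as noted below, is most prone to gelling in cold weather.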

On the minus side, biodiesel is more expensive than petrodiesel, and in cold weather high-concentration blends like B30 or B100 tend to turn into waxy solids, requiring additives or fuel-warming systems. (The Greasel system, which relies on unprocessed oil, requires the driver to remember to switch back to normal diesel fuel before the end of a trip, lest the engine end up clogged.)

However, sales of biodiesel are climbing. It is very popular in Europe, and production is also increasing in the USA, rising from 94 million liters (25 million US gallons) in 2004 to three times that level in 2005. As with ethanol, the farming community has been a prime mover, seeing biodiesel as a way (in principle) to supply their own fuel and (in practice) to make money from otherwise fallow land.

* Electric cars have been around since the beginning of automotive technology, with power provided by banks of lead-acid batteries. Electric cars have virtues, being quiet, clean, and peppy in operation, and electricity is also relatively cheap. However, batteries typically don't have the storage capacity to provide more than about 160 kilometers (100 miles) of range, and they require long charging times. In addition, batteries can only be recharged a fairly limited number of times before they have to be replaced. Critics also point out that electric cars do little to reduce carbon emissions, since the power is generally derived from coal-fired power plants.

There is some interest at present in a "plug-in hybrid", which is a conventional gas-electric hybrid with a large battery bank that allows it to make short trips without using any gasoline. The batteries can be recharged from a power socket at night. Unfortunately, this is a fairly complicated and expensive solution.