* 22 entries including: oil & gas infrastructure, global warming survey, brain games, Orion space capsule, extended warranties, store security systems, ZigBee home networks, H2S doomsday, AIDS in the USA, business investment in Africa, fraud-spotting software, green building certification, electronic textbooks, cybercrime foot soldiers, six word stories.

* KEEPING AN EDGE: An article in BUSINESS WEEK ("Chicken Soup For The Aging Brain" by Catherine Arnst, 25 September 2006) examined an emerging market in computer gaming: games designed to help the elderly maintain their mental edge. Oldsters can now play BRAIN AGE games on the Nintendo DS handheld to keep sharp, with the Nintendo program written with help from Japanese neuroscientist Dr. Kawashima Ryuta -- or try out the more aggressive "Brain Fitness Program" from Posit Science Corporation, founded by neurologist Michael Merzenich of the University of California at San Francisco.

The problem with such products is that there is no medical evidence that they accomplish very much. To be sure, an oldster who tries to remain mentally active will do better than one who doesn't, but the idea that such "exercises" actually arrest brain degradation has no clear basis in the evidence. The seeming effectiveness of a game may be dependent on selection effects: the sharper oldsters are attracted to the games.

Merzenich is himself convinced that his software is medically useful, believing that such gaming activity helps old folks rewire their brains and claiming that PET scans of brain function in target groups provide some evidence in confirmation. Nintendo is more conservative about the BRAIN AGE games, simply saying that they are ordinary games targeted at oldsters. The games are cheap and are wildly popular in graying Japan. Even critics have not gone so far as to condemn such exercises as ripoffs. At the very least, as one of them said, they can provide some reassurance: "If you can still do it, then you know you haven't lost it yet."

[ED: I found this interesting because I'm now in my early 50s and my short-term memory is clearly going south. There's no way to stop it as such -- but it is possible to compensate. Many decades ago a classmate told me: "You can't exercise your brain!" It was one of those trivial comments I never forgot, because it was, as emphatic assertions often are, almost completely wrong. It's not possible to do calisthenics to toughen up the neurons, but it is possible to acquire better "software", establishing procedures to work around the failures of memory. It's a simple matter to make a habit of doublechecking or even triplechecking. Sometimes leaving a physical reminder helps -- a postit for example, or maybe placing an object relevant to a chore on the kitchen table, or even on the floor where it's certain to be noticed.

I've also learned not to try to do several things at a time, focusing on one until I can nail it down; to make sure I leave my car keys and wallet in specific places; and to spend more effort making sure I've planned things out before I go do something. Another realization is that if short-term memory drops something, it will often "percolate" back up later, and I've also learned to backtrack on what I was just doing to see if it cues the memory again.

Whether computer games could help much in this effort is unclear, but the concept certainly seems worth a shot. I do find the idea that they "rewire the brain" to be arguable; it is enough to simply improve the brain software, the schemes we use to think about things and get things done, to work around the holes -- the same way that space probes are reprogrammed to operate around faults. Still, no matter the details, in the end all that can be done is to compensate for a generally unavoidable degradation in hardware. Reminds me of the gag that the nice thing about Alzheimer's is that you can hide your own Easter eggs.]

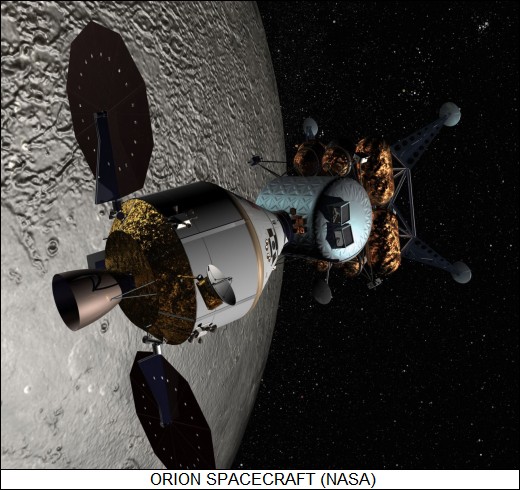

* ORION IN PROGRESS: The US National Aeronautics & Space Administration (NASA) has been working hard to revitalize itself recently, focusing on sending astronauts back to the Moon as a first step towards an expedition to Mars. As reported in AVIATION WEEK ("Next Up" by Frank Morring JR, 4 September 2006), in late August NASA selected Lockheed Martin to build the new "Orion Crew Exploration Vehicle", the replacement for the NASA space shuttle. The competition between Lockheed Martin and a team consisting of Northrop Grumman and Boeing was intense, with Lockheed Martin edging forward on the basis of a more aggressive schedule. NASA is in a hurry: the first human flight of the Orion is scheduled to take place in September 2014, four years after the last flight of the shuttle.

The Orion has been described by NASA Administrator Michael Griffin as "Apollo on steroids". The Orion command module will look much like the Apollo command module, consisting of a simple conical capsule with a convex heat shield on the base, but is much larger. The Orion command module has a diameter of 5 meters (16 feet 6 inches) and a pressurized volume of 19.6 cubic meters (25.6 cubic yards), with half of that available for a crew of up to six for transfer to the International Space Station (ISS).

The pressure shell of the Orion command module will be made of aluminum-lithium alloy. It will be protected from micrometeorite and space debris strikes by blankets made of Nextel and Kevlar. The current concept for the heatshield envisions it as being made of phenolic impregnated carbon. The command module has an off-axis center of gravity, allowing it to perform maneuvers on reentry by rotating around its axis.

The current concept envisions the Orion command module as landing on solid ground, just as does a Russian Soyuz return capsule, using a four-parachute array fitted with a retrorocket that will fire just before touchdown. Protection from the force of impact will be provided by a crushable structure underlying the heat shield and shock-absorbed crew seats. Ground recovery would eliminate the expense of operating an ocean recovery fleet, but the capsule will still be able to land at sea if there is a launch abort. Studies are also being performed to see how much of the command module will be recyclable; presumably the heat shield will have to be replaced between missions, but other elements may be salvageable for follow-on flights.

The Orion service module is only vaguely like the Apollo service module. It consists of a stubby cylinder with a main propulsion unit, maneuvering thruster arrays, communications antennas, and twin "pizza pan" solar arrays -- Apollo used fuel cells, but Orion is being designed for longer missions and needs a more continuous source of power. The main propulsion unit will be a rocket engine derived from the shuttle's Orbital Maneuvering System (OMS), providing 33.4 kN (3,400 kgp / 7,500 lbf) thrust. The reaction control jets in the thruster arrays will use non-toxic propellants. Avionics, life-support, and propulsion systems are being designed to ensure that the spacecraft and crew can survive failure of any two primary subsystems.

On lunar missions, the Orion will carry a four-person crew, which will descend to the surface on a new lunar landing vehicle, the "Lunar Surface Access Module (LSAM)", while the Orion remains unattended in Moon orbit. The Orion will be launched by the Ares I booster, which will be derived from the shuttle's solid rocket motor. An escape tower fitted with a solid-rocket motor will allow the crew to blast free of the booster in case of a launch accident -- the lack of an escape system was one of the major flaws of the space shuttle design.

The Lockheed Martin team includes:

Lockheed Martin will perform overall design and integration, as well as pre-launch final checkout and post-mission refurbishment. Total program costs are given as about $8 billion USD into 2019. This is not a massive sum for a space technology program of such magnitude and emphasizes that the Orion is a conservative design, leveraging existing technology where possible. First launches of the Ares I are expected in 2009, with about a half dozen test flights before the first Orion human flight in 2014. Human flights to the Moon will require development of the Ares V shuttle-derived booster.

* EXTENDED WARRANTIES: It is the season to buy Christmas toys, and in buying up expensive toys there's the question of whether to buy an extended warranty for them. According to a NEW YORK TIMES article ("The Word On Warranties: Don't Bother" by David S. Joachim) cited on CNET.com, it's probably not worth it.

Calculations of the costs of an extended warranty versus the actual odds of a failure indicate that extended warranties are a waste of money. Says Andrew Housser of the personal-finance site Bills.com: "Extended warranties are basically overpriced insurance products. At times, it makes sense to buy insurance. It's a good idea to buy home, car, life insurance. But those are priced in a very efficient marketplace." Profits in the insurance marketplace run to about 15%, while profits for extended warranties run to about 80%: the extended warranty just isn't that necessary. In fact, some electronics retailers make far more profit on the extended warranty than on the items they sell covered by the warranty, which is why they push them so hard.

CONSUMER REPORTS (CR) magazine has long told readers to ignore extended warranties on most products, such as cars, appliances, and televisions. CR researchers have analyzed repair records and found that the warranties just aren't usually needed. CR senior editor Tod Marks says they are particularly ridiculous for popular consumer digital electronics products like personal computers, digital music pods, digital cameras, and smart phones.

An extended warranty, said Marks, is a "sucker's bet", with the purchaser betting that the item will break in the warranty period -- which according to CR data is rarely the case -- and that the cost of the replacement if it does break will be more than the cost of the warranty -- which is usually flat wrong. Prices of things such as digital cameras have been falling, and a few years down the road a purchaser will likely be much better off buying a new camera at a lower cost and with new features. CR does say that an extended warranty on some products may pay off. Rear-projection TVs have traditionally been unreliable, with the high-intensity lamps burning out often and requiring an expensive replacement. Otherwise, extended warranties are usually a joke.
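The "sucker's bet" can be put as a rough expected-value calculation. What follows is only a minimal Python sketch: the failure probability, replacement cost, and warranty price are made-up illustrative figures, not CR data, and the function names are hypothetical.

```python
def expected_warranty_payout(failure_prob: float, replacement_cost: float) -> float:
    """Average amount a warranty pays back per purchaser."""
    return failure_prob * replacement_cost


def warranty_is_worth_it(price: float, failure_prob: float,
                         replacement_cost: float) -> bool:
    """True only if the expected payout exceeds the price of the warranty."""
    return expected_warranty_payout(failure_prob, replacement_cost) > price


# Hypothetical figures for illustration only: a $60 warranty on a camera,
# a 5% chance of failure during the warranty period, and a $200 cost to
# replace the camera if it does fail.
price = 60.0
failure_prob = 0.05
replacement_cost = 200.0

payout = expected_warranty_payout(failure_prob, replacement_cost)
print(f"expected payout ${payout:.2f} against a warranty price of ${price:.2f}")
print("worth buying:", warranty_is_worth_it(price, failure_prob, replacement_cost))
```

With these assumed inputs the purchaser pays far more than the warranty returns on average. The bet only turns favorable when the item fails often and is costly to fix, which is the rear-projection TV case.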

* HOTHOUSE WORLD (3): The more traditional fear over global warming and the oceans has been rising water levels. There is no real problem with ocean levels at this time, but as with the Gulf Stream circulation, some alarming if ambiguous evidence has come to light.

The evidence itself was fairly hard to miss. In March 2002 Ted Scambos, a researcher at the University of Colorado / Boulder, was examining satellite imagery and noticed something strange going on with the Larsen B ice shelf in Antarctica, a floating extension of on-land glaciers. He tipped off the British Antarctic Survey in Cambridge, and a research vessel was dispatched to investigate; Antarctic-based researchers conducted aerial surveys of the ice shelf as well. The observations documented the rapid collapse of the ice shelf.

The Larsen B ice shelf was floating in the ocean, so its melting had no effect on sea levels -- ice displaces its own weight in water, so the liquid water from melted floating ice simply occupies the same volume. This is why the sea level would not rise if the polar icecap melted. That would not be true of the melting of ice covering otherwise dry land like Greenland and Antarctica. Greenland's ice sheet is up to 3 kilometers (1.9 miles) thick, while Antarctica's ice sheet is up to 4.2 kilometers (2.6 miles) thick. If all of Greenland's ice melted, the oceans would rise by about 7 meters (23 feet); if all of Antarctica's ice melted, the oceans would rise about 6 meters (20 feet) more. Even a one-meter rise would flood about 17% of Bangladesh's land and make life difficult for coastal cities like New York and London.
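The floating-ice argument can be checked with a little arithmetic. This is a minimal sketch, assuming round-number densities and ignoring the small difference between fresh meltwater and salty seawater; the constants and function names are illustrative.

```python
ICE_DENSITY = 917.0     # kg per cubic meter, approximate
WATER_DENSITY = 1000.0  # kg per cubic meter, treating the sea as fresh water


def displaced_volume(ice_volume_m3: float) -> float:
    """Volume of water pushed aside by a floating block of ice.

    A floating body displaces its own weight of water (Archimedes).
    """
    ice_mass = ice_volume_m3 * ICE_DENSITY
    return ice_mass / WATER_DENSITY


def meltwater_volume(ice_volume_m3: float) -> float:
    """Volume of liquid water left once the same block has melted."""
    ice_mass = ice_volume_m3 * ICE_DENSITY
    return ice_mass / WATER_DENSITY


ice = 1000.0  # cubic meters of floating ice, an arbitrary example size
print(displaced_volume(ice), meltwater_volume(ice))
```

The two functions are deliberately the same calculation: the meltwater exactly fills the "hole" the floating ice made in the sea. Ice resting on land displaces no seawater beforehand, so all of its meltwater is added to the ocean.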

As with the Gulf Stream, sea levels haven't been all that constant over recent geologic history. About 18,000 years ago, during a peak ice age, the oceans were 130 meters (425 feet) shallower than they are now, though that was an unusually low level. Over the past century the oceans seem to have risen by about 10 to 20 centimeters (4 to 8 inches), but the word "seem" is important. A rise in the level of one ocean surprisingly does not necessarily mean a rise in the level of another. Seas that are warming expand, seas that are cooling contract, and the winds can collect water in some oceanic regions at the expense of other oceanic regions. Sea levels are falling in the northern Pacific, the northwest Indian Ocean, and near Antarctica; they are rising in most of the tropics and subtropics. The land also moves up and down, with land that had been covered by glaciers during the last ice age still rebounding, sometimes at the expense of locales farther south. Scandinavia is rising at a rate of about 1 meter (3.3 feet) per century; the rate for Loch Lomond in Scotland is about 10 centimeters (4 inches) per century, while London is sinking at the same rate.

For decades, sea-level data was acquired by hand from simple tide gauges, but in 1992 satellite data became available that gave an automated and much more globally comprehensive read on the shifting of sea levels. Satellite data seem to show that the oceans are rising by about 3 millimeters a year; land-based measurements suggest that the average rise over the past century was 2 millimeters a year, but that the rate has now increased to 4 millimeters per year.

There are two possible reasons for the slow rise in sea levels: the water is warming and so expanding, and ice is melting. As noted, the collapse of the Larsen B ice shelf was not a direct contributor to rising ocean levels, but the oceanic ice shelf was a "buttress" that slowed the movement of land-based glaciers out to sea. Ice shelves are much more vulnerable to melting than land-based glaciers, since their melting is in response to heat from the air above and the water beneath. The ground under land-based glaciers, in contrast, tends to stay cold.

Researchers knew that Larsen B was collapsing, but the speed of the collapse was surprising, as was the increase in the rate of movement of the land-based glaciers behind it. The glaciers are now moving six to eight times faster than they did before the ice shelf collapsed. However, even at the higher rate, the pace is still "glacial": it's gone from a few hundred meters a year to a few kilometers a year. Greenland's largest glacier, the Jakobshavn Isbrae, which drains about 6.5% of Greenland's ice-sheet area, doubled its rate of advance between 1997 and 2003.

The acceleration of these glaciers is unsettling, but only part of the story. Most other glaciers in Greenland and Antarctica have not increased their speed, and a few seem to be slowing. There is also the fact that snow keeps falling on these two continents, building up new glacial material, and there are no hard figures on the "mass balance", the mass gained versus the mass lost. Climatologists still worry that the faster glaciers may be the leading edge of a trend, and they know for certain that if a general increase in speed took place, there's almost nothing that could be done about it.

* As a footnote here, the average temperature of Greenland has increased by 1.5 degrees Celsius over the past 30 years, and it has affected the 56,000 inhabitants in different ways. When the Vikings settled Greenland in the Middle Ages, the place was relatively warm, and the settlers raised fields of barley. The temperature then dropped, making farming difficult and impractical. These days, the locals rely on sheep farming in the south, hunting in the north, and fishing in the west.

However, the rise in temperature has led some Greenlanders to give farming another shot. Some farms are raising barley on an experimental basis; potatoes, turnips, and iceberg lettuce are being raised commercially. Sheep farming is also benefiting from the rise in temperatures, since more grass grows and the sheep don't have to be shut up for as long during the winter, chewing up large stockpiles of fodder. Locals say insects are more of a problem, but overall the current environment is an improvement.

The hunters in the north are not so happy. They hunt narwhals, seals, walruses, and polar bears, using dog sledges for transport. Melting ice makes dog sledge operation trickier, and the weather has become more unpredictable: this is a real hazard, since getting caught out in a storm is potentially fatal. The fishermen in the west, who mostly trawl for shrimp, have mixed feelings. There's less sea ice, which makes fishing easier, and cod, which disappeared in the 1960s, is coming back -- though the cod tend to eat shrimp and reduce the haul. [TO BE CONTINUED]

* INFRASTRUCTURE -- OIL & GAS (7): Now consider a refinery in terms of its organizational arrangement instead of its individual components. The "crude unit" is where the crude oil is initially processed; in the old days it did all the processing there really was to do, but nowadays things are more complicated and it's only part of the system.

The crude unit is a distillery with three main parts: a feed heater, an atmospheric-pressure distillation column, and a vacuum distillation column. The vacuum distillation column is also called a "flasher"; unlike the atmospheric column, which is tall and slender, the flasher is relatively short and has a bulge around the middle.

The feed heater heats up the crude oil before it is fed into the atmospheric column. "Light ends" -- methane, ethane, propane, and butane -- are drawn off from the top of the column. Below that is "straight-run gasoline", which used to be the only gasoline there was, but there's more in the mix now, as discussed later. Other fractions drawn off further down the column include naphtha, heavy naphtha, kerosene, "gas oil" -- and finally what is known as "residual oil" or "resid", which won't boil in the atmospheric column.

Heating the resid up more would just make it break down, so it is instead fed into the flasher, the vacuum tower, where it boils at a relatively low temperature. The flasher doesn't provide a hard vacuum, by the way -- it's at about a third of atmospheric pressure. The "tops" of the flasher output are used to make motor oils and other lubricants. However, not all the resid will boil even in the flasher. The "flasher bottom" is partly used for asphalt, but it's mostly a nuisance.

* The light ends are sorted out in the "gas unit", which features a set of tall, narrow distillation towers. The height is needed because the light ends are hard to separate from each other. The towers are under pressure -- about 14 atmospheres. The light ends have various uses:

At the other end of the spectrum, the less desirable flow from the flasher is fed into a "catalytic cracking" unit, which breaks down tarry long-chain hydrocarbons into shorter, more useful molecules. The process is based on a "zeolite" catalyst -- zeolites being a sort of crystalline clay that is full of small pores where hydrocarbon molecules can be cracked into smaller pieces. Think of the zeolites as providing a template or form where molecules can be broken apart more easily than if they were floating around freely.

The zeolite is crushed into a fine flowing powder, with the powder and feedstock mixed at high temperature and fed into a reaction chamber. The catalysis takes place quickly, with the products separated from the catalyst using a cyclone system. The products end up in another distilling tower to be separated into fractions. The catalyst is reused -- catalysis by definition does not consume the catalyst -- but it has to be run through a "regenerator" first since the zeolite crystals are fouled by carbon. The regenerator uses hot air to clean up the crystals so they can be sent back through the cycle again.

The carbon fouling occurs because hydrocarbons, as their name implies, consist of carbon chains with hydrogen atoms around the "edges". The ratio of hydrogen to carbon atoms tends to get lower as the length of the chain increases, and so breaking a long chain into smaller molecules means that there's not enough hydrogen available to make use of all the carbon, leaving a residue. A scheme known as "hydrocracking" uses a hydrogen gas stream to eliminate the excess carbon. Since hydrocracking minimizes fouling, the catalyst can be provided in a fixed bed, not cycled through a regenerator.
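The falling hydrogen-to-carbon ratio can be illustrated with the simplest hydrocarbon family, the straight-chain alkanes, whose general formula is CnH2n+2. This is a small sketch only: restricting it to alkanes is a simplifying assumption, since real crude contains many other kinds of molecules, and the function names are hypothetical.

```python
def alkane_h_to_c_ratio(carbons: int) -> float:
    """Hydrogen atoms per carbon atom for the alkane C(n)H(2n+2)."""
    if carbons < 1:
        raise ValueError("an alkane needs at least one carbon atom")
    return (2 * carbons + 2) / carbons


def hydrogen_shortfall(parent_carbons: int, piece_carbons: int) -> int:
    """Extra hydrogen atoms needed to split one alkane into equal alkane pieces."""
    pieces, remainder = divmod(parent_carbons, piece_carbons)
    if remainder:
        raise ValueError("parent chain must split evenly for this sketch")
    hydrogens_needed = pieces * (2 * piece_carbons + 2)
    hydrogens_available = 2 * parent_carbons + 2
    return hydrogens_needed - hydrogens_available


for name, n in [("methane", 1), ("butane", 4), ("octane", 8), ("C40 chain", 40)]:
    print(f"{name:10s} C{n}H{2 * n + 2}: H/C = {alkane_h_to_c_ratio(n):.2f}")

# Splitting one C40 chain into five octane-sized pieces:
print("hydrogen atoms short:", hydrogen_shortfall(40, 8))
```

In this idealized case the five short chains need 90 hydrogen atoms but the parent supplies only 82. Either some carbon is left over as residue, or extra hydrogen has to be supplied, which is what hydrocracking does.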

Catalysis is used in other parts of the refinery process as well. A "reformer" unit takes straight-chain molecules and turns them into branched chains or rings that burn better in piston engines. The reformer is a high pressure reaction vessel where reactions are performed using precious-metal catalysts like platinum and rhenium. The catalysts are expensive and have to be replaced every few years.

The "alkylation" or "alkyl" unit performs something of the reverse function: it glues together small hydrocarbon molecules to make useful molecules out of them. Instead of a feed heater, the alkyl unit has a chiller unit that cools the flow down to the temperature of cold water, and instead of platinum and rhenium, it uses sulfuric acid as a catalyst.

The bottoms of the flasher unit are, as mentioned, a nuisance. They are usually fed into a "coking unit", where they are heated and turned into nearly pure carbon or coke, which as mentioned earlier in this series is used in steel production. The coking unit looks like a row of big drums with a drilling derrick, of all things, on top of each drum. The drums fill up with solid coke, with the drill then used to break up the coke so it can be removed. Coke obtained from refineries is usually too fouled with pollutants to be used in countries with high air-quality standards, so it is usually shipped elsewhere.

* The fuels resulting from this process include gasoline, kerosene, diesel oil, and so on. Relatively pure forms of kerosene, taken from narrow ranges of the fuel "stack", are used as "jet propellant (JP)" for jet aircraft. An even purer form of kerosene taken from a very narrow range of the stack is used as "rocket propellant (RP)". RP has to have consistent properties, since if it didn't the performance of the rocket engines would be unpredictable and the launch mass of a large booster might vary by tonnes, with disastrous results. I recall a TV show where an arsonist was using RP to set fires, which was ridiculous: it would be hard to tell it from ordinary kerosene, and though it may burn better because of its purity, it wouldn't be by an order of magnitude.

Automotive gasoline is a blend of gasoline and other fuels, butane being one major constituent, with the volatile butane making the engine easier to start. It's the butane that vaporizes when you fill up your gas tank, not the gasoline. In cold climates, more butane is used in the blend since it's harder to start cars in the cold, and the butane won't evaporate away as easily as it does in a warm climate. Ethanol is also often blended these days since it results in cleaner-burning fuel, reducing emissions.

* A refinery will have a set of "flare stacks", tall pipes with a burner at the top, to burn off escaping methane and other gas. Flare stacks were once used continuously to get rid of gas that was too uneconomical to sell, but with rising energy prices there are hardly any uneconomical fuels any longer. The flare stacks are now just there to get rid of the gas if there's a problem, since its buildup would produce an explosion hazard. Interestingly, some of the burners on the flare stacks inject steam into the burning gas, which makes it burn cleaner.

Sulfur in the crude oil generally ends up in the form of smelly hydrogen sulfide, H2S. Once upon a time, the H2S was generally vented to the atmosphere, but it was a blatantly noxious pollutant. These days, just like a copper smelter, a refinery will have a sulfuric acid production unit to turn the H2S into a useful and salable product. At some plants, the H2S is turned into elemental sulfur instead. [TO BE CONTINUED]

* GIMMICKS & GADGETS: Carnegie-Mellon University (CMU) is famous for its robotics department. According to SCIENTIFIC AMERICAN, one of the latest intriguing robots to come out of CMU is the "ballbot", designed by a group under CMU researcher Ralph Hollis.

Hollis was looking at how robots tended to be bottom-heavy just so they could be stable, and wondered if there was a smarter way to do things. The result, the ballbot, looks like nothing more than a post that rolls around on a ball on the bottom. The post contains a battery power supply, a control processor, an inertial measurement unit (IMU), and a drive system for the ball on the bottom.

The IMU features three fiber-optic gyros and three micro-accelerometers, with a gyro and micro-accelerometer for each of the X, Y, and Z axes. A sophisticated control algorithm executed by the processor allows the robot to move around, and even simply stand up straight without moving; the ballbot can deploy a tripod system for support before turning itself off. The ball drive system is conceptually based on the mechanics of a computer mouse. A mouse has a ball that rotates against two rollers at right angles to each other, with the rollers driving optical encoders -- slotted spinning wheels that are scanned by an LED-phototransistor assembly -- to give X-Y coordinates. The ballbot drive system is along the same lines -- except that there are three rollers, and instead of being driven by the ball, they are linked to motors to drive it. The motors are fitted with optical encoders so the processor knows exactly how fast they are rotating and how many rotations they make.
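How an optical encoder lets a processor know both the speed and the number of rotations can be sketched in a few lines. This is a generic illustration, not the CMU group's code; the slot count, the sample interval, and the function names are assumed for the example.

```python
SLOTS_PER_REV = 360  # hypothetical number of slots on the encoder wheel


def rotations(pulse_count: int, slots_per_rev: int = SLOTS_PER_REV) -> float:
    """Total rotations implied by a running count of encoder pulses."""
    return pulse_count / slots_per_rev


def revs_per_second(pulses_in_interval: int, interval_s: float,
                    slots_per_rev: int = SLOTS_PER_REV) -> float:
    """Rotation rate from the pulses seen during one sample interval."""
    if interval_s <= 0:
        raise ValueError("sample interval must be positive")
    return pulses_in_interval / slots_per_rev / interval_s


# 1800 pulses counted since startup; 90 of them arrived in the last 50 ms.
print("rotations so far:", rotations(1800))
print("current rate (rev/s):", revs_per_second(90, 0.05))
```

Each slot passing the LED-phototransistor pair produces one pulse, so counting pulses gives distance turned and counting pulses per unit time gives speed.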

Visitors to the lab reportedly find the ballbot absolutely fascinating to watch. I did find an online video but it was unspectacular, with the robot simply moving a few times and then standing still. The CMU group is working on an improved ballbot with arms and a rotating video-camera head. As with a lot of robotic experiments, it's hard to say if something like this will be useful any time soon, but it certainly looks like a cool toy.

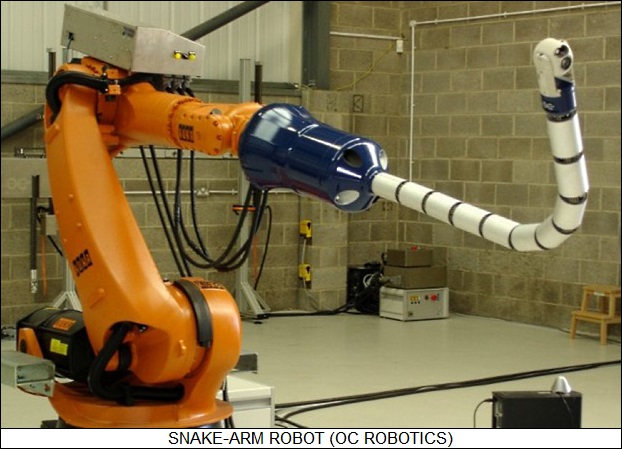

* A report from BBC.com discussed another interesting robotics technology, the "snake-arm robots". Traditionally, robot arms are jointed affairs, not so different in concept from a human arm, but over the last three decades there has also been research into robot arms consisting of a large number of short segments linked together.

The advantage of a snake-arm robot is its flexibility, its ability to reach into corners that would be too difficult for a traditional robot arm. The disadvantage is cost, and so far snake-arm robots have been lab toys or specialized tools. They are being used, for example, in troubleshooting nuclear power plants, where their ability to crawl into tight mazes of piping is irreplaceable.

Backers of the technology believe that it has more potential, if the costs can be reduced. That means simplifying and modularizing the construction of the arm, as well as figuring out smarter ways to control it: writing the software to get a snake-arm robot to do what it is commanded to do is not trivial. There is interest from a wide range of industries, including aircraft manufacturers and doctors looking for improved surgical robots.

* As reported by AVIATION WEEK, San Francisco International Airport is now performing trials of a "4D" -- space and time -- flight control system that reduces jetliner fuel consumption by sequencing arrivals in a way that an aircraft can come straight in to the runway, without any go-rounds. The 4D system tracks the flight path of the jetliners and gives their flight-management systems continuous updates for the most efficient arrival path.

* According to WIRED.com, the town of Boscastle in southern England is prone to nasty floods, with one in 2004 badly damaging the place. The town's rain gauges had not indicated the downpour was heavy enough to cause a flood, and the townspeople had no warning. Now a team of engineers from Lancaster University is setting up a sensor network with 10 to 20 nodes, run by tiny processors known as GumStix and communicating over wireless links. The sensors in the "GridStix" network track water levels, taking measurements at intervals to conserve power, and relay their findings to a central computer at the UK's Meteorological Office.

The central computer checks whether the data indicates a flood is coming, and sends out an alarm if so. The nodes are generally idle, waking up when the rain starts to fall. They use cameras to observe water flow from debris coming downstream and switch from Bluetooth wireless to Wi-Fi wireless to handle the heavier data stream.
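The division of labor in such a network (nodes that sleep until it rains and move to a faster radio link when there is more data to send, with a central computer deciding whether to raise the alarm) might look roughly like the following. This is a hypothetical sketch, not the GridStix software: the class, the thresholds, and the idea of keying the radio switch to water level are all invented for illustration.

```python
from dataclasses import dataclass

FLOOD_LEVEL_M = 2.0       # assumed water level that triggers an alarm
HEAVY_DATA_LEVEL_M = 1.0  # assumed level at which camera data starts flowing


@dataclass
class Node:
    name: str
    awake: bool = False
    radio: str = "bluetooth"

    def update(self, raining: bool, water_level_m: float) -> None:
        """Wake on rain; use the faster link once the water starts to rise."""
        self.awake = raining
        if self.awake and water_level_m >= HEAVY_DATA_LEVEL_M:
            self.radio = "wifi"
        else:
            self.radio = "bluetooth"


def flood_alarm(levels_m: list[float]) -> bool:
    """Central check: alarm if any reported level reaches the flood mark."""
    return any(level >= FLOOD_LEVEL_M for level in levels_m)


node = Node("upstream")
node.update(raining=True, water_level_m=1.4)
print(node.awake, node.radio)
print(flood_alarm([0.3, 1.4, 2.1]))
```

Keeping the nodes simple and putting the flood decision in one central place matches the description above, though a real system would presumably weigh trends across many readings.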

* The Segway "Personal Transporter", a two-wheeled electroscooter that looks like an old-fashioned push lawnmower that you stand on, got a lot of attention when it was introduced in 2001 in a blaze of publicity. According to an article in BUSINESS WEEK ("Reinventing The Wheel, Slowly" by Mark Gimein, 11 September 2006), inventor Dean Kamen and his backers thought the Segway would revolutionize urban transportation.

The reality quickly set in: You want five thousand bucks for this thing? It's cute -- but who are you kidding? The WASHINGTON POST called it "the invention that runs on hype". It was certainly a clever piece of gear, using computer-controlled electric motors that kept the vehicle balanced even when it hit bumps, and it has been adopted by police as well as disabled persons, who swear by it. However, so far sales have been in the thousands, not the hundreds of thousands.

The company has not given up, though under new president James D. Norrod the vision has changed to reflect reality. Norrod sees the technology developed for the Personal Transporter as widely applicable, for example to implement highly maneuverable unmanned vehicles for commercial or military use, and even as the basis for electric or hybrid automobiles. Segway has already built a four-wheeled prototype vehicle. Says Norrod: "If people want four wheels, I say give them four wheels."

Kamen, still company chairman, had hoped for more: "Life is too short for incrementalism." The reality is that a giant step may require generations of fussing and fiddling before things come right, and Segway is adjusting to that reality. Don't count the idea out yet.

* BETTER MOUSETRAPS: The war between retailers and shoplifters has been going on for a long time, and as reported in a BUSINESS WEEK article ("Attention, Shoplifters" by Elizabeth Woyke, 11 September 2006), the retailers have as of late been acquiring some high-tech armament for the fight.

Everyone is aware of the video cameras in stores these days -- six million in the USA alone -- but it comes as a surprise that a few leading-edge retailers have very smart video systems that can spot unusual activity automatically. Such a system will take notice if somebody picks ten items off a shelf or opens a case that's normally kept closed, providing an alarm to security to allow them to look the matter over. It can predict the spots where shoplifters would be out of general sight, and can also identify spills, alerting a cleanup crew immediately. The video system will of course observe and track break-ins.

Such a smart video system is expensive, but retailers find it worth the money. These days, shoplifting isn't the domain of loose teenagers; organized gangs have moved into the business, looting stores for specific high-value items that they resell at a discount. The average value of shoplifting incidents has tripled since 2003. That means that retailers have plenty of incentive to invest in defenses. One common scam is to simply walk out the door with a loaded shopping cart, a trick called the "push out". Gatekeeper Systems of Irvine, California, has introduced an "electronic fence" for carts that uses RFID transponders and a network of antennas around the store to keep track of carts. If somebody tries to push out of the store without going through a checkout -- or if somebody who has gone through the checkout then tries to take the cart out of the parking lot -- the wheels of the cart lock up. Target has signed on to the scheme.
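The cart "electronic fence" amounts to a small state machine. The following is a hypothetical sketch, not Gatekeeper's actual logic; the zone names and the `Cart` class are invented for illustration.

```python
class Cart:
    """Tracks one cart's position as reported by the store's antennas."""

    def __init__(self) -> None:
        self.passed_checkout = False
        self.wheels_locked = False

    def move_to(self, zone: str) -> None:
        """Update the cart's state when it is detected in a new zone."""
        if zone == "checkout":
            self.passed_checkout = True
        elif zone == "parking_lot" and not self.passed_checkout:
            self.wheels_locked = True  # a "push out" attempt
        elif zone == "off_premises":
            self.wheels_locked = True  # cart is leaving the parking lot


thief = Cart()
thief.move_to("parking_lot")
print("push out locked:", thief.wheels_locked)

shopper = Cart()
shopper.move_to("checkout")
shopper.move_to("parking_lot")
print("paying shopper locked:", shopper.wheels_locked)
```

The only memory the cart needs is whether it has been through a checkout, which keeps the logic cheap enough to run on every cart in the store.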

Another trick with carts is to hide goods on the bottom rack in hopes of sneaking out of the store with a score. A number of US grocery chains are now testing "LaneHawk", developed by Evolution Robotics Retail Inc., which uses cameras mounted in the checkout stand just above the floor, along with pattern recognition, to spot packages on the bottom rack and then add the price to the bill.

Criminals are notoriously quick to catch on to store defenses, and so much work is being done to make the defenses as invisible as possible. Although "electronic article surveillance" tags have been around for years, they are increasingly unobtrusive, having been reduced in scale to a cylinder about as long as a toothpick and maybe twice as thick. Of course, anybody who buys DVDs knows about the EAS strip, which is stuck inside the DVD case before the case is wrapped. J. Crew sews EAS devices into clothing labels.

The big buzz is of course the latest generation of RFID tags, which are small and highly capable. EAS tags are only activated at the store exits, but RFID tags allow tracking and provide detailed product information anywhere in the store. Walmart and Target are big RFID fans, though they mostly use the technology for tracking crates of inventory in the back room. The reason is that RFID tags cost from 7 to 20 cents these days, and they need to be cut to 5 cents or less to be cost-effective for all items on the shelves. However, even at 20 cents they are cost-effective for high-value items.

The cash register is also being improved to help fight theft and identify other problems. Software is now available to identify peculiar register usage patterns, for example spotting repeated returns of the same or similar items, suggesting a scam in operation; or an employee who performs manual credit card number entry an unusual number of times, suggesting credit-card number lifts. Of course, the manual entries also may suggest the card reader needs to be fixed, but that's good to know in itself.
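The two register patterns mentioned above can be sketched in a few lines. This is a minimal illustration, not any vendor's product: the record fields ("cashier", "manual_entry", "item") and the thresholds are assumptions made up for the example, and a real system would use more careful statistics.

```python
from collections import Counter
from statistics import median


def flag_manual_entries(transactions, ratio=3.0):
    """Return cashiers whose manual card-entry count far exceeds the median.

    `ratio` is an arbitrary illustrative threshold.
    """
    counts = Counter()
    for t in transactions:
        # Adding 0 still registers the cashier, so clean records count too.
        counts[t["cashier"]] += 1 if t["manual_entry"] else 0
    if not counts:
        return []
    typical = max(median(counts.values()), 1)
    return sorted(c for c, n in counts.items() if n > ratio * typical)


def flag_repeat_returns(returns, limit=3):
    """Return items that have been returned at least `limit` times."""
    counts = Counter(r["item"] for r in returns)
    return sorted(item for item, n in counts.items() if n >= limit)
```

As the text notes, a flag is only a prompt to look closer: a high manual-entry count may just mean a broken card reader.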

One of the problems with adding all such high-tech defenses is that they cumulatively produce floods of data, allowing anomalies -- thefts -- to get lost in the noise. Now software is available to perform "fusion" on all the elements, screening through the routine to flag the unusual to store security. RFID tags can cue video cameras, which then automatically check for suspicious activity, with the system correlating the events to checkout counter data -- or lack thereof. Researchers in the field of security systems believe that the next step will be to integrate summary data streams from individual stores over wide regions, to allow law enforcement to search for patterns of criminal activity. There's some work on using video cameras to get license plate numbers and perform facial recognition, allowing patterns to be pinned down to individuals.
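At its simplest, the "fusion" step amounts to cross-checking one data stream against another. The sketch below assumes, purely for illustration, that exit antennas report a list of tag IDs and that the checkout system can supply the tag IDs it has sold; neither interface comes from the article.

```python
def unpaid_exits(exit_reads, sold_tags):
    """Return tag IDs seen at the exit with no matching checkout record."""
    sold = set(sold_tags)
    return [tag for tag in exit_reads if tag not in sold]
```

Only the mismatches would be passed on to store security or used to cue a camera, which is how the routine traffic gets screened out.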

Retailers are generally quiet about their security systems, and not just to make sure that thieves don't get wise to them. There's also consumer resistance to the idea of wiring stores with video surveillance and RFID systems, since such technologies do have real privacy implications. Confronted with energetic crooks, retailers find the concerns exasperating, responding that shoplifting increases prices for shoppers as well. The security systems also perform inventory control, making sure that items remain in stock. Still, it can be a bit unsettling to realize during a trip to the supermarket that "Big Grocer is watching YOU."

* ZIGBEE HOME NETWORKS: As discussed in an article in IEEE SPECTRUM ("Busy as a ZigBee" by Jon Adams & Bob Heile, October 2006), the idea of a networked home has been around for about two decades, and so far it hasn't really taken off. There have been a number of problems with the concept, such as the lack of effective standards to make sure the different pieces of the network could play together; the need to wire up a house with the network, which not only made such schemes expensive but also inflexible; and the related problem of a certain lack of "scalability", in that the initial investment in the network was so big that it wasn't economical to set up a household network on an incremental basis -- starting out with a single node and then expanding over time.

The widespread introduction of wireless technology opened the possibility of setting up a wireless home networking system, the vision being a control that could be installed simply by slapping a switchbox on the wall with double-sided sticky tape. In 1998, an industry organization, the "HomeRF Working Group", began to investigate the idea. HomeRF concluded that existing wireless systems weren't well-suited to implementing a wireless home network, and began to work on its own concept.

The HomeRF group disbanded without coming up with a solution, but in 2000 the Institute of Electrical & Electronics Engineers (IEEE, a professional association well known for its influential standards efforts) set up a working group designated "IEEE 802.15.4" to investigate "wireless personal area networks (WPANs)". The group released its initial spec in 2003 and issued an update in 2006. The spec is known more informally as "ZigBee 1.0" -- the name was cobbled together out of available domain names to give the spec a more interesting handle than "IEEE 802.15.4", and has no meaning other than whatever people want to read into it.

The IEEE is heavily into the wireless network business, having established the "IEEE 802.11" or "wi-fi" spec. wi-fi is broadly similar to ZigBee in that both provide a household wireless network, but the details are totally different. wi-fi operates at high data rates and necessarily uses a lot of power, while ZigBee operates at very low data rates and uses absolutely minimal power. wi-fi operates through a central hub, while ZigBee is a distributed network, with the intelligence residing in the nodes of the network.

Since ZigBee is intended to do modest things like control light switches, it can get by easily with a fiftieth of the wi-fi data rate. Since ZigBee boxes will often be battery operated and stuck in difficult-to-reach places, they need low power consumption to ensure that the batteries won't have to be replaced in anything less than a number of years.

ZigBee is not seen as a way of just turning lights on and off, however. ZigBee-based sensors could be put on all the windows and doors to let a central security controller know that all is quiet; this would permit any home to have a security system on a level near that of a museum. Sensors might also be fitted to track movements of residents in a house along with the temperature, humidity, and light levels in a room -- to adjust lighting, heating, ventilation, and air conditioning as needed. A ZigBee sensor could monitor the cat door to check the kitty's RFID tag and make sure unwanted visitors -- strange cats, raccoons, or possums -- don't get in. Outdoor sensors could monitor soil moisture and turn on the sprinkler system when needed. These are not basically new things, but ZigBee makes them much easier and cheaper to implement.

* A ZigBee node is built around a small silicon chip featuring a multichannel two-way link with a microcontroller, with the rest of the node built as per its function: a light switch, thermostat, sensor, or whatever, with nodes eventually built into appliances. The ZigBee network features autonomous organization: every time a node is installed, it seeks out neighboring nodes and links itself into the network -- though the owner has to authenticate the node to prevent a light switch from a neighbor's house from being added. Authentication may require no more than pushing a button, though it can be more sophisticated if the node has a display and keyboard. Installing the nodes is generally straightforward, and any homeowner should have no problem doing so except in specialized cases. In maturity, simple nodes will cost only a few dollars and there will be little overhead in buying and installing them, though setting up a central controller will be more expensive.

ZigBee operates at a maximum data rate of 250 kilobits per second. The messages are short and only sent infrequently, minimizing power use. ZigBee uses the same RF band as wi-fi, with communications based on "quadrature phase-shift keying (QPSK)", which is a digital modulation scheme in which the carrier signal can shift phase in four ways, providing two bits of data with each shift. Each device in a ZigBee network can have an address in a 64-bit address space, though any particular network configuration will only use a 16-bit subset. Typically a ZigBee node will be asleep, unless it's taking a measurement or being polled by a controller. Even if a home is full of signals in the same band, the node will retry sending a packet at a rate of a thousand times a second until it gets an acknowledgement, and then go back to sleep. Security was a basic consideration in the design of ZigBee, with the Advanced Encryption Standard (AES) used as a fundamental component to make sure that only the owner of the house or "trusted vendors" can get into any particular network.
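The figures in this paragraph can be turned into rough numbers. The sketch below uses only the 250 kilobit-per-second rate and the 64-bit and 16-bit address sizes given in the text; the 32-byte message size is an assumption chosen for illustration, since the article gives no message length, and protocol overhead is ignored.

```python
ZIGBEE_MAX_BPS = 250_000  # maximum data rate quoted in the text


def airtime_ms(payload_bytes: int, bps: int = ZIGBEE_MAX_BPS) -> float:
    """Time on the air, in milliseconds, for a message of the given size.

    Ignores headers, acknowledgements, and retries.
    """
    return payload_bytes * 8 * 1000 / bps


EXTENDED_ADDRESSES = 2 ** 64  # size of the full 64-bit address space
SHORT_ADDRESSES = 2 ** 16     # 16-bit subset used within one network

# Hypothetical 32-byte message: roughly a millisecond of transmit time.
example_airtime = airtime_ms(32)
```

A 16-bit subset allows 65,536 addresses in one network, and a message of a few dozen bytes occupies the radio for only about a millisecond, which suggests why a node that mostly sleeps can run for years on a battery.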

A spec for ZigBee is all very well and good, but it's not enough to guarantee interoperability for ZigBee nodes from different vendors. An industry consortium, the not-for-profit ZigBee Alliance, was established in San Ramon, California, in 2002 to help establish conformance through enhanced specifications and testing procedures. It now has about 200 members, including companies dealing with semiconductors, consumer electronics, control systems, heating and air conditioning, lock makers, and so on.

* ZigBee, on the face of it, has substantial advantages over alternative home-control networks. The "X10" spec is in some use, but it requires dedicated wiring for the most part and can only handle 16 nodes max. The bluetooth short-range wireless networking scheme could be used for a home network in principle, but it wasn't designed with that application in mind; for example, it doesn't have ZigBee's provisions for low-power operation.

ZigBee products are expected to show up at home improvement stores soon. Its advocates believe that ZigBee may make inroads into home-security networks, which have traditionally been based on proprietary technology, and should eventually appeal to commercial and industrial customers -- though commercial and industrial systems can have thousands of nodes, requiring careful planning in their implementation. For the time being, the focus is on the home. Advocates believe they now have a solution that will finally make the smart home a reality, instead of an expensive contraption of questionable usefulness.

* HOTHOUSE WORLD (2): Ocean currents are measured in a unit called the "Sverdrup", which equates to a million cubic meters of water per second. The Gulf Stream, the northern component of a circulation system known as the North Atlantic Gyre, runs at about 150 Sverdrups at its peak, though the average is more like 100 Sverdrups. North of Britain, the sea temperature is close to zero degrees Celsius -- except for the Gulf Stream, which runs to about 8 degrees Celsius. The net effect is that, in the winter, the coast of Norway is about 20 degrees Celsius warmer than locations at comparable latitudes in Canada. As a result, the idea that the Gulf Stream might stop running was not merely of interest to climatologists, and so a recent scientific paper that suggested it was slowing down got a great deal of attention.
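To give a feel for the unit, the Sverdrup figures above can be converted into more familiar volumes. This is plain arithmetic on the numbers in the text; the function names are made up for the example.

```python
M3_PER_S_PER_SVERDRUP = 1_000_000  # one Sverdrup, as defined in the text
SECONDS_PER_DAY = 86_400
M3_PER_KM3 = 1_000_000_000


def sverdrups_to_m3_per_s(sv: int) -> int:
    """Flow in cubic meters per second."""
    return sv * M3_PER_S_PER_SVERDRUP


def daily_volume_km3(sv: int) -> float:
    """Volume of water moved in one day, in cubic kilometers."""
    return sverdrups_to_m3_per_s(sv) * SECONDS_PER_DAY / M3_PER_KM3
```

At the 150-Sverdrup peak the Gulf Stream moves 150 million cubic meters of water a second, or 12,960 cubic kilometers a day; at the 100-Sverdrup average it still moves 8,640 cubic kilometers a day.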

The Gulf Stream is partly driven by the Earth's rotation and partly driven by a deep-water current known as the "Thermohaline Circulation (THC)", which draws warm salty water from the tropics to the north. As it loses heat during its migration, the THC tends to sink, since it is saltier and heavier than the northern waters into which it is flowing. The deeper, colder water heads back south in the Deep Southerly Return Flow, which passes by Florida, and the Subtropical Recirculation, which skirts Africa.

Climate only seems constant on a human time scale; on the geological time scale, it shifts around a good deal and sometimes does so rapidly. Since the end of the last Ice Age 20,000 years ago -- a blink of an eye by geological standards -- the Gulf Stream has shut off several times, most recently about 8,200 years ago, when a sudden flood of fresh water from an American lake dumped into the North Atlantic, diluting the salty Gulf Stream and weakening its flow. Climatologists have worried that the melting of the north polar icecap would similarly flood the sea with fresh water and shut down the Gulf Stream. The ironic end result of this aspect of global warming would be a colder Europe.

Nobody thought that was likely to happen any time soon, and so it came as something of a shock when a paper published in 2005 by Harry Bryden and his colleagues at Britain's National Oceanography Center in Southampton claimed the THC was slowing down. The finding was based on five sets of measurements performed over the last half-century: the first three sets gave a constant speed for the THC, but measurements in 1998 and 2004 showed a slowing of up to a total of 30%.

The paper was startling, but not everyone has been impressed. According to Carl Wunsch, a climatologist at the Massachusetts Institute of Technology (MIT): "The oceans are like the atmosphere. The system is exceedingly noisy. You get weather in the oceans like you get weather in the atmosphere. This paper is based on five crossings of the Atlantic over 47 years. It's as though you went out on five different occasions in five different places in North America over half a century and measured the wind speed. You say there's a trend. There's a trend in your figures, but you have no evidence of a secular trend. Much of the community would say you have five data points." Wunsch is hardly a global-warming skeptic; in fact, he strongly believes it is happening and must be dealt with, but he's annoyed at the tendency of climatologists to read so much into such thin data. In his view, such exaggeration has no credibility and undermines the case for global warming.

To add to the uncertainty, however, several simulations also point to the possibility of a Gulf Stream shutdown, though they don't see it as happening right away. There's also uncertainty about how much impact even a complete shutdown would have; some climatologists see a frigid Britain, some merely see somewhat harsher weather on the coasts of Norway and Scotland. [TO BE CONTINUED]

* INFRASTRUCTURE -- OIL & GAS (6): An oil refinery is one of the citadels of industrial architecture: a necessarily grungy workplace by day, a surreal glittering landscape by night, elaborate in either case.

A refinery is a continuous-flow system, not a batch system: crude oil goes into one end and refined fuels of various sorts come out the other, on a more or less constant basis. A refinery tends to be a bewildering maze of pipes and structures on first sight, but there is a method to the madness, the site featuring specific structures and divided into specialized units to perform particular phases of the refining.

Refining the crude oil requires heating at various stages, and so about a dozen or so "feed heaters" are distributed around the grounds, with each "unit" of the refinery usually having its own feed heater. All a heater amounts to is a big furnace, with natural gas burners at the bottom and a sloped roof leading to a smokestack at top. Petroleum is pumped through steel pipes in the center of the structure to be heated, with the heat varying according to the step in the process -- ranging from hot enough to bake bread to hot enough to peel off paint. The natural gas burned in the feed heaters is skimmed off from the production flow.

The best-known component of the refinery is the tall tower known as the "cracking column". It's a distilling tower, in principle little different from the distilling tower used to separate cryogenic gases, except that the cracking column's inputs are hot, warmed up by a feed heater at the bottom, instead of extremely cold. The vaporized crude drifts up through trays and around inverted cups, with the appropriate fraction liquefying at each tray. Cracking columns are usually the tallest spires in the refinery, but they can vary in shape, some being short and tubby.

A refinery may be littered with other, shorter towers, which are "reactors" where particular chemical reactions are performed. Reactors are not always towers; sometimes they're laid on their sides. Reactors supporting high-pressure processes will have rounded ends. A refinery will also have a few heat exchangers, where one portion of the process is cooled by heating up another, conserving energy in the bargain. A heat exchanger just looks like a cylindrical tank, usually lying on its side, with pipes going in and out and intertwined inside.

The refinery is threaded with a tangled network of pipes, valves, and pumps. The pipes carry raw materials, products, and intermediate stocks, as well as water, steam, pressurized air, and such things as hydrogen sulfide and ammonia. Some pipes are insulated; some are steam-heated, fitted with an "expansion loop" -- a hump in the pipe that takes up the expansion of the pipe when it is heated.

All the pipes have one or more valves, which may be "stop valves", which are either fully open or fully closed, or "throttling valves", which operate over the full range from fully open to fully closed. These days, the valves are remote-controlled from a central control facility. There are also many pumps around the refinery to keep the flow in the pipes going, though they are generally hard to spot: they are mounted low, ground level or under, since they have to be below the level of the pipes they are drawing fluid from. The pumps are the major user of electricity for a refinery. [TO BE CONTINUED]

* NOT WITH A BANG BUT A STINK: The evidence that a giant meteor impact 65 million years ago ended the Cretaceous era and the reign of the dinosaurs accumulated to a persuasive level in the 1980s, and it's become something of the popular wisdom, even becoming a staple of Hollywood blockbusters. There has been debate over the matter since then, but the impact hypothesis for the Cretaceous extinction is still in the ring. However, there have been other mass extinctions in the history of the Earth, and though the notion of giant meteor impacts originally seemed to provide a useful mechanism, so far the evidence hasn't supported the idea. An article in SCIENTIFIC AMERICAN ("Impact From The Deep" by Peter D. Ward, October 2006) suggested an alternate mechanism with unsettling implications.

Along with the Cretaceous extinction, four other mass extinctions are known to have occurred:

The major evidence for the Cretaceous impact was the discovery of a geological layer enriched in iridium metal, since iridium is a much more important constituent of some meteors than it is of the Earth as a whole. The impact scattered meteorite dust around the globe to form the iridium-enriched layer. The discovery of the buried remains of a crater of appropriate size and age beneath the Yucatan peninsula helped strengthen that argument.

Evidence was found linking the other mass extinctions to impact events, but further examination led to doubts: the other events seemed to be very protracted affairs, lasting hundreds of thousands of years, and the evidence suggested they were linked to changes in atmospheric conditions. In the late 1990s, researchers found evidence of an alternate extinction hypothesis, based on evidence that photosynthetic green sulfur bacteria were common in the other mass extinction episodes.

Photosynthetic green sulfur bacteria and the similar purple sulfur bacteria are "anaerobic" microorganisms, which not only do not use oxygen for their metabolic processes but find it toxic. There was a time before the Earth had an oxygen-laden atmosphere when anaerobic microorganisms ruled the planet. They are still common at the bottom of stagnant lakes and some larger bodies of water, like the Black Sea, where the waters are "anoxic", or in other words lacking in oxygen.

The metabolism of the photosynthetic sulfur bacteria is based on converting hydrogen sulfide (H2S) gas into sulfur. H2S gives hot springs their rank odor and is toxic in fair quantities. The photosynthetic sulfur bacteria are part of an "ecology" of anoxic environments, consuming H2S produced by other (non-photosynthesizing) anaerobic bacteria, and so the geological evidence that suggested a "bloom" of photosynthetic green sulfur bacteria implied the accumulation of H2S-producing anaerobic bacteria -- and by implication anoxic oceans.

In most waters, oxygen diffuses in fairly constant concentrations from top to bottom. In environments like the Black Sea, oxygen concentrations are low deep in the waters, providing a pleasant environment for H2S-producing anaerobic bacteria. There is an underwater boundary, called the "chemocline", where H2S-saturated deep waters meet oxygen-diffused surface waters, with the photosynthetic sulfur bacteria thriving at the layer where the sunlight is more plentiful than it is at lower depths. The chemocline is normally stable. However, computer models show that if the oxygen in the surface layer starts to decline, the H2S-saturated anoxic bottom layers start to move upward. In the worst case, they could reach the surface and dump H2S into the atmosphere. Not only is H2S toxic to aerobic organisms, but it also depletes the ozone layer. Evidence suggests that atmospheric H2S levels went through the roof at the end of the Permian -- in a literal fashion, since the evidence also implies widespread damage to life-forms from ultraviolet, and so depletion of the ozone layer.

There is good evidence of widespread volcanism at the end of the Permian. The result would have been to increase atmospheric CO2 levels; since even at high levels CO2 is a trace atmospheric gas, that wouldn't have reduced the oxygen levels of the oceans much in itself, but since CO2 is a "greenhouse gas", it would have led to global warming. Warmer waters don't absorb oxygen as well, and the stage was set for runaway oceanic anoxia -- and mass extinctions by H2S. With rising CO2 concentrations in the present atmosphere, are we faced with mass extinctions by H2S? That seems like an exaggerated worry, but it is still a bit unsettling.

* AIDS IN AMERICA: Most news on the HIV-AIDS pandemic focuses on the undeveloped world, and given the widespread availability of anti-AIDS drugs in America, there's a certain tendency to perceive that the problem has gone away here. However, as reported by THE ECONOMIST ("Ignorance Is Not Bliss", 30 September 2006), the truth is that AIDS is still at large and dangerous in the USA.

It is estimated that a million Americans have AIDS; compounding the problem is that about a quarter of them don't realize it, meaning they not only are not obtaining treatment, but are passing on the disease to others. The number of new HIV infections per year in the US has not declined since the late 1990s, despite all attempts to bring the rate down. In response, the US Centers for Disease Control (CDC) has recommended that all Americans between the ages of 13 and 64 be regularly tested for HIV. Although the CDC is not specifying that the tests be compulsory, clinics are being encouraged to test incoming patients by default and not wait for them to ask.

Not only would an early diagnosis of HIV infection help reduce the spread of the disease, HIV is easier to treat if it hasn't developed into full-blown AIDS. Most emergency rooms don't test at the present time -- mostly because of the bureaucracy, which requires the signing of consent forms and a lecture about safe sex. The CDC wants to cut down the overhead so that clinics will have no problem administering the test, which is cheap and simple. In addition, making testing commonplace would eliminate the stigma that has been attached to it in the past.

AIDS hits black Americans the hardest. Despite being only an eighth of the population, they account for almost half the new infections, with studies showing that young gay black men are four times as likely to be HIV-positive as their white counterparts, and twice as likely as Latinos. Another study of gays in Washington DC involving a query as to HIV status and an HIV test showed that only 18% of the white subjects were not aware they were HIV-positive, while two-thirds of the black subjects didn't know. Part of the problem is the black person's distrust of the system -- the idea that if a black man is found out to be HIV-positive, it will be reported to the authorities, affecting his job and family relationships. There is also the fact that mainstream black culture is strongly homophobic, and so black gays tend to be more closeted than white gays. In fact, the prejudice is so strong that many blacks who engage in male-on-male sex refuse to acknowledge that they are gay.

No other rich country has established routine testing for HIV, but no other rich country has as bad a problem as the US. Lawmakers, hospitals, and insurers don't have to implement the CDC's recommendations, but the CDC has authority and the recommendation is not going to be simply ignored.

* INVESTING IN AFRICA: The traditional perception of sub-Saharan Africa is that the region is a hopeless basket case, but as reported by an article in THE ECONOMIST ("The Flicker Of A Brighter Future", 9 September 2006), things have been looking up in the last few years, and many businesses think there's money, even big money, to be made there. Although the subcontinent is still plagued by poverty and AIDS, the economy has been growing steadily as of late, though this is partly due to a massive write-off of debt. However, peace, stability, and better governance have contributed as well. Says an official of South Africa's SABMiller, the world's second-largest brewer: "If there was any more of Africa, we'd be investing in it."

Investing in Africa is not for the faint-hearted. Governments are still often whimsical in making and enforcing the rules, with the wheels of commerce bogged down by incompetence, corruption, and bureaucracy. Much of the economy in the region is informal, because few want to have to go through the red tape of setting up a formal business. Trying to move goods across borders is troublesome, roads are bad, electrical power networks are spotty, and the local citizenry -- though often clever and industrious in their efforts to get out of poverty -- have a low level of marketable skills. Africa only gets about 3% of the world's foreign direct investment, and much of that is from mining and oil companies.

Those companies that do have the nerve to take the plunge are often pleasantly surprised at the results. Celtel, a cellphone company that was organized in the Netherlands to do business in Africa, set up shop in the relatively urbanized regions of Zambia in 1998, with the assumption being that the pickings would be too thin elsewhere. In 2003, Celtel moved into the rural market -- to encounter an astonishing level of demand.

Most of the rural customers never had a phone, but they understood how useful such a gadget was and felt it was worth a good portion of their meager earnings. As noted here last year, Africans prefer a "prepaid" model for phone service as opposed to the subscription model used elsewhere, generally buying prepaid phone cards to get a fixed amount of time. Celtel then added a "Me2U" service that allowed one user to transfer prepaid cellphone credits to another user via cellphone text-messaging, and discovered they had just created a form of electronic currency, with the Me2U service used for personal payments and even purchases of goods. Shopkeepers could accumulate credits and sell them off for hard currency to operators of "phone kiosks", who rent out phone time for small sums. In 2004, Celtel had 70,000 customers in Zambia; now it has a million. The company is enjoying success in a number of other sub-Saharan African countries as well. The secret is to understand local tastes and needs, and to figure out how to accommodate the small budgets of the customer base.

SABMiller has demonstrated a similar flexibility. The company had originally imported malt into Uganda to brew their beer there, but found out that they could use locally-grown sorghum to cut costs, and also give leverage to cut the government's tax bite. The product, named Eagle, proved popular and is also being sold in other African countries. Drinks are generally sold in returnable glass bottles, not throwaway cans or plastic bottles: with labor so cheap, that's the most economical way to go.

An understanding of local cultures can pay off. In Ghana, traders who don't have access to a normal bank come to an arrangement with one of the 5,000 or so "Susu collectors" in the country, who drop by periodically to pick up cash to ensure its protection. A typical Susu collector has about 400 clients; the British bank Barclays estimated that Ghana's Susu collectors represented a $140 million USD market, and decided it would be good business to co-opt them. Barclays set up special bank accounts to serve the collectors and gave them training, not only resulting in profits to the bank but profits to the collectors, who could also extend their services by offering lending to their clients. Bank officials were startled at how much money ended up flowing into the Susu collector accounts, and how shrewd the collectors were.

Sub-Saharan Africa is in fact a golden market for bankers, if they can deal with the unique circumstances of the region. Branch banks of South Africa's Standard Bank in Uganda rely on satellite communications, diesel generators, and solar panels for infrastructure; a branch bank on an island in Lake Victoria that's too small for an airstrip gets bags of cash by airdrop. Standard Bank now handles about half of all of Uganda's banking, and the profits are good -- the beauty of taking the risks is that the competition is weak and the margins are high. Accusations that the firms are exploiting the citizenry are easily countered with the reply that services are now being provided that weren't available before, and the locals are eager to obtain them.

Understanding local conditions often means obtaining a local partner, which also helps reduce resistance against a big foreign firm coming into the country and threatening to walk all over local businesses. Both sides find it a learning experience. Since bringing in help from outside is expensive, firms like to hire locals, giving them training in the required skills -- though in environments where skills are in short supply, such folk end up being in demand, which means the companies try to give their people a good deal, with generous salaries and perks like housing or medical coverage. Big companies have the financial clout to provide such deals, and also have clout to get concessions from local governments. They can even fight corruption to an extent, able to intimidate minor officials asking for payoffs by taking the matter to high government offices.

The concessions are not a one-way street. Foreign companies working in poor African countries are expected to be good citizens. As one businessman put it: "Investing in communities is taken for granted. You do not get rewarded for doing it. You get punished for not doing it." Companies have worked with anti-malarial and anti-AIDS programs, and set up schools, cooperating with non-governmental organizations when possible. In 2005, a group of big firms investing in Africa set up "Business Action For Africa" to boost business there, and a wide range of initiatives are in progress. The biggest issue for further progress is reform, and that seems to be happening. Few African countries call themselves Marxist any more -- having understood the notion, as Winston Churchill once put it, that capitalism is the unequal distribution of wealth while socialism is the equal distribution of poverty. Governments have been privatizing their economies and taking unprecedented efforts to clean up corruption.

There are still plenty of obstacles left, and there's no saying that the tide won't go back out and leave the engines of economic growth broken-down and rusting. There were periods of optimism over Africa in the past that ended in disappointment, but if the momentum can be sustained this time around, the region's growth may prove astonishing.

* ED: Having mentioned "sorghum" in the article, I realized that it was one of those terms that I'd heard all my life and never bothered to look up. Clearly it is a sort of grain, but that doesn't tell me much. On investigation via ENCARTA and Wikipedia, I found that sorghum grasses were originally native to East Africa, with one species native to Mexico, but they are now raised over much of the globe. Wild sorghums can be 2 meters or more tall and look little like wheat plants; the heads are very different from those of wheat, amounting to a disorderly spearlike cluster of grains, with heads branching out widely from the plant. Sorghum has a somewhat weedy appearance. Dwarf breeds, with a height of a meter or so, are also commonly cultivated. Sorghum grows well in dry and hot climates.

There are four families, with sorghums for grain, animal fodder, sweet syrup, and broomstalks. Sorghum is said to be the world's number five crop plant; it is commonly used for brewing beverages, can be used for making breads, but is raised in the US mostly for fodder. Incidentally, although most American consumers are not very aware of sorghum, the US is the single biggest producer, raising a quarter of the world's production. There's some interest in using it for ethanol production -- it isn't a more efficient feedstock than corn, but it can grow in places corn won't.

* HOTHOUSE WORLD (1): As discussed in an extended survey in THE ECONOMIST ("The Heat Is On" by Emma Duncan, 9 September 2006), the Earth's climate has seemed very stable in the past few centuries. During the 19th century, when the Industrial Revolution was going full-bore, average temperatures hardly changed. They began rising slightly after 1900, dropped a bit after 1950, and then started rising again after 1970. Overall, the average temperature from 1900 to 2000 rose about 0.6 degrees Celsius.

That hardly seems like much to worry about. The Earth's climate is always shifting in one direction or another, and on the face of it nobody would think the modest increase in temperature over a century would be anything more than an item in a paper in a scientific journal. The problem is that the temperature increase appears to be, and is increasingly certain to be, part of a process that might lead to an uncontrolled "thermal runaway", with temperatures rising to an ever more disastrous level.

Over the past 3 million years, the world has gone through a sequence of ice ages, periods in which glaciers increasingly covered the Earth, to then fade away for a time. In 1896, the great Swedish chemist Svante Arrhenius published a paper in which he suggested that ice ages might be linked to atmospheric concentrations of carbon dioxide (CO2). The Sun pours light down on the Earth, heating it up; the warm Earth then produces infrared radiation, much of which escapes off into space. Atmospheric CO2 tends to "trap" infrared radiation, preventing it from escaping and making the Earth warmer; in modern terms, CO2 is a "greenhouse gas". The trapping effect grows with CO2 concentration, and so low CO2 concentrations might have led to the ice ages.

There was concern at the time, and later, that the Earth was headed for another ice age, which would undoubtedly have a savage impact on human population, but in a later book Arrhenius suggested: not to worry. Human industrial emissions of CO2 would be strong enough to prevent the Earth from slipping back into another ice age, and the warmer Earth that would result from these high CO2 levels would allow humans to grow more crops to feed an expanding population.

Nobody took Arrhenius's idea too seriously. In 1938 a British engineer named Guy Callendar suggested the Earth was warming, but nobody took him too seriously either. In fact, in the postwar period there was a faction of weather researchers who suggested a new ice age might be in store. This was not universally accepted by the research community, but the popular media played it up -- as late as 1975 the US news weekly NEWSWEEK ran a cover article titled "The Cooling World", which predicted that a disastrous ice age was then in the making.

That was shortly after the end of a two-decade cooling trend, so it wasn't a completely wild idea, but atmospheric CO2 concentrations were then continuing on what amounted to a steady increase. In fact, as shown by analysis of ice cores from the Greenland icecap, from the beginning of the industrial revolution to the 21st century, atmospheric CO2 concentration has increased from 280 parts per million (PPM) to 380 PPM, a level not seen for 500,000 years. At the current rate of growth, the CO2 concentration will be 800 PPM in 2100. The midcentury cooling trend, it turned out, was also due to emissions -- of particulate pollutants, which reflected sunlight back into space and helped cool the world. Effective pollution control measures dropped the concentration of particulates, and so the temperature began to climb again.
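
As a rough check on those figures, the following Python sketch works out what steady percentage growth would carry the concentration from 380 PPM to 800 PPM by 2100. The article does not say what growth model lies behind the projection; compound growth and a 2006 starting year are assumptions made here for illustration, and the function name is hypothetical.

```python
def implied_annual_growth(start_ppm, end_ppm, years):
    """Constant compound annual growth rate that takes start_ppm to end_ppm.

    Assumption: growth compounds at a fixed percentage each year. The
    source article does not state the model behind its projection.
    """
    if start_ppm <= 0 or end_ppm <= 0 or years <= 0:
        raise ValueError("concentrations and years must be positive")
    return (end_ppm / start_ppm) ** (1.0 / years) - 1.0


# Figures quoted in the text; the 2006 start year is an assumption.
years = 2100 - 2006
rate = implied_annual_growth(380.0, 800.0, years)
print(f"Implied growth: {rate:.2%} per year over {years} years")
```

Under these assumptions the implied rate comes out at a bit under one percent per year. A different growth model, such as a fixed number of PPM added each year, would give a different answer.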

Climatologists are very worried about what might happen to the Earth if CO2 concentrations reach 800 PPM and have spoken out about their concerns. The end result has been one of the loudest quarrels of our times. Advocates of global-warming theories insist that the welfare of the planet is at stake, while the critics insist it's all a huge con, a fraud being put over by hysterical "Greens" whose alarmist "Chicken Little" scenarios have no real basis in fact. The critics point to the "cooling world" fuss of the 1970s, saying that we've just gone from one fad to the next.

The advocates' side includes British scientist James Lovelock, who sees the Earth as a "superorganism" named "Gaia" that is being disrupted by global warming; California governor Arnold Schwarzenegger, whose political agenda includes legislation to halt climate change; and presidential hopeful Al Gore, who has traveled the world telling people "An Inconvenient Truth". The critics' side includes Bjorn Lomborg, a Danish statistician who is highly skeptical of global-warming data, and Senator James Inhofe, chair of the Senate Environment & Public Works Committee, who thinks global warming is outright silliness.

* There is a basis for the skepticism. The weather is famously unpredictable, the classic example of an unstable system, where a slight influence in one place can have enormous effects in another by pathways that are very hard to nail down -- a phenomenon known as the "butterfly effect", in which the flapping of a butterfly's wings can lead to a hurricane on the other side of the world. Climatologists are perfectly aware of this and have traditionally tended to hedge their statements about climate change.

Computer models designed to predict climate change are extremely tricky to write. There are actually about 30 different greenhouse gases -- water vapor is the most common and important, amounting to a percent or two of atmospheric concentration. However, water vapor occupies an ambiguous position in the global warming debate, since human activities don't directly change its concentration much, there being so much water in the air that we'd be hard pressed to add any significant amount to it. It also isn't persistent; it precipitates out of the air very easily. Its overall global concentration is effectively a function of global temperature.

That means that any other factor that raises global temperature can, in principle, obtain "leverage" to increase the temperature further through the increase in water vapor. CO2 is the worst offender because it has the highest concentration after water, but methane is 21 times more effective in its warming effect than CO2 -- and each has its own "cycle" of production and elimination. There are also concerns about how to factor other effects into the models. As the Earth warms, ice will melt, reducing the reflection of radiation back into space; the oceans will not be able to absorb as much CO2; and enhanced microbial activity in the soil will produce more CO2. Exactly how much will these factors affect the climate? A warmer world will mean more evaporation of water and more cloud cover; on balance, will that cloud cover's tendency to reflect light back into space outweigh its tendency to trap heat?
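
The "leverage" idea can be illustrated with a toy feedback calculation in Python. This is only a sketch of a simple linear feedback loop, not anything resembling a real climate model: each bit of warming is assumed to trigger a further fraction of itself, and both the function name and the numbers are made up for illustration.

```python
def amplified_warming(direct_warming_c, feedback_fraction, rounds=None):
    """Total warming when each increment of warming triggers a further
    increment equal to feedback_fraction times itself.

    Toy linear model only. With rounds=None the closed-form limit
    direct / (1 - f) is returned; otherwise the first `rounds` terms of
    the series direct * (1 + f + f**2 + ...) are summed.
    """
    if not -1.0 < feedback_fraction < 1.0:
        # At f >= 1 the series never settles: a runaway in this toy model.
        raise ValueError("feedback_fraction must be between -1 and 1")
    if rounds is None:
        return direct_warming_c / (1.0 - feedback_fraction)
    total = 0.0
    increment = direct_warming_c
    for _ in range(rounds):
        total += increment
        increment *= feedback_fraction
    return total


# Illustrative values only, not measured climate figures.
print(amplified_warming(1.0, 0.5))            # positive feedback amplifies
print(amplified_warming(1.0, -0.25))          # negative feedback damps
print(amplified_warming(1.0, 0.5, rounds=3))  # first three rounds only
```

A positive fraction stands in for effects like added water vapor or melting ice, while a negative one stands in for effects like reflective cloud cover. The open questions in the text amount to not knowing the size, or in some cases even the sign, of the individual fractions.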

Climatologists have been increasingly coming forward about global warming because, no matter what the particular details are, the big picture seems somewhat frightening. Humanity is performing a massive uncontrolled experiment on the Earth's environment, and that idea would give any sensible person cause to stop and think for a moment. However, governments always have a long list of problems to deal with, and for good reasons real problems in the present tend to trump theoretical problems in the future.

Governments were concerned enough to set up an "Intergovernmental Panel on Climate Change (IPCC)" to provide recommendations. The IPCC deliberated on the matter and released estimates of global warming; the latest, issued in 2001, suggests that global temperatures might increase from 1.4 to 5.8 degrees Celsius by the year 2100. The wide range of the estimates didn't inspire confidence, though it certainly suggested the way the trend was going, and the panel was challenged by the critics. Some claimed the evidence didn't support the idea of global warming; others admitted that it did, but argued that trying to head off global warming would be more trouble than it was worth.

One problem was that at the time of the 2001 IPCC report, the data was contradictory. Earth-based data seemed to show the temperature was rising, while satellite-based data didn't. However, over the past few years the satellite data has been shown to be in error, and now the temperature trendline is clearly up and to the right. Arctic sea ice and glaciers are clearly melting rapidly, and an increase in violent hurricanes and other storms seems increasingly linked to the rise in temperature. A general consensus is emerging that global warming is for real; the question is what, if anything, should be done about it. [TO BE CONTINUED]

* INFRASTRUCTURE -- OIL & GAS (5): Oil has to be stored at a refinery before and after processing, and that means a tank farm. Tank farms are usually set up at refineries and tanker ports. Small tank farms are also found at fuel distribution centers. Even the biggest tank farms cycle through their contents in no more than a few weeks, so great is the demand for oil.

The oil tanks are fabricated using steel panels and vary in size from about that of a house to a good sized sports arena. The biggest tanks may have much wider diameters than the small tanks, but they won't be much taller. Fluid pressure is determined by depth of fluid, not the total amount of fluid, and making a tank taller would demand greater structural strength.
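
The point about depth versus volume can be sketched in a few lines of Python, using the standard hydrostatic relation that pressure equals density times gravitational acceleration times depth. This is only an illustration: the density of 850 kilograms per cubic meter is an assumed round value for a light oil, the tank dimensions are made up, and the function names are hypothetical.

```python
import math

G = 9.81                 # gravitational acceleration, m/s^2
OIL_DENSITY = 850.0      # kg/m^3, assumed round figure for a light oil


def bottom_pressure_pa(depth_m, density=OIL_DENSITY):
    """Gauge pressure at the bottom of the tank: P = rho * g * h."""
    return density * G * depth_m


def tank_volume_m3(diameter_m, depth_m):
    """Volume of a cylindrical tank filled to depth_m."""
    return math.pi * (diameter_m / 2.0) ** 2 * depth_m


# Two hypothetical tanks of equal depth but very different diameter.
for diameter in (10.0, 80.0):
    volume = tank_volume_m3(diameter, 15.0)
    pressure = bottom_pressure_pa(15.0)
    print(f"diameter {diameter:5.1f} m: {volume:9.0f} m^3, "
          f"{pressure / 1000.0:.1f} kPa at the bottom")
```

The wide tank holds 64 times as much oil as the narrow one, but the pressure at the bottom is the same in both, since diameter does not enter into the pressure calculation at all. Doubling the depth, in contrast, doubles the pressure.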

Some tanks have fixed shallow conical roofs supported by a center post, along the lines of a circus tent. However, this configuration is only practical for nonvolatile fuels like diesel and home heating oil. Volatile fuels would tend to evaporate away into the space below the roof, not merely emptying the tank but contributing to pollution and increasing fire hazard. The trick for volatile fuels is to use a "floating roof" tank, in which the roof is a flat circular sheet rimmed by gasketing that floats on top of the fuel. Floating-roof tanks tend to have structural bracing around the top edge to compensate for the loss of the roof as a structural support, and also often have a walkway around the top edge. One of the problems with the floating-roof design is that it tends to accumulate rainwater. In some cases, the rainwater is drained through a flexible pipe in the center of the floating roof that runs through the tank and out the bottom. In other cases, a fixed roof is built on top of the floating roof. Incidentally, tanks for storing volatile fuels are generally white, to reflect solar heat; tanks for thick, nonvolatile fuels are generally black, to absorb solar heat and keep the fuel flowing.

Each tank in a tank farm is isolated by an earth berm to contain the fluid if the tank ruptures, with the berms also preventing fires from spreading. Walkways, pipelines, and the like go over the berm, not through it. The berm walls are designed to be tall enough to contain the full contents of the tank. A tank farm also generally includes fixed fire-fighting stations, which may be permanently equipped with water cannon.
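
The sizing rule for the berm amounts to simple arithmetic, sketched below in Python. The sketch assumes a square enclosure with vertical walls and a cylindrical tank, and it ignores any safety margin, rainwater, or sloped berm faces that a real design would have to consider; the dimensions and the function name are hypothetical.

```python
import math


def min_berm_height_m(tank_diameter_m, fill_depth_m, berm_side_m):
    """Depth the spilled oil would reach if the full tank contents spread
    evenly over a square enclosure of side berm_side_m.

    Simplified: vertical walls, flat ground, no safety margin.
    """
    if berm_side_m <= tank_diameter_m:
        raise ValueError("enclosure must be wider than the tank")
    tank_volume = math.pi * (tank_diameter_m / 2.0) ** 2 * fill_depth_m
    return tank_volume / berm_side_m ** 2


# Hypothetical tank: 40 m across, filled to 15 m, in a 100 m square enclosure.
height = min_berm_height_m(40.0, 15.0, 100.0)
print(f"Berm must be at least {height:.2f} m tall")
```

Since the enclosure is much wider than the tank, a berm a couple of meters tall is enough to hold the contents of a tank many times that height.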

Gaseous petroleum products like propane and butane have to be stored under pressure, up to 17 atmospheres, and so gas storage tanks are generally spherical, kept from rolling off by a set of pillars; such tanks often feature a neat spiral staircase around the outside running to the top. They have to be built to a higher standard than a regular oil tank and are expensive. Of course, berms would be almost useless for containing a gas leak and so such gas tanks don't have berms. The tanks are often tipped with wind socks to suggest which way to run in case something goes seriously wrong. [TO BE CONTINUED]

* FINGERING FRAUDS: An article run here in July discussed the exaggeration of the extent of identity theft, with the article mentioning how credit-card fraud has been minimized by use of software that identifies patterns of bogus transactions. An article in THE ECONOMIST ("Secrets Of The Digital Detectives", 23 September 2006) lifted the lid on the nature of software designed to detect fraudulent transactions.