* Entries include: CLIMBING MOUNT IMPROBABLE, fun with my new PC system, 4D flight trajectories, Luke Skywalker prosthetic arm, advice for aspiring scientific geniuses, cruise ships, multi-touch touchscreen tech, biofuels from algae, shell shock reconsidered, NLOS PAM precision strike system, conservatives for Obama.

* NEWS COMMENTARY FOR NOVEMBER 2008: To no real surprise, Barack Obama won the US presidential race on 4 November, defeating John McCain by a healthy margin, if not a landslide. Obama won 28 states and the District of Columbia, while McCain won 21 -- Nebraska has an unusual voting setup, and the two more or less split the state. Voter turnout was 62%, about 126 million, with Obama obtaining 53% of the vote while McCain won about 46%. The electoral college vote was very lopsided, 365 for Obama and 173 for McCain.

McCain was eloquent in defeat, saying in his concession speech that Obama won fair and square, that this was a "historic election", that "America today is a world away from the cruel and prideful bigotry" of the past, and pledging to "do all in my power to help him". Current US President George W. Bush, who might have been expected to have kept a low profile over the matter, was almost effusive in his praise for the president-elect, calling his win "a triumph of the American story" and saying "all Americans can be proud of the history" made by the election. Bush added that turning over the White House to Obama "will be a stirring sight".

Now the news has been floating around about two women who lost their shot at the White House: Senator Hillary Clinton and Alaska Governor Sarah Palin. The rumor mill is hinting that Clinton will obtain a high cabinet post, possibly secretary of state -- it seems that, for some mysterious reason, that's become something of a woman's job. Palin seems to have become something of a darling of the Right Republicans and they would like to make more of her.

Palin's critics have claimed she lost McCain the election, but McCain always had a difficult choice: he couldn't pick a running mate that was acceptable to both the Independents and the Right Republicans, and if he had chosen a vice presidential candidate like Joe Lieberman who was acceptable to the Independents, he would have antagonized the Right Republicans. Palin's backers are making sounds about pushing her as their presidential hopeful in 2012, but it seems unlikely that, without a serious makeover, Palin has any chance of ever being president of the USA. Palin would be an unelectable "Howard Dean" candidate, very attractive to the true believers, and unacceptable to everyone else.

* While the rest of the world may find the US presidential election process overblown and baffling, THE ECONOMIST's rotating US columnist Lexington gave it a more flattering read in an essay titled "Two Cheers For American Democracy", which is worth summarizing here. The essay began with:

BEGIN QUOTE:

There is nothing else like it on earth. No other country obliges its future leaders to spend two years on the campaign trail. No other country forces them to conjure hundreds of millions of dollars out of thin air. And no other country gives make-or-break power to people in plaid shirts in out-of-the-way places.

END QUOTE

The essay went on to point out that it's not surprising that the American election circus has its critics. It places too much power, they say, in states like New Hampshire and Iowa; the money involved tends to lead toward corruption; the length of the campaign tends to highlight trivia; and the effort may well leave the winner exhausted on entering office.

Still, a long campaign means that the candidates spend a lot of time getting in touch with the people they hope will elect them. More significantly it is an enormous test of character, demonstrating endurance, organization, social skills, mastery of rhetoric, and grace under pressure. However, the best thing that can be said for the system is that it is so democratic. In most countries, party leaders are chosen by political insiders. In America, rank-and-file party members (and some independents) get to choose. Millions of people turned out to push their favorites.

There are reasons to hold off on the third cheer. The candidates tend to dodge the hard questions, and the election process becomes a headline obsession that blanks out other important news. The system may be time-consuming and money-grubbing -- but it still has allowed the son of a couple of nobodies, who was denied a floor pass to the Democratic Convention eight years ago, to become, through sheer charisma and organizational skill, the president of the country.

* GIMMICKS & GADGETS: As reported in THE ECONOMIST, a group at the IBM research laboratory in Zurich, Switzerland, is now working on water-cooled computer chips. Water-cooled electronics are nothing new -- way back when, high-power metal-can radio transmitter tubes were sometimes water-cooled. It might not seem that computer chips are in the same league as a heavy vacuum tube that runs so hot nobody could get near it if it was on, but as it turns out, on a volume basis modern silicon electronics technology can generate about two kilowatts of heat per cubic centimeter. That means it runs hotter than one of those old monster vacuum tubes, at least on a unit volume basis.

That hasn't been such a problem in the past since chips are traditionally planar and not very big, but they have been getting bigger steadily, and worse for heat dissipation, stacked designs are becoming increasingly popular. The IBM researchers have been developing stacked chips with hair-width channels 50 microns in diameter running through them to carry cooling water.

That's interesting in itself, but now consider a power-hungry data center running rows of servers containing water-cooled chips -- translating into a lot of waste heat being dumped through a central heat exchanger that could provide heating to neighboring houses and buildings. Data centers tend to be sited in cool climates to reduce the air-conditioning load, and a data center soaking up a megawatt of power could provide heat to 70 houses. It may sound like a wild idea, but IBM is considering building such a system.
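As a rough check on those numbers, here's a minimal sketch in Python of what a megawatt spread over 70 houses amounts to. The "recovery_fraction" parameter is my own assumption, not something from the article; with perfect heat recovery the figure works out to a bit over 14 kilowatts per house.

```python
# Figures quoted in the text: a 1 MW data center heating about 70 houses.
DATA_CENTER_POWER_W = 1_000_000
HOUSES_HEATED = 70


def heat_per_house_kw(total_power_w, houses, recovery_fraction=1.0):
    """Average heat available per house, in kilowatts.

    recovery_fraction is an assumed share of the electrical input that is
    actually recovered as useful heat (1.0 means all of it).
    """
    if houses <= 0:
        raise ValueError("houses must be positive")
    return total_power_w * recovery_fraction / houses / 1000.0


if __name__ == "__main__":
    print(round(heat_per_house_kw(DATA_CENTER_POWER_W, HOUSES_HEATED), 1))
```

A real system would recover less than the full megawatt, so the per-house figure is an upper bound under these assumptions.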

IBM researchers in the US are similarly investigating water-cooled solar cells. One way to get economical power out of a relatively expensive high-efficiency solar cell is to concentrate sunlight on it using lenses or mirrors, but of course that means running the risk of frying the solar cell. Using water cooling, the IBM researchers have been able to concentrate sunlight on solar cells by a factor of 2,300, with the solar cell producing 70 watts per square centimeter.
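To put the concentration figure in perspective, here's a back-of-the-envelope sketch in Python. It assumes unconcentrated sunlight of about 1,000 watts per square meter, which is a common rule-of-thumb value and not a number from the article; on that assumption, a concentration of 2,300 puts about 230 watts on each square centimeter of cell, and 70 watts out implies a conversion efficiency of roughly 30%.

```python
# Assumption: peak sunlight at ground level is about 1000 W per square meter.
SUNLIGHT_W_PER_M2 = 1000.0
CM2_PER_M2 = 10_000


def concentrated_flux_w_per_cm2(concentration, sunlight_w_per_m2=SUNLIGHT_W_PER_M2):
    """Light power landing on each square centimeter of the cell."""
    return concentration * sunlight_w_per_m2 / CM2_PER_M2


def implied_efficiency(output_w_per_cm2, concentration,
                       sunlight_w_per_m2=SUNLIGHT_W_PER_M2):
    """Fraction of the incoming light power that comes out as cell output."""
    flux = concentrated_flux_w_per_cm2(concentration, sunlight_w_per_m2)
    return output_w_per_cm2 / flux


if __name__ == "__main__":
    print(concentrated_flux_w_per_cm2(2300))
    print(round(implied_efficiency(70, 2300), 3))
```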

* I found a website titled "MODERN MECHANIX: Yesterday's TOMORROW Today" -- a fairly tidy piece of work, a nicely organized set of impressively clean scans of articles and ads from an extensive archive of pop science and technology magazines going well back from the 1970s.

Back in the 1960s, a friend of the family once gave us an oversized box full of old popular science magazines going back to the late 1940s and I spent many hours wading through them. MMYTT occasionally runs articles from that batch of magazines that I clearly remember after the passage of about four decades. The visions of the future past range over the ridiculous; the over-optimistic; the ingenious but not necessarily practical; the brilliant but too far ahead of the time; to the entirely farsighted. I was reminded of the fact that anyone who's been in a manufacturing environment knows that even for a successful company, only a relatively small proportion of the products designed are big winners. The winners are the ones that keep the company going; the rest of the products are middling or outright failures. Trial and error plays a bigger part in how things work than most like to admit.

Some of the ads on MMYTT are interesting too -- particularly those reflecting the work of Boris Artzybasheff (1899:1965), a Ukrainian-born American illustrator with a knack for drawings of humanized tools and machinery that came across as ingenious, humorous, and grotesque in a deranged way at the same time. Artzybasheff was very popular; he often did covers for TIME and other major magazines. I keep wondering if anyone ever tried to put together an animated video based on his work. It might give people nightmares.

* FLYING IN FOUR DIMENSIONS: Traditionally, once an airliner left the runway, the people on the ground knew not much more about its journey other than its general flight plan and time of arrival at its destination. The only way to make such a system manageable was to provide generous spacing between aircraft, in effect reserving a large corridor of sky in both space and time for each aircraft. As reported in AVIATION WEEK ("The Fourth Dimension" by David Hughes, 11 August 2008), in an era of rising fuel costs a more precise approach is needed, since even a small reduction in fuel burn for a journey translates to big savings.

The result is the "four-dimensional trajectory (4DT)", in which the flight of an airliner is tracked and controlled precisely in both space and time to ensure the most economical and optimum flight profile. 4DT was made practical by GPS navigation and satellite communications that allow the flight path of an aircraft to be continuously tracked -- with the data crunched by "Air Traffic Management (ATM)" computing hardware and software to adjust the flight path as needed in real time, with the corrections directly uploaded to an aircraft's flight processor over a datalink. With 4DT, a jetliner can avoid wasteful go-rounds for landing, instead following a carefully-controlled and precisely-timed descent path straight into the destination airport.
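To make the "space plus time" idea concrete, here's a minimal sketch in Python of a planned trajectory as a list of timed waypoints, with a check of how far an observed position is from where the plan says the aircraft should be at that moment. Everything here is hypothetical illustration: the "Waypoint4D" type, the flat local grid in kilometers, and the linear interpolation are simplifying assumptions of mine, not how any actual ATM system works.

```python
from bisect import bisect_right
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Waypoint4D:
    t_s: float    # time since departure, seconds
    x_km: float   # east position on a flat local grid (simplifying assumption)
    y_km: float   # north position on the same grid
    alt_m: float  # altitude, meters


def planned_position(trajectory, t_s):
    """Linearly interpolate the planned position at time t_s.

    trajectory must be sorted by t_s; times outside it clamp to the ends.
    """
    times = [w.t_s for w in trajectory]
    if t_s <= times[0]:
        return trajectory[0]
    if t_s >= times[-1]:
        return trajectory[-1]
    i = bisect_right(times, t_s)  # first waypoint strictly later than t_s
    a, b = trajectory[i - 1], trajectory[i]
    f = (t_s - a.t_s) / (b.t_s - a.t_s)
    return Waypoint4D(
        t_s,
        a.x_km + f * (b.x_km - a.x_km),
        a.y_km + f * (b.y_km - a.y_km),
        a.alt_m + f * (b.alt_m - a.alt_m),
    )


def horizontal_deviation_km(trajectory, observed):
    """Distance between the observed position and the plan at the same time."""
    p = planned_position(trajectory, observed.t_s)
    return hypot(observed.x_km - p.x_km, observed.y_km - p.y_km)


if __name__ == "__main__":
    plan = [Waypoint4D(0, 0, 0, 0), Waypoint4D(600, 100, 0, 10000)]
    seen = Waypoint4D(300, 50, 3, 5000)
    print(horizontal_deviation_km(plan, seen))
```

The point of the sketch is only that a position which is fine in space can still be "off" if it is reached at the wrong time, which is what the fourth dimension adds.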

4DT is an integral component of the "NextGen Air Traffic Control (ATC)" system upgrade being developed by the US Federal Aviation Administration (FAA), as well as the "Single European Sky ATM Research (SESAR)" project being conducted by the European Eurocontrol organization. Jetliner giants Boeing and Airbus are also involved in 4DT research. At the present time, however, the different players are working slightly at cross purposes towards a system where international compatibility will be an absolute necessity. For example, the Americans and Europeans have slightly different goals in their 4DT system development programs and use slightly different terminology.

A 4DT working group (4DTWG) has been established between the proper authorities in America and Europe, the US "Radio-Technical Commission for Aeronautics (RTCA)" and the "European Organization For Civil Aviation Equipment (EUROCAE)". One of the early issues to be resolved is that of the datalink technology. Current ATC is dominated by voice control, with ATC controllers telling aircrew what to do next, and going to a datalink is a big change. The Americans envision implementing the datalink in two phases, the first providing a basic functionality and the second full functionality, while the Europeans would like three, inserting an intermediate functionality between the basic and full functionality phases.

One difficulty is that the full-functionality datalink hasn't been well defined yet; it's not expected to be available until 2020 at earliest. However, a basic capability may be available as early as 2013. There are also challenges in defining the ground and flight hardware at both ends of the datalink to support 4DT. The software system is of course a major challenge, since it must factor in geography, weather, transient obstacles, all other conflicting flight traffic, and unforeseen circumstances such as an aircraft engine failure.

Some technology demonstrations are already being performed. General Electric Aviation and Scandinavian Airlines System (SAS) have implemented a demonstration system using an SAS Boeing 737 jetliner. Boeing is also running a demonstration project in collaboration with a number of airlines, with tailored arrivals at three airports -- San Francisco, Miami, and Melbourne, Australia. Although 4DT is very promising, there are worries about the challenge: large aviation infrastructure systems have proven troublesome in the past, being prone to schedule slippages and cost overruns, and participants want to make sure the road to 4DT isn't so rocky.

* LUKE SKYWALKER ARM: As reported in an article in IEEE SPECTRUM ("Dean Kamen's Luke Arm Prosthesis Readies For Clinical Trial" by Sarah Adee, February 2008), Chuck Hildreth lost both his arms in the early 1980s when he was electrocuted while painting a power station. His right arm was so badly fried that the surgeons even had to remove the shoulderblade. They managed to save part of the left arm halfway between the shoulder and the elbow. He was fitted out with prosthetic arms as part of his rehabilitation.

He hardly ever wears them. Prosthetic arm technology is not that far advanced from the days of the American Civil War, and Hildreth found the clumsy prosthetics more trouble than they were worth. They were sweaty, slippery, tiring, and ineffectual; he found he could perform most tasks, even change the blades on the lawn mower, with his feet. His experience is not unusual: people who lose their arms often give up the prosthetics within a year or two.

Now Hildreth is a guinea pig for Deka Research & Development Corporation in New Hampshire -- Deka being the brainchild of Dean Kamen, creator of the Segway scooter. Hildreth is testing an advanced prosthetic arm, known as the "Luke arm" for the prosthetic fitted to Luke Skywalker in STAR WARS after Darth Vader sliced off his forearm. Says Kamen: "Prosthetic legs are in the 21st century. With prosthetic arms, we're in the Flintstones."

* Kamen wants to bring the prosthetic arm up to date, though it wasn't his idea at the outset. In 2005, he was approached by Tony Tether, director of the Pentagon's Defense Advanced Research Projects Agency (DARPA) and Colonel Geoffrey Ling, who runs DARPA's prosthetics program. DARPA was set up in the Eisenhower Administration to perform "blue sky" research on defense technologies that the armed services, focused on immediate needs, would find difficult to fund, and the agency wanted Kamen to develop a prosthetic arm that leveraged off modern technology.

Kamen said later: "I thought they were crazy, in a good sort of way." Developing an advanced prosthetic arm would be difficult and expensive, and there wasn't a commercial market that made the funding worthwhile. However, DARPA isn't a commercial organization, and the agency wanted to develop advanced prosthetics for troops maimed in combat. DARPA had initiated a "Revolutionizing Prosthetics" program in that year, 2005, initially issuing a $30 million USD contract to a research team led by Johns Hopkins University's Applied Physics Laboratory (JHU APL) in Laurel, Maryland, to conduct a four-year investigation of a high-end prosthetic arm with direct neural control. An $18 million USD contract was then awarded to Deka to conduct a two-year investigation into a less technologically aggressive prosthetic arm, to be controlled with noninvasive measures and to be available as soon as possible.

Well before award of the contract, Kamen embraced the craziness and toured the country, talking to patients, doctors, and researchers to get a handle on current prosthetic technology. He realized he basically had to start from scratch. To be sure, powered arms have been available for some time, but they're hard to control, and so most users choose the traditional hook-&-cable prosthetic. In both cases, the arm has only three "degrees of freedom": the user can move the elbow, the wrist, and open or close some type of hook. Kamen felt the technology for a better prosthetic arm was available and that it was now possible to cram all the digital control electronics, motors, lithium batteries, and wiring into a prosthetic arm of appropriate size and weight. The result was the Luke arm.

A human arm has 22 degrees of freedom, not three. The Luke arm comes close, with 18 degrees of freedom, controlled by considerable computing power. Test subjects using the arm can pick up pieces of candy one by one, handle a power drill, unlock a door, and shake a hand. It has six preprogrammed grip settings to perform these tasks, with names like "chuck grip", "key grip", and "power grip", each based on a common set of human grip operations.

The Luke arm was also designed to be modular, allowing it to be used by subjects with any level of amputation. The hand is driven by its own electronics, as is the forearm. The electronics that drive the elbow are in the upper arm. The shoulder is also powered, allowing a wearer to actually reach up, which is impossible with traditional prosthetic arms.

The Luke arm had to be lighter than the limb it replaced, since it could not be attached to the human skeletal system. A prosthetic arm for amputees who have lost an arm above the elbow requires a body harness, with the arm connected to the stump through a socket. The socket connection traditionally is uncomfortable at best and painful at worst, which is why most users of traditional prosthetic arms give up on them. Deka engineers made the arm with lightweight materials -- most of its structural elements are aluminum; interestingly, titanium proved too heavy -- and came up with a new, much more comfortable arm socket that can also be used with traditional prosthetic arms.

Figuring out how a user would control the Luke arm was a particular puzzle. The DARPA contract specified that the control scheme had to be completely noninvasive. Kamen was of course agreeable, but the Luke arm was designed to support any means of control, both invasive and noninvasive. The noninvasive scheme is very capable, with Chuck Hildreth commenting: "I can do things I haven't done in 26 years. I can peel a banana without squishing it."

He controls the Luke arm with input devices embedded in the soles of his shoes. "When I push down with my left big toe, the arm moves out. When I move my right big toe, it moves back in." In the prototypes, the input devices are hooked up to the arm using flat cables, but the objective is to use a wireless system instead. Hildreth obtains force feedback with a vibrating device in the Luke arm socket called a "tactor". Sensors in the Luke hand are linked to the tactor, with the level of vibration increasing with grip strength. He can pick up a paper cup without crushing it, or handle a cordless drill without dropping it.
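The tactor idea, vibration that rises with grip strength, can be sketched in a few lines of Python. This is purely illustrative: the article doesn't say how Deka maps force to vibration, so the linear mapping, the 50-newton ceiling, and the ten vibration levels below are all hypothetical values of my own.

```python
def tactor_level(grip_force_n, max_force_n=50.0, levels=10):
    """Map a grip force to a vibration level from 0 to `levels`.

    Hypothetical linear mapping: force is clamped to the range
    0..max_force_n, so the level never decreases as force increases.
    """
    if max_force_n <= 0:
        raise ValueError("max_force_n must be positive")
    fraction = min(max(grip_force_n / max_force_n, 0.0), 1.0)
    return round(fraction * levels)


if __name__ == "__main__":
    for force in (0, 10, 25, 50, 80):
        print(force, tactor_level(force))
```

With feedback like this, a light buzz would tell the wearer the paper cup is held, and a strong one that it is about to be crushed.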

* The two-year DARPA contract with Deka has ended. DARPA is strictly a research organization, and other organizations have to pick up the results of DARPA research for production and fielding. Officials involved with the Luke arm project feel confident backers will be forthcoming. According to Colonel Ling: "We're trying to get a transition partner so it can go into clinical use and a commercial partner to get it out to the patients. This is no longer a science fair project."

Kamen feels that Deka has done the bulk of costly research and development on the arm, and that manufacturers can now take the technology and "productize" it. The Food & Drug Administration does have to approve the Luke arm for use, however, and that means funding clinical trials. Right now, nobody is coming forward with the money. DARPA has links to other government and commercial organizations, as well as some flexibility in funding mechanisms; the general feeling is that something can be worked out. Hildreth says he can't wait to get a Luke arm home, and adds: "My wife can't wait either. She says: 'Oh yeah, I got lots of stuff for you to do around the house.'"

* NEW PC ADVENTURES (6): Along with my ultimately unsatisfactory Eee PC, as mentioned earlier, I decided to buy a relatively cheap and lightweight Windows laptop PC. I settled on an Acer Extensa; I had been worried that running Vista might be a problem, but it seems even modest laptops have 2 GB of RAM and 120 GB of hard disk these days, which is plenty for Vista.

Since I was already basically up to speed on Vista, getting the Acer to work was no problem. The only issue was setting up a wireless connection. I was used to hooking up wi-fi in a motel or library, but such places use public-access networks that anybody can get into for the asking. For a home system I have to make sure I'm the only one who gets in, lest somebody case my system. That turned out to be simpler than I thought: all I had to do was specify the wireless encryption protocol type (there are options) and a ten-digit security code printed on the Qwest wireless modem. That gave me internet access. It took more puzzling to figure out how to transfer files from the desktop to the Acer, but all it amounted to was authorizing access to a "Public" directory on the Acer from my desktop. Figuring out how to set permissions on other directories on the Acer was troublesome. I finally shrugged and decided to store my files in the Public directories.

I used the Extensa as my jukebox for a while, but it proved unsatisfactory in the role. The first problem was that it kept cycling the hard disk drive, which was annoying with low-volume music. I put the music on a flash card and that helped to an extent. However, it also kept cycling the cooling fan, and there was no way around that. Back to the old drawing board.

* Acer sells a "mini-laptop" along the lines of an Eee PC with a hard drive for about $350 USD and I was tempted a bit, but the Extensa really does the job fine. I think I will get around to buying another mini-laptop one of these years, but given the fact that my actual need for it is small and it wouldn't be much more than a toy, I'm going to wait for more capability at less price. More cheap cute mini-laptops are coming down the road, and it might be interesting to see what's available in 2009 or 2010.

Any old-timers remember the "$100 Computer" of a previous generation, the Timex-Sinclair ZX81? Introduced in 1981, it had an 8-bit Z80 CPU running at a blazing 3.25 MHz, and all of 2 KB of RAM, expandable to a whopping 64 KB. It used a standard TV set for display output and an ordinary portable cassette tape drive for mass storage. It was mildly amusing for folks who liked to play with BASIC programs. I had to think that the "limited" Eee PC, with its 800 MHz processor, 512 MB RAM, and 1 GB flash disk, was not only light-years beyond the ZX81, it would blow the socks off any PC available in 1990.

"A ONE-GIGABYTE mass storage device? You're joking." Hey sport, I can carry a couple of gigabytes on a stick on my keychain. In fact, flash is so cheap that if I've got a bit of pocket change to spare when I'm ordering something from Amazon.com, these days I'll buy a 2 GB flash card. 2 GB is plenty for my needs, and it's nice to have a spare around. [TO BE CONTINUED]

* CLIMBING MOUNT IMPROBABLE (7): An eye arranged as a simple array of light receptor cells can have high light sensitivity, but it cannot obtain an actual image of what it's looking at. It picks up light from all directions in its field of view, and the only capability an organism with such an eye has for visual discrimination is to move around, changing the field of view to see which directions seem light and which seem dark.

One simple way to improve on matters is to arrange the array inside a cup. All this really does is narrow the field of view, giving the organism better discrimination on the direction of the light. The deeper the cup, the better the discrimination. Cup-shaped eyes of a range of depths are common in the animal kingdom, with examples including flatworms and clams. The different creatures with such eyes have eye structures that differ in detail, suggesting they evolved separately.
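The "deeper cup, better discrimination" point can be put into rough numbers with a little geometry. Here's a minimal sketch in Python, assuming an idealized straight-walled cup with a single receptor at the center of the bottom; that shape is my simplification for illustration, not a model of any real animal's eye. The receptor can see out through an angle of 2 * atan((width / 2) / depth).

```python
import math


def acceptance_angle_deg(opening_width, depth):
    """Angle of sky visible to a receptor at the bottom center of a cup.

    Assumes an idealized straight-walled cup; width and depth just need to
    be in the same units. A depth of zero is a flat patch seeing 180 degrees.
    """
    if opening_width <= 0 or depth < 0:
        raise ValueError("opening_width must be positive and depth non-negative")
    return math.degrees(2 * math.atan2(opening_width / 2, depth))


if __name__ == "__main__":
    for depth in (0.0, 0.5, 1.0, 5.0):
        print(depth, round(acceptance_angle_deg(1.0, depth), 1))
```

Under these assumptions, a cup half as deep as it is wide already narrows the view from 180 degrees to 90, and a cup five times as deep as it is wide narrows it to a little over 11 degrees.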

The cup-type eye still suffers from the problem that it can only sense whether there is light in some direction or not; it still can't form an image on the array of light receptor cells. Now suppose the entrance to the cup shrinks down to a mere pinhole; the structure will then act as a "pinhole camera", casting an image onto the light receptor array. The squidlike chambered nautilus is well known for its pinhole camera eye; other organisms, such as some marine snails, also have them, though there is (as would be expected in evolutionary terms) a fuzzy dividing line between an eye in a deep cup and a pinhole camera eye.

The pinhole camera eye of the nautilus is open to seawater. It would help protect the interior of the eye if it was filled with some transparent substance and maybe covered with a relatively tough transparent film. Such eyes do exist, and they provide a jumping-off point for more sophisticated eyes.

While the pinhole camera eye can form an image, the pinhole is limited in the amount of light it can admit -- it has a small "aperture" in other words -- and so it doesn't work well in faint light. Now suppose we have an organism with a pinhole camera eye filled with a transparent substance and sealed off by a transparent film. The film could gradually enlarge and acquire a lens configuration that could focus the light, more of it, on the light receptor array.
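The bind the pinhole eye is in can be shown with a toy calculation. In this Python sketch, which assumes simple straight-line optics and a distant point of light (diffraction is ignored), the light admitted goes up with the area of the hole, while the blur spot on the receptor array is about as wide as the hole itself. The function name and units are just for illustration.

```python
import math


def pinhole_tradeoff(diameter_mm):
    """Return (light_admitted, blur_mm) for a distant point source.

    light_admitted is the hole's area in square millimeters, used as a
    relative measure of brightness; blur_mm is the spot width, taken as
    equal to the hole diameter under straight-line optics.
    """
    if diameter_mm <= 0:
        raise ValueError("diameter_mm must be positive")
    area = math.pi * (diameter_mm / 2) ** 2
    return area, diameter_mm


if __name__ == "__main__":
    small_light, small_blur = pinhole_tradeoff(1.0)
    big_light, big_blur = pinhole_tradeoff(2.0)
    print(big_light / small_light, big_blur / small_blur)
```

Doubling the hole gives four times the light at the cost of twice the blur. A lens gets around the tradeoff by bending the extra light back to a point, which is why it is such a useful next step.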

Now we have a camera-type eye like our own -- or at least, like our own to an extent. Our eye also includes a capability for changing the focus of the eye, a pupil to cut down the light admitted under bright conditions, and of course means of rotating the eye in different directions. We also have a fair chunk of our nervous system devoted to picking up and interpreting imagery obtained by our eyes.

It is no more impossible to conceive of how these "accessories" could have evolved than it is to conceive how the eye itself could have evolved, and there is a range of "solutions" to these problems that suggests how they did evolve. Small creatures often don't have any capability for focusing their vision, making their eyes much like the old "Box Brownie" camera that couldn't be focused. We focus our eyes by adjusting the curvature of the lens, while snakes and frogs do it by moving the lens back and forth, which is how a mechanical camera works.

The pupil is a little trickier, but there's nothing impossibly complicated about a variable shade any more than there is about an anal sphincter, and the evolution of pupils is suggested by their variations in form. We have circular pupils, while goats have horizontal oval pupils, which give them their slightly alien appearance. Cats and some snakes have vertical slits; other snakes have horizontal slits. The pupil configuration isn't picky; anything that does the job is fine. [TO BE CONTINUED]

* SO YOU WANT TO BE A GENIUS: Physicist Sean Carroll -- not to be confused with the biologist of the same name -- wrote an essay titled "The Alternative Science Respectability Checklist" as a guide for those folks out there who think they have come up with a revolutionary scientific breakthrough. The essay was witty and worth reprinting here, in a grossly edited-down fashion.

* I do understand that you are in possession of a truly incredible scientific breakthrough that promises to change the very face of science as we know it, if not more. Maybe you've built a perpetual-motion machine from common household items; maybe you have a new universal theory of physics; maybe you have obtained proof that modern evolutionary theory is unworkable. Unfortunately, your ideas fly in the face of accepted scientific notions, and you don't have the elaborate academic credentials that would impress mainstream scientists. That's to be expected, of course, since revolutionary breakthroughs just aren't going to come from the hidebound academic establishment -- they require the fresh perspective that only an outsider genius (such as yourself) can bring to the problem. The problem is that although science is supposed to be all about being open-minded, and there's still so much we don't understand about how the Universe works, people find it hard to take you seriously.

While you may actually be the next Einstein, it's an unfortunate fact of life that there are far more crackpots who think they are Einsteins than there are Einsteins, and so the odds of being a crackpot are much greater than the odds of being an Einstein. Even if you know you really are an Einstein, how are you going to make yourself stand out from the crowd of crackpots? What do you need to do to get a fair hearing for your ideas?

Fortunately, help is at hand. We can provide a simple checklist of things that alternative scientists should do in order to get taken seriously by the Man. And the good news is, it's only three items -- how hard can that be? To be sure, dealing with the three items may require a fair amount of work, but nobody ever said that being a genius was easy. Anyway, here's what you need to do:

ONE: Acquire basic competency in whatever field of science your discovery belongs to. In other words, you'll need to be familiar with what is already known. If you have a new theory that unites all the forces, make sure you have mastered elementary physics, and grasp the basics of quantum field theory and particle physics. If you've built a perpetual-motion machine, make sure you possess a thorough grounding in mechanical and electrical engineering, and are pretty familiar with the Laws of Thermodynamics.

Now, you may reply that your new insights couldn't have been obtained had you been tied down by the preconceived notions of established knowledge -- but sadly, that response is not going to fly. We have acquired an enormous body of knowledge of the way the Universe works over the past centuries, and useful new insights cannot be ignorant of well-validated facts. Even if parts of the conventional wisdom are incorrect, you'll still need to understand them and show where they go wrong.

It's a matter of respect. You're asking scientists to take your work seriously, and spend effort investigating your claims. Very well, you owe them the same level of respect; you have to understand their work, and address it in a credible fashion. Few are going to believe that you have discovered a completely obvious mistake that generations of scientists have been too clueless to notice. Scientists know the odds are much better that it is you who has made at least one very naive mistake than that you have actually seen the loophole the professionals somehow never noticed.

TWO: Understand, and make a good-faith effort to confront, the fundamental objections to your claims by established science. If you are saying things that fly in the face of well-established principles, it is your job to convince people to take you seriously. Nobody has any obligation to convince you that you are wrong, and even if they are incautious enough to try, eventually they will weary of that game and wander off. If you claim that you have built a workable perpetual motion machine, you shouldn't be surprised that people are skeptical that you have figured out a way to get around the law of conservation of energy. They will raise objections; if you brush off the objections and complain that you are being treated unjustly, the conversation's over.

THREE: Present your discovery in a complete, transparent, and unambiguous way. It is a rule of science that extraordinary claims require extraordinary proof. If your arguments are sketchy, vague, and muddy, people are going to find them irritating, not convincing. If you respond to all criticisms that your theory hasn't been properly understood, then what that means is that your explanation of the theory needs some work. If you claim to have conducted experiments that contradict existing evidence, other people will need to be able to conduct the same experiments to see if that is really the case. No fair saying: "Well, if you come into my lab, I'll turn it on and show you how it works." If you declare that important aspects of your work are secret, then you will have to expect that people will not bother to inquire further.

* So there you have it, three simple items. Maybe you are the next Einstein, but you'll find it easier to convince people if you do the work. Oh, and a fashion hint: lose the tinfoil-lined hat.

* RESORTS AT SEA: The SCIENTIFIC AMERICAN "Working Knowledge" column for July 2008 ("Nimble Skyscrapers At Sea" by Mark Fischetti) took a close-up look at the cruise ship OOSTERDAM of the Holland America Line. It is huge, 285 meters (934 feet) long and 14 stories tall, capable of carrying 1,800 passengers with a crew of 800.

Despite its size, it is remarkably agile, able to spin 360 degrees and even move sideways. Traditionally, ships have been driven by diesel engines or turbines driving a propeller at the rear of the vessel, with a rudder behind the propeller. The OOSTERDAM still uses diesel engines -- five huge ones, capable of producing a total power output of 66,320 kilowatts (88,900 horsepower), complemented by a gas turbine -- but they are used to drive electric power generators, with the electricity driving the propulsion system in turn.

Primary propulsion is implemented with two "Azipods", a scheme developed by ABB OY of Finland. The Azipods are at the rear corners of the ship and consist of an oversized electric motor in a rotating streamlined pod with a 5.5 meter (18 foot) propeller at the end. The Azipods can turn in any direction, and eliminate the need for a rudder. They are complemented by three "bow thrusters", propellers set sideways in the forward keel of the ship to help the vessel turn or move sideways. The propulsion system uses about two-thirds of the power generated by the diesel engines, while the ship's services -- lighting and so on -- soak up the other third. The engines will consume about 210 liters of heavy fuel oil per kilometer (90 US gallons per mile) at normal cruise speed, and about 355 liters per kilometer (150 US gallons per mile) at the top speed of 45 KPH (28 MPH / 24 knots).
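The figures above are easy to cross-check. The following is a minimal sketch, not anything from the article: the function names are made up for illustration, and the conversion factors are the standard definitions. It converts the quoted horsepower to kilowatts, the fuel burn from liters per kilometer to US gallons per mile, and estimates hourly fuel burn at top speed.

```python
# Standard conversion factors.
KW_PER_HP = 0.7457                  # mechanical horsepower to kilowatts
LITERS_PER_US_GALLON = 3.785411784
KM_PER_MILE = 1.609344
KM_PER_NAUTICAL_MILE = 1.852


def hp_to_kw(hp):
    """Convert mechanical horsepower to kilowatts."""
    return hp * KW_PER_HP


def l_per_km_to_gal_per_mile(l_per_km):
    """Convert fuel burn from liters/km to US gallons/mile."""
    return l_per_km / LITERS_PER_US_GALLON * KM_PER_MILE


def kph_to_knots(kph):
    """Convert kilometers per hour to knots."""
    return kph / KM_PER_NAUTICAL_MILE


def liters_per_hour(l_per_km, speed_kph):
    """Fuel burned per hour at a steady speed."""
    return l_per_km * speed_kph


if __name__ == "__main__":
    print(f"88,900 hp is about {hp_to_kw(88_900):,.0f} kW")
    print(f"210 L/km is about {l_per_km_to_gal_per_mile(210):.0f} gal/mile")
    print(f"355 L/km is about {l_per_km_to_gal_per_mile(355):.0f} gal/mile")
    print(f"45 KPH is about {kph_to_knots(45):.1f} knots")
    print(f"Top-speed burn: {liters_per_hour(355, 45):,.0f} L/hour")
```

At the quoted top speed, the ship would be going through roughly 16,000 liters of fuel oil an hour.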

Although the ship has no rudder, there is a stabilizer fin on each side of the OOSTERDAM's hull amidships to reduce rolling in rough seas. Fuel, ballast, freshwater, and wastewater tanks are sited low in the hull and the crew has to make sure their contents are balanced properly lest they affect the vessel's trim. The ship has its own water desalination system, capable of producing 530,000 liters (140,000 US gallons) of fresh water a day. The wastewater is divided into "graywater" from showers and the like and "blackwater" from the toilets; both are treated on board to output clean water, with the solidified waste from the blackwater system stored and eventually unloaded at port.

The actual crew required to run the ship only runs to about two dozen personnel. The passengers are cared for by about 400 stewards and 400 "waitstaff", who are generally from Indonesia and the Philippines. The employees all work seven days a week for ten months of the year, and sleep below decks, two to a room. They have their own network of corridors and stairs to get around. Passengers used to be given occasional tours of the systems below deck, but worries about terrorism have placed the crew and work areas off-limits to guests. Incidentally, since the OOSTERDAM flies under the Dutch flag, all aboard are subject to Dutch law. How this applies to the use of recreational drugs is an interesting little question.

* A complementary article published in POPULAR MECHANICS ("How Do You Build The World's Biggest Boat?" by Jeff Wise, October 2008) went to the Aker Shipyards in Turku, Finland, to observe the construction of the Royal Caribbean cruise ship OASIS OF THE SEAS, which at a length of 360 meters (1,180 feet) will outsize the OOSTERDAM when launched in December 2009. It will be 18 stories tall, with the biggest swimming pool ever put on a ship, and will accommodate 6,300 passengers.

The OASIS OF THE SEAS will take two years of work to complete, a remarkably short time for such a huge vessel. Traditionally, ships were built in a drydock from the keel up, but that approach doesn't lend itself to rapid construction. The trick to building an oversized vessel is automation, with modules or "blocks" built in a factory that are then fitted together like Lego blocks to build up the ship.

Steel sheets arrive at the factory, with automated welding machines assembling basic elements and oversized conveyors hauling the elements around the factory. The result of the process is a section of a deck; three sections are stacked and welded together to form a block, which includes cables, ducts, and wiring. A 144-wheel crawler takes the block at 10 KPH (6 MPH) to a paint shop, where it is completely covered with corrosion-resistant paint. A total of 181 blocks must be fitted together to make up the cruise ship. Cabins are actually separate prefabricated modules, wheeled into place inside the blocks.

The modular scheme requires definition of interfaces between the blocks to allow hooking up the wiring and other utilities. Computer-aided design (CAD) is absolutely essential to such a scheme; it would be very difficult to design the blocks by traditional methods and expect them to fit together properly, but with CAD the blocks can be "virtually" fitted together before any metal is cut. CAD similarly allows the ship's design to be "inspected" before the vessel is built, and simulations are also performed to validate evacuation procedures in case of fire or sinking. All that doesn't come cheap: the OASIS OF THE SEAS will cost a cool $1.2 billion USD.

* IN TOUCH: Touchscreens have been around for a long time and they're common enough technology, entirely familiar in public places, for example self-checkout systems in stores, ATMs, and kiosks. The classic touchscreen involves jabs with an index finger on a set of "buttons" drawn up on a display. As reported in an article in SCIENTIFIC AMERICAN ("Hands-On Computing" by Stuart F. Brown, July 2008), touch input technology is now undergoing a significant upgrade.

The Apple iPhone was the thin entering wedge of the new touchscreen technology. There was nothing unusual about the iPhone in using touch inputs for control, but it added new features -- for example, the ability to stretch windows on the display by using two fingers, spreading them apart or bringing them together. However, more sophisticated touch systems, which allow not only the simultaneous use of all ten fingers but even multiple simultaneous users, are already in practical use.

Welcome to Perceptive Pixel INC in New York City. Its founder, computer scientist Jeff Han of New York University, is eager to show off the company's product. He walks up to an "electronic wall" and grapples with it, making menus and video streams appear and disappear like a conductor directing an orchestra. Intelligence agencies are early adopters of Perceptive Pixel technology, using it in war rooms to coordinate surveillance images from battle zones. News anchors at CNN used it to track the US presidential primaries. Han sees it as useful for medical imaging, energy trading, graphics design, and architecture.

Work on "multi-touch" input technologies goes back to the 1980s, but it wasn't until about 2000 that Han got interested in the idea and decided to make it work. Traditional touch input technologies are fairly simple: grids of LEDs and phototransistors around the edge of the screen, a grid of thin wires in a plastic sandwich layer with holes at the grid junctions, and (most commonly in modern times) a touchscreen that senses the electrical capacitance of a fingertip press. The problem is that traditional touchscreens have trouble interpreting multiple simultaneous inputs, and touchscreens that can do so mean more complexity and expense.

Han originally went the capacitive route, but a workable multi-touch scheme meant an impractical amount of wiring. He came up with an acrylic panel on top of a projection-type display that was fed by infrared LEDs around the edges, with the light picked up by cameras behind the screen, alongside the projector. The acrylic panel acted as a "waveguide", with the light "internally reflected" between the surfaces of the panel to propagate from one side to the other. A fingertip press caused light to leak out, a phenomenon known as "frustrated total internal reflection (FTIR)", with the loss detected by the cameras. The concept was simple, though tracking all the different leakage points and their motion meant writing some heavy-duty real-time software. There was also the problem, obvious once pointed out, that the "graphical user interfaces" associated with operating systems like MS Windows or Mac OS were not tuned to handling multiple simultaneous inputs, and so the group had to "grow their own".
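To give a feel for the tracking problem, here is a minimal sketch of how leakage points might be picked out of a single camera frame. It is not Perceptive Pixel's software; it assumes each fingertip shows up as a connected patch of bright pixels in a small grayscale frame, and the function name `find_touches` and the threshold value are invented for illustration. It groups neighboring bright pixels with a flood fill and reports the centroid of each group as one touch.

```python
def find_touches(frame, threshold):
    """Return the (row, col) centroid of each connected bright region.

    frame is a non-empty list of equal-length rows of brightness values;
    pixels at or above threshold are treated as part of a touch.
    """
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    touches = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < threshold:
                continue
            # Flood-fill outward from this pixel to collect one blob.
            stack = [(r, c)]
            seen[r][c] = True
            pixels = []
            while stack:
                y, x = stack.pop()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and frame[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        stack.append((ny, nx))
            # The blob's centroid stands in for the fingertip position.
            cy = sum(p[0] for p in pixels) / len(pixels)
            cx = sum(p[1] for p in pixels) / len(pixels)
            touches.append((cy, cx))
    return touches


if __name__ == "__main__":
    frame = [
        [0, 0, 0, 0, 0, 0],
        [0, 9, 9, 0, 0, 0],
        [0, 9, 9, 0, 0, 0],
        [0, 0, 0, 0, 8, 0],
        [0, 0, 0, 0, 0, 0],
    ]
    print(find_touches(frame, threshold=5))
```

A real system would also have to match blobs from one frame to the next to follow finger motion, which is where the heavy-duty real-time work comes in.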

As Han's team progressed on development, they discovered that a thin layer of polymer with microscopic ridges could be applied to the screen that provided a bit of yield under pressure that the rigid screen lacked. This layer resulted in a spot that became brighter or darker with fingertip pressure, with the change in brightness tracked by the cameras as well. Perceptive Pixel showed off their technology at the 2006 Technology Entertainment Design conference and quickly started bringing in orders.

* Microsoft has also developed multi-touch input systems. In 2001 two MSoft researchers, Steve Bathiche and Andy Wilson, began to work on a multi-touch tabletop, envisioning it as useful for games, browsing, or other digital applications. They ultimately came up with the "Surface", which also uses a projection display shining on an acrylic panel, in this case set up horizontally as a tabletop. It takes a slightly different approach to sensing fingertip inputs, however, with infrared light provided by an LED source alongside the projector, though the fingertip inputs are also picked up by a set of cameras.

Sheraton Hotels is obtaining the Surface to install in hotel lobbies to allow guests to obtain services. Cellphone company T-Mobile USA is buying them to allow customers to select cellphone models. Right now, the Surface is selling in the $5,000 to $10,000 USD range, but Microsoft believes the price will drop dramatically over the next few years.

Another interesting multi-touch input technology has been developed by Circle Twelve of Framingham, Massachusetts, a spinoff of Mitsubishi Electric Research Laboratories. Their "DiamondTouch" table is designed specifically for small group collaborations. It sends radio-frequency (RF) electrical signals through anyone who makes contact with the tabletop, with the signals either picked up through special chairs or through a special floor surface. The advantage of the scheme is that it can keep track of individuals through assigned seats or positions.

Multi-touch computing is still in its infancy and there's no saying where it will end up. As Han puts it: "It's very rare that you come upon a really new user interface. We're just at the beginning of this thing."

* NEW PC ADVENTURES (5): While I was updating my desktop PC, I was also updating my laptop. I had a not-too-new and heavyweight Dell laptop, but I had long been wanting to get a small, cheap laptop like the "hundred dollar" PC that MIT's been pushing, and when I saw the Eee PC, made by ASUS of Taiwan, I had to pick it up. It was only two hundred bucks, and I had more than enough in my slush fund to cover it. I bought the cheapest model, with minimal flash memory, and also bought an 8 GB flash card along with it. I figured it would be useful for little jobs around the house and for carrying along on air trips where a full-sized laptop would be cumbersome.

I was excited about the Eee PC at first: I liked the form factor, and it seemed to be exactly what I was looking for in an ultra-portable PC. Yeah, the keyboard was a bit painful to type on, but I had been expecting that much, and since I didn't do much heavy lifting on my laptops anyway, no big deal. Then unpleasant realities began to sink in. The first was that ASUS sold the Eee PC as a "turnkey solution" for naive computer users. That meant that basically the user got the PC as it was -- "have it our way". Not only was no documentation provided to show how to play with the system, the documentation basically told the users not to play with the system. That was annoying but not, I thought, a big deal, since I could find user forums online to tell me more, and I figured there would be "Supercharging Your Eee PC" books available soon enough anyway.

However, then the Eee PC began to get balky, refusing to do things like recognize USB devices. "Oh, this is not good at all." Since I had to go on a trip to Spokane, I threw up my hands and decided not to struggle with it, and bought an Acer laptop -- more below. After I got back, I got the thing working right for a while. I was still disgusted with the "Fisher-Price" user interface provided by default for Linux -- it was slow, clumsy, and extremely inflexible. I did some more poking around and after a few false starts I managed to set up a well-known Linux user interface known as KDE. After I did that, the Eee was far faster and easier to use. I found a nice "fractal planet" image and shrunk it to 800x480 pixels as a background, and then found a set of definitely flashy screen-savers, so the Eee PC even looked pretty now.

In fact, I didn't end up having that much use for it, mostly using it as a digital music player, playing ambient music through the night at my bedside. The built-in speakers were too weak to provide much sound volume, so I tried to plug in a set of Logitech USB speakers I was using on my old Dell laptop. It turns out that Linux doesn't know how to automatically configure USB audio just yet, and doing it manually is a pain. That was kind of a shrug -- Logitech also sold a cheap AC-powered speaker system that plugs into a headphone jack, so I picked it up and it worked fine.

I kept trying to think of other things to do with the Eee PC, but tracking down tools could also be a pain, at least compared to getting them for Windows, one big reason being that with Linux, the applications often had to be compiled on each platform, which demanded a fair level of sysadmin skill. Debian, a Linux vendor, came up with a scheme called the "Advanced Packaging Tool (APT)" that automates downloading and installing applications, but even then that could be tricky. Finally, the Eee PC proved consistently unreliable, going out to lunch every now and then and finally crashing once more. Enough was enough -- I tossed it. [TO BE CONTINUED]

* CLIMBING MOUNT IMPROBABLE (6): Creationists like to point to the eye as a demonstration of the impossibility of evolution by natural selection. How could such a complicated organ as the eye have arisen through an undirected process of mutation and selection?

Charles Darwin himself saw the eye as a challenge, but he dealt with it head-on. Evolution by natural selection implies the derivation of biostructures like the eye by incremental small changes, working up from simple to increasingly elaborate schemes. Darwin observed that existing organisms demonstrate exactly such a range of structures, from a simple one-celled "eyespot" that can do no more than tell light from dark; to an array of light receptor cells to give more sensitivity and resolution; then an array of such cells in a cup to give some directionality; on to a pinhole-camera style eye; and then a camera-type eye with a lens, such as our own eye. A model constructed by two Swedish researchers in the 1990s suggested that it might only take a few hundred thousand generations to go from an eyespot to a camera-type eye.

In addition, evolution by natural selection can lead off on different paths, producing eyes of very different configuration. This is clearly observed in practice, most significantly in the compound eyes of insects versus our camera eyes. In fact, the eye appears to have evolved along several dozen different lines of descent, producing about nine distinct categories of eyes.

It should be noted that one eye configuration is not necessarily "better" than another. The human eye is a very capable biostructure, with good resolution, focus, and color capability. A snail simply cannot have eyes with the same capability: eyes don't scale down in all respects, and a small eye cannot in general work as well as a big eye. Incidentally, birds have better color vision than we do; birds of prey also have much better resolution, as well as ability to focus rapidly; and we don't have night vision that compares to that of, say, a cat. Of course there are organisms with larger eyes as well -- the biggest eye known being that of a giant squid, which was 37 centimeters (15 inches) in diameter.

* It actually seems unlikely that there were ever many multicellular organisms that were completely blind. There are plenty of organisms in modern times -- some jellyfish, starfish, various kinds of worms -- that have nothing resembling an eye, but have skin that can sense light, in much the same way that we can locate a heat source just from sensing radiated warmth with the skin on our hand.

The next step is to obtain a simple eyespot. The trick is to have chemicals in a cell that are modified by light and that can provide a signal to nerve endings. Once a one-celled eyespot is available, it can be duplicated, roughly doubling the amount of light that can be picked up. Doublings can then continue up to the point where an organism is spending as much of its resources as is effective to support the primitive eye array.

Similarly, the individual cells in the eyespot can be enhanced to increase their sensitivity. A simple eyespot will have only a single layer of photosensitive chemicals; the human light receptor cell called the "rod" has about a hundred plate-like layers, arranged (to no surprise) in a rodlike cellular structure. Squids have a functionally similar light receptor cell, but it consists of rings arranged around a central shaft. In either case, light missed by the top elements in the light-receptor cell may be absorbed by the lower elements. Once there are enough elements to pick up most of the light that can be picked up, diminishing returns set in and there's no point in having more elements. [TO BE CONTINUED]

* Space launches for October 2008 included:

-- 01 OCT 08 / THEOS -- A Dnepr booster was launched from Yasny in southern Russia to put the "Thailand Earth Observation Satellite (THEOS)" into near-polar Sun-synchronous orbit. THEOS had a launch mass of 750 kilograms (1,655 pounds) and carried two imagers, including a grayscale system with a resolution of about two meters (7 feet) and a swath width of 22 kilometers (14 miles), plus a color system with a resolution of about 15 meters (49 feet) and a swath width of 90 kilometers (56 miles). The spacecraft had a design lifetime of 5 years.

THEOS was built by EADS Astrium for the Thai "Geo-Informatics and Space Technology Development Agency (GISTDA)". GISTDA planned to use the imagery for cartography, land use, agricultural monitoring, forestry management, coastal zone management and flood risk management applications. The Dnepr booster was a converted R-36M (NATO SS-18 Satan) heavy ICBM, launched from an underground silo.

-- 12 OCT 08 / SOYUZ TMA-13 -- A Soyuz-FG booster was launched from the Baikonur space center in Kazakhstan to put the "Soyuz TMA-13" manned space capsule into orbit on an International Space Station (ISS) support mission. It carried the ISS "Expedition 18" crew of commander Mike Fincke of NASA (second space flight) and flight engineer Yuriy Lonchakov (third flight) of the RKA / Russian Space Agency, along with "space tourist" Richard Garriott.

Soyuz TMA-13 docked with the ISS central Zarya module on 14 October. The new arrivals were greeted by the Expedition 17 crew, consisting of commander Sergey Volkov, flight engineer Oleg Kononenko -- both of the RKA -- and Gregory Chamitoff of NASA. Volkov and Kononenko had arrived on the station in April on Soyuz TMA-12, with Chamitoff arriving at the beginning of June on the NASA space shuttle Discovery.

Garriott, a computer game designer, was the sixth space tourist to fly to the ISS, paying $30 million USD for the trip. Garriott was the son of NASA Skylab astronaut Owen Garriott. Sergey Volkov was also the son of a spacefarer, his father being Russian cosmonaut Alexander Volkov. Both fathers were present at a post-docking news conference in Moscow. Volkov, Kononenko, and Garriott returned to Earth on 23 October in Soyuz TMA-12, safely landing as per plan in Kazakhstan. Volkov and Kononenko had spent 183 days in space as the Expedition 17 crew. Chamitoff was to remain on the ISS until his replacement, NASA astronaut Sandra Magnus, arrived on shuttle Endeavour in December.

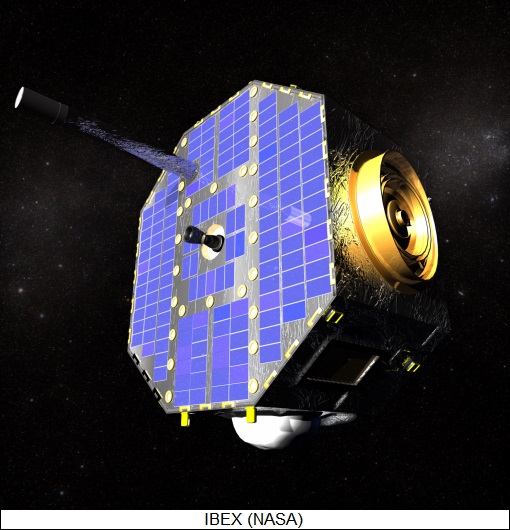

-- 19 OCT 08 / IBEX -- An Orbital Sciences (OSC) Pegasus XL booster was air-launched by the OSC Stargazer L-1011 launch jet from over the Pacific Ocean near Kwajalein Atoll to put the NASA "Interstellar Boundary Explorer (IBEX)" into orbit.

IBEX was a NASA "Small Explorer" science spacecraft, in the form of an octagonal box with a diameter of 96 centimeters (38 inches), a height of 58 centimeters (23 inches), and a launch mass of 107 kilograms (236 pounds). It was launched using an auxiliary Star kick stage into a highly elliptical orbit, with an altitude ranging from 7,000 to 320,000 kilometers (4,350 to 200,000 miles), taking it out of the Earth's magnetosphere. The spacecraft's payload measured energetic neutral atoms from the "termination shock", where the solar wind entered the interstellar medium. The mission was to last two years, returning several maps of the interstellar boundary.

-- 22 OCT 08 / CHANDRAYAAN-1 -- An Indian Space Research Organization (ISRO) Polar Satellite Launch Vehicle (PSLV) was launched from Sriharikota Island to send the ISRO "Chandrayaan 1" lunar orbiter to the Moon. It was ISRO's first planetary mission. "Chandrayaan" was Sanskrit for "Moon Craft". The orbiter had a launch mass of 1,380 kilograms (3,042 pounds). It was designed to provide a 3D atlas of the Moon's surface, as well as a map of the distribution of elements and minerals. It carried a payload of 11 instruments, five built by India and the others provided by foreign research organizations -- three by the ESA, two by NASA, and one by Bulgaria. The Indian-built instruments included:

ESA instruments included:

NASA instruments included:

The Bulgarian Academy of Sciences provided a Radiation Dose Monitor to characterize the radiation environment in the space near the Moon. ISRO regarded Chandrayaan 1 not only as an ambitious exercise for India but also as a platform for international cooperation.

-- 24 OCT 08 / COSMO-SKYMED 3 -- A Delta 2 7420 booster was launched from Cape Canaveral to put the Italian "Cosmo-Skymed 3" radar satellite into orbit. It was placed in the same orbit as the first two Cosmo-Skymed satellites, launched in 2007. COSMO stood for "Constellation of Small Satellites for Mediterranean basin Observation", with the spacecraft built by Thales Alenia Space Italia for the Italian Space Agency and the Italian Ministry of Defense. The satellites had a launch mass of 1,905 kilograms (4,200 pounds) and featured a payload of a high resolution X-band synthetic aperture radar for environmental and military observation. Each spacecraft could provide 450 images a day, with images for civilian use featuring a minimum resolution of a meter (3.3 feet). Images for military purposes had better resolution.

-- 25 OCT 08 / SHIJIAN 6E & 6F -- A Long March 4B booster was launched from Taiyuan to put two Chinese secret military satellites into orbit, "Shijian 6E" and "Shijian 6F". They were placed in near-polar Sun-synchronous orbit, suggesting they were reconnaissance satellites of some sort.

-- 29 OCT 08 / VENESAT 1 -- A Long March 3B booster was launched from Xichang to put the Venezuelan "Venesat 1" AKA "Simon Bolivar" geostationary comsat into orbit. Venesat 1 was, as its name suggested, the first Venezuelan satellite. It was built by Great Wall Industries of China and was based on the DFH-4 platform. The spacecraft had a launch mass of 5,100 kilograms (11,245 pounds), a payload of 12 C-band / 14 Ku-band transponders, and a design life of 15 years. It was placed in the geostationary slot at 65 degrees West longitude to provide communications services for Venezuela.

* OTHER SPACE NEWS: In September, Google announced an initiative designated "O3b", which means the "other 3 billion". It will be based on a constellation of 16 comsats in medium Earth orbit, providing global access to the internet for users in the developing world. The satellites are being developed by Thales Alenia Space and will have a launch mass of 700 kilograms (1,540 pounds) each, pretty lightweight for a comsat, allowing them to be launched in clusters.

O3b will not provide direct internet access for end users; it will instead provide a "backbone" to link municipal or rural wireless networks to the rest of the world. Those who remember the ambitious "internet in the sky" exercises of the 1990s will remember how they crashed and burned. Hopefully Google is taking past history into consideration. Initial launches are scheduled for 2010 and the network is expected to be operational soon thereafter.

* The British Ministry of Defense -- "UK MOD" -- is now considering setting up the nation's first optical spy satellite network. It appears that the starting point for the concept is the multinational "Disaster Monitoring Constellation (DMC)" spearheaded by Surrey Satellite Technology LTD (SSTL) of the UK, a prominent maker of advanced technology small satellites. The DMC satellite imagery is much too coarse for military reconnaissance, but the "SkySight" proposal submitted to the UK MOD by SSTL in collaboration with EADS Astrium proposes the use of improved derivatives of the DMC satellite with better resolution.

The plan envisions orbital deployment of four satellites, beginning as early as 2010. The first two would have 1 meter (1.1 yard) imaging resolution, with the next two cutting that resolution in half. At present, the UK is dependent on the US for satellite reconnaissance imagery; if SkySight is implemented, it will give the UK a degree of independence and will also allow the British to provide intelligence back to the Pentagon in return.

* Branson's Virgin Galactic company has attracted a fair amount of attention with their "White Knight Two / SpaceShip Two" commercial suborbital system, with initial test flights to take place in 2009. Along with interest from space tourists, the US National Oceanic & Atmospheric Administration (NOAA) has agreed in principle to fit SpaceShip Two with instruments for climate research. NOAA wants to perform high-altitude measurements to obtain calibration for the organization's weather satellites. NASA and the British QinetiQ research organization are also interested in SpaceShip Two for suborbital research flights.

* FUEL FROM ALGAE: Biofuels are sexy these days, with one of the options under examination being the production of biodiesel from algae -- pond scum. An article from IEEE SPECTRUM ("The Power Of Pond Scum" by Willie D. Jones, April 2008) took a close look at Valcent Products of Vancouver, Canada, which is promoting algae as a renewable energy source.

Anyone who's ever had a swimming pool knows that algae grow very easily in water, as long as there's enough sunlight. Algae produce oils that can be processed for biodiesel, with the oil amounting to half the biomass in some species. There is interest in engineering the algae to produce more oil, and also in modifying algae to produce hydrogen for use as a fuel. According to Glen Kertz, president and CEO of Valcent, one hectare of corn planted for biofuel production will produce about 40 liters of biofuel per year, while a hectare planted with oil palms will produce about 1,000 liters of biofuel per year. Kertz says that such production is trivial compared to what algae can do: an algae production plant covering a hectare could yield 48,000 liters per year, and possibly up to three times that much.
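To put Kertz's numbers side by side, here is a small sketch that works out the yield ratios and the land needed for a given output. It uses only the per-hectare figures quoted above; the function names and the one-million-liter target are made up for illustration.

```python
# Annual biofuel yields as quoted by Kertz, in liters per hectare per year.
YIELD_L_PER_HA = {"corn": 40, "oil palm": 1_000, "algae": 48_000}


def yield_ratio(crop_a, crop_b):
    """How many times more fuel crop_a yields per hectare than crop_b."""
    return YIELD_L_PER_HA[crop_a] / YIELD_L_PER_HA[crop_b]


def hectares_needed(target_liters, crop):
    """Land area needed to produce target_liters of biofuel in a year."""
    return target_liters / YIELD_L_PER_HA[crop]


if __name__ == "__main__":
    print(f"Algae vs corn: {yield_ratio('algae', 'corn'):,.0f}x")
    print(f"Algae vs oil palm: {yield_ratio('algae', 'oil palm'):,.0f}x")
    # Hypothetical target: one million liters of biofuel per year.
    for crop in YIELD_L_PER_HA:
        area = hectares_needed(1_000_000, crop)
        print(f"{crop}: {area:,.0f} hectares")
```

On these figures, algae would need about a thousandth of the land that corn does for the same output, which is the whole sales pitch.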

Valcent has a proprietary approach to growing algae referred to as "Vertigro". Growing algae in a large open pond doesn't make very efficient use of space, and yield tends to be affected by temperature and weather. Vertigro, in contrast, takes a "vertical" approach to growing algae. The process begins with a tank full of water seeded with algae; the tank is underground, providing thermal insulation that helps keep the temperature constant. A pump drives the liquid to a holding tank three meters (10 feet) above the floor in a greenhouse, with the fluid then squirted onto a set of crisscross plastic sheets containing bladders, where the algae pick up sunlight. The fluid is collected in a second holding tank at the bottom of the sheets and then returned to the underground tank, to begin the cycle again. Once the algae density reaches a certain threshold, say 1.5 grams per liter, it is harvested. Over a 24-hour period, half the fluid is drained off, the algae is removed, and the water is returned to the underground tank. The pace of the harvesting matches the growth rate of the algae, ensuring that the process is continuous.
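The balance between growth and harvest can be sketched with a toy model. This is only an illustration under stated assumptions, not Valcent's process model: it assumes the algae density doubles each day, which is what draining half the fluid every 24 hours implies if the cycle is to hold steady, and the tank volume is a made-up number. The function name `simulate_harvest` is likewise hypothetical.

```python
def simulate_harvest(days, volume_l, start_density,
                     threshold=1.5, daily_growth=2.0):
    """Step a grow-then-harvest cycle one day at a time.

    volume_l      -- tank volume in liters (hypothetical)
    start_density -- initial algae density in grams per liter
    threshold     -- density at which harvesting kicks in (1.5 g/L above)
    daily_growth  -- assumed growth factor per day (2.0 means doubling)

    Returns (total grams harvested, list of end-of-day densities).
    """
    density = start_density
    harvested_g = 0.0
    history = []
    for _ in range(days):
        density *= daily_growth  # one day of growth
        if density >= threshold:
            # Drain half the fluid, strip out its algae, and return the
            # water, so the overall density drops by half.
            harvested_g += 0.5 * volume_l * density
            density *= 0.5
        history.append(density)
    return harvested_g, history


if __name__ == "__main__":
    total, history = simulate_harvest(days=3, volume_l=1000,
                                      start_density=0.75)
    print(f"Harvested {total:.0f} g over 3 days")
    print("End-of-day densities:", history)
```

With daily doubling, the density simply bounces between half the threshold and the threshold, and the same mass comes out every day. A slower growth rate would mean harvesting less often or draining a smaller fraction.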

Kertz began working on a vertical crop production system for other plants about 15 years ago, when he realized that traditional methods of greenhouse cultivation, involving growing plants on tables, make poor use of greenhouse heating and cooling. He points out that the continuous process is also much more efficient than traditional crop production, in which product can only be harvested every few months. Valcent is now building a demonstrator plant in El Paso, Texas, to determine if the approach is practical and economically feasible. If the demonstration works out well, Valcent will then go on to build a pilot plant.

In a parallel effort, an investigation is being conducted at the Argonne-Northwestern Solar Energy Research (ANSER) Center near Chicago, Illinois, to genetically modify algae to produce hydrogen. Researchers are focusing on an enzyme named hydrogenase, which generates hydrogen, and working on ways to genetically modify algae so that hydrogenase becomes a component of the algae's photosynthetic cycle. If the trick can be done, the genetically modified algae will produce roughly as much hydrogen as they do oxygen.

Researchers at the National Renewable Energy Laboratory in Golden, Colorado, are bullish on algae for renewable energy, claiming that algae bioreactors covering about 40,000 square kilometers (15,400 square miles) -- about a tenth of the state of New Mexico -- could turn out enough biodiesel, bioethanol, and molecular hydrogen to completely replace petroleum as a transport fuel in the USA. That's clearly a lot of pond scum, and the vision may be impractical. However, advocates say that there's every good reason to see a big future for algae, envisioning the day when algae bioreactors are not only set up in desert regions, but also in urban areas, on top of the smokestacks of industrial plants or coal-fired power plants, and in rural areas, with the algae being fed plant or animal wastes to provide remediation. As Kertz points out, algae are already the primary source of oxygen on the planet; they're a huge resource that we could put to more use for our own benefit.

* SHELL SHOCK: Well-equipped modern soldiers tend to be protected by high-tech armor, giving them a higher rate of survival in combat than soldiers of the past. However, as reported in an article in AAAS SCIENCE ("Shell Shock Revisited: Solving The Puzzle Of Blast Trauma" by Yudhijit Bhattacharjee, 25 January 2008), even the best armor protection may not shield soldiers from the shockwave of an explosion, which can have the effect of a blow against the side of the head.

During the brutal Wars of the Yugoslav Succession in the 1990s, Ibolja Cernak, a neurologist at the Military Hospital in Belgrade, kept seeing young soldiers who had no obvious injury but had dizziness, memory deficits, speech problems, and difficulties with decision-making. One 19-year-old who went to a grocery store wept when he found he couldn't figure out how to get back home. Cernak investigated and found that all these soldiers had survived explosions on the battlefield.

Using computed tomography and magnetic resonance imaging, she detected signs of brain damage: evidence of minor bleeding in some patients, enlarged ventricles (which carry cerebrospinal fluid) in others. She found no references to such phenomena in the medical literature. Explosive shock waves were known to damage the lungs and bowels, but not the brain. In collaboration with other researchers in Belgrade, China, and Sweden, Cernak performed animal studies that showed how such shock waves could damage the nervous system. The matter remained academic until about two years ago, when large numbers of American and British soldiers came back from the combat zone with mild "traumatic brain injury (TBI)", caused by the explosions of roadside bombs.

Cernak, now a researcher at Johns Hopkins University in Baltimore, Maryland, felt vindicated. Her pathfinding work has now acquired considerable prominence, in large part due to a $150 million USD research effort on TBI begun by the Pentagon in 2007. However, researchers remain skeptical of Cernak's notions of how such injuries occur. She suspects the shockwave propagates up from the torso through the major blood vessels; the idea is backed up to a degree by the animal studies, but she admits more work is needed. If her suspicion is true, that would have significant consequences, since it would mean that a helmet in itself would not necessarily protect a soldier's brain from an explosive blast.

Cernak's more general assertion that simply being exposed to a blast can cause long-lasting brain damage even if there are no visible injuries has definitely opened a Pandora's box of difficulties, particularly for combat veterans. It implies that some could be suffering from brain damage that went undiagnosed or was misdiagnosed as post-traumatic stress disorder (PTSD). In fact, once the word got out about Cernak's research, veterans started coming forward with tales of evidence of brain damage suffered from combat, sometimes decades ago.

The observation that soldiers who endure heavy bombardment may have erratic behavior is not new. In World Wars I and II, it was known as "shell shock", and it was generally seen as evidence of weak nerve among its victims. Cernak's research suggests there was more to it than that. In 1999, she published the results of a study of 1,300 patients who had suffered penetrating wounds to the body but not the head. More than half had been injured in a blast, with the others wounded by bullets and other projectiles. Many of those injured in a blast complained of vertigo, insomnia, and memory deficits, with more than 36% showing irregular brain activity, as observed by electroencephalograms taken within three days of the injury. Only 12% of those wounded by projectiles showed such activity; a year later, 30% of those injured by blasts still had irregular brain activity, while only 4% of those wounded by projectiles showed such abnormalities.

Her study was not entirely news. A year earlier, in 1998, a study performed by the US military's Veterans Administration (VA) showed that among veterans with PTSD, those with a history of blast exposure were much more likely to have abnormal brain activity, along with cognitive and behavioral problems. The report made little impression.

For decades, US Army researchers working on body armor for the troops had focused on shielding the lungs and bowel from blast shock. Such research was generally shut down in 2003, since the perception was that the problem had been solved. Then, in late 2004, Army doctors began treating casualties from the battle zone in Iraq whose heads had swelled up significantly within a few hours of being caught in a blast. Some had obvious head injuries, but many did not, and the swelling in many of those who had clear head injuries was much greater than would have been expected.

That was only the leading edge. Soon Army doctors began to observe increasing numbers of soldiers returning from the combat zone who couldn't perform simple addition or subtraction, read more than a sentence at a time, or remember what they had for lunch. Most had been dazed or knocked out by a blast but not visibly injured, but they soon began to suffer from headaches and an inability to concentrate. Current estimates suggest that 10% to 20% of all soldiers in combat in Iraq and Afghanistan have suffered some type of TBI. That led to the Department of Defense program on TBI research, which is currently focusing on evaluations of "breachers" -- troops who blast holes in buildings with shoulder-launched weapons -- and construction of models of how blast-induced TBI takes place. Once the phenomenon is understood, then the researchers can go on to prevention and treatment.

As noted, Cernak's notion that the blast damage is transmitted up from the torso into the brain is not universally accepted. That mechanism is something new to researchers; they are familiar with the sort of TBI that occurs from collisions, in which the crash impact shakes the brain violently and causes damage. Some sort of similar "whiplash" effect may take place in the brains of blast victims as well, which means that helmets designed to damp the whiplash may help.

Cernak responds that in the animal experiments, the heads of rats and rabbits were immobilized by steel plates, with the effects of blast exposure much the same in these subjects as they were in animals whose heads were not immobilized. She also points out that collision-induced TBI usually affects the cortex, on the outer regions of the brain, while blast-induced TBI causes memory deficits, a problem associated with the hippocampus, deep inside the brain. If Cernak is right, then the design of body armor is going to have to be rethought.

The US Army is now trying to keep a careful eye on TBI among the troops in the combat zone. In July 2006, the Army Surgeon General passed out a memo to field commanders suggesting that TBI screening be performed for any soldier demonstrating "poor marksmanship, delayed reaction times, decreased ability to concentrate, and inappropriate behavior." Medics now have a checklist of questions to ask troops who have been caught in a blast, asking for example if they were knocked out or couldn't remember what happened before the blast. They will refer a soldier who may have suffered TBI to further treatment, or at least have the soldier take a day off to see if the symptoms go away. The Army would like to have some sort of "biomarker" to determine if a soldier has suffered TBI, but for now that idea is merely science fiction.

The costs of treating TBI in troops returning from the Middle East could be astronomical, in the tens of billions of dollars, but the Pentagon is determined to address the issue and is carefully checking the troops for signs of TBI. The situation gets more complicated with veterans of previous wars, as far back as Vietnam, who were diagnosed with PTSD but may have suffered TBI. Could aged veterans with Alzheimer's disease make claims on the VA on the basis that TBI predisposed them to the affliction?

Cernak adds that she has been getting a fair number of emails and calls from veterans who are interested in her research and telling her about their own problems. She is sympathetic: "Soldiers anywhere are one of the most vulnerable populations in the world. It is a moral obligation to help them."

* NEW PC ADVENTURES (4): Getting a new phone in the wake of my new PC was yet another piece of what amounted to an enormous, expanding puzzle. I knew that getting my new PC's communications system to work in detail was going to be a lot of effort, and it was:

Yet another thing to figure out was a photo editor, which turned out to be a troublesome task. Some time before I jumped from my old PC, I decided that my old copy of Paint Shop Pro 9 photo-editing software was getting a bit dated, and so I decided to buy the latest version of Photoshop since it seems to be the practical standard.

The first thing I noticed about Photoshop after installing it was just how slow it was. The next thing I noticed was how often it ran out of memory. I chalked that up to inexperience, thinking once I understood the functionality I would be able to use it more efficiently. Alas, in the end I didn't have any more functionality useful to me than I did with Paint Shop Pro, and in fact I found some of the "whizzy" features of Photoshop to be a waste of time, literally. I tried to do an "automatic align" on a photo and it simply went out to lunch, then decided to take a siesta.

What really tore it was its intrusiveness. Every time I plugged a USB memory stick into my PC, I got a complicated Photoshop dialog offering to do whatever with the images on the stick, and I couldn't figure out how to turn the dialog off. Worse, it used my web browser for online help, and ended up seriously interfering with Internet Explorer. Having had enough, I uninstalled Photoshop. What was particularly frustrating was that I then looked at the latest version of Paint Shop Pro, only to find reports that it was at least as bloated and clumsy. Old software packages do not fade away: they inflate until they die of their own dead weight.

So I looked around for alternatives. There are actually some free online photo editors, such as FotoFlexer and Picnik, but they required uploading the photos and seemed to be optimized for online photo-sharing services. What I settled on was a freebie called Paint.Net, put together by a group at Washington State University in Pullman with help from MSoft. It turns out to be a fairly capable package while still being lightweight. It was a nuisance to puzzle with photo editors, but at least after doing so for a bit I got to the point where I could jump into one and get up to speed on all my basic functional requirements in a short period of time. They all have much the same core capabilities, but in some cases they implement them differently.

* One of the amusing things about the effort was the occasional conversations with online folks. I spent over a dozen years in online myself and believe me, I deeply sympathize with them, and am perfectly aware that their knowledge is often shallow -- they don't get paid enough to acquire a deep expertise. Of course, I was wise to all the tricks of the game too, and I had to restrain a tendency to bully them: "We'd like to tell you about the service we offer -- "

"I'm really only interested in closing my account, if you please," I replied as politely as I could. I know they're briefed to give sales pitches, but nobody briefed me that I had to cooperate.

"Well, we have a package -- "

"I'm really only interested in closing my account, if you please." I had no doubt I was in control of the conversation, though I suppose it was not surprising given that they were generally very young. For what it was worth, I tried to make sure I thanked them at the end of the conversation. [TO BE CONTINUED]

* CLIMBING MOUNT IMPROBABLE (5): Spider fossils go back 360 million years. There's no way webs leave fossils -- though strands of silk do show up in lumps of amber on occasion -- and there were few or no flying insects in those days anyway, but even the oldest fossils show that spiders had the gland openings for silk production. The fact that there are no spiderweb fossils means that the evolution of such elaborate biostructures -- elaborate in themselves, even more elaborate when the instincts used to build and use them are considered -- is a matter of educated speculation. However, spiderwebs are simple enough to make a very good subject for evolutionary computer simulations, one example being the "Netspinner" program.

Netspinner starts with a rudimentary web, maybe just a few strands, in an environment where simulated flies are flying around. The program then "mutates" the web slightly, generation by generation, using a set of rules to determine the "budgeting" for building a web and to determine the effectiveness of different web configurations for catching flies. Web configurations are scored by these criteria, and those with the best scores are carried on to future generations. Advanced versions of Netspinner allow "crossbreeding" between different web configurations to factor in the effects of sexual recombination. The interesting thing about Netspinner is how quickly the web configurations converge on those that resemble real spider webs.

The beauty of Netspinner is that the programmer does not specify any end goal other than towards the most economical web that is most effective at catching flies, making the program a very nice demonstration of natural selection at work. The programmer does have to define parameters for the program, such as the effectiveness of stickiness for catching flies and the food value of flies, but this is effectively just defining the rules of the "virtual universe" in which the web evolves.
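For readers who want to see the mutate-score-select loop in concrete form, here is a minimal sketch in Python. It is emphatically not Netspinner itself: the "web" is reduced to a hypothetical list of concentric ring radii, the flies are random points in a unit disc, and the constants FLY_VALUE, SILK_COST, and CATCH_DIST are made-up stand-ins for the "rules of the virtual universe". The only things borrowed from the description above are the ideas of a silk budget, scoring by flies caught, carrying the best scorers forward, and optional crossbreeding.

```python
import math
import random

FLY_VALUE = 1.0    # assumed food value of one caught fly
SILK_COST = 0.5    # assumed cost per unit length of silk
CATCH_DIST = 0.03  # assumed: a fly this close to a thread gets stuck


def make_flies(rng, count=200):
    """Radial distances of flies passing uniformly through a unit disc."""
    return [math.sqrt(rng.random()) for _ in range(count)]


def score(web, flies):
    """Food gained from caught flies minus the silk budget spent."""
    caught = sum(1 for f in flies if any(abs(f - r) <= CATCH_DIST for r in web))
    silk = sum(2 * math.pi * r for r in web)
    return caught * FLY_VALUE - silk * SILK_COST


def mutate(web, rng):
    """Nudge one ring, or occasionally add or drop a ring."""
    child = list(web)
    roll = rng.random()
    if roll < 0.2:
        child.append(rng.random())
    elif roll < 0.4 and len(child) > 1:
        child.pop(rng.randrange(len(child)))
    else:
        i = rng.randrange(len(child))
        child[i] = min(1.0, max(0.0, child[i] + rng.gauss(0, 0.05)))
    return sorted(child)


def crossbreed(a, b, rng):
    """Keep each ring of either parent with a 50% chance."""
    child = [r for r in a + b if rng.random() < 0.5]
    return sorted(child) or list(a)  # never return an empty web


def evolve(generations=40, pop_size=30, seed=1):
    """Return the best web found and the best score of each generation."""
    rng = random.Random(seed)
    flies = make_flies(rng)
    # Start from rudimentary webs: a single ring each.
    population = [[rng.random()] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=lambda w: score(w, flies), reverse=True)
        history.append(score(population[0], flies))
        survivors = population[: pop_size // 3]  # best scorers carry on
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            children.append(mutate(crossbreed(a, b, rng), rng))
        population = survivors + children
    population.sort(key=lambda w: score(w, flies), reverse=True)
    return population[0], history


if __name__ == "__main__":
    best, history = evolve()
    print(len(best), "rings; best score went from",
          round(history[0], 1), "to", round(history[-1], 1))
```

Note that nothing in the code says what a good web looks like; the only pressure is the score. Because the top scorers are kept unchanged from one generation to the next and the same set of flies is reused, the best score can never go down in this toy version, which is a simplification that a more realistic simulation would likely drop.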

In practice, spiders have an "ingenuity" in the varieties of webs they construct that mere human computer simulation can't match. However, for those who might want to dismiss Netspinner and its like as mere computer-aided fantasy, the marks of natural selection are all over spiderwebs in nature. It is possible to organize taxonomic "trees" of spiderweb configurations; the interesting thing is that they don't track the taxonomic trees of the spiders themselves. Spiders that are closely related may have very different webs, while spiders that are not closely related may have very similar webs.

This simple observation points to the repeated "reinvention" of web configurations by different lineages of spiders, over and over again. The really interesting prospect is the possibility of eventually understanding and backtracking the specific genetic changes that affected the web-building behavior of spiders. Web construction is entirely hardwired by a genetic program. We don't know much about the genetic basis of such hardwired behaviors just yet; hopefully we will be able to map such structures out in detail and map their evolutionary history before too long. [TO BE CONTINUED]

* GIMMICKS & GADGETS: According to WIRED.com, Peterbilt, manufacturer of tractor-trailer rigs, has demonstrated a fuel-cell auxiliary power unit (APU) for a big rig that truckers will find very attractive. A typical big rig has a cozy sleeper section at the back of the cab, but if the trucker doesn't have access to a power cord, the alternative is to run the vehicle's diesel engine all night to keep the air conditioning and other systems running.