* 22 entries including: evolution & information, parasites, smart bots for computer games, smarter cars, machine vision apps, ADS-B, Bhopal disaster at 25, runaway stars, Future Combat System canceled, geothermal power in Africa's Rift Valley, mainstream money laundering, and science instrument systems for ferries.

* NEWS COMMENTARY FOR APRIL 2009: As reported in THE ECONOMIST, the current government of Iran under President Mahmoud Ahmadinejad has been inclined to trumpet its successes as the guardian of the Islamic Revolution. Tehran has been able to continue to defy the malevolent schemes of America -- the Great Satan -- and America's stooges in the region. Nuclear development continues, and recently the government scored a triumph by performing Iran's first satellite launch, placing SAFIR OMID (AMBASSADOR HOPE) into orbit.

Not all the citizens were so impressed. Following the launch of the satellite, a text message made the rounds of cellphones around the country: FIRST FINDING FROM OMID, THE EARTH IS ROUND! The people do not assume the leadership understands such things. There is a general realization that the Islamic revolution has had its positive accomplishments, but there has been a price in lost economic opportunities, foreign relations with a strong tendency towards the shrill, and constraints on personal freedoms. Many Iranians are sick of the zealous rule of mullahs; it is estimated that there are up to two million Iranians hooked on drugs flowing in from Afghanistan, while Iran's best brains, lacking opportunity at home, have been inclined to go elsewhere for work. The government has done nothing to encourage enthusiasm, with security officials sternly warning that those who send text messages mocking the government on their cellphones do so at their own risk.

National elections are coming up in June. The matter might not seem all that important, since the elected government is entirely subservient to the Council of Guardians, an unelected body of clerics, and to the Supreme Leader, the Ayatollah Ali Khamenei. However, the elected government does have the power to implement policies and administer them, and Ahmadinejad's government has not made itself universally popular in the way it has done so. With the global collapse in oil prices and economic activity, Iran's sources of income have dried up; worse, Ahmadinejad's administration has had little notion of fiscal responsibility, and the government's saber-rattling attitude towards the outside world has ensured that international sanctions place their burden on Iran as well.

Ahmadinejad's revolutionary zeal has led to the purge of reformists from positions of influence and the jailing of those who challenge the party line. Women who walk the streets in dress judged as immodest are once again likely to be harassed and humiliated by the police. While the government's generosity has won it friends with the poor, it has also left the state financially impoverished, with economic mismanagement leading to painful inflation and public scandals. Ahmadinejad's willingness to denounce Iran's enemies outside its borders has actually won him much respect from the citizens, but a rational assessment tends to show that the benefits are far more show than substance. Iran's close neighbors do not think much of Iran's unwillingness to work for peace, its menacing nuclear program, or its schemes to drive American power out of the region -- however clumsy and overbearing the Americans can be, the smaller states see the USA as vastly preferable to an unleashed Iranian wolf.

The choice in the elections will in fact make a big difference. Iran is, like it or not, a regional power; a reformist government could do much to help establish peace and stability in a part of the world where such things are not in plentiful supply, while electoral endorsement of the current regime would, at the very least, be unhelpful. A new reformist government will be too constrained to make fundamental changes in policy, but even a change in tone would help a great deal. There is convergence between rational Iranian goals and those of outsiders, from stability in Iraq and Afghanistan to control of the drug trade. Iran needs foreign assistance and can pay its way with vast reserves of natural gas.

The current leadership is simply paranoid. The suspicion of outsiders has a basis in their malign interference in the past, but the surly attitude has gone beyond any level at which it might be considered constructive, amounting at best to little more than grandstanding to impress the public. Many reformers would like to see a more rational government, one that isn't quite as willing to continually adjust the rules as suits its convenience. However, rivalries among the reformists may lead to a self-defeating split vote, and the state media of course only sings the praises of the government. The BBC has recently launched a Farsi satellite TV channel for Iran, but security officials have warned that Iranians cooperating with the BBC could be arrested.

On the other side of the coin, there are powerful conservatives who are sick of the current government and would prefer a president that came across as a polished professional, not someone who more resembles a beady-eyed crank spouting off on an internet forum. Tehran's highly-regarded mayor, Muhammed Qalibaf, has his backers, who see his distinguished record of service to the state and assertions that he would uphold Iran's principles -- but at a lower price -- as a winning formula. A reformist Iranian government might not be in the cards; but a rational conservative government might be a fair second.

* HITCHING A RIDE: Scientists, with a few prominent exceptions, generally do not have generous access to funding, and they tend to be ingenious in finding ways to get their research done on a budget. As reported in an article in AAAS SCIENCE ("Ferryboxes Begin To Make Waves" by Claire Ainsworth, 12 December 2008), oceanographers have figured out a clever way to hitch a free ride on ferryboats to obtain floods of information about the ocean.

The oceanographers generally stay at home, the work being done by the "Ferrybox" -- an automated data-acquisition system about the size of a washing machine. Dozens of Ferryboxes are now carried by ferries the world over to sample the waters that the vessels cruise over. They measure water variables such as temperature, salinity, oxygen content, and carbon dioxide content, and take biological samples of plankton to measure, for example, chlorophyll content.

Researchers can obtain data from their Ferryboxes in the comfort of their labs using a satellite communications link -- though the volumes of data are such that the satcom link can't handle it all; the researchers generally have to visit a ferry when it comes into port to download the data from a Ferrybox's mass storage system. They need to visit anyway to ensure that the Ferrybox remains in good working order, in particular to make sure its sampling systems haven't become clogged. Except for the periodic visits, however, the Ferrybox operates on its own.

* The idea of obtaining data from a "ship of opportunity (SOOP)" is not new, naturalists having asked ship captains to make observations and collect samples for centuries. Oceanographers have even taken to mining old ship's logs for useful data, scanning concise weather reports back into the 17th century to see what patterns they reveal.

Ferryboxes are a major expansion on this tradition, offering capabilities not imagined by the naturalists of past centuries. Ferries are actually very good observational platforms. They are generally big enough to operate in seas that would keep smaller vessels in port, and they travel fixed routes over fairly short voyages, permitting predictable and regular observations. Oceanographic vessels, while wide-ranging, cannot provide this level of consistent coverage for specific regions, and even if they could, it would be very expensive to do so. Ferries make their journeys with funding from their passengers, with their Ferryboxes just going along for the ride. The data in the Ferryboxes is very useful, in particular helping to understand the absorption of carbon dioxide by the seas -- a highly relevant factor in global warming.

The effort began in the 1990s, when European oceanographers began to think that the 800 or so ferries that cruise off the shores of Europe might make surprisingly effective oceanographic survey platforms. Researchers at the Finnish Institute Of Marine Research pioneered such usage in the early 1990s, placing sensors on ferries traveling over the Baltic and the Gulf of Finland to help track algae concentrations. The research effort went well and led to an international collaboration to develop the Ferrybox. The initial operational phase of the Ferrybox effort ran from 2002 to 2005, with eight European ferry lines collecting floods of interesting and useful data.

The concept is now starting to catch on all over the world. The first generation of Ferryboxes were essentially hand-built, but now scientific instrument manufacturers are selling modular gear specifically designed for integration into Ferryboxes -- with new capabilities being continually added. Work is also underway to reduce the amount of maintenance required to keep a Ferrybox in operation, for example adding some capability for the Ferrybox to clean out fouling. Nobody envisions a Ferrybox that could run for a year or longer without maintenance, but reducing the number of service visits definitely improves the bottom line.

Ferry companies seem to be generally happy to help, and in fact one ferry line agreeably modified the design of their newest ferries to accommodate a small lab room. A merchant shipping company that heard about Ferryboxes came forward to not merely volunteer use of Ferryboxes on their merchantmen, but to provide research funding. Oceanographers working with Ferryboxes have become evangelical, one saying: "There are a lot of ships out there. We want to spread the word."

* SHADY PRACTICES: Money laundering -- the practice of disguising the origins of money obtained from illegal activities -- is generally associated with Swiss banks or dodgy financial institutions in the Cayman Islands. As reported by an article in THE ECONOMIST ("Haven Hypocrisy", 28 March 2009), Jason Sharman, a professor of political science at Griffith University in Australia, discovered that the problem is much closer to home.

Armed with no more than $10,000 USD, Google, and ads from international business magazines, he was able to set up an elaborate network of anonymous shell companies and secret bank accounts all around the world. The system that Sharman uncovered was not supported by officials refusing to tell what they know so much as it was the officials knowing nothing, thanks to lax disclosure rules that wouldn't fly in Jersey or Switzerland, but are perfectly fine in the USA. That approach is even more convenient for those with dirty money, since there's no way to squeeze facts out of officials who don't have them. All fraudsters and gangsters have to do is create anonymous companies, which can then open bank accounts and move assets around under the radar.

Some American states seem proud of their weak disclosure rules, with Nevada being one of the most easygoing. A company can be set up there in an afternoon with minimal disclosure, and the state does not routinely share information about incorporations with the Federal government. There's obviously a demand for such a service, since the state incorporates about 80,000 new firms a year and now has more than 400,000. With about 2.6 million Nevadans, that's about one company per six citizens. The state does not seem particularly eager to tighten up regulations, though the US Internal Revenue Service estimates that from half to 90% of the companies incorporated in Nevada are in breach of Federal tax laws elsewhere.

In 2005, the US Federal government published a study that identified the states of Nevada, Wyoming, and Delaware as tax havens every bit as convenient as well-known offshore financial centers. In fact, due to easy-going tax laws in the USA, they may well be more convenient. Senator Carl Levin has recently introduced legislation to demand greater disclosure, saying: "For too long, criminals have misused US corporations to hide illicit activity, including money laundering and tax fraud. It doesn't make sense that less information is required to form a US corporation than to obtain a driver's license." However, similar legislation introduced to Congress in the past has been quickly sidetracked.

Sharman found that other countries were almost as lax: he attempted to create shell companies and bank accounts 45 times across the world, and succeeded in 17 of those attempts. Thirteen of the countries that let him through were OECD nations; he was able to set up a dummy firm in the UK in less than an hour without providing any real identification. In some other countries, he was at least asked to fax a copy of his driver's license; in contrast, in Switzerland and Bermuda he was told to provide notarized copies of his birth certificate. Sharman concluded: "In practice, OECD countries have much laxer regulation of shell corporations than classic tax havens. And the US is the worst on this score, worse than Liechtenstein and worse than Somalia."

* SMARTER CARS (3): Video cameras are not only being used in automotive collision-detection systems, they are also being used in systems that provide a warning when a vehicle leaves its lane. Such systems track lane markers and notice when the vehicle crosses over them. Nissan has introduced a "lane departure" system and is coming out with an improved version. The system prevents drowsy or inattentive drivers from drifting out of a lane, providing asymmetric braking to steer the car back into the lane -- with the driver alerted by, say, shaking of the steering wheel. Using the turn signal overrides the system.

"Blind-spot detection" systems are now also on the market. They use ultrasonic or radar sensors to check for vehicles in a car's "blind spots", most of them providing an alert by blinking warning lights in the side-view mirrors to indicate somebody's there. Manufacturers expect that such systems will prove particularly popular for trucks, since they have very large blind spots.

"Lane-change assistance" systems are an extension of blind-spot technology. The sensors scan about 50 meters (165 feet) behind and indicate that a car is rapidly gaining in the other lane. "Backover protection" systems determine if there is an obstruction to the rear when the car is being backed up; the primary focus of this technology is the rare but horrific case of drivers backing up over small unseen children. Over the longer run, automotive engineers foresee systems that detect pedestrians and animals, even at night, and traffic-sign recognition systems that alert drivers about stop signs and red lights.

The sensors currently available are already being used in parking-assistance systems. Parallel parking can be tricky and is a common source of scrapes and other minor accidents; an automated parallel parking system was offered as an option on the Toyota Prius in 2003 and is now being offered by other manufacturers. The driver just drives forward of the parking space, engages parking assist, and the car parks itself. It requires electronic steering to work, but electronic steering is quickly becoming standard.

* The main problem with such an array of safety systems is expense. An alternate approach envisions low-bandwidth wireless communications with other cars and traffic signals. Such "vehicle-to-vehicle (V2V)" and "vehicle-to-infrastructure (V2I)" systems would be able to "see" beyond the visible horizon, for example receiving a signal that a car is approaching from beyond a blind corner.

The system would be comparatively low tech, consisting of a wireless unit coupled to a Global Positioning System (GPS) receiver and modest processing power. The system would not require a full infrastructure at the outset -- even 10% coverage would provide benefits. However, to be workable the scheme demands common system standards, and hammering out an industry standard can be troublesome. Setting up a consistent V2I road infrastructure could be expensive, with some cost estimates of a V2I network for the entire USA running to a trillion USD. However, the world's major car manufacturers are very interested in the concept.

Ultimately, will cars be able to drive themselves? Military research efforts have come up with prototypes that can do so, at least in relatively benign traffic conditions. Fully-autonomous robot cars are clearly over the technological horizon, but V2I networks might make them comparatively easy to achieve, though at first drivers would only make use of them for local trips. At present, few would trust their lives to a robot car -- but the current system is clearly unsafe, and if robot cars could be clearly shown to be safer, enthusiasm would grow. It may seem like a mad idea now, but there may come a time when the era of hordes of cars driving around under manual control may seem primitive and mad as well. [END OF SERIES]

* THE PARASITES (1): Evolution is opportunistic, and one of the straightforward career opportunities for an organism is "parasitism", making a living off another organism. Carl Zimmer's 2000 book PARASITE REX surveyed the parasites and is worth outlining here.

* We have all been infected by bacteria, which are often if not necessarily parasitic, and viruses, which are always parasitic -- viruses can't reproduce without taking over a host cell, which makes them "obligate" parasites. However, in scientific and medical circles, bacteria and viruses are not technically referred to as "parasites", that term being reserved for eukaryotes -- creatures like ourselves with large cells containing a nucleus and distinct cellular organelles, very unlike bacterial cells and viruses -- that have adopted a parasitic lifestyle.

That may seem like an arbitrary distinction, but the bacteria, viruses, and eukaryotic parasites not only have distinct biologies, they have generally different pathologies -- for example, bacterial and viral infections tend to be one-shot affairs, with a victim getting sick and either dying or getting well again, while parasitic infections tend to be chronic. It makes sense to segregate the studies of the three different classes of pathogens because they require different mindsets and approaches. In addition, the eukaryotic parasites cover a bewildering range of forms, and it is convenient to lump them under a single term. These parasites include protozoans -- single-celled animals -- and multicellular organisms such as fungi, worms, and biting insects.

"Parasitologists" -- biologists who work in "parasitology", the study of parasites -- divide their subjects into two rough classes: "ectoparasites", which live outside the host body, and "endoparasites", which live inside the host body. Well-known parasites include:

This is hardly a complete list; parasitism can take many forms. There are small clams known as "fingernail clams" that will parasitize fish for a brief part of their life-cycle. There are plant parasites such as mistletoe and strangler figs, parasitic fish like the lamprey, and even parasitic birds, particularly "nest parasites" such as the cuckoo.

The effects of parasites range from inconsequential -- we can live with some of them without inconvenience or even any idea they are present -- to lethal, and parasitic diseases present serious global health threats. To deal with the challenge requires understanding the threat, which ironically leads to an appreciation of some of the elegant adaptations these disagreeable organisms have acquired to maintain their lifestyles. [TO BE CONTINUED]

* GIMMICKS & GADGETS: An article in BUSINESS WEEK pointed out that one of the silver linings from the economic slowdown for the public was the sudden drop of fuel prices from painful highs, but this silver lining has an edge: oil companies no longer have funds to invest in production, and when the economy starts to pick up again, fuel prices may skyrocket.

However, during the boom times the oil companies were extravagant in their ways, and now they are learning the benefits of efficiency and frugality. That means such straightforward measures as maintenance and fine-tuning at oil refineries, but Royal Dutch Shell has gone much further, setting up small automated production platforms in the North Sea that cost about a third of conventional platforms. The platforms are monitored and controlled from land over satellite links and their power is obtained from solar panels and wind turbines. One has to think that using green energy to pump oil deserves at least an honorable mention for irony.

* It was once commented by a woman that "all men would still like to have a train set". As reported by BBC.com, Frederick & Gerrit Braun, twin brothers in Hamburg, Germany, have demonstrated this with a vengeance with their "Miniatur Wunderland (MW)". This is no basement train layout: at the present time it covers 1,150 square meters (12,380 square feet), has almost 10 kilometers (6 miles) of track, along with 700 trains pulling 10,000 cars, plus 2,800 buildings and 160,000 figures.

It has an ocean on which ships cruise, freeways with trucks zipping down the lanes, an airport where jetliners taxi down the runways. There are stadiums full of spectators, people at performances of plays, even a rescue team recovering a drowned person from a stream. It features environments from the world over, with the trains roaring past Mount Rushmore and the Grand Canyon; Las Vegas is very pretty at night, when the room lights are dimmed and the buildings light up with arrays of LEDs. The MW cost $16 million USD and is operated by a staff of 160, with a central control room featuring a wall of video displays. Work on the MW began in 2000 and is continuing, with completion scheduled for 2014.

* I just got into the GADGET LAB blog on WIRED.com, which is a great resource for anyone who likes gadgets, even the ridiculous ones:

Articles come in on GADGET LAB in a fast and furious fashion. Even the most gadget-happy reader can find it wearying after a while, but they can still come up with something completely different to keep people reading.

The WIRED "GeekDad" blog is also worth a look -- it's a review of kid's stuff for the geekily-oriented dad, with some of the entries just being stuff for geeky dads themselves. One item opened the door to a dimension I didn't know existed: unusual icecube trays, capable of producing icecubes in the shape of letters -- including a "pi" tray for the math-inclined -- plus strawberries, Lego bricks, Tetris pieces, and even Space Invaders (as "Ice Invaders"). If I saw anything like that in a store, I'd sure give it a looking-over, but I probably wouldn't buy it.

* GEOTHERMAL POWER FOR THE RIFT VALLEY: Geothermal power has generally been seen as a somewhat marginal renewable energy source, a poor cousin to solar and wind. It is not easy to implement, and it has traditionally been dependent on finding locales where the geological activity permits it -- volcanic Iceland is one of the biggest users. However, in such places it is a very attractive power source, since it runs 24:7:365 for decades and it has no real carbon footprint.

As discussed in an article in THE ECONOMIST ("Continental Rift", 18 December 2008), the Great Rift Valley of Africa, which stretches from the northern end of the Red Sea down to Mozambique, is an ideal locale for extracting geothermal power. East Africa needs power and has no substantial deposits of fossil fuels. The United Nations Environment Program, with its world headquarters in Nairobi, Kenya, estimates the geothermal potential of the Rift Valley at 14,000 megawatts (MW), but only 200 MW is being extracted at the present time. Geothermal enthusiasts claim the Rift Valley could provide from 10% to 25% of Africa's power by 2030. Currently, electricity tends to be provided by hydropower, but dams can be environmentally troublesome and have an unfortunate tendency to go dry in droughts.

There are problems with geothermal, too. It's not all that labor-intensive and doesn't make for a good jobs program, which turns off African politicians. Plants can disrupt the lives of rural folk, and can also leak radon and other unpleasant emissions. Setting up a geothermal plant is expensive, likely more expensive than a coal plant. However, international agreements on carbon emissions may help Africans get cheap loans and provide the expertise needed to set up the plants.

Roughly 18 geothermal plant sites have been identified in the Ethiopian part of the Rift Valley and in the Danakil depression, on the border with Eritrea and Djibouti. The Ethiopians believe they could obtain 440 MW of power from geothermal, adding to the country's current capacity of 790 MW. Djibouti has signed a deal with Iceland, which energetically exports its geothermal expertise, to build a plant near Lake Assal, the continent's lowest point. The plant will also condense steam for public water use.

Kenya is an African pioneer in geothermal power, with its Olkaria plant outside Naivasha currently producing 158 MW. The government would like to raise the amount of geothermal power produced in the country to 576 MW within a decade. Kenya's current national power generating capacity is 1,200 MW, and all of that is in use -- power cuts are increasingly common, and diesel generators are being installed to try to make ends meet. Kenyans have a big incentive to like geothermal.

* FCS BITES THE DUST: As reported in the WIRED.com / DANGER ROOM blog, US Defense Secretary Robert Gates has finally decided to pull the plug on the US Army's $90 billion USD "Future Combat System (FCS)" program, which was an effort to build a comprehensive constellation of weapons systems, from armor to robot drones, for the Army's warfighters.

Gates told an audience at the Army War College that FCS weapons development hadn't just gone off the rails -- producing a new lighter tank and infantry combat vehicle that just didn't meet specs -- but that the whole program was misconceived. As Gates put it, "my experience in government is, when you want to change something all at once and create a whole new thing, you usually end up with an expensive disaster on your hands. Maybe Google can do something revolutionary. But we don't have the ability to do that."

Army brass had a lot riding on the FCS, but Gates assured the audience that components of the FCS program that seemed clearly useful, particularly the battlefield "Warfighter Information Network - Tactical (WIN-T)" and its associated robotic "eyes" and "ears", would continue, and that the money allocated for FCS wasn't going to disappear from the Army's budget.

In general, Gates is trying to refocus the Pentagon from its lingering Cold War mindset to the era of dirty little wars. One casualty so far is the Lockheed-Martin F-22A Raptor, an ultrasophisticated and extremely expensive "fifth generation" fighter designed to counter a next-generation Soviet threat aircraft that never materialized. The F-22A will remain in Air Force service, but once the batch of aircraft currently in the pipeline is delivered, that will be the end of it, with only 187 obtained in all. The cheaper F-35 Joint Strike Fighter, designed to pound the Black Hats on the battlefield, is now the Air Force mount of choice.

Similarly, Gates plans to revise the Pentagon's missile-defense effort, for example putting the brakes on the Air Force's "Airborne Laser", a flying laser system built into a Boeing 747 jetliner designed to hit missiles as they take off -- though missile defense remains on the burner. Winners are the Navy's "Littoral Combat Ship (LCS)" program, intended to provide lighter high-tech warships for shallow-water operations, and in particular the array of drones that have proven so valuable in the military's current battle theaters.

Big weapon systems programs have their backers, making them hard to kill, and even when they've been killed they don't necessarily stay dead. Gates wants to hold the line, however. As he said at a Pentagon press conference: "Some will say I am too focused on the wars we are in and not enough on future threats. But it is important to remember that every defense dollar spent to over-insure against a remote or diminishing risk -- or, in effect, to 'run up the score' in a capability where the United States is already dominant -- is a dollar not available to take care of our people, reset the force, win the wars we are in, and improve capabilities in areas where we are underinvested and potentially vulnerable. That is a risk I will not take."

He commented elsewhere that his decisions "did not leave smiles on the face of different services", but he made it clear that, once the brass had their say, they should fall in line: "I don't want to see any guerrilla warfare on this. We have a chain of command." Critics outside the Defense Department have descended on Gates, claiming that he is really pushing unilateral disarmament in the form of restructuring, but he has also made it clear that he wants more money for the Pentagon and that the money should keep flowing in even if, or when, the military has withdrawn from the current round of combat operations.

As Gates put it: "We cannot disarm as we begin to see these conflicts we're in today wind down." He is not ignoring other potential threats, saying that "we know other nations [read 'China'] are working on ways to thwart the reach and striking power of the US battle fleet -- whether by producing stealthy submarines in quantity or developing anti-ship missiles with increasing range and accuracy. We ignore these developments at our peril." Still, that chain of command doesn't stop at Gates; he takes his marching orders from President Obama, and though Gates appears to have the president's confidence, the vision of the man at the top is the one that's going to be implemented.

* SMARTER CARS (2): One of the more interesting directions of work in automotive safety technology is the development of systems that warn of, and help avoid, collisions with vehicles or other road hazards ahead.

"Forward-collision warning" systems are based on adaptive cruise-control devices that leverage off radar data to maintain a preset distance or time gap behind the vehicle in front. Anyone who's ever used a traditional cruise-control system knows it's fine for cross-country driving, but generally irrelevant for city traffic; however, newer "stop & go" adaptive cruise controls make riding in slow-moving traffic considerably easier, because they are able to follow the car ahead precisely as it halts and starts up again.

Adding in a camera and sophisticated software-control algorithms makes for a basic collision warning system. Radars are good at detection and ranging, but they are poor at classifying a target; a video camera is good at identifying things. Together, the two systems can determine with confidence that a dangerous frontal collision is likely and take corrective action.

In typical installations, a long-range microwave radar operating at 77 gigahertz (GHz) scans a few hundred meters ahead, while a short-range radar operating at 24 GHz keeps track tens of meters ahead. Wide-angle video cameras can recognize objects about fifty meters ahead. When the integrated warning system determines that a collision is possible, it jogs the brake pedal, flashes a warning light, and prepares the brake system for instantaneous activation by precharging the hydraulic brake lines. If the driver does nothing and a collision becomes inevitable, the system then slows the car as much as possible to reduce the damage. Such schemes point the way to improved systems that automatically hit the brakes or steer cars to avoid collisions completely. However, designing such an advanced system that is fail-safe under all reasonable driving conditions is obviously troublesome, and nobody expects to see it for at least a decade.
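The staged response described above can be sketched in a few lines of Python. This is only a toy illustration, not any manufacturer's actual logic: the `Track` record, the two time-to-collision thresholds, and the action names are all assumptions made up for the example. The radar is assumed to supply range and closing speed, and the camera is assumed to supply a yes-or-no classification of the target as an obstacle.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
WARN_TTC_S = 2.5    # warn the driver when a collision is this close in time
BRAKE_TTC_S = 0.8   # treat a collision as unavoidable below this

@dataclass
class Track:
    range_m: float            # distance to the object ahead (from radar)
    closing_speed_mps: float  # positive when the gap is shrinking (from radar)
    is_obstacle: bool         # camera's classification of the object

def time_to_collision(track: Track) -> float:
    """Seconds until impact if nothing changes; infinite if the gap is not closing."""
    if track.closing_speed_mps <= 0:
        return float("inf")
    return track.range_m / track.closing_speed_mps

def respond(track: Track, driver_reacted: bool) -> str:
    """Pick an action: warn and precharge the brakes first, brake only as a last resort."""
    if not track.is_obstacle:
        return "none"
    ttc = time_to_collision(track)
    if ttc > WARN_TTC_S:
        return "none"
    if ttc > BRAKE_TTC_S or driver_reacted:
        return "warn_and_precharge"
    return "mitigation_braking"
```

For example, `respond(Track(20.0, 10.0, True), driver_reacted=False)` gives a time to collision of two seconds and returns `"warn_and_precharge"`. The hard part in a real system is not this decision table but getting trustworthy inputs to it under all driving conditions.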

Volvo took a shot at automated braking in its XC60 model with its "City Safety" feature -- a low-speed collision avoidance and mitigation system that operates below 32 KPH (20 MPH). It is intended to avoid urban "fender bender" accidents, which account for about three-quarters of all car collisions. Fender benders are usually caused by distracted drivers; they rarely cause fatalities, but often can result in whiplash. City Safety has an infrared laser range finder that detects objects a few car lengths ahead. The system determines the approach speed to an obstacle and, if necessary, primes the emergency brake to respond quickly. If the driver doesn't respond, the system will brake automatically; City Safety does not alert the driver at that time since the result is likely to be confusion; the driver is belatedly notified after the crisis has passed.

Volvo engineers say that all-speed autonomous braking will be very difficult, since the number of different situations that can be encountered is very large, requiring a very smart system that won't be more likely to kill drivers than save them when a corner case occurs. Automotive manufacturers have an obviously very heavy burden of reliability and so they tend to be conservative, testing new systems intensively to make sure they work as specified. [TO BE CONTINUED]

* EVOLUTION & INFORMATION (7): Underlying the information theory arguments of the critics is an insistence that modern evolutionary theory (MET) is inadequate to explain what its advocates claim it can explain. In broad terms, as one critic insisted, MET "neither anticipates nor remembers. It gives no directions and it makes no choices."

It is true it doesn't anticipate, which is why it comes up with things like flightless and defenseless dodo birds; and it is at least true to an extent that it gives no directions, except for improved fitness to the environment. That it makes no choices is bogus, since it has the ultimate choice, between survival and extinction. If "the creation of information demands intelligence", the single-minded Grim Reaper provides all the "intelligence" necessary to do the job -- making a brutal, simple, clear, and decisive ON or OFF judgement to sort out what prospers and what dies out. Natural selection can say YES or NO to sort out a ONE from a ZERO and can, over time, accumulate as much "information" as anyone would like.

It is also bogus to claim it cannot remember. It clearly does, using the mechanism of heredity as embodied in the "memory tape" of the genome, and in fact if there was no heredity, no "memory", there would be no evolution. The critics still demand to know where the information -- the "functional information", the "instructions" -- acquired by the "memory" embodied in the genome comes from. Evolutionary scientist Richard Dawkins provided an eloquent answer:

BEGIN QUOTE:

If natural selection feeds information into gene pools, what is the information about? It is about how to survive. Strictly it is about how to survive and reproduce, in the conditions that prevailed when previous generations were alive. To the extent that present day conditions are different from ancestral conditions, the ancestral genetic advice will be wrong. In extreme cases, the species may then go extinct.

To the extent that conditions for the present generation are not too different from conditions for past generations, the information fed into present-day genomes from past generations is helpful information. Information from the ancestral past can be seen as a manual for surviving in the present: a family bible of ancestral "advice" on how to survive today. We need only a little poetic license to say that the information fed into modern genomes by natural selection is actually information about ancient environments in which ancestors survived.

END QUOTE

The instructions in the genome were acquired the hard way, through sheer trial-and-error experience. A jumble of "raw information" -- indiscriminately good, bad, or indifferent -- was inserted by mutations, with the "nonfunctional information" tossed out by the Grim Reaper and the "functional information" left behind to act as instructions. The instructions encode the past experience of an organism's ancestors in the form of adaptions, with no foresight to the future. This is essentially just the definition of evolution by natural selection. Where's the problem? What fantasy "conservation law" renders it impossible?

Information theory poses no real challenge to MET. Despite this, the critics press ahead regardless, hoping to muddy the waters using information-theory arguments generally characterized by fallacies:

In the end, the only result is to contrive a system rigged to crank out the desired answer. These exercises have never stood up to peer review, and in fact those cooking them up rarely express much interest in criticism when they even bother to acknowledge it.

* Exactly who originally came up with the idea of using information theory to attack MET is not entirely clear. However, the basic reasoning behind the argument is very old, tracing back to the work of the 18th century British "natural philosopher" William Paley. Paley reasoned that the elaborations of nature indicated that they had been formed by an intelligence. He famously said that if one had found a watch, its elaboration would imply a watchmaker, and so if one finds, say, a butterfly, its greater elaboration would imply a greater "watchmaker" -- a Designer.

Paley appears to have been a thoughtful and conscientious man, and it is a bit of a pity that he has become so well known for what has been recognized as the "Paley fallacy". His fallacy was not in suggesting that the elaborations of nature might imply a Designer; on the face of it, they might. His fallacy was that he was simply reasoning by analogy, believing that since humans design elaborate mechanical objects, then elaborate natural objects had to have been Designed by some super-human intelligence. The argument actually does not address the question of whether elaborate natural objects could have arisen by some natural process; it simply leaps to an answer and proclaims they had to have been Designed by analogy with human artifice.

While the question of whether the laws of nature were Designed or not is a philosophical one that the sciences cannot address -- and have no need to, the laws of nature work the same either way -- MET flatly rejects the idea that the Earth's organisms were specifically designed, stating instead that they are opportunistic adaptations produced by natural processes. MET's critics find this an outrageous idea, claiming "an unmade bed cannot make itself", but all they really can do in the end is fall back on the Paley fallacy, leaping to the answer that they had to have been Designed.

Paley's reasoning does have a certain intuitive appeal, but it tends to lose its appeal on closer examination. Paley's thinking was very mechanistic, comparing the workings of machinery to the workings of organisms, despite the fact that there is so little resemblance between the two. Machines, unlike organisms, do not grow or reproduce; no two organisms of the same species are as alike as two machines from the same production batch; and so on. The analogy is like comparing a Barbie doll to a real woman.

Worse, although Paley identified organisms with machines, his line of reasoning could provide no details whatsoever of the Designer, such as who the Designer was -- any Designer could be proposed as anyone was inclined to -- what it did, when it did it, and so on. In the absence of any such details, from a scientific point of view, it was hardly an explanation of anything, proposing as a "mechanism" a solution with no machinery, like explaining the operation of an automobile by invoking unseen gremlins. Paley used a watch for his example because it was the 18th-century concept of high technology. The "Law of Conservation of Information" is nothing more than the same reasoning, updated to use computer programming as a model instead of a watch. It might as well be phrased as: "Only a Watchmaker can produce information."

* The information theory argument against MET remains very popular, partly deriving its strength from the intuitive but misleading appeal of the Paley fallacy; partly from the fact that everyone has something of an intuitive concept of the idea of "information", and it's very easy to come up with plausible-sounding arguments. The fact that such arguments have zero basis in either the laws of physics or mainstream information theory can be easily concealed in a fog of sophisticated-sounding bafflegab.

The use of information theory in biology is actually of little interest to all but a handful of specialists. Personally, I see the subject as a trap. I have no use for information theory beyond the fact that critics of MET like to misuse it, and no good reason to go through the time and effort to acquire expertise in the subject when I have better things to do.

I just wrote up these notes to commit what I knew to record, and they clearly leave something to be desired. Sometimes I think it would be nice for information theory pros to go over them -- but the odds are they would tell me I needed to go buy an expensive textbook, drop other things on my plate, and spend a serious chunk of time trying to wade through it. No way. This will just have to do. [END OF SERIES]

* SCIENCE NOTES: An interesting article on BIOLOGY NEWS NET discussed how biochemists are now constructing synthetic proteins not found in nature. Natural proteins are evolved structures that may serve elaborate roles, and to no surprise they are elaborate, consisting of long chains or sets of long chains of amino acids that are folded into complicated three-dimensional structures. Understanding their structure and precise function is challenging. Proteins are also often used in medicine and industry, but the natural proteins used in such applications may be expensive to produce.

A team of biochemists from the University of Pennsylvania School of Medicine has synthesized an entirely new protein. The protein carries oxygen, much as does the hemoglobin molecule, but the synthetic is simpler. It may prove useful in manufacturing artificial blood for use in combat or the emergency room. Dr. Christopher C. Moser, one of the senior researchers involved in the effort, explains: "Our aim is to design new proteins from principles we discover studying natural proteins. For example, we found that natural proteins are complex and fragile and when we make new proteins we want them to be simple and robust. That's why we're not re-engineering a natural protein, but making one from scratch."

Traditionally, protein engineering has focused on modification of natural proteins. The Penn researchers felt that it might have advantages to work their way up from general principles, not only for potential applications but also to demonstrate a command of protein structure and function. Moser's colleague Dr. P. Leslie Dutton believes that biochemists will be able to design much more effective proteins than those provided by nature: "This exercise is like making a bus. First you need an engine, and we've produced an engine. Now we can add other things on to it. Using the bound oxygen to do chemistry will be like adding the wheels. Our approach to building a simple protein from scratch allows us to add on, without getting more and more complicated."

* LIVESCIENCE.COM reports that last year, astronomers were able to obtain samples of an asteroid without leaving the Earth. On 6 October 2008, the automated Catalina Sky Survey telescope at Mount Lemmon, Arizona, spotted an asteroid on a collision course with the Earth. Nineteen hours after the sighting, the asteroid exploded in a fireball in the stratosphere over the Nubian Desert of northern Sudan. Peter Jenniskens, a meteor astronomer with the Carl Sagan Center at the SETI Institute, thought it might be possible to find fragments of the bolide. Working with researchers and students of the University of Khartoum, Jenniskens followed the asteroid's trajectory plot and found dozens of fragments strewn over a 29 kilometer (18 mile) stretch of desert.

To be sure, there are fragments of asteroids scattered all over the surface of the Earth, but this was the first time fragments had been recovered from an asteroid that had been observed in space. Asteroids in space are covered with space dust, and so observations of them as they fly past don't necessarily tell much about their internal composition. Obtaining the fragments allowed cross-referencing with the space observations.

The asteroid, designated "2008 TC3", was from 2 to 5 meters (7 to 16 feet) in diameter. It was what is known as an "F-class" asteroid, a type featuring very dark, porous, fragile material with a high carbon content, which suggests such bodies were "cooked" to high temperatures at some time in their past. Astronomers are looking forward to obtaining fragments from future impacts, or at least they are as long as the asteroids remain fairly small. As the saying goes: be careful what you ask for -- you might just get it.

* In science news of a sort, MSNBC reported that Willie, a Quaker parrot (AKA Monk parakeet though they're really just small parrots) down the road from me in Denver, Colorado, was granted a lifesaver award by the local Red Cross chapter after he saved the life of a toddler. His owner, Megan Howard, was babysitting a little girl; Howard left the room and the little girl started to choke on her breakfast.

Willie got excited, flapping his wings and screeching: "Mama, baby!" -- repeatedly. Howard dashed back into the room to find the little girl turning blue; the Heimlich maneuver cleared the obstruction and the toddler was able to breathe again. There is a tendency to read more cognizance into animal actions than may actually be there, but parrots are very intelligent and some breeds, such as the African grey and Quaker, can speak words in context and even put together simple sentence constructs.

I did some poking around on Wikipedia, but alas the entry does not explain how the Quaker parrot got its name. However, it is an interesting beast -- it is a gregarious species, congregating in flocks, and unlike most other parrots, it builds a stick nest instead of finding holes in trees or the like. It is also fairly tolerant of cold weather, adapts easily to urban environments, and feral colonies have established themselves in the UK and over a half-dozen US states. There's a ton of pix of Quaker parrots on Flickr, and the majority are of wild birds living in Brooklyn. Somehow I visualize a Quaker from the NYC area saying: "Hiya! My name's Boit! I'm from dah Bronx!"

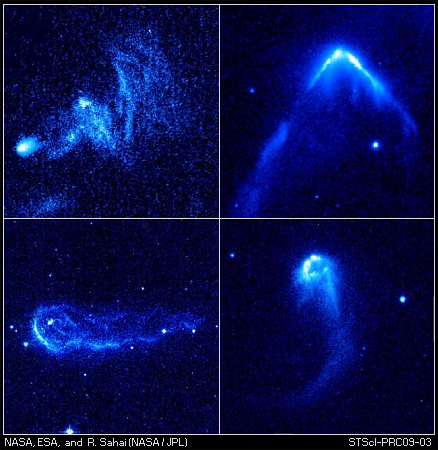

* RUNAWAY STARS: An article from LIVESCIENCE.com ("Runaway Stars Go Ballistic" by Andrea Thompson) commented on fourteen young stars discovered by the US National Aeronautics & Space Administration's (NASA) Hubble Space Telescope that are racing through clouds of interstellar gas, creating "bow shocks" and leaving a trail behind them.

Says Hubble research scientist and team leader Raghvendra Sahai of NASA's Jet Propulsion Laboratory in Pasadena, California: "We think we have found a new class of bright, high-velocity stellar interlopers. Finding these stars is a complete surprise because we were not looking for them. When I first saw the images, I said: 'Wow. This is like a bullet speeding through the interstellar medium.'"

The bow shocks are formed when the stars' powerful stellar winds -- streams of neutral or charged gas that flow from the stars -- slam into the surrounding dense gas, like a speeding boat pushing through water on a lake. The strong stellar winds suggest the stars are young, since only very young or very old stars produce powerful winds, and their youth is suggested by their association with dense interstellar clouds, which are sites of star-forming activity. The stars appear to be medium-sized, about eight times the mass of the Sun.

The bow shocks are huge, one to two orders of magnitude wider than our Solar System. The stars appear to be moving at roughly 180,000 KPH (112,000 MPH) through the gas medium they are plowing through, which is about five times faster than typical young stars. Sahai and his team suspect the young stars are runaways that were tossed out of the galactic clusters they were born in. The runaways might have been components of binary systems with a second star that went supernova, or the binaries might have been involved in collisions with another star or binary. Given their velocity and assumptions of their age, they have traveled about 160 light-years.
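As a rough consistency check, the quoted speed and distance can be turned into a flight time. The sketch below is my own arithmetic, not a figure from the article; it assumes a constant speed, a standard value for the light-year, and a 365.25-day year.

```python
# Back-of-envelope check: how long does it take to cover 160 light-years
# at 180,000 KPH? Constant speed is assumed; constants are standard values.

KM_PER_LIGHT_YEAR = 9.4607e12      # kilometers in one light-year
HOURS_PER_YEAR = 24 * 365.25       # hours in a Julian year


def travel_time_years(distance_ly: float, speed_kph: float) -> float:
    """Years needed to cover distance_ly light-years at speed_kph."""
    distance_km = distance_ly * KM_PER_LIGHT_YEAR
    return distance_km / speed_kph / HOURS_PER_YEAR


years = travel_time_years(distance_ly=160.0, speed_kph=180_000.0)
print(f"{years:,.0f} years in flight")
```

The answer comes out at a little under a million years, which fits comfortably with the idea that these are young stars.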

The stars spotted by the Hubble aren't the first stellar runaways astronomers have found. The Dutch-American Infrared Astronomical Satellite (IRAS) spotted similar objects in the 1980s. However, those stars were much more massive than those found by the Hubble and left much bigger bow shocks. According to Sahai: "The stars in our study are likely the lower-mass and/or lower-speed counterparts to the massive stars with bow shocks detected by IRAS. We think the massive runaway stars observed before were just the tip of the iceberg. The stars seen with Hubble may represent the bulk of the population, both because many more lower-mass stars inhabit the universe than higher-mass stars, and because a much larger number are subject to modest speed kicks."

Runaway stars are not easy to find because, having run away, they're in unpredictable places they shouldn't be. Sahai's team was actually investigating "preplanetary nebulas", or puffed-up old stars that are just about ready to shed their outer layers. However, having discovered the runaways, the team has found them a fascinating subject for investigation.

* BHOPAL AT 25: As discussed in an article from BBC.com ("Bhopal's Health Effects Probed" by Gaia Vince), at five past midnight on 3 December 1984, a storage tank exploded at the American-owned Union Carbide pesticides manufacturing plant at Bhopal, India, releasing 40 tonnes (44 tons) of methyl isocyanate (MIC) gas in a lethal cloud that dispersed over the densely populated city of nearly a million people.

One of Bhopal's citizens, Yassir Nadir, was fortunately visiting relatives outside of town when the accident happened: "My eyes began to sting, and I thought someone must be cooking nearby with chilies. We were watching THE GODFATHER film and it started to look a little blurry, but I didn't think anything of it. The next day I went to the school where I worked as an administrator, and found it shut and chaos on the streets."

"It looked like hail on the ground, like snow, there were so many shrouds. Everywhere you looked in the city, there were shrouds. The hospitals and mortuaries were overflowing. People just dropped dead in the street while they were walking ... People were running from the city leaving everything behind -- all of their belongings, even their children. But nobody knew anything about what had happened."

It was the worst industrial accident in history. Before the sun came up the next morning, roughly 2,000 people were dead; before three days were out, the number killed went to 5,000, and after a number of weeks, the tally came to 20,000. Of the survivors, almost a quarter-century later, at least 100,000 are chronically ill from their exposure to the toxic gas, and a further 30,000 continue to drink and wash using contaminated groundwater. MIC is highly reactive, burning the eyes and lungs; it can be absorbed into the bloodstream through the lungs, and then damage most of the body's organs. The citizens of Bhopal suffer from a wide range of maladies at far higher levels than the norm for India, the afflictions ranging from cancers to cataracts to kidney failure to birth defects.

The accident was due to a chain of blunders -- failures to make proper use of plant safety systems, coupled with defective gear. The ugliest fact was that MIC was just an intermediate in the process, and given a process that made use of it as it was generated, there would have been no need to store a huge tank of it. In the wake of the accident, there were also concerns about chemicals disposed of in landfill sites and three purpose-built storage ponds, with particular fears that the toxic ponds were contaminating the groundwater.

The political fallout from the accident was of course intense and continues, with lawsuits still in progress in both India and the USA. Union Carbide was bought out by Dow Chemical in 2001, and though activists have demanded that Dow clean up the ponds, company officials have disclaimed responsibility. The Indian government claims it does not have the resources to do the job, and in fact the government stopped conducting research on the long-term health effects on the people of Bhopal in 1994. Faced with continuing protests, however, the government has restarted investigation, with the Indian Council of Medical Research (ICMR) now funding an investigation to help characterize health problems and identify hazards posed by toxic waste.

This March, groups representing the survivors and victims of the tragedy appealed to UNESCO for the preservation of the Union Carbide plant as an "Industrial Heritage Site" of international importance. They want the structure to remain as a memorial and a reminder to future generations. The activists say they will physically block any attempts to demolish the plant.

* SMARTER CARS (1): An article in SCIENTIFIC AMERICAN ("Driving Towards Crashless Cars" by Steven Ashley, December 2008) surveyed the latest technology being developed to build smarter and safer cars. In 2006, there were six million motor vehicle accidents in the USA, resulting in 39,000 deaths and 1.7 million injuries -- a level of carnage equivalent to a serious war. American roads are growing more crowded. In the developing world, automotive ownership is growing by leaps and bounds, far outstripping the ability of countries to improve road infrastructure in step.

The primary cause of accidents is driver error, a problem that is becoming more serious in countries where population growth is slowing and the average age of drivers is increasing. A smarter car can help compensate for the reduction in faculties with age. The emphasis on improved fuel mileage has also led to lighter cars that are more vulnerable in collisions, making cars that don't get into collisions even more attractive.

There are, however, factors slowing down the adoption of smarter cars. One is of course that the high tech needed to increase the intelligence of cars tends to be expensive. The complexity of the technology also makes car designers nervous, since bugs may be difficult to work out and could expose manufacturers to litigation. Customers are certainly hesitant to give up control over the car to automated systems, and have to be reassured that they are better off with the automation than without it.

* Passive safety systems -- seat belts, airbags, and crumple / crush zones that soak up or divert damage in collisions -- have been around for decades and have proven effective, but they do nothing to prevent collisions in the first place. Some active safety systems, created to help avoid accidents or reduce their severity, have also been around for a long time. "Antilock brake systems (ABS)" were introduced commercially in 1978. ABS improves steerability and hastens deceleration when a driver slams on the brakes. ABS was particularly significant in that it was the first example of an electronic system that took over control from the driver in an emergency situation.

ABS was followed by "traction control systems (TCS)", which automatically adjust power to the drive wheels when the driver steps on the gas too abruptly, preventing the wheels from spinning out. Next was "electronic stability control (ESC)", which continuously monitors the angle of the steering wheel and the vehicle's direction. If ESC detects skids, it attempts to compensate for them by selectively activating the brakes to straighten out the car's trajectory. It may also throttle down the power until the car is out of danger. Studies show that ESC is highly effective, lowering the rate of single-vehicle crashes by 29% to 35% and the number of head-on collisions by 15% to 30%. The US National Highway Traffic Safety Administration (NHTSA) is so impressed with ESC that from 2012, all vehicles below 4,535 kilograms (10,000 pounds) gross weight must have ESC. ABS and TCS are expected to become standard along with it.

ESC is seen as the key to a new generation of safety systems based on networking vehicle sensors. Until recently, car sensors worked independently of each other; networking allows them to operate synergistically. ESC is built around acceleration or "gee-force" sensors that keep an eye on overall motion of the vehicle, and so it can act as a central element in a networked vehicle sensor system.

Consider Bosch's current side-impact airbag system. In a crash, the airbags are triggered by two separate sensors -- one in the door that detects pressure, and one in the stability-control unit that measures acceleration. Since setting off airbags when they're not needed could be disastrous, the sensor inputs have to be validated and coordinated before the airbags go off, which leads to a potentially fatal time delay in reaction. With a networked system, the gee-force system maintains continuous track of vehicle motion and orientation, and if the system detects the car is moving sideways, it can arm the airbags to go off the instant the door pressure sensor says they need to. Not only will a networked system be more responsive, it is possible it will even be cheaper, because it will eliminate unnecessarily duplicated sensors. [TO BE CONTINUED]
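The difference between the two airbag schemes can be shown with a toy model. This is a sketch of the idea only, not Bosch's design: the class name, the thresholds, and the units are all hypothetical, chosen just to make the logic visible.

```python
# Toy model of "arm early, fire instantly". Everything here (names,
# thresholds, units) is a hypothetical illustration, not a real design.
from dataclasses import dataclass


@dataclass
class SideAirbagController:
    lateral_g_arm_threshold: float = 0.8        # assumed value, in gees
    door_pressure_fire_threshold: float = 1.5   # assumed, arbitrary units
    armed: bool = False

    def update_motion(self, lateral_g: float) -> None:
        """Fed continuously from the stability-control accelerometer."""
        self.armed = abs(lateral_g) >= self.lateral_g_arm_threshold

    def on_door_pressure(self, pressure: float) -> str:
        """Decide what to do when the door pressure sensor reports."""
        if pressure < self.door_pressure_fire_threshold:
            return "IDLE"
        if self.armed:
            return "FIRE"       # already armed: no cross-check delay
        return "VALIDATE"       # unarmed: fall back to slower cross-checking


controller = SideAirbagController()
controller.update_motion(lateral_g=1.2)    # car is sliding sideways
print(controller.on_door_pressure(2.0))
```

The networked version does the validation ahead of time, while the car is skidding, so that nothing is left to check at the moment of impact.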

* EVOLUTION & INFORMATION (6): Having acquired definitions from information theory, we can now return to the supposed "Law of Conservation of Information" in its various forms. One way that it is expressed is that there is no way for "information" to arise from "randomness". For example, as one critic put it:

BEGIN QUOTE:

Information theory states that "information" never arises out of randomness or chance events. Our human experience verifies this every day. How can the origin of the tremendous increase in information from simple organisms up to man be accounted for? Information is always introduced from the outside. It is impossible for natural processes to produce their own actual information, or meaning, which is what evolutionists claim has happened. Random typing might produce the string "dog", but it only means something to an intelligent observer who has applied a definition to this sequence of letters. The generation of information always requires intelligence, yet evolution claims that no intelligence was involved in the ultimate formation of a human being whose many systems contain vast amounts of information.

END QUOTE

This statement seems impressively authoritative, but it was just pulled out of thin air. If random changes are introduced into a string, the information content is likely to keep getting bigger and bigger. Suppose a data file gets corrupted; does it gain or lose information? It actually gains information by adding randomness to the string -- "GGdGXXTTTyT" has more information than "GGGGXXTTTTT" because it can't be compressed as much. As far as information theory is concerned, information absolutely arises out of randomness: the more random the information, the less "air" that can be squeezed out of it.
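Anyone can check the claim with a general-purpose compressor. The sketch below uses Python's standard zlib module as a stand-in for "how much air can be squeezed out"; compressed size is only a rough proxy for information content, and the string length, the 5% corruption rate, and the seed are arbitrary choices of mine.

```python
# Compare how well an orderly string and a randomly corrupted copy compress.
# Compressed size is used here as a rough proxy for information content.
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression at the highest level."""
    return len(zlib.compress(data, 9))


def corrupt(data: bytes, fraction: float, seed: int = 1) -> bytes:
    """Replace roughly `fraction` of the bytes with random values."""
    rng = random.Random(seed)
    out = bytearray(data)
    for i in range(len(out)):
        if rng.random() < fraction:
            out[i] = rng.randrange(256)
    return bytes(out)


orderly = b"GGGGXXTTTTT" * 1000          # highly repetitive input
noisy = corrupt(orderly, fraction=0.05)  # same length, 5% random damage

orderly_size = compressed_size(orderly)
noisy_size = compressed_size(noisy)
print(f"orderly: {len(orderly)} -> {orderly_size} bytes")
print(f"noisy:   {len(noisy)} -> {noisy_size} bytes")
```

The corrupted copy always compresses to a larger size than the orderly one, because the compressor has to record where each random change landed and what it was.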

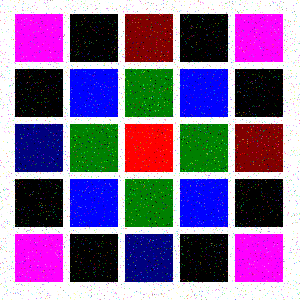

To the general public, the idea that information theory says that random changes introduce information may seem counterintuitive, since most people would think that random changes would amount to "noise" that would corrupt "information". For example, imagine the simple structured image file used as an example in a previous installment; if it were corrupted, say with little colored speckles randomly sprinkled over the image:

-- it would seem plausible to say that there had been a loss of information. However, once again, the problem is that "meaning", in this case the picture contained in the file, isn't the issue addressed by contemporary information theory. The speckles mean more information to compress, with the compression algorithm having to record where the speckles are so they can be reconstructed later, meaning the compression ratio will not be as great. While the original file compressed to 1.12 KB, a half percent of its uncompressed size, this "degraded" file only compresses down to 47.4 KB, 18% of its uncompressed size. The random changes added information to the file. The fact that they have resulted in a less satisfactory image is irrelevant.

Counterintuitive this may be, but it was the critics who ran off with the information theory football and carefully kicked it through the wrong goalposts. A comment along the lines of "information theory states that information never arises out of randomness or chance events" is flatly bogus; it says no such thing. Indeed, as Chaitin pointed out, generating a large amount of information requires either a very long-winded calculation or a source of randomness. In other words, the complexity of the genome is entirely consistent with its origins in random mutations. The attempt by the Design advocates to "move the goalposts" and proclaim they actually mean "functional information" is crippled by the fact that they cannot provide anything resembling a clear definition of the concept, much less show how it supports a "Law of Conservation of Information".

* Another Design advocate went on in the same vein, attacking the idea that MET could account for, say, the transformation of a hemoglobin gene into an antibody gene:

BEGIN QUOTE:

There are two fallacies in this argument. The first is that random changes in existing information can create new information. Random changes to a computer program will not make it do more useful things. It doesn't matter if you make all the changes at once, or make one change at a time. It will never happen. Yet an evolutionist tells us that if one makes random changes to a hemoglobin gene that after many steps it will turn into an antibody gene. That's just plain wrong.

END QUOTE

Again, the argument is trying to leverage off an implied law of information theory -- "random changes in existing information cannot create new information" -- that doesn't exist, based on a fuzzy definition of the term "information". Once that red herring is thrown out, the critic is merely making a hamfisted "monkeys & typewriters" argument against MET: "It doesn't matter if you make all the changes at once, or make one change at a time. It will never happen." In reality, anybody who thinks MET is about monkeys blindly hammering on typewriters hasn't understood the essential point of the whole concept: the random changes matter a great deal, as long as each change is screened by natural selection.
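The gap between blind typing and screened changes is easy to demonstrate with a toy program in the spirit of Dawkins' well-known "weasel" exercise. It has one big simplification that should be flagged loudly: it scores candidates against a fixed target string, which natural selection does not have. The target merely stands in for "fitness" so the effect of screening each round of random changes can be seen. The alphabet, mutation rate, and brood size are arbitrary assumptions.

```python
# Toy contrast between blind random typing and random changes screened by
# selection. The fixed TARGET is a stand-in for "fitness" and is NOT a
# feature of real evolution; all parameters are arbitrary assumptions.
import random

ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZ "
TARGET = "RANDOM CHANGES PLUS SELECTION"


def score(candidate: str) -> int:
    """Number of positions that match the target."""
    return sum(a == b for a, b in zip(candidate, TARGET))


def mutate(parent: str, rng: random.Random, rate: float = 0.05) -> str:
    """Copy the parent, randomly replacing each letter with probability rate."""
    return "".join(
        rng.choice(ALPHABET) if rng.random() < rate else ch for ch in parent
    )


def cumulative_selection(seed: int = 0, offspring: int = 100,
                         max_generations: int = 5000):
    """Generations needed to reach the target, or None if the cap is hit."""
    rng = random.Random(seed)
    parent = "".join(rng.choice(ALPHABET) for _ in TARGET)
    for generation in range(max_generations):
        if parent == TARGET:
            return generation
        brood = [mutate(parent, rng) for _ in range(offspring)]
        # Selection step: keep the best of the brood, or the parent itself.
        parent = max(brood + [parent], key=score)
    return None


# Blind typing would have to hit one string out of 27**29 possibilities.
print("possible strings:", len(ALPHABET) ** len(TARGET))
print("generations with selection:", cumulative_selection())
```

Blind typing faces more than 10^41 possible strings, while the screened version gets there in a modest number of generations, since every small gain is kept and built on.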

This particular example makes it plain what the critics are trying to say. In effect, given an image file, they are claiming that random changes of the picture dots are not going to result in a proper image of anything; by implication it requires intelligence to create a useful image. Random changes by themselves of course won't produce a useful image, but there are two fallacies in this argument:

[TO BE CONTINUED]

* Space launches for March included:

-- 06 MAR 09 / KEPLER -- A Delta 2 7925 booster was launched from Cape Canaveral to put the NASA "Kepler" astronomy spacecraft into space. Kepler was designed to hunt for evidence of planets in distant star systems through "stellar occultations". The spacecraft's payload was a 1 meter wide-angle Schmidt telescope with a 105-square-degree field of view and an unprecedented 95-megapixel imager, with the telescope scanning the sky to detect intermittent variations in stellar brightness caused by a planet passing in front of a star. The spacecraft was capable of detecting a change in brightness of 20 parts per million and could detect Earthlike planets in orbit around stars from up to 3,000 light-years away.

Kepler was placed in a solar orbit to trail the Earth at a tenth of the distance of the Earth from the Sun. From its distant location, it could maintain a continuous watch on a patch of sky in the constellation Cygnus without the Earth getting in its way or affecting its station-keeping, with the region estimated to contain 100,000 Sun-like stars near enough for Kepler to observe. The primary mission was planned to last three and a half years, the length of time it would take a planet in an Earthlike orbit to transit its parent star three times, showing that it was a planet in a predictable orbit and not, say, a big sunspot or other anomaly. Kepler had station-keeping fuel adequate for at least six years.
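To get a feel for how small a transit signal is, the arithmetic can be sketched in a few lines. This is a simplified estimate under stated assumptions: the planet is treated as a dark disk crossing a uniformly bright star, the radii are standard reference values rather than figures from the launch report, and the function names are made up for the illustration.

```python
# Radii in kilometers; standard reference values, not figures from the launch report.
R_SUN_KM = 695_700.0
R_EARTH_KM = 6_371.0


def transit_depth(planet_radius_km: float, star_radius_km: float) -> float:
    """Fractional drop in brightness when the planet's disk covers part of the star's."""
    return (planet_radius_km / star_radius_km) ** 2


def transits_guaranteed(mission_years: float, period_years: float) -> int:
    """Minimum number of transits that fall inside a continuous watch of the given length."""
    return int(mission_years // period_years)


depth = transit_depth(R_EARTH_KM, R_SUN_KM)
print(f"Earth-Sun transit depth: {depth * 1e6:.0f} parts per million")
print("Transits in 3.5 years for a 1-year orbit:", transits_guaranteed(3.5, 1.0))
```

An Earth-sized planet crossing a Sun-sized star dims it by less than a hundredth of a percent, which is why the photometer had to be so sensitive, and a three-and-a-half-year watch is just long enough to be sure of catching three such dips from a planet with a one-year orbit.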

Kepler had a launch mass of 1,050 kilograms (2,320 pounds) and a length of 4.66 meters (15 feet 4 inches). The imager system was passively cooled by heat radiators to -85 degrees Celsius. It was fitted with four solar panels providing 1.1 kilowatts of power, plus a radiation-hardened PowerPC-based flight computer, a 63 gigabyte data storage system, and a Ka-band datalink. The spacecraft was built by Ball Aerospace for NASA's Jet Propulsion Laboratory.

-- 15 MAR 09 / DISCOVERY (125 STS-119) / ISS (S6 POWER TRUSS) -- The NASA space shuttle Discovery was launched from Kennedy Space Center on "STS-119", the 125th shuttle mission. The purpose of the mission was to deliver the "S6 Power Truss", the final solar array / truss assembly for the International Space Station (ISS).

The crew included commander USAF Colonel Lee Archambault (second space flight); pilot Navy Commander Dominic Antonelli (first flight); plus mission specialists Joseph Acaba (first flight), Steven Swanson (second flight), Richard Arnold (first flight), and Dr. John Phillips (third flight, including a six-month stint on the ISS). The flight also brought up Dr. Koichi Wakata (third flight) of the Japanese JAXA space agency to join the ISS Expedition 19 crew, and was to return with Dr. Sandy Magnus of the ISS Expedition 18 crew, who had arrived on the station on the previous shuttle flight, STS-126, in November 2008.

Discovery docked with the ISS on 17 March. The astronauts went through their mission duties, setting up the final power assembly and repairing a urine recycling system, while taking time to chat with US President Barack Obama over videolink with the White House. Discovery undocked from the ISS on 25 March and landed at Kennedy Space Center on 28 March after about 12 days 19 hours 30 minutes in space.

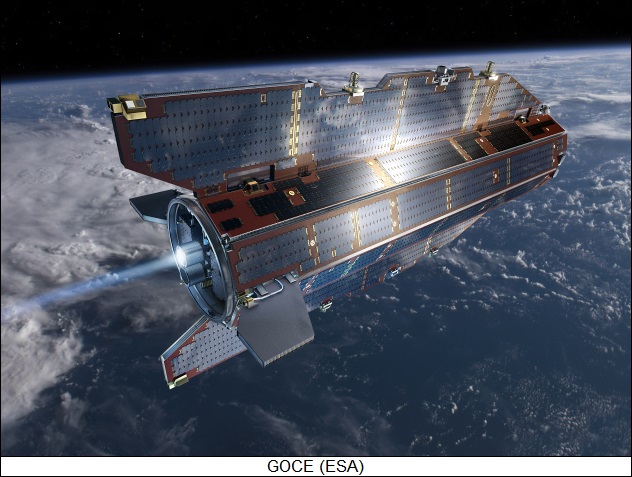

-- 17 MAR 09 / GOCE -- A Rockot booster was launched from the Plesetsk Northern Cosmodrome in Russia to put the European Space Agency (ESA) "Gravity field & steady-state Ocean Circulation Explorer (GOCE)" Earth gravity mapping satellite into orbit. GOCE's mission was to provide a gravity map of the planet in unprecedented detail.

GOCE was built by Thales Alenia Space and Astrium. It had a launch mass of 1,050 kilograms (2,319 pounds) and measured about 5.2 x 0.9 meters (17 x 3 feet). Its primary payload was a "gradiometer", with three pairs of identical, highly sensitive accelerometers. The accelerometer pairs were mounted on three arms like a jack structure, with one arm pointed along the flight path, one arm pointed up and down, and one pointed to the sides. The accelerometers provided differential measurements of the changes in velocity of the spacecraft due to variations in gravity. The sensitivity was described as adequate to sense the fall of a single snowflake onto a supertanker ship.
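The value of taking differential measurements from paired accelerometers can be shown with a short sketch. It is a minimal illustration, not a description of GOCE's actual processing: the function name, the 0.5 meter spacing, and the sample readings are all assumptions chosen to make the arithmetic easy to follow.

```python
def gravity_gradient(accel_a: float, accel_b: float, baseline_m: float) -> float:
    """Gradient along one arm, in 1/s^2, from a pair of accelerometer readings in m/s^2."""
    if baseline_m <= 0:
        raise ValueError("baseline must be positive")
    return (accel_b - accel_a) / baseline_m


# Hypothetical readings: both sensors feel the same 1e-6 m/s^2 of atmospheric drag,
# while sensor B also sees an extra 1e-9 m/s^2 from the local gravity field.
drag = 1.0e-6
reading_a = drag
reading_b = drag + 1.0e-9

gradient = gravity_gradient(reading_a, reading_b, baseline_m=0.5)
print(f"gradient along this arm: {gradient:.3e} per second squared")
```

Because both sensors on an arm feel the same drag and thruster disturbances, subtracting one reading from the other cancels those common effects and leaves only the tiny difference in gravity between the two ends of the arm.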

To make its precision map, GOCE was placed in a very low orbit with an altitude of 260 kilometers (160 miles); at that altitude, it had to plow through the very thin traces of the upper atmosphere, and so it was one of the few satellites not designed to be recovered that had an aerodynamic configuration, with fins running down its sides; mission staff called it the "space arrow". It had a xenon ion engine to keep its orbit from decaying. GOCE worked along with the ESA Envisat environmental satellite to build the map.

GOCE's measurements were to be a thousand times better than those returned by the previous gravity-mapping space experiment, the US-German GRACE mission. The mission was to last two to three years, depending on the level of solar activity and its agitation of the upper atmosphere.

-- 24 MAR 09 / GPS 2R-20 -- A Delta 2 7925 booster was launched from Cape Canaveral to put the "GPS 2R-20" navigation satellite into medium Earth orbit. It replaced the aging GPS 2A-27 satellite, launched in September 1996. GPS 2R-20 was the seventh in a series of "modernized" replacement GPS navigation satellites and was also known as "GPS 2R-M7". The modernized GPS satellites carried additional navigation signals and provided better accuracy. This particular satellite also carried a demonstration "L5" payload, planned for use in the next generation of GPS. This was the 20th GPS spacecraft built by Lockheed Martin; the next-generation GPS 2F spacecraft, being built by Boeing, will incorporate the L5 signal. This was also the second-to-last flight of an Air Force Delta 2, with the service to transition to the Delta 4 and Atlas 5 series.

-- 26 MAR 09 / SOYUZ TMA-14 (ISS) -- A Soyuz-FG booster was launched from the Baikonur Space Center in Kazakhstan to put the "Soyuz TMA-14" manned space capsule into orbit on an International Space Station (ISS) support mission. It carried commander Gennady Padalka of the Russian Space Agency (RKA) on his third space flight and rookie engineer-physician Michael Barratt of NASA to the station -- as well as "space tourist" Charles Simonyi, a Hungarian-born American software entrepreneur making his second trip to the station.

Padalka and Barratt joined Japanese astronaut Koichi Wakata -- delivered by the shuttle DISCOVERY days earlier to change places with NASA flight engineer Sandra Magnus -- to form up the new crew, relieving Expedition 18 commander Mike Fincke of NASA and flight engineer Yury Lonchakov of the RKA. The Expedition 18 crew left the station on 7 April, with Simonyi returning with them. Landing was delayed by weather and took place on 8 April.

* OTHER SPACE NEWS: In 1966 and 1967, NASA sent five "Lunar Orbiter" probes to the Moon to provide maps of unprecedented detail of the lunar surface, in preparation for the Apollo landings. The probes were marvels of technology for the time, with a high-resolution and a medium-resolution film camera, the film being developed on board and then scanned for radio relay back to Earth. The first three were put into orbit around the Moon's mid-latitudes to obtain images of potential landing spots, while the last two were put into polar orbit to obtain global maps. Three of the probes were partial successes and two worked perfectly.

The imagery was impressive for the time but less satisfactory to the modern eye, in particular because the images were typically released as "mosaics" of many film frames, and the splicing together was far from seamless. Now the "Lunar Orbiter Image Recovery Project (LOIRP)", at NASA's Ames Research Center in California, is going through the archives and cleaning them up for the 21st century. The LOIRP team is reading Lunar Orbiter analogue image data from old tapes -- a feat that required refurbishing old gear and figuring out how to interface to it -- and converting it to digital, allowing it to be run through sophisticated image processing algorithms.

The cleanup effort is not simply an exercise in nostalgia. The resolution of the Lunar Orbiter imagery was very good in many cases, and setting the refurbished digital imagery against imagery returned by more modern Moon orbiters will permit comparison of terrain over a 40-year interval. That should reveal craters created in the meantime, allowing an estimate of impact rates. The LOIRP group is looking to work over other NASA imagery, particularly that returned by the Apollo missions. Given the unfortunate tendency of storage media to become obsolete, team members feel they need to do the job now before the data is lost forever.

* ADS-B ON THE WAY: An article on SCIENTIFICAMERICAN.com -- "Feds Push Satellite Technology to Make Skies (and Runways) Friendlier" by Cheryl Harris Sharman -- discussed the imminent adoption of the "Automatic Dependent Surveillance-Broadcast (ADS-B)" aircraft tracking system.

In 2007, almost 770 million people flew on the airlines; by 2016, the number is expected to reach a billion. That means many more aircraft moving around, greatly complicating the problem of tracking them and leading to an increasing likelihood of disastrous collisions. Traditional radar-based tracking systems just can't do the job, and so the US Federal Aviation Administration recently awarded contracts to Honeywell and Aviation Communications Systems (ACS) to get the next-generation ADS-B system rolling.

ADS-B was first conceived in the early 1990s by the FAA, along with a number of other government agencies and businesses, including the US National Aeronautics & Space Administration (NASA) and United Parcel Service (UPS). An aircraft ADS-B system is built around a Global Positioning System (GPS) navigation satellite receiver, plus datalinks. The ADS-B unit continuously updates its position from GPS and sends it out over the datalinks. It also obtains the ADS-B positions from other aircraft, with the relative positions displayed on a digital map in a jetliner's cockpit. There are large gaps in radar coverage between populated areas; in contrast, ADS-B works everywhere, all the time.
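Once an aircraft has its own GPS position and the broadcast position of a neighbor, working out where the neighbor sits on the cockpit map is a matter of spherical geometry. The sketch below is an illustration only: it treats the Earth as a sphere, ignores altitude, and does not attempt to decode real ADS-B messages. The function name and the sample coordinates are assumptions made for the example.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; a spherical-Earth simplification


def range_and_bearing(own_lat, own_lon, other_lat, other_lon):
    """Great-circle distance (km) and initial bearing (degrees from north) to another aircraft."""
    phi1, phi2 = math.radians(own_lat), math.radians(other_lat)
    dphi = phi2 - phi1
    dlam = math.radians(other_lon - own_lon)

    # Haversine formula for the central angle between the two positions.
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    distance_km = 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

    # Initial bearing along the great circle, normalized to the range 0..360 degrees.
    y = math.sin(dlam) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlam)
    bearing_deg = (math.degrees(math.atan2(y, x)) + 360.0) % 360.0
    return distance_km, bearing_deg


# Hypothetical positions: own aircraft and one neighbor, in decimal degrees.
distance, bearing = range_and_bearing(61.20, -149.90, 61.60, -149.10)
print(f"neighbor is {distance:.1f} km away, bearing {bearing:.0f} degrees")
```

Repeating this calculation for every received position report is enough to place each nearby aircraft on a moving map, with no radar in the loop.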

Alaska has poor radar coverage, and air accident rates are higher there than elsewhere. In 1999, the FAA collaborated with industry to perform a trial of ADS-B named "Capstone", with ADS-B boxes installed in aircraft for free. Over the seven years of Capstone's operation, air accidents in Alaska dropped by almost half. UPS has also experimented with ADS-B at the company's global hub in Louisville, Kentucky, with the exercise showing that the precision tracking of ADS-B permitted an increase in traffic rates of 15%, as well as less fuel wasted in holding patterns.

After about a decade of development and evaluation, in 2005 the FAA declared ADS-B to be safe and effective, clearing the way for deployment. The Honeywell and ACS contracts are preliminary, focusing on runway safety. Honeywell will test the technology in two of its planes, as well as install ADS-B systems in JetBlue Airways and Alaska Airlines aircraft for pilots from those companies to evaluate. Honeywell will work at Seattle-Tacoma International and Snohomish County Paine Field airports in Washington State. ACS will work at Philadelphia International Airport with US Airways to develop standards, flight demonstrations and prototypes of the technology, equipping twenty Airbus A330 aircraft with ADS-B systems.

ADS-B is a major component of the FAA's "Next-Generation Air Transportation System (NextGen)", to be deployed by 2020. NextGen is a far-ranging plan for controlling the airways, developed by the FAA in conjunction with other organizations such as the Department of Homeland Security and the White House. The major problem with implementing ADS-B is cost, with estimates for implementation over the USA running from $15 billion USD to $22 billion USD. The FAA wants to make sure that ADS-B box design is standardized to ensure low costs and quicker adoption by aircraft operators.

* In closely related news, an article in AVIATION WEEK ("Containing Congestion" by Michael A. Taverna, 5 January 2009) reports on an automated air traffic control system being evaluated in a three-year European Union (EU) research project. The system, designated the "En-Route Air Traffic Soft Management Ultimate System (ERASMUS)", takes over control of an airliner's flight management system (FMS) when authorized by the pilot, adjusting speed to maintain proper spacing during approach. It permits efficient approaches and landings, with no time and fuel wasted in unneeded go-rounds.

ERASMUS is seen as a major component of the "SESAR" system, the EU's answer to the FAA's NextGen, and in particular of the SESAR "4D" flight planning system -- a concept discussed here last year. NextGen does not incorporate anything like ERASMUS, but Honeywell did work on ERASMUS, and a similar capability is a possible future addition.

* MACHINE VISION GETS SHARPER: Machine vision technologies have been advancing at a rapid rate in the last decade or so. As reported in an article in THE ECONOMIST ("Machines That Can See", 7 March 2009), the capabilities that machine vision has attained are impressive -- and to an extent disturbing.

Consider the work of Omron Corporation of Japan, a developer of robotics software. The company is now introducing a "smile management" system that observes employees over video and determines how much they smile, a useful tool for managers at retail and fast-food outlets -- though not one that is likely to make employees feel much like smiling. Even faking it may not be helpful, because researchers at the University of Buffalo in New York state are working on software that can analyze facial expressions for their sincerity.

Analysis of facial expressions is not just a passing gimmick, either. Anglo-Dutch consumer-goods giant Unilever is using expression-analysis software to nail down the reactions of tasters to food, while Procter & Gamble, a US competitor, is using such software to assess the expressions of focus groups viewing advertisements.