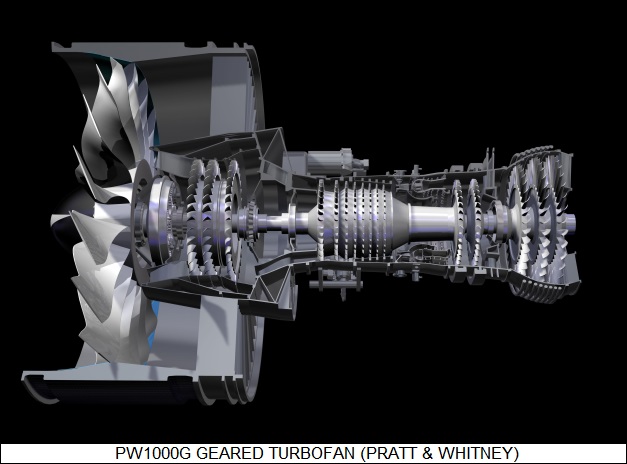

* 21 entries including: parasites, smart grid, the origins of flowers, wireless recharging, global access to fresh water, software agent collectives, SpaceX booster efforts, next-generation ICs, the Russian gas business, geared turbofans, and terrorist Bryant Neal Vinas.

* NEWS COMMENTARY FOR AUGUST 2009: Although the security situation in Iraq has been changing dramatically for the better over the past three years, along with the improvement there has been a nagging unease that everything might go to hell again once the Americans pull out, and even Barack Obama tended to conditionally hedge his bets on his campaign promise to withdraw US forces. However, as reported by THE ECONOMIST and backed up by articles elsewhere, since the handover of firstline security to Iraqi forces on 30 June, the buzz has been shifting gradually towards the idea that maybe we should be in a bigger hurry to leave. Earlier attempts to turn over security to Iraqi forces did not go well since they generally lacked training and motivation, but this time around they seem gung-ho. Says an American officer: "They're like kids with a brand-new muscle car, out there burning rubber. It almost scares you."

The scary part is that the swagger of Iraqi forces may not be exactly what is needed in a country with such ugly tendencies toward instability, and the new-found aggressiveness isn't necessarily associated with professionalism. Recently a gang composed of members of the Iraqi Presidential Guard knocked over a bank, killing eight bank workers and making off with $7 million USD. The gang was geared for combat and the police were helpless to stop them. Interior Ministry troops stepped in and put the bandits down, but they only intervened because the robbers were from a rival Shia faction.

Still, though the situation in Iraq remains difficult, there is the troublesome question of whether American troops can do much to improve matters. Some US officials think they are now simply making matters worse and ought to go. Once they go, the Iraqis will be on their own, with little US firepower to fall back on. There was a time only a few years ago when everything seemed all but lost; one can do little but hope that the trendline will continue up and to the right.

* Relative to the comments here last month on the attempts of US Defense Secretary Robert Gates to hold the line on stopping production of the F-22 Raptor fighter in favor of buying weapons more suited to the current era of "dirty little wars", WIRED.com reports that in July, the US Air Force issued a requirement for a light attack / trainer aircraft. The idea is to obtain a hundred cheap aircraft that can perform the close air support mission and also help train local air forces.

The Brazilian EMBRAER Super Tucano turboprop is regarded as a fairly typical candidate; there's even intriguing talk of Piper revisiting its PA-48 Enforcer, effectively a slightly scaled-up P-51 Mustang with turboprop propulsion, a prototype of which has been gathering dust at the Air Force Museum for the last few decades. The USAF has already been obtaining modified off-the-shelf civil aircraft like the Beech Super King Air for surveillance, and is similarly pursuing acquisition of an off-the-shelf light cargolifter. The price tag for a machine like a modified Super King Air might make the likes of us gasp for breath, but compared to the F-22 it's dirt cheap, and it can be in service within a year of the go-ahead.

Gates says that trying to get Air Force brass to buy off on air warfare on the cheap, what he calls the "75% solution", has been "like pulling teeth." The brass worry that they may go up against a superior adversary in the future that will be able to sweep the boys in blue out of the sky. Gates finds the idea absurd: "By 2020, the United States is projected to have nearly 2,500 manned combat aircraft of all kinds .... Of those, nearly 1,100 will be the most advanced 5th-generation F-35s and F-22s. China, by contrast, is projected to have no 5th-generation aircraft by 2020. And by 2025, the gap only widens."

* Nathan Hodge, a contributor to WIRED.com, had an interesting post about hitching a ride on an Air Force C-130 Hercules cargolifter dropping a hefty 155 millimeter howitzer to an outpost in Afghanistan -- the weapon was so big that it required five parachutes. This was not just a gee-whiz demonstration, but a part of a highly active airlift operation.

While there's a notion common among civilians that the military is all about shooting and blowing things up, in practice most of the work is actually logistics -- making sure the materiel gets to the troops, and that the day-to-day machinery of operations stays working. Afghanistan is notoriously rugged and is not noted for its good roads, which makes airlift an important capability. The US military has been gradually extending its reach, setting up bases in isolated areas, which then have to be kept supplied. The result is that airdrops over Afghanistan have handled more cargo in 2009 so far than was dropped there in 2005, 2006, and 2007 combined.

The military now has the JPADS GPS-guided airdrop system -- discussed here last July -- but it's expensive and only used when needed, for example if the drop target is covered by heavy enemy fire. Drops are typically at low altitude, with the skill of the aircrew ensuring that the payload falls into the drop area. It isn't a trivial process, particularly for the heavier payloads. The 155 millimeter howitzer was strapped to a pallet with a crushable bottom made of wood and cardboard, since it hits the ground at the speed of a competition sprinter, and it has to be precisely loaded and deployed. It's yanked out the back of the aircraft by a "deployment parachute", and if the load sticks, the crew is in serious trouble. Hodge found the experience "exhilarating, and slightly scary" -- and was relieved when the big gun was clear of the Hercules.

* THE ECONOMIST had an interesting article on the evolution of the former Yugoslavian states in recent years. One could not have thought of a region more wracked by antagonisms, but though suspicions and old enmities linger, the simple practicalities of life have been restoring connections. At a recent international meeting, Serbian President Boris Tadic suggested to Croatian President Stipe Mesic that the countries needed to join forces to bid on construction projects or military equipment contracts. Mesic was receptive to the idea, as well he might be -- on their own, each of the little fragments of what used to be Yugoslavia can't compete very well with bigger rivals.

From Slovenia on the border of Austria to Macedonia on the border of Greece, along with the things that tore these people apart there are things that pull them together, into what has been referred to as the "Yugosphere", though not all like the term and its connotations of the old Yugoslavian state. Most speak similar languages, with Serbo-Croatian amounting to a common tongue among them, and people can generally move freely from state to state just by showing an ID card. As a rule, the biggest trading partners of a state in the Yugosphere are other states in the Yugosphere, and businesses see it as their own common market.

The emergence of the Yugosphere has been quiet and hardly noticed, mostly because it involves the humdrum. Wars make headlines; setting up a regional fire-fighting center does not, though arranging cooperation and training between firemen of the different states does everyone a lot more good. The fire-fighting center was the work of the Regional Cooperation Council in Sarajevo, which has been slowly and steadily pushing over obstacles to getting things done between the member states. This is a region of very old and deep grudges, many of them savagely renewed in the 1990s, and nobody sensibly takes the peacefulness for granted. However, for the most part the rivalries these days are focused between national football teams. After the wars, most seem happy to keep the competition on the playing field instead of the battlefield.

* THE PARASITES (16): The tales of contests between parasites and the immune system discussed in previous installments were all relative to the human immune system. Fellow vertebrates have immune systems more or less similar to ours, but other organisms do not. Insects, for example, like to seal up invaders in poisonous cocoons to kill them off. Unsurprisingly, parasites have acquired schemes to defeat such an attack.

One of the best-known examples is that of the wasp Cotesia congregata, which parasitizes the tobacco hornworm. The hornworm is a tubby little green caterpillar and a major crop pest, attacking not merely tobacco but many varieties of vegetables. As with other parasitic wasps, the Cotesia mother finds a hornworm, lights on it, stabs it with its ovipositor, and lays its eggs inside. The eggs hatch into larvae that live off the hornworm's blood. The hornworm's immune system would destroy the larvae, except for the fact that the mother wasp injects a soup along with the eggs that disables the host immune system, using vast numbers of viruses provided in the soup.

Where the viruses come from is little short of astounding. There are certain classes of viruses known as "retroviruses" -- HIV, the AIDS virus, is one -- that insert their own genome into the host cell genome to hijack the host cell to produce new viruses. In some cases, the viruses will insert their genome into a host germ-line cell -- sperm or egg -- and the viral code will be carried on as a hitchhiker to following generations. It may lie dormant, or it may be activated by some event, with the host coming down with a viral infection even though it was never exposed to the virus. Humans have a number of viral codes in their genome, but they were all broken by mutations long ago and are no longer functional.

Cotesia not only has an active virus code in its genome, the wasp actually uses it as a weapon. The wasps are born with the viral code scattered through their own genome. In male wasps, the viral code remains scattered, but when a female wasp becomes a pupa on its way from larva to adult, special cells splice together the code so it can be expressed, and then turn into virus factories, ultimately bursting to release their viral load. The viruses do not attack the mother wasp, but when they are injected into a hornworm along with the wasp larvae, they infect hornworm cells, forcing the cells to produce a flood of proteins that disable the host immune system. The effect is only temporary; it wears off in a few days, but by then the larvae have matured and acquired mechanisms of their own to frustrate the hornworm's immune system.

There is actually some doubt that the Cotesia wasp has acquired a remarkably tidy symbiotic relationship with a viral hitchhiker. Some offer as an alternative that the viruses injected by the mother wasp don't really have any relationship to viruses at all, instead being "pseudoviruses" that were actually evolved by the wasp itself that mimic "true" viruses. Either way, how Cotesia got from here to there was obviously an elaborate process, and the wasp promises to be a particularly interesting subject of study for a long time to come. [TO BE CONTINUED]

* SCIENCE NOTES: As reported by THE ECONOMIST, in 2006 Mario Lebrato and Daniel Jones of the National Oceanography Centre in the UK were using a remotely-operated underwater vehicle to inspect a seafloor oil pipeline off the Ivory Coast. The two researchers were surprised to find that the seafloor was carpeted with the corpses of "thaliaceans", which are free-floating members of the sea squirt or "tunicate" family.

Tunicates could be described as jellyfish-like tubes, but they are actually more closely related to us than they are to jellyfish, having a spinal cord (if no backbone as such) and resembling small very primitive fish in their larval stage. Thaliacean tunicates are very common, and the researchers got to wondering if they might be a significant "sink" for atmospheric carbon dioxide. An analysis of thaliacean tissues showed they were about 30% carbon by weight, as compared to 20% for the group of plankton known as "diatoms" and only 10% for jellyfish. The high concentration of carbon makes the thaliaceans relatively dense, and so they immediately sink to the bottom. There may well be concentrations of thaliacean corpses in trenches and other traps.

Traditional models of the global carbon cycle suggested that plankton was responsible for the bulk of the job in drawing out atmospheric carbon dioxide and depositing it as carbon on the seafloor. While data is sketchy so far, the researchers estimate that thaliaceans could well capture twice as much carbon as plankton does. Of course, that leads to the question of whether the carbon actually stays at the bottom. It would if the carbon was in the form of calcium carbonate shells grown by many small sea creatures, but thaliaceans don't have shells. However, thaliaceans don't decompose quickly on the seafloor, giving them opportunity to be gradually covered up. Even if they aren't, deep and shallow waters don't mix much, and the carbon is likely to stay down in the deeps for a long time.

Now climatologists have yet another factor to try to fit into their computer models. Not that it matters in the greater scheme of things. The sciences have always worked by successive approximations of reality, and the more we learn, the more closely the approximations approach reality.

* In somewhat related news, SCIENCEMAG.org reports on interesting research concerning the Mastigias jellyfish -- an elegant, mushroom-shaped, nonvenomous, grapefruit-sized beast commonly found in South Pacific lagoons -- showing that its seemingly lazy motions are important for the circulation of heat, gases, and nutrients through the oceanic water column.

While oceanic surface waters tend towards the more or less turbulent, go down about a hundred meters and they tend toward the calm. That sounds like a recipe for stagnation, but in fact marine ecosystems do well enough for themselves at such depths. Currents driven by temperature and salinity differences do contribute to the mixing, but about a half century ago a British physicist named Charles Darwin -- grandson of the famous naturalist -- suggested that the swimming action of sea creatures like jellyfish might be a significant factor in the mixing process. There was considerable skepticism that, say, the languid movements of jellyfish could be a significant factor in such oceanic transport processes because of the relatively high viscosity of the medium. However, Kakani Katija and John Dabiri, two bio-engineers at the California Institute of Technology, have now demonstrated that the Mastigias jellyfish may in fact be up to the job, through an experiment so simple and elegant that their colleagues must have slapped their foreheads and said: "Oh, why didn't I think of that myself?!"

The researchers observed Mastigias jellyfish in a lagoon in the Palau Islands, monitoring them with video cameras while the researchers released green dye to observe the water flow around the creatures. The slow motions of the jellyfish made them "model systems", as Dabiri puts it, for studies in fluid dynamics. The researchers analyzed the videos using computers and then performed calculations based on the size of the population of the jellyfish.

The calculations showed that the amount of energy and mixing produced by the jellyfish is surprisingly large and a significant factor in oceanic mixing. That may not be an earthshaking insight, but such small advances in knowledge are the bread and butter of science, and the two Caltech researchers definitely get points for their very tidy experiment.

* BBC.com reports that divers off the coast of California near San Diego are reporting an invasion of Humboldt squid, sometimes known as "jumbo flying squid", which can be up to 1.5 meters (5 feet) long. While the squid are too small to regard humans as potential prey, there have been confrontations, with one diver reporting a squid ripping a buoyancy aid and light from her suit and grabbing her with its tentacles. Other divers have swum along with the squids with no problems, and what provokes them is unclear.

The squids do not come up into the shallows and so swimmers have not encountered them, but dozens have washed up on shore. The squids normally live in the deep water off the Pacific coasts of Mexico and Central America, but squid invasions were reported in the Los Angeles area in 2005 and in San Diego in 2002. A scientist at the Scripps Institute in the San Diego area suspects the squids may have established a permanent population in the region.

* WIRELESS RECHARGING: There's been considerable talk over the last few years of wireless power transmission, and as reported in an article in THE ECONOMIST ("Adaptor Die", 7 March 2009), after a period of fumbling, manufacturers have started to become more interested in the idea.

They're not talking about eliminating power lines, of course. The target applications for wireless power are portable devices, particularly cellphones. Keeping them charged up is a nuisance, and users would find it much more attractive to just set them down on a pad where they could recharge themselves without fumbling with adapters, rechargers, and cabling. In 2004 a UK firm named Splashpower went public to promote the "Splashpad", a pad that generated an electromagnetic field with a coil. A portable device with a receiving coil could be placed on the Splashpad, where it would charge up.

The idea is straightforward -- "electromagnetic induction" has been known since the 19th century and is perfectly practical from a technical point of view -- but the problem was finding manufacturers willing to buy into the concept with appropriately-configured portable devices. Manufacturers were not necessarily excited about the idea of wireless power, and those that were had concerns about standardization, there being not much sense in jumping into wireless power unless a standard scheme was available. Getting a consensus on an industrial standard can be very frustrating and time-consuming, and Splashpower went broke in 2008 without ever selling a Splashpad.

However, people are starting to come around. In 2008, the same year Splashpower went under, concerned companies established the "Wireless Power Consortium" to create a proper standard for wireless inductive recharging. The consortium includes big-time players like consumer-products giants Philips and Sanyo, as well as chipmaker Texas Instruments (TI). Philips has already introduced wireless recharging for some consumer products like electric toothbrushes, but Philips officials say that effective standards are required to permit interoperability with different devices, such as cellphones, laptops, and digital cameras.

Competition between manufacturers of mobile devices is helping to drive the effort, with some companies seeing wireless recharging as giving products an edge in a tough market. The Palm company made a big splash at the Consumer Electronics Show (CES) in Las Vegas this year with the Palm "Pre" smartphone, which features an optional wireless recharger known as "Touchstone". One of the problems with inductive recharging is that the recharger and mobile device have to be properly aligned, but Touchstone has magnets to align the Pre automatically.

Fulton Innovation, which bought up Splashpower's assets, used CES to display a wireless recharger to be fitted into cars to recharge cellphones and other mobile devices, while Bosch demonstrated a toolbox that wirelessly recharged power tools. TI is now working with Fulton to come up with chipsets for inductive recharging systems.

Inductive wireless recharging isn't the only game in town. A Colorado startup named WildCharge is pushing a very simple scheme in which a phone or similar device has four conductive studs on the back; the device is set down on a pad featuring an electric contact grid, and as long as two of the studs make contact the device will charge up. WildCharge and its licensees have developed replacement back covers with the studs for devices such as Motorola's popular RAZR cellphones and the Nintendo Wii videogame controller. There's also work on transmitting power over several meters using radio transmission or lasers, but such schemes are mainly intended for industrial applications that involve powering devices in environments where electrical connections are troublesome.

For now, the real game is wireless rechargers for portable devices. Some enthusiasts think the technology is about to take off, but there is still some resistance from industry: proprietary charging schemes help lock customers into product lines from particular manufacturers, and so some companies are not at all happy with the idea of standards that work across company lines. However, the pressure towards wireless recharging seems irresistible, and few think it will be even as much as a decade before it is the norm, making the current exercise of plugging gadgets into rechargers seem antiquated and comical.

* I got a tipoff from someone on the message board for another angle on wireless power, though one that's a bit hard to believe. Finnish cellphone giant Nokia is now working on a power harvesting system for cellphones that scavenges energy from urban radio transmissions. The harvester uses a wideband antenna coupled to a power conversion circuit; right now it can only get 5 milliwatts (mW) out of it, but the researchers are shooting for 20 mW, which would be enough to keep the cellphone going in sleep mode, and ultimately hope to get 50 mW, which would be enough to charge the thing.

The power density of radio broadcasts is slight and so there is skepticism that 50 mW is possible, with one critic claiming that would demand a thousand strong transmitters in the area. However, the same idea has been used with experimental micropower wireless sensors recently developed at the University of Washington (state) in Seattle -- but they're easy, since they operate at levels well below a milliwatt.

* THIRSTY WORLD: Water has always been a crucial resource for human societies, the issue of water supply having been discussed here in detail in 2005, and today many lands seem to be suffering from a shortage of it. As reported in an article in THE ECONOMIST ("Sin Aqua Non", 8 April 2009), Australia has seen a decade-long drought, and California officials are warning of water rationing. Are these just local shortages? Or are we facing a creeping global water crisis?

On the face of it, there would seem to be no need for panic. Thousands of cubic kilometers of fresh water fall as rain or snow every year, and globally humans make use of no more than about 9% of it. That would seem like plenty to spare, but a closer examination suggests it may not be: after all, the rest of creation on the planet needs fresh water too. Nobody's sure just what percentage humans can get away with using, though it's clearly not 100%. Patchy evidence suggests that humans are pushing the limits, with many of the Earth's major rivers -- the Indus, Rio Grande, the Yellow -- no longer reaching the sea. Freshwater fish populations seem to be in decline, and wetlands are being damaged as salt water displaces lower volumes of fresh water flowing into them.

Of course, population growth is certain to increase the pressure on water supplies, but changes in lifestyle can greatly aggravate that pressure. Farmers use about three-quarters of the world's water; industry uses less than a fifth, while domestic or municipal use accounts for a mere tenth. It takes about a thousand liters (265 US gallons) of water to grow a kilogram of wheat; it takes about 15,000 liters (3,950 US gallons) to grow a kilogram of beef. The shift to increasing consumption of meat means a big ramp-up in water usage.
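The wheat-versus-beef comparison is simple arithmetic, and a short Python sketch makes the ratio and the unit conversions easy to check. The per-kilogram figures are the ones quoted above; the conversion factor is the standard US liquid gallon, and the function and variable names are just illustrative.

```python
# Water needed to grow one kilogram of each food, in liters (figures from the text).
WATER_L_PER_KG = {"wheat": 1_000, "beef": 15_000}

# Standard definition of the US liquid gallon.
LITERS_PER_US_GALLON = 3.785411784


def liters_to_us_gallons(liters: float) -> float:
    """Convert liters to US liquid gallons."""
    return liters / LITERS_PER_US_GALLON


if __name__ == "__main__":
    for food, liters in WATER_L_PER_KG.items():
        gallons = liters_to_us_gallons(liters)
        print(f"{food}: {liters} liters per kg (about {gallons:.0f} US gallons)")

    ratio = WATER_L_PER_KG["beef"] / WATER_L_PER_KG["wheat"]
    print(f"beef needs {ratio:.0f} times the water of wheat, kilogram for kilogram")
```

The exact conversions land within a fraction of a percent of the rounded gallon values given in the text; the key number is the factor of 15 between beef and wheat.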

The other issue is climate change. The evidence is growing that global warming is speeding up the hydrological cycle, the rate at which water evaporates and falls again as precipitation. The result seems to be to make wet regions wetter and dry regions drier, with long droughts between bursts of rain. That means pressure on plant growth, as well as on water management systems -- both struggling along during the long dry spells, then inundated when the rains come back.

The immediate solution is to improve the efficiency of water use. The good news is that there's plenty of room for improvement and plenty of technology available to obtain those improvements; industrial users have been steadily cutting the amount of water they need to manufacture product, and in fact have been leaders in water conservation. Alas, it's the farms that use most of the water and seem most resistant to change. While education is valuable to improve the water efficiency of farming, water policies are also important, since if water is priced in a way that reflects its actual supply, people will have an economic incentive to conserve.

The difficulty is that determining the actual supply of water can be tricky, and people also tend to see access to water as a right. Administration of water supply also tends to be local and haphazard; since there was generally plenty of water in the past, wide-scale planning and organization was usually not seen as necessary. Few like the idea of establishing far-reaching bureaucracies to ensure adequate water supplies, but given the challenge, the pressure to do so may be irresistible.

* In somewhat related news, BBC.com reported that there is some evidence that global warming may actually shrink the world's deserts. That may seem entirely counterintuitive, but weather is notoriously complicated. Hotter temperatures will increase evaporation of bodies of water, which will result in more rainfall. Satellite imagery suggests that the southern edge of the Sahara Desert is actually retreating, and in the extremely dry Namib Desert in Namibia, in 2008 there was five times the average rainfall.

However, local weather patterns can vary a great deal, and these observations may be simple noise, not signal. Last year the Namib Desert also had the hottest day on record -- 47 degrees Celsius (116.6 degrees Fahrenheit) -- and other regions in Africa have been suffering from prolonged drought. Climatologists can only pick out a signal from averaging global measurements over time, and we have to be careful not to read into local variations an overall trend that's not really there.

* SMART GRID (1): The current burst of enthusiasm over alternative energy seems much more earnest than the "green power" fad of the 1970s, with one indication of the present seriousness over the issue being the push to build, at great cost, a "smart" electrical power grid to distribute renewable energy all over the USA. An article in DISCOVER magazine ("Building An Interstate Highway System For Energy" by Peter Fairley, June 2009) took a snapshot of the concepts for "smart grids".

The current power grid is oriented towards local or regional operation, with power plants serving municipalities that are relatively nearby. The main problem with the current grid is that the best solar, wind, and geothermal power sources in the USA are found in remote areas, and there's no way to get power from these locales efficiently to the cities that need it. To make matters more difficult, renewable energy tends to be variable -- solar power goes away at night or in lousy weather, and winds can die down -- and the current power grid isn't flexible enough to divert power from different sources to end users as availability dictates.

The "smart grid" concept envisions a combination of "top-down" and "bottom-up" efforts. The top-down effort will involve construction of an entirely new long-distance high-voltage power distribution grid to cover the USA. The bottom-up effort will involve communications and distributed digital intelligence to allow end users in businesses and homes to optimize their energy use.

* The current power grid is based on a hierarchy of power-line systems, with the top levels of the hierarchy distributing power from power stations over relatively long distances at high voltages and the lower levels of the hierarchy distributing power to end users at low voltages, with transformers used to step voltages up and down as needed. It's a bit subtle to explain here, but it can be simply said that as far as electrical power distribution systems are concerned, the higher the voltage the lower the loss of electrical power, but the more difficult and expensive it is to handle the power. Long distance power lines operate in the tens of thousands to hundreds of thousands of volts, while local lines operate at 120 or 240 volts AC.
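The voltage-versus-loss relationship can be illustrated with a deliberately simplified model: for a fixed amount of delivered power P, the line current is I = P / V, and the resistive loss in the line is I^2 * R, so the loss falls with the square of the voltage. The Python sketch below assumes a purely resistive line and ignores reactive power, corona losses, and transformer losses; the 100 megawatt load and 5 ohm line resistance are hypothetical values chosen for illustration, not figures from the article.

```python
def line_loss_watts(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive loss in a line carrying power_w at voltage_v (simplified model)."""
    current_a = power_w / voltage_v            # I = P / V
    return current_a ** 2 * resistance_ohm     # P_loss = I^2 * R


if __name__ == "__main__":
    POWER_W = 100e6        # hypothetical 100 MW load
    RESISTANCE_OHM = 5.0   # hypothetical total line resistance

    for kilovolts in (115, 345, 765):
        loss_w = line_loss_watts(POWER_W, kilovolts * 1e3, RESISTANCE_OHM)
        percent = 100 * loss_w / POWER_W
        print(f"{kilovolts:4d} kV: loss = {loss_w / 1e6:.3f} MW ({percent:.2f}% of load)")
```

In this model, doubling the voltage cuts the loss to a quarter, which is why long-distance trunks run at hundreds of kilovolts even though the equipment to handle such voltages is costly.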

As mentioned, the current US power system is regional, with the country divided into Eastern, Western, and Texas interconnects. The three grids are basically stapled together; it is very important to maintain a constant phase in the 60-cycle power used in a grid since a failure to do so will lead to power fluctuations, and since maintaining phase across grids can be troublesome, power is transferred between them using "transfer stations". A transfer station takes the high-voltage alternating current (HVAC) power from one grid, converts it to high-voltage direct current (HVDC) power using heavy-duty electrical switching gear, and then passes the DC power off to the other grid, where it is converted back into AC power of the proper phase. This is a little awkward, but more to the point it's a bottleneck.

US President Barack Obama wants to increase America's use of renewable energy to 25% of national needs by 2025, but there's no way the current grid can handle the power. Conceptually, the current grid looks like the US highway system did at the beginning of the 1950s, amounting to a cobbled-together patchwork of local systems, which gave way to the national interstate highway network set up by the Eisenhower Administration.

The technology for the long-distance connections needed by the smart grid is already available. HVDC can operate at very high voltages with very low losses, and since it only requires two conductors instead of the three needed by a three-phase HVAC line, the HVDC line itself is cheaper to build. For various subtle reasons, HVDC also has less power loss than an HVAC line carrying the same amount of power. The need for expensive AC:DC conversion stations is the downside of HVDC, though the conversion systems have been falling in price. The tradeoff ensures that HVAC lines operating at very high voltages -- 765 kilovolts or even a megavolt, about twice to three times current HVAC line voltages -- can compete with HVDC systems for the time being. Some see HVDC and very high voltage AC (VHVAC) systems as complementary, with HVDC handling the top-level trunks in the smart grid hierarchy and VHVAC taking care of the level beneath that.

To get an idea of the magnitude of the task of setting up this long-distance grid, one study proposed a grid of 30,600 kilometers (19,000 miles) of VHVAC lines at a cost of $60 billion USD. That's pricey, but over ten years that amounts to about $20 USD a year per citizen to fund the grid. [TO BE CONTINUED]
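The per-citizen figure for the VHVAC grid is easy to reproduce. The Python sketch below uses the study's cost and line length as quoted above; the population of 300 million is an assumption (a rough round number for the USA, not stated in the article), and the function name is just illustrative.

```python
TOTAL_COST_USD = 60e9    # study's estimated cost, from the text
LINE_LENGTH_KM = 30_600  # study's proposed line length, from the text
YEARS = 10               # payoff period used in the text
POPULATION = 300e6       # assumption: rough US population, not given in the text


def annual_cost_per_person(total_cost_usd: float, years: float, population: float) -> float:
    """Spread a total cost evenly over a number of years and a population."""
    return total_cost_usd / years / population


if __name__ == "__main__":
    per_person = annual_cost_per_person(TOTAL_COST_USD, YEARS, POPULATION)
    per_km = TOTAL_COST_USD / LINE_LENGTH_KM
    print(f"cost per person per year: ${per_person:.2f}")
    print(f"cost per kilometer of line: ${per_km / 1e6:.2f} million")
```

With a 300 million population the result matches the roughly $20 USD a year per citizen cited above; a somewhat larger or smaller population estimate shifts the number only slightly.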

* THE PARASITES (15): The small size of single-celled parasites like Plasmodium gives them an advantage: it's easy for them to find places to hide out from the immune system. Larger multicellular parasites like tapeworms, more than big enough to be seen with the naked eye, make much better targets, but not too surprisingly they have ways of spoofing the immune system as well, allowing them to thrive for years.

For an example, consider the tapeworm Taenia solium. Its usual intermediate host is a pig. Pigs swallow tapeworm eggs as they eat, with the eggs hatching once they get to the pig's intestines. The parasites use enzymes to drill through the intestine walls, then wriggle through and find a capillary that allows them to traverse the pig's body. The parasites take up residence in muscles or organs and form cysts, where they hide out for years.

Sometimes humans will ingest the tapeworm eggs, and the eggs then go through the same routine that they would in a pig, ending up in cysts in various parts of the host body. Whether they do the host any harm depends on where the cysts are formed: they may find a home in a muscle where they are effectively unnoticed, or they may set up shop in the brain, where they can cause inflammation or seizures.

The Taenia cysts are also more troublesome in general than the Toxoplasma cysts, since the tapeworms aren't inactive in their cysts: they soak up nutrients from the host and grow, in the meantime defending themselves from immune system attacks. The tapeworm can produce chemicals that neutralize complement molecules and can similarly frustrate destructive chemicals released by some of the immune system's white cells. Like Leishmania, the tapeworm also produces cytokines to skew the adaptive immune system away from producing killer T cells to producing antibodies -- with some researchers suspecting that the tapeworm actually digests the antibodies, using them as nourishment. However, the tapeworm ages as does any organism, and if it doesn't end up in a proper host, sooner or later its ability to spoof the immune system will falter. The immune system may then mount a violent attack that can cause severe inflammation, possibly killing the host.

* Blood flukes similarly have schemes to distract the immune system. When young flukes first penetrate the skin, they attract attention from the immune system, which recognizes their coat chemistry. To evade the immune system attacks, the flukes then switch their coats to a new one -- which has the nasty feature that it incorporates surface molecules from host cells, which means the host immune system sees the flukes as "self" and leaves them alone. Exactly how the flukes perform this trick is not entirely clear, but it has been demonstrated that flukes taken from one monkey, where they are clearly at home, and injected into another monkey are quickly slaughtered by the new host's immune system, which recognizes them as "nonself".

Blood flukes also can neutralize complement, and it is believed that they may be stealing the neutralizing chemical from the host itself. Indeed, the blood flukes are so dependent on the host immune system that the parasites cannot function properly if the host's immune system is compromised. Blood flukes lay eggs in veins and provoke an immune response that ends up driving the eggs into the intestines. In immunocompromised hosts, such as patients with AIDS, the flukes are effectively at a dead end.

Blood flukes, however, often do not cause their hosts much trouble. Like Toxoplasma, they can manipulate the immune system to perform population control -- provoking an immune response that is generally harmless to adults but fatal to young fluke larvae. [TO BE CONTINUED]

* GIMMICKS & GADGETS: The history of efforts to build vertical take-off & landing (VTOL) aircraft is long and troubled. The goal of building a machine that can go straight up and down like a helicopter and then fly as fast and far as a fixed-wing aircraft is tempting. Unfortunately, the effort has tended to produce machines that are inferior to helicopters as helicopters and inferior to fixed-wing aircraft as aircraft, and generally too complicated and expensive as well. There have been some successes, like the Harrier jump-jet, that fit into a specific niche between helicopter and fixed-wing aircraft, but there were far more programs that went nowhere.

Bell Helicopter and Boeing collaborated on the MV-22 Osprey tilt-rotor machine, which has a propeller pod on each wingtip that can be rotated up for vertical flight and turned flat for horizontal flight. The Osprey has entered service but had a very lengthy, painful gestation -- the tilt-rotor concept was invented by the Germans in World War 2 but it took half a century to get a tilt-rotor machine into production -- and still remains the target of critics.

Bell is now working on a concept for a "Hybrid Tandem Rotor (HTR)", which in a sense goes back to the late 1930s and 1940s for inspiration. Many of the early helicopters in those days used a side-by-side rotor configuration, with a rotor on a boom to each side of the fuselage. That configuration didn't catch on, but the HTR revisits it, envisioning what looks like an aircraft with a straight wing, featuring a turboshaft engine in a pod on each wingtip and a three-bladed helicopter rotor above each pod.

In terms of VTOL operation, the HTR is much more conservative than the Osprey, the HTR's only real trick being that it can hinge its wing up or down 15 degrees. The wing's hinged up for vertical flight, down for forward flight. In forward flight the lift from the wing offloads the rotors, providing greater speed and range. The HTR can be regarded as "VTOL on the cheap", improving on the range and speed of a conventional helicopter, with a modest increase in complexity and cost. It's not really in the same league with a true VTOL machine like the Osprey; whether its incremental advantages seem worth the bother to potential buyers remains to be seen.

* A WIRED.com report discussed how the US Defense Advanced Research Projects Agency (DARPA) is working on an ultra-slim digital camera system known as "Processing Arrays of Nyquist-Limited Observations to Produce a Thin Electro-Optic Sensor (PANOPTES)" -- Panoptes being a hundred-eyed watchman in Greek mythology.

A typical modern digital camera is just an old-fashioned film camera with an image sensor instead of film, technology that resembles the human eye. PANOPTES takes its cue from the compound eye of insects. The imager consists of an array of small, simple, cheap imaging sensors that are fitted with moveable micromirrors to adjust the focus of the array. A signal-processing subsystem controls the direction of the camera elements and splices together their output. PANOPTES promises a flat camera only 5 millimeters thick that could be attached to almost any surface, while providing high resolution and a wide field of view without use of bulky lenses.

The "adaptive" signal processing scheme permits the camera to zero in on "busy" interesting elements of a scene to provide more detail, diverting camera elements from "boring" background elements that don't require such detail. For example, if the camera takes a picture of an aircraft, most of the array elements will be focused on the aircraft while leaving the sky and clouds in relatively low resolution. That sounds like it might demand a lot of processing power, but it turns out that only about 15% of a typical image is "busy"; a standard signal processing chip can keep up with 60 frames per second. The initial application is for sensors for small unmanned aerial vehicles, but the number of other potential applications is obviously substantial.

* IEEE SPECTRUM reports that researchers at the US National Institute of Standards and Technology (NIST) in Gaithersburg, Maryland, have created a low-power, inexpensive, flexible memory device that operates as a "memristor" -- meaning it can "remember" the amount of current that has flowed through it, with the "memory" reflected in the device's resistance.

Memristors are seen as potentially useful for nano-electronic memories and neural networks. They were first suggested in 1971, but it wasn't until 2008 that researchers at Hewlett-Packard (HP) announced that they had built a working memristor. At that time, the NIST researchers were simply working on printed and flexible electronics, but on reading reports of the HP memristor, the NIST team noticed they were working along similar lines. Few at NIST were convinced that memristors were all that useful, but the attitude was that it wouldn't hurt to investigate a bit.

The NIST memristor is built by applying a precursor solution to a surface and then allowing it to absorb water from the air to form a solid gel of titanium dioxide (TiO2) that changes its state gradually as current is applied. The HP device was also based on TiO2; it is believed the memristor effect is due to a deficiency of oxygen atoms in the TiO2 matrix, with the "vacancies" in the matrix shifting as current is applied, resulting in a change in resistance.
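To make the idea of a resistance that "remembers" past current a little more concrete, here is a toy sketch. It is not a model of the NIST or HP devices: it simply assumes an internal state between 0 and 1 that drifts in proportion to the charge pushed through the device, with the resistance interpolated between two limits. The class name and every numeric value are hypothetical.

```python
class ToyMemristor:
    """Toy device whose resistance depends on the charge that has flowed through it."""

    def __init__(self, r_on=100.0, r_off=16_000.0, k=1_000.0, w=0.5):
        self.r_on = r_on    # ohms in the fully "on" state (hypothetical value)
        self.r_off = r_off  # ohms in the fully "off" state (hypothetical value)
        self.k = k          # state change per coulomb (hypothetical value)
        self.w = w          # internal state, kept within 0..1

    @property
    def resistance(self):
        # Interpolate between the two limits according to the internal state.
        return self.r_on * self.w + self.r_off * (1.0 - self.w)

    def apply_current(self, amps, seconds):
        # Charge moved = current * time; the state shifts with it and is clamped.
        self.w = min(1.0, max(0.0, self.w + self.k * amps * seconds))


m = ToyMemristor()
r_start = m.resistance
m.apply_current(1e-4, 1.0)    # push charge through in one direction
r_after = m.resistance        # resistance has dropped
m.apply_current(0.0, 10.0)    # no current: the state is simply retained
r_idle = m.resistance
m.apply_current(-1e-4, 1.0)   # reverse the current
r_back = m.resistance         # resistance returns toward its starting value
```

The point of the sketch is only the qualitative behavior: the resistance changes gradually while current flows, stays put when it stops, and moves back when the current is reversed.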

The NIST researchers see the device as useful as a digital or analog memory for medical applications. However, they admit they don't have a very good idea of how the device actually works and are working to get a better handle on it. They are also working on a new fabrication technology that could, in principle, lead to construction of such devices using a process that looks much like inkjet printing. Not only would that mean low-cost fabrication, it might even lead to cheap circuit fab units for home use.

* AGENT ARMIES: In the movie version of Tolkien's LORD OF THE RINGS, viewers were treated to realistic swarms of evil orc warriors, rendered by digital cinematography. As reported in an article in THE ECONOMIST ("Model Behavior", 7 March 2009), it turns out that the technology used to render orc armies has significant practical application.

The tools used in the movie were created by Massive Software of Auckland, New Zealand, and permitted scenes with up to a half million virtual "actors", all behaving in an independent and plausible fashion. Each character was modeled as a software "agent" with a set of simple behaviors and the capability to react to its virtual environment. Any orc, for example, could observe other fighters in its line of sight, and accordingly attack or flee. The result was chaotic and realistic battle scenes that didn't require specifically dictating the actions of the vast numbers of characters.

Nate Wittasek, leader of the Los Angeles Fire Engineering Group at the engineering firm Arup, found the battle scenes in THE LORD OF THE RINGS to be far more than just entertaining. Wittasek, who had been a firefighter, had a hunch that similar software could be used to model how the inhabitants of a building respond in a blaze, permitting safer design of large buildings. Computer models had been written for this purpose before, but they tended toward the inflexible and unrealistic. According to Wittasek, the traditional approach has been to simply model the individuals in crowds as mobile particles in a fluid. As Wittasek explained: "It assumes people behave like water flowing through a pipe. They move at constant flow rates, heading for the nearest exits. But that's not realistic."

Human behavior in such emergencies is actually very complicated -- the matter was discussed here in 2005 -- and not entirely rational. People fleeing a fire will generally try to leave a building by the same route they came in, unwilling to trust that a much shorter route they are not familiar with is a good risk. On hearing a fire alarm, there's a strong tendency to believe it's a false alarm until demonstrated otherwise. How people respond also depends on their age, size, and physical condition. Wittasek said: "We have all this great physics for figuring out how heat moves in a building, but what we lack is how people behave. If we understand that better, then we can inform our designs better."

Wittasek's group got in touch with Massive Software to get hold of the company's technology and put it to work modeling crowds trying to flee a burning building. In the Arup application, just as with the orcs, the people in the building take account of what other agents are doing around them, but unsurprisingly the rules are not the same in the two environments. One factor is that in a battle scene lasting a few minutes, the agents don't have a lot of time to learn from experience and they do not do so -- they deal with immediate stimuli and react accordingly, dodging blows from swords and axes and running if necessary. In a 45-minute evacuation scenario, the agents have to assess their environment and plan escape routes, demanding an extra level of sophistication from the software.

The Arup team has named their derivative system "Massive Insight". It displays simple stick figures moving through a three-dimensional environment. The figures are not the particulate entities of traditional crowd-analysis software -- for example, they possess human-like sight and hearing, allowing them to understand their immediate surroundings but not parts of the environment they haven't encountered before. They don't have legs but they behave as if they did, becoming bogged down in a stampede if others have fallen over. The agents also incorporate subtleties of behavior, with those who have ignored an alarm finally taking notice when they see others heading for the door. Agents can range from newcomers, unfamiliar with the environment and easily confused, to experienced residents who can help direct traffic out of the building. Random variation is built into the characters.
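The flavor of such agent-based modeling can be suggested with a very small sketch. This is not Massive Insight or anything derived from it; it is a made-up one-dimensional corridor in which each agent has its own walking speed, some agents ignore the alarm at first, and an agent that ignored the alarm takes notice once it sees somebody nearby heading for the exit. All names and numbers are assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    x: float         # distance from the exit, in meters
    speed: float     # meters moved per tick; varies from agent to agent
    alerted: bool    # has this agent decided the alarm is real?
    out: bool = False


def step(agents, sight=5.0):
    """Advance every agent by one tick."""
    # Positions of agents that were already heading for the exit this tick.
    movers = [a.x for a in agents if a.alerted and not a.out]
    for a in agents:
        if a.out:
            continue
        if not a.alerted:
            # An agent that ignored the alarm takes notice once it sees
            # somebody within its line of sight moving toward the exit.
            if any(abs(a.x - m) <= sight for m in movers):
                a.alerted = True
        else:
            a.x = max(0.0, a.x - a.speed)
            a.out = a.x == 0.0


def simulate(n=50, p_believe=0.2, seed=1, max_ticks=1000):
    """Return the tick at which everyone is out, or None if somebody never leaves."""
    rng = random.Random(seed)
    agents = [
        Agent(x=rng.uniform(1, 40), speed=rng.uniform(0.5, 1.5),
              alerted=rng.random() < p_believe)
        for _ in range(n)
    ]
    for tick in range(1, max_ticks + 1):
        step(agents)
        if all(a.out for a in agents):
            return tick
    return None


print(simulate(p_believe=1.0), simulate(p_believe=0.2), simulate(p_believe=0.0))
```

Nothing here is choreographed: changing the seed or the fraction of agents who believe the alarm gives a different run, and an agent standing beyond everyone else's path may never notice the evacuation at all.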

For the moment, Massive Insight is still in the testing phase, being trialed using a model of the recently updated Los Angeles County Museum of Art, with plans to use a model of a new Guggenheim museum in Abu Dhabi. So far, the software seems to be working very well. According to Wittasek: "[The agents'] actions aren't choreographed, so each time you run it you get different results. It's helping us to predict human behavior, as opposed to predicting flow."

Demetri Terzopoulos, a computer scientist at the University of California in Los Angeles, is constructing a software model along the same lines to analyze the behavior of commuters passing through Penn Station in New York City. The model's agents have memory, as well as a sense of time and an ability to plan ahead. An agent entering the station will typically go to a ticket office, stand in line to buy a ticket, and then may kill time watching a street performer if the train doesn't arrive right away. An agent that is running late, in contrast, gets pushy and runs around. The model incorporates considerations of behavior, such as what people do when somebody collapses -- the agents crowd around to help, and an agent that has seen a policeman nearby runs to get help.

One application of this model is in the development of CCTV systems for public places. Using real camera inputs for research raises privacy concerns, and so the model is being made available to researchers who are working on "smart" video systems. In a particularly interesting application, Terzopoulos ran the model using a simulation of the Great Temple of Petra in Jordan, visualizing how it was used. The exercise suggested the capacity of the temple had been greatly overestimated.

There's no need for such modeling to restrict itself to humans, of course. Massive Software has been working with BMT Asia Pacific, a maritime consultancy, to construct a model of shipping traffic in Hong Kong harbor. The agents represent ships, which are under the control of several individuals, with occasional acts of recklessness or incompetence factored in. The exercise is highly relevant, since there are about 150 collisions a year in the harbor. The specific goal is to determine traffic-management strategies to be used for an upcoming tunnel construction project, which will involve floating prefab tunnel sections and lowering them to the harbor floor.

Massive Software is similarly working with the University of Southern California and the Wrigley Institute for Environmental Studies, both in Los Angeles, to model animal behavior on Santa Catalina island off the California coast. One target of the effort is to develop effective culling strategies to deal with the island's population of bison, the beasts having been introduced there many decades ago for the making of a silent film, and having since overrun the landscape. Other animals will be modeled in the exercise. The more people play with agent-based software, the more applications are found, demonstrating the power and usefulness of the approach.

* SPACEX FLIES: The notion of "space commercialization" has been around for decades and has left a road littered with the wreckage of startup companies that discovered the hard way that their business plans were fantasies. To rephrase what W.C. Fields once said, the only way to make a small fortune in commercial space was to start with a big one. As reported by an article in AVIATION WEEK ("SpaceX Secret" by Irene Klotz, 15 June 2009), Elon Musk's Space Exploration Technologies (SpaceX) commercial space company seems to be bucking the trend -- it has actually turned a small profit for the last few years.

Musk, born in South Africa and now 38 years old, established the PayPal online service, selling out to become one of the world's richest men. Among his diverse interests is a long-time fascination with space exploration, and in 2001 he came up with a scheme to land a small experimental greenhouse environment on Mars. However, on investigating launch costs, he discovered they dwarfed the cost of the project itself: $60 million USD for a US Delta 2 medium booster, $10 million USD even for a refurbished Russian ICBM. That was vivid evidence for Musk of how launch costs were the chokepoint in space activities.

As the saying goes: if you want something done right, do it yourself. Musk saw no reason why boosters should be so expensive and figured he could do a better job of it, starting up the California-based SpaceX in 2002 with $100 million USD of his own money. The company's first product was the two-stage "Falcon 1" booster.

The Falcon 1 is powered by liquid oxygen and kerosene (LOX-RP), with a length of 21.3 meters (70 feet) and capable of putting a 670-kilogram (1,480-pound) payload into low Earth orbit (LEO). The first stage is powered by a single Merlin rocket engine, providing 533 kN (54,420 kgp / 120,000 lbf) thrust; the first stage deploys a parachute after being dropped to allow it to be recovered and refurbished for a second flight. The second stage is powered by a single restartable Kestrel rocket engine, providing 30.7 kN (3,130 kgp / 6,900 lbf) thrust. The Kestrel is a pressure-fed engine, as opposed to the Merlin, which is driven by turbopumps; pressure-fed engines tend towards the cheap and simple but can't deliver as much thrust, since the fuel flow rates are lower.

Arrangements were made to launch the Falcon 1 from Kwajalein Atoll in the Marshall Islands, leveraging off pre-existing US government launch facilities there. The first shot was on 24 March 2006 and was a failure -- disappointing, no doubt, but hardly unexpected given that boosters rarely if ever fly right the first time around. The following two shots were failures as well, but the fourth shot, on 28 September 2008, was a success, though the booster only carried a dummy payload. The most recent shot, on 13 July 2009, successfully placed the Malaysian RazakSat Earth remote sensing satellite into orbit. Cost for a Falcon 1 flight is roughly $8 million USD. The next launch, in 2010, will be of a "stretched" derivative, the "Falcon 1e", which will provide over twice as much lift capacity.

* SpaceX is also working towards flight late this year of the much more powerful "Falcon 9" booster, which in its base configuration will be able to put 10,450 kilograms (23,040 pounds) into LEO or 4,540 kilograms (10,010 pounds) into geostationary Earth orbit (GEO), for about $40 million USD. Like the Falcon 1, the Falcon 9 will have two stages. The first stage will have nine Merlin engines; the second stage will have one Merlin engine and will leverage off the design and elements of the first stage. It will be followed by a "Falcon 9 Heavy" booster, with three clustered first stages that will triple the payload, and will cost about $80 million USD. The company is setting up Falcon 9 shots at Space Launch Complex 40 ("Slick Forty") at Cape Canaveral, originally built for Air Force Titan boosters.
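The prices and LEO payloads quoted for the two boosters can be turned into a rough cost per kilogram. The sketch below uses only the figures given in the text (roughly $8 million USD and 670 kilograms for the Falcon 1, about $40 million USD and 10,450 kilograms for the base Falcon 9); it assumes each booster flies with a full payload, which is an idealization, and the function name is made up for illustration.

```python
# Rough cost per kilogram to low Earth orbit, from the figures in the text.
# ASSUMPTION: each booster flies fully loaded.
BOOSTERS = {
    "Falcon 1": {"price_usd": 8e6, "leo_payload_kg": 670},
    "Falcon 9": {"price_usd": 40e6, "leo_payload_kg": 10_450},
}


def cost_per_kg(price_usd, payload_kg):
    """Launch price divided by the mass delivered to orbit."""
    return price_usd / payload_kg


costs = {
    name: cost_per_kg(b["price_usd"], b["leo_payload_kg"])
    for name, b in BOOSTERS.items()
}

for name, usd_per_kg in costs.items():
    print(f"{name}: about ${usd_per_kg:,.0f} USD per kilogram to LEO")
```

On these numbers the larger booster comes out at roughly a third of the smaller one's cost per kilogram, which suggests why the company is pressing ahead with the Falcon 9.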

Musk makes no show of modesty for SpaceX's offerings, saying: "We're the lowest prices on the market for comparable capabilities." He points out that the Falcon boosters are even cheaper than those offered by China and India, proclaiming in good entrepreneurial fashion that such government programs are "always much less cost-effective than commercial."

Musk believes that a lean, high-quality engineering team can get rockets flying quickly, reliably, and at relatively low cost. The SpaceX approach is inclined to the path of what has been called the "big dumb booster (BDB)", opting for simplicity and low cost instead of the highest performance. As BDB advocates have long pointed out, it is not so important that a booster have the highest "payload fraction": it does not matter much if the booster can put only 2% instead of 3% of its launch mass into space, if the BDB only costs half as much.
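The payload-fraction argument can be worked through with a few lines of arithmetic. The launch mass and baseline price below are hypothetical placeholders (only their ratio matters); the 2% and 3% payload fractions and the "half as much" cost come from the text, and the two boosters are assumed to have the same launch mass.

```python
def cost_per_kg_to_orbit(booster_cost, launch_mass_kg, payload_fraction):
    """Booster cost divided by the payload mass actually delivered."""
    return booster_cost / (launch_mass_kg * payload_fraction)


LAUNCH_MASS_KG = 100_000   # HYPOTHETICAL: same launch mass for both boosters
BASELINE_COST = 50e6       # HYPOTHETICAL: price of the high-performance booster

high_performance = cost_per_kg_to_orbit(BASELINE_COST, LAUNCH_MASS_KG, 0.03)
big_dumb_booster = cost_per_kg_to_orbit(BASELINE_COST / 2, LAUNCH_MASS_KG, 0.02)

ratio = big_dumb_booster / high_performance
print(f"BDB cost per kilogram is {ratio:.0%} of the high-performance booster's")
```

The placeholder values cancel out of the ratio: half the cost divided by two-thirds of the payload leaves the BDB at three-quarters of the cost per kilogram, in spite of its poorer payload fraction.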

To this end, the Falcon boosters only use LOX-RP propulsion, that being the cheapest and longest-established space launch rocket engine scheme; using different propulsion schemes for different booster stages tends to jack up design and handling costs. Another design intent is to compensate for failures: the Falcon 9 first stage, with its nine engines, can lose an engine and still accomplish its mission. As Musk points out, traditional booster designs are leveraged off "legacy" hardware, much of it originally built for military requirements, that was never really designed for low cost, and a "clean sheet" approach to booster design obviously can improve on matters.

SpaceX takes something of a small-business attitude to its working procedures, salvaging old fuel storage tanks and the like from NASA facilities. In addition, the company has a keen awareness of the potential of 21st-century tech. When the company put out a request for bids on two overhead cranes to handle the Falcon 9, the low bid was about $2 million USD. That seemed very steep, and on investigation it turned out that the cranes were designed according to long-standing safety standards that implied a good deal of expensive boilerplate. The same functionality can now be implemented with digital smarts instead, and the company bought the two cranes for $300,000 USD.

Although SpaceX has only successfully flown one payload, the company has two dozen launch reservations, with customers including ATSB of Malaysia, Avanti Corporation in the UK, MDA Corporation in Canada, the Swedish Space Corporation, and CONAE of Argentina. However, although it may be tempting to see SpaceX as a David outmaneuvering bureaucratic giants like the US National Aeronautics & Space Administration (NASA), in fact NASA is SpaceX's biggest customer, holding half the launch reservations, envisioning use of the Falcon 9 to haul cargo to the International Space Station after the retirement of the space shuttle in 2010.

Musk has his sights set on bigger ambitions as well, with SpaceX now lobbying to develop a "man-rated" derivative of the Falcon 9 to launch the company's "Dragon" space capsule, which can carry cargo or, with some additional work, four astronauts. Who knows? Maybe after SpaceX is flying missions on a steady basis, Musk can spend a chunk of his fortune and send a greenhouse to Mars after all. It might have been the long way around to build his own booster just to shoot for Mars -- but the long way around can sometimes be the most rewarding.

* THE ORIGINS OF FLOWERS (2): Although the fossil record for the emergence of the angiosperms is sketchy, biologists assume that they branched off from the nonflowering seed plants, the gymnosperms, which were dominant 200 million years ago. Modern gymnosperms include conifers, ginkgoes, and the cycads, with their stout trunks and large fronds. Before the angiosperms arrived, the gymnosperms were much more diverse, including the cycadlike "Bennettitales" and the woody plants known as "Gnetales" -- a handful of which, including the joint firs, still survive. The "seed ferns" were also common during the Jurassic period, but they have disappeared; they included a species named Caytonia that had precarpel-like structures.

There has been considerable discussion over the relationships of these groups and their relevance to flower evolution. In the 1980s, the fact that both the Bennettitales and the Gnetales group their male and female organs together in what might be thought of as a "preflower" suggested they were really in the angiosperm camp, and they were clustered with the angiosperms under the label of "anthophytes". However, further investigation of the "preflowers" has suggested they are misleading, and genetic analysis of the living Gnetales tends to cluster them more with traditional gymnosperms than with angiosperms. Right now, paleobotanists admit to being confused.

What is unambiguous is that the angiosperms were a huge evolutionary success. Seed ferns and other gymnosperms arose about 370 million years ago and dominated the Earth's plant life for 250 million years. Then, in a few tens of millions of years, the angiosperms took over the dominant position; today, almost 90% of land plant species are angiosperms.

The exact timing of the rise of the angiosperms is debated, as are the reasons for their dominance. Molecular clock analyses place the origins of the angiosperms back to 215 million years ago; the problem is that molecular dating methods are regarded as dodgy if not backed up by fossil or other "calibrations" and the 215 million year figure is not taken too seriously. However, it does suggest that flowering plants have been around for a lot longer than generally believed, undergoing evolution as small organisms in a constrained niche until they performed an evolutionary "breakthrough" and took over. That would help explain why the search for intermediates has proven so difficult.

But why did they finally take over? Obviously, interactions with pollinators helped spread angiosperm genes; gymnosperms, dependent on more haphazard pollination methods such as dumping pollen to the winds, tend to be clustered in stands, and are slow to spread geographically. Another notion is that reproduction by flowers encouraged cross-breeding and genetic diversity, allowing angiosperms to evolve more quickly than gymnosperms. Both of these factors may have been important; the general consensus at the present is that angiosperms had a number of features that contributed to their success.

Biologists are a bit frustrated by the long-standing difficulties in penetrating the past of flowering plants, but the belief is that work is gradually converging towards a solution, and that matters won't be so confused in another decade. As one of them says: "The mystery is solvable." [END OF SERIES]

* THE PARASITES (14): Another, if peculiarly inverted, example of how parasites spoof the immune system is provided by the protozoan Toxoplasma gondii, a relative of the Plasmodium parasite. Toxoplasma is little known, mostly because it usually doesn't cause a human host much trouble -- which is fortunate, since roughly a third of the world's population is infected by it, with thousands of such parasites in their brains. In parts of Europe everyone's infected, and there's a fair chance that people reading this article are hosting it and don't notice.

Oddly, despite the fact that Toxoplasma infects billions of humans, we aren't the parasite's normal host. Its primary target is the cat family; the parasite has a life cycle that alternates between cats and their prey. Cats pick up the parasite from their prey and deposit the egglike "oocysts" of the parasite in their droppings. The oocysts can survive for years until they are picked up by the prey species, beginning the cycle again. Another interesting feature of Toxoplasma is that, unlike its Plasmodium relative, it is not picky about the cells it infects; it can infect almost any kind of cell.

Once Toxoplasma invades a cell in a prey species, it starts feeding and reproducing, ultimately producing 128 copies of itself that break out of the host cell and then infect other host cells. However, after a few generations, the parasites seal themselves into hard-shelled cysts, with a few hundred parasites per cyst. Every now and then a cyst bursts open and the parasites go back to infecting cells, but they quickly seal themselves in cysts again. Effectively, the parasites have gone dormant, simply preserving themselves for years, waiting for the day when their host becomes cat food. Once in a cat, the cysts burst open, the parasites replicate rapidly, forming males and females that then produce oocysts, which are spread by cat droppings.

Humans end up acquiring Toxoplasma parasites by ingesting a speck of contaminated soil or the meat of an infected animal. A human is, as far as the parasite is concerned, just another intermediate host: the parasites reproduce rapidly, giving the human the equivalent of a case of light flu at the worst, but then go dormant in their cysts and cease being a problem. It's a dead end for the parasites, of course, since these days humans are very unlikely to be ingested by a cat.

Once in cysts, the Toxoplasma parasites are effectively protected from the immune system, so it would seem that they have no particular biological motive to manipulate the immune response. In fact, they do -- but they stimulate the immune system to attack their own kind. Cats are Toxoplasma's targeted hosts; cat prey species are just a vehicle that the parasites ride to get from cat to cat. If the Toxoplasma parasites run amok in a prey species host and kill it off, they never get into a cat, and that's the end of them. To keep the infection in check, the parasites release cytokines that activate macrophages, which kill all the Toxoplasma protozoans not sealed inside cysts. When a cyst bursts open every now and then, the infection is quickly suppressed.

This is all very neat, other than for the fact that Toxoplasma is wasting its time infecting a human. No matter; the protozoan oocysts are spread around, to be picked up indiscriminately by a range of species, some of which will end up being cat food. If the others don't -- well, those are the breaks for the parasite, but the system still works overall.

Unfortunately, though we are a dead-end intermediate host for Toxoplasma, it can still represent a threat to humans. It is very dangerous for a pregnant woman to become infected with the parasite, because her fetus has no operational immune system of its own. The fetus is partly protected by the mother's womb and also by antibodies passed over from the mother, but it is not protected by the mother's other immune system components, since they would be likely to recognize the fetus as "nonself" and kill it. If the fetus is infected with Toxoplasma parasites, the attempts by the invaders to restrain their own infection are futile. The parasites will replicate out of control and the fetus may end up brain-damaged, suffering "collateral damage" from a misdirected attack.

Toxoplasma is also dangerous in humans with compromised immune systems, the most prominent example being patients with the "acquired immune deficiency syndrome (AIDS)", the infamous viral infection that destroys the immune system. An AIDS patient may well have a dormant Toxoplasma infection; when a cyst bursts open to recharge the immune system, there is no immune system to recharge, and the parasite replicates out of control. Victims may suffer brain damage and then die. However, once a host's immune system is destroyed, Toxoplasma is only one of many normally harmless pathogens that become killers, and the host has no future in any case. [TO BE CONTINUED]

* SPACE NEWS: Space launches for July included:

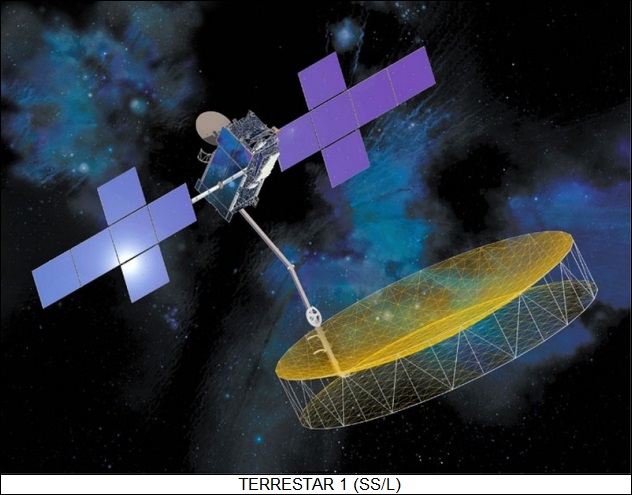

-- 01 JUL 09 / TERRESTAR 1 -- An Ariane booster was launched from Kourou in French Guiana to put the "TerreStar 1" geostationary comsat into space. It was intended to support mobile communications. It was built by Space Systems / Loral and was based on the SS/L 1300 comsat platform. It featured a folding antenna with a diameter of 18.3 meters (60 feet), with the payload system providing 500 spot beams. It was the biggest comsat launched to that time, with a launch mass of 6,910 kilograms (15,235 pounds), and had a design life of 15 years. The spacecraft was placed in the geostationary slot at 111 degrees West longitude to cover most of North America.

-- 06 JUL 09 / COSMOS 2451,2452,2453 -- A Rockot booster was launched from the Russian Plesetsk northern cosmodrome to put three classified military payloads into orbit. They were designated "Cosmos 2451", "Cosmos 2452", and "Cosmos 2453", and were believed to be Rodnik-class comsats, the Rodnik being an improved version of the old Strela-3 series.

-- 13 JUL 09 / RAZAKSAT -- A SpaceX Falcon 1 booster was launched from Kwajalein Atoll in the Marshall Islands to put the Malaysian "RazakSat" Earth observation satellite into orbit. It was the first successful launch of an operational payload by the Falcon 1. The 180-kilogram (400-pound) RazakSat satellite carried a camera system with a grayscale resolution of 2.5 meters (8.2 feet) and a color resolution of 5 meters (16.4 feet).

-- 15 JUL 09 / SHUTTLE ENDEAVOUR -- The NASA space shuttle Endeavour was launched from Cape Canaveral on "STS-127", an International Space Station (ISS) support mission. This was the 127th shuttle mission, the 23rd flight of Endeavour, and the 29th shuttle mission to the ISS. There were seven crew, including:

The main objective of the mission was delivery of an external experimental platform, the "Exposed Facility", for the Japanese Kibo ISS module. The flight also carried an Integrated Cargo Carrier for various ISS supplies, with the crew installing new batteries on an ISS solar array and performing other station maintenance tasks. In addition, two pairs of tiny "picosatellites" were deployed from the shuttle before its return to Earth:

Endeavour landed at Kennedy Space Center on 31 July after 15 days 16 hours 45 minutes in orbit. Wakata had spent over 137 days in space. Only seven more shuttle flights were planned before retirement of the system.

-- 21 JUL 09 / COSMOS 2454, STERKH 1 -- A Kosmos 3M booster was launched from Plesetsk to put the "Cosmos 2454" and "Sterkh 1" satellites into orbit. Cosmos 2454 was a Parus military navigation / communications satellite, while Sterkh 1 was a relay spacecraft for the COSPAS-SARSAT rescue beacon system.

-- 24 JUL 09 / PROGRESS 34P -- A Soyuz booster was launched from Baikonur to put the "Progress 34P" tanker-freighter spacecraft into orbit on an International Space Station (ISS) supply mission. It docked to the ISS Zvezda module aft port on 29 July.

-- 29 JUL 09 / SMALLSATS x 6 -- A Dnepr booster was launched from an underground silo at Baikonur in Kazakhstan to put an international payload of six smallsats into orbit. The payloads included:

The Dnepr was a converted R-36 (NATO SS-18 Satan) intercontinental ballistic missile.

* OTHER SPACE NEWS: One of the exciting bits of space news this last month concerned images taken by the NASA Lunar Reconnaissance Orbiter (LRO) of the Apollo landing sites on the Moon -- just in time for the 40th anniversary of the first manned landing. The images were fairly low resolution; however, the best of the set not only clearly revealed the base of the Apollo Lunar Module, but even the path trod by the astronauts wandering around. LRO will revisit the landing sites and take images with twice or even three times as much resolution.

There was some buzz online on what the "Moon landing was a hoax" crowd would think of the images, but everyone who asked that question immediately answered: "They'll claim the new images were faked." When people have one-track minds, it's not hard to see them coming and going.

* NEXT-GENERATION ICS: As reported in an article in AAAS SCIENCE ("Is Silicon's Reign Nearing Its End?" by Robert F. Service, 20 February 2009), one of the latest Intel dual-core processor chips, codenamed "Penryn", contains a staggering 820 million transistors, each a few tens of nanometers across, operating at a maximum clock rate of about 3 gigahertz. From a perspective of half a century ago, when the integrated circuit (IC) was just emerging, Penryn would seem like science fiction, but from those early days silicon-based IC technology has advanced by "Moore's law", doubling in density about every two years.
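To get a feel for what "doubling about every two years" adds up to over half a century, here is a minimal back-of-the-envelope sketch. It assumes a perfectly steady two-year doubling period, which real chip generations only approximate, and the function name `density_growth` is purely illustrative.

```python
def density_growth(years, doubling_period_years=2.0):
    """Factor by which density grows after `years`, assuming steady doubling."""
    return 2.0 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Fifty years at one doubling every two years is 25 doublings.
    print(f"Growth over 50 years: {density_growth(50):,.0f}x")
```

Twenty-five doublings works out to a factor of roughly 33 million, which is why a chip like Penryn would have looked like science fiction to the IC pioneers.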

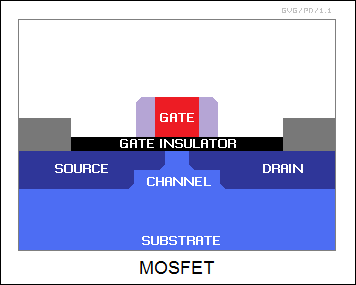

The problem is that diminishing returns have begun to set in. As devices grow ever smaller, the laws of physics increasingly impose limits on what can be done, forcing engineers to add ever more subtle tweaks. In 1990, a chip could be made with 15 different elements; now it requires 50, with a large number of processing steps and small features added to make sure the MOSFET configuration works. Some gains are still possible with silicon IC technology, but the general consensus is that matters are going to have to be rethought, with a range of options being considered.

The traditional device for building large-scale digital ICs is the "metal oxide semiconductor field effect transistor (MOSFET)". Fabrication starts with a pure single-crystal silicon substrate. Pure silicon is not a very good conductor, but regions of the chip can be made conductive by adding "dopants" like boron or phosphorus, using masking techniques not so conceptually different from silkscreening. To build a MOSFET, two "islands" of doped conductive silicon named the "source" and "drain" are laid down on the chip very close together, with the gap between them known as the "channel". An insulating layer is laid down over the channel, and a conductive component known as a "gate" is laid down over that layer. The source, drain, and gate are all wired into the overall circuitry by conductive traces laid down on top of the chip.

The MOSFET acts as a switch. A voltage is placed between source and drain, but no current normally flows through the nonconductive channel. If a voltage is placed on the gate, the channel becomes conductive and a current flows. In the digital electronics world, the flowing current represents a binary "1"; the absence of a current represents a binary "0".

The insulating layer between the gate and the channel has traditionally been made of silicon dioxide (SiO2). It is a very good material for the purpose because its structure mates neatly with the structure of the underlying silicon substrate. However, of late it has become a bottleneck. As transistors shrank, the SiO2 layer had to be reduced to a thickness of one nanometer (nm), or only about three atoms thick -- and electricity tended to leak right through it. The answer, it seemed from the mid-1990s, was to find a new gate insulator material that would be a better insulator even when it was only a nanometer thick. The insulating properties of a material are defined by its dielectric constant, given by the Greek letter kappa and usually just referred to as "k". The initial belief was that some "high-k" material could be found that could substitute for SiO2, and that would do the job. A candidate, hafnium dioxide (HfO2), seemed like it would do the trick.

However, it wasn't a question of just making one change. The HfO2 didn't neatly interface with the gate material, which at the time was made of polycrystalline silicon. The solution was to go to titanium-based alloys, but they had problems with some of the high-temperature IC manufacturing steps. Intel finally managed to get the scheme to work and started shipping chips with it in 2008. The disturbing thing was that this step took a decade to figure out when the steps leading up to it had taken a few years each.

The next step is to go from dimensions of 45 nm to 32 nm -- the distance being defined as that between two memory cells on a chip -- but SiO2 is presenting an obstacle again. What happens is that when the HfO2 insulating layer is laid down on silicon, some of the oxygen in the layer combines with silicon and forms an SiO2 layer. It actually makes for a better transistor at 45 nanometers, but at 32 nanometers the SiO2 layer causes trouble. Intel has worked on the problem and solved it, with 32 nm chips in the works.

* Beyond 32 nanometers is 22 nanometers, and at that point hafnium dioxide becomes problematic. The straightforward answer is to go to a material with an even higher dielectric constant. A number of options have been investigated, with the most promising high-k material being crystalline lanthanum lutetium oxide, with a k of 40 -- ten times that of SiO2 and almost twice as much as HfO2. However, the more elements in a compound, the harder it is to lay it down in a way that is compatible with the chip.
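The appeal of a higher k can be put in rough numbers. Treating the gate stack as a simple parallel-plate capacitor, capacitance per unit area goes as k divided by thickness, so a high-k layer can be physically thicker than an SiO2 layer while giving the same capacitance. The sketch below is only an idealized illustration: the SiO2 value of 4 is implied by the "ten times" figure above, the HfO2 value of 22 is an assumption (the text only says 40 is "almost twice" it), and the function name is hypothetical. It also ignores any interfacial SiO2 layer of the sort described earlier.

```python
K_SIO2 = 4.0     # implied by the text: 40 is "ten times that of SiO2"
K_LALUO3 = 40.0  # lanthanum lutetium oxide, from the text
K_HFO2 = 22.0    # ASSUMPTION: the text only says 40 is "almost twice" HfO2


def matching_thickness_nm(sio2_thickness_nm, k_new, k_sio2=K_SIO2):
    """Thickness of a high-k layer with the same capacitance per unit area
    as an SiO2 layer of the given thickness (ideal parallel-plate model)."""
    return sio2_thickness_nm * k_new / k_sio2


if __name__ == "__main__":
    for name, k in (("HfO2", K_HFO2), ("LaLuO3", K_LALUO3)):
        t = matching_thickness_nm(1.0, k)
        print(f"{name}: {t:.1f} nm matches 1.0 nm of SiO2")
```

Under these assumptions, a layer doing the job of one leaky nanometer of SiO2 could be several nanometers thick, giving the current far more material to get through.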

Another option is to change the geometry. The traditional MOSFET is "planar", laid flat relative to the substrate, but there's no reason other than processing complexity that it can't be laid out vertically, with the channel arranged so that the current flows between top and bottom instead of from one side to the other. This configuration has the advantage that the channel could be surrounded on three sides by dielectrics and gate materials. That would give the option of providing multiple gates, which would not only help ensure better control over switching, but also permit "partly on" operation by selecting a subset of the gates. Academics have been playing with such devices, known as "finFETs" -- due to the fact that the vertical silicon channel looks a bit like a fish's fin -- for years. Now the big commercial players are moving in, with a consortium set up by Toshiba, IBM and Advanced Micro Devices (AMD) reporting they have developed the smallest finFETs to date, half the size of the previous record, with high-k dielectrics and metal gates. However, the group says the technology isn't robust enough for manufacture just yet.

* The step to 15 nanometers is even worse, and at that point the general consensus is that silicon won't be able to do the job. The most attractive candidates are the semiconductor alloys known as "III-V" materials for the positions of their components in the periodic table of the elements. (Silicon is in column IV.) Examples include gallium arsenide (GaAs), indium gallium arsenide (InGaAs), and indium antimonide (InSb), which have higher "carrier mobilities" than silicon -- that is, currents flow through them faster than through silicon.

III-V materials have been in use for decades, with GaAs common in high-speed radio communications chips, while compounds like InGaAs are used in optoelectronic components like LEDs and semiconductor lasers where silicon just can't do the job. The problem is that it is not easy to build complicated ICs with III-V technology -- it's impossible to build large wafers of III-V compounds, and so devices made of such materials are laid down on silicon substrates. That wouldn't be such a problem in itself except that the crystalline structures of III-V materials and the silicon substrate don't mesh with each other very well, resulting in defects that have proven very hard to deal with.

III-V compounds have another problem involving charge carriers. As mentioned, pure silicon is not very conductive and dopants have to be added to make it so. Pure silicon is a neat crystal with four chemical bonds to each atom, forming a tight tetrahedral lattice where currents don't flow easily; the situation can be modeled as a flat box loaded with ball bearings so that they tightly fit together in a bed and don't move around. Two classes of dopants can be added to make it conductive:

A logic switch in a modern digital chip is built around two MOSFETs, one using an n-type channel and the other using a p-type channel. The two MOSFETs are controlled by the same input voltage signal; they operate in an entirely reversed fashion, one turning on when the other is off and the reverse. This scheme, known as "complementary MOS (CMOS)", ensures that the switch either is completely on or off, meaning little power is dissipated by the switch itself. That not only reduces the power required by the chip, it also keeps it from melting down. Holes work almost as well in silicon as electrons do, but III-V compounds handle electrons far better than they handle holes. That makes it very difficult to build a large-scale III-V chip that won't melt itself down.
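The complementary behavior can be sketched as a toy logic model. This is not a circuit simulation: it treats each MOSFET as an ideal on/off switch and assumes the standard textbook inverter wiring (p-type device between the supply and the output, n-type device between the output and ground), which the discussion above doesn't spell out. All function names are illustrative.

```python
def nmos_conducts(gate_high):
    """Ideal n-type MOSFET: conducts when its gate is driven high."""
    return gate_high


def pmos_conducts(gate_high):
    """Ideal p-type MOSFET: conducts when its gate is driven low."""
    return not gate_high


def cmos_inverter(input_high):
    """Return True if the output is high for the given input level."""
    pull_up = pmos_conducts(input_high)    # path from supply to output
    pull_down = nmos_conducts(input_high)  # path from output to ground
    # In steady state exactly one path is on, so there is never a direct
    # supply-to-ground path wasting power.
    assert pull_up != pull_down
    return pull_up


if __name__ == "__main__":
    for level in (False, True):
        print(f"input={int(level)} -> output={int(cmos_inverter(level))}")
```

The point of the pairing shows up in the internal check: for either input level, one device is on and the other is off, so the switch never sits half-open burning power.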

There has been some progress in working with III-V compounds. Intel researchers have figured out how to lay down InGaAs transistors on a silicon wafer using a buffer layer to mate the two dissimilar materials, but work on developing p-type III-V transistors has been going nowhere in any hurry. There may be a workaround, using germanium (Ge), a material once commonly used in high power transistors. Germanium isn't a III-V material but it seems relatively compatible with them, and work is underway on integrating Ge and III-V devices on the same chip.

* Even if such schemes don't pan out, or if they run out of steam in a generation or two, researchers have plenty of other ideas for entirely new configurations of devices, based on III-V "nanowires", the single-layer carbon sheets known as "graphene", and carbon nanotubes. Some are pessimistic about how much farther microelectronics can go before it finally runs into a wall, but given the potential payoff, nobody is prepared to give up just yet.

* GAS PAINS: As reported in an article on BBC.com ("Energy Fuels New 'Great Game' In Europe" by Richard Galpin), Russia controls the world's largest known deposits of natural gas, and the Russians know perfectly well these gas deposits give them clout. In an interview with the BBC, Alexander Medvedev, a senior official with the giant Russian energy company Gazprom, bluntly said that the nations of the European Union have to make up their minds about whether they want Russian gas or not: "Only three countries can be suppliers of pipeline gas in the long-term: Russia, Iran and Qatar. So there is no other choice than to deal with these suppliers. Europe should decide how to handle this situation, and if Europe doesn't need our gas, then we will find a way of selling it differently." My way or the highway.

Medvedev's comments reflected on the current EU scramble to find new supplies of gas following a crisis in January, when Russia shut down a key pipeline into Europe for two weeks in the course of a pricing dispute with the key transit country, Ukraine. The EU currently relies on Russia for a quarter of its total gas supplies; of the 27 EU member states, seven are almost entirely dependent on Russian gas.