* 22 entries including: JFK assassination, future directions in aviation, dysfunctional California direct democracy, recycling refrigerators, winter cold snaps not incompatible with global warming, bark and ambrosia beetles versus trees, fecal transplants, banning cars from city centers, lithium mining in Bolivia, product carbon footprinting, scanning forests with lidar for carbon surveys, and Archean impacts.

* NEWS COMMENTARY FOR JANUARY 2012: THE ECONOMIST reports that US employment statistics demonstrated a distinct uptick in December, with 200,000 more people back to work. Don't break out the bubbly, since 13 million Americans are still unemployed, compared to 6 million in 2006, before everything went south. Still, it's encouraging, and encouragement in itself can help the recovery.

If things continue to pick up, as long as Barack Obama does nothing seriously wrong, his re-election in November is a pretty good bet. Obama's leading his potential Republican challengers right now, which is somewhat surprising given the poor economy: incumbents tend to suffer when people don't have jobs. Obama may have inherited problems from the previous administration, but that's just the way things work; the current president is held responsible: "Sorry, Mr. President, I hate to be unsympathetic when you're wrestling with a big ugly monster, but that's just the way things are. The buck stops in the Oval Office."

Of course, by the same token it's difficult to fault the Republican contenders for hammering on Obama's handling of the economy. What else would they be expected to do? However, THE ECONOMIST points out that:

BEGIN QUOTE:

... Mr Obama can always draw a favorable comparison with his predecessor. At the time of the election, the president may be able to say (more or less accurately) that where the last Republican president oversaw the loss of 653,000 private-sector jobs while creating 1.7 million government jobs, he presided over a gain of nearly 800,000 jobs in the private sector while trimming back nearly half of the public-sector fat added by Mr. Bush. All that despite the socialism.

END QUOTE

The last sentence in that citation puts an edge on it. Picking on Obama for the economy is to be expected; calling him a socialist is silly. Indeed, as THE ECONOMIST reports, Obama is invoking that towering Republican Teddy Roosevelt, who in 1910 introduced his "Square Deal", intended to level the ground for the little guys against the big guys. Obama feels that's a concept he can sell the electorate, though he's fully aware of just how damaging the "socialist" label can be, saying: "This isn't about class warfare, this is about the nation's welfare."

Obama simply says he is after "fairness", and the well-off should pay no more than their "fair share". This message goes over much better with the public than "soak the rich", while the perceived Republican coddling of the wealthy does not -- though admittedly that perception has been muddled recently as Republican candidates snipe at the considerable wealth of the pack leader, Mitt Romney. Still, Obama has been in favor of raising taxes on the rich all the time he's been in office, and so far, the Republicans have not been harmed by their repeated frustrations of his efforts.

Obama is walking a fine line in playing the "Square Deal" card. Nobody can sensibly deny that America's rich are concentrating wealth and power in private hands while the not-so-well-off are falling behind, but there's a fuzzy border between "leveling the playing field", which can be sold to the public, and "income redistribution", which can't. Americans tend to be sympathetic to the rich, admiring success and hoping for it themselves; maybe more to the point, to the extent that the rich are distrusted, the government is distrusted more. In a time when public sentiment is so heavily dominated by the slogan of NO NEW TAXES, many people see raising taxes on the rich as just the entering wedge of an overbearing government raising taxes on everyone.

* Incidentally, I track odds on the election every now and then. It can be tricky to figure out the odds, however, since there are two odds schemes at work. The first is the familiar concept of odds as a ratio -- 7:5 for Obama versus 2:3 for Romney, though the odds are expressed as "5/7" and "3/2" respectively, the fraction giving the factor of return on the bet.

That's not a big deal to figure out, but the second scheme is puzzling. What does it mean that the odds on the Democrats are "-145" while the odds on the Republicans are "+125"? These are known as "money line odds" or "American odds", and they're based on a $100 USD stake. If the odds are negative, that's how much money has to be staked to win $100 USD, so "-145" means a $145 USD stake. If the odds are positive, that's the winnings from a $100 USD stake, so "+125" means winnings of $125 USD. Even odds are just "100"; either sign can be used, or no sign at all.
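The money line arithmetic is easy to check with a few lines of Python. This is only a minimal sketch: the function names are made up for illustration, and the "break-even chance" is simply the win probability at which a bet at the quoted odds would neither make nor lose money on average.

```python
from fractions import Fraction

def moneyline_to_fraction(line: int) -> Fraction:
    """Profit per dollar staked for a money line (American odds) quote."""
    if line == 0:
        raise ValueError("a money line quote cannot be zero")
    if line < 0:
        # Negative quote: stake |line| dollars to win $100.
        return Fraction(100, -line)
    # Positive quote: a $100 stake wins `line` dollars.
    return Fraction(line, 100)

def implied_probability(line: int) -> Fraction:
    """Win probability at which a bet at this quote breaks even."""
    profit = moneyline_to_fraction(line)
    return 1 / (1 + profit)

# The two quotes from the text, plus even odds.
for quote in (-145, +125, 100):
    frac = moneyline_to_fraction(quote)
    prob = implied_probability(quote)
    print(f"{quote:+d}: wins {frac} per dollar staked, "
          f"break-even chance {float(prob):.1%}")
```

The two break-even chances for "-145" and "+125" add up to a little over 100%; the excess is the bookmaker's cut.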

* The US military has now completed its formal withdrawal from Iraq. BUSINESS WEEK discussed the details of the departure, described as an "invasion in reverse". When the military tallied up its possessions in Iraq in September 2010, they came to about 2 million items at 92 bases, amounting to about 20,000 truckloads. As staggering as it was, it was done.

Most of the serious hardware was put to use at American military bases in the USA or overseas. Some of the excess ended up in the hands of American civil organizations, which only had to pay shipping costs to get their hands on an assortment of goods including bulldozers, all-terrain vehicles, and generators. Some of the kit that was too clapped-out was simply destroyed, but all the rest, as well as the bases once occupied by American troops, was handed over to the Iraqis, with some of the bases taken over by Iraqi security forces and some put into use for civil functions. The amount of material handed over to the Iraqis has been tallied as worth hundreds of millions of dollars, and the Pentagon feels that it all should be a great aid to the Iraqis.

* THE CALIFORNIA CONUNDRUM (4): As a footnote to this series, an article from THE ECONOMIST ("So Bad, It Could Get Better", 16 July 2011) inspected the dismal state of California's prisons. Nobody expects prisons to be pleasant, but California's are particular nightmares because of overcrowding and underfunding. This last spring, the US Supreme Court judged that California had to reduce the overcrowding and provide inmates with better health care.

California's prison system has been afflicted by all the evils that have plagued the governance of the state -- along with some evils unique to itself, thanks to a determination to get "tough on crime". It started in 1977 when Governor Jerry Brown signed a bill into law that directed fixed sentencing, limiting the discretion of judges and parole boards. That led to a political "race to the bottom" to get ever tougher with harsher sentencing.

The most notorious of these exercises was the "three strikes and you're out" ballot measure of 1994, in which felons convicted of a second crime get doubled sentences, while those convicted of a third crime get 25 years to life -- even if the crime is trivial. Other states picked up the idea, but California's remains the harshest. And who was so concerned about law and order to sponsor the "three strikes" initiative? The prison guards union, in search of job security for its members. How much "three strikes" has done to deter crime may be arguable; what isn't arguable is the way it's packed California's prisons with lifer inmates, not all of them really amounting to threats to society.

Along similar lines, six ballot measures conducted from 1978 to 2000 reintroduced and toughened the death penalty. The end result is that California has the country's most crowded death row, 714 inmates at last count -- but since appeals can take decades, there have been only 13 executions since the death penalty came back in 1978. In the meantime, 78 death row inmates have died from suicide, disease, or old age. Not only is the system clearly not working, it's frightfully expensive, with a price tag of $4 billion USD so far.

California is not alone in finding the death penalty to be more trouble than it's worth, with other US states backing away from it as well. There was a time when anyone who committed a serious crime was executed without many misgivings, but that time is long gone -- and as long as there are misgivings about it, the death penalty is going to demand special considerations and all the baggage that comes along with them. Talk of streamlining appeals is fatuous, since the irreversible nature of the death penalty demands a stronger appeals process, and appeals can't simply be waved away.

It's not really a question of whether the death penalty is right or not; the reality is that it's unworkable. Polls show that Californians still like the death penalty, but enthusiasm is on the fade. Don Heller, who actually wrote the 1978 ballot measure reintroducing capital punishment, has decided it's not such a good idea; now Heller and others want it replaced with life sentences without parole. A law to this effect is now working its way through the political machinery. "Three strikes" is also a target of reform; prosecutors used to like it, but it's ended up tying their hands so badly that they often use their discretion to dismiss cases when they can. An attempt to dump "three strikes" in 2004 failed, but polls now show 74% of California's voters are in favor of changing the law.

Getting tough on crime is all very well and good, but it's finally sinking in that mindless harshness is simply jamming up the justice system and doing more harm than good. A case might be made for harshness; not much of one can be made for stupidity. [END OF SERIES]

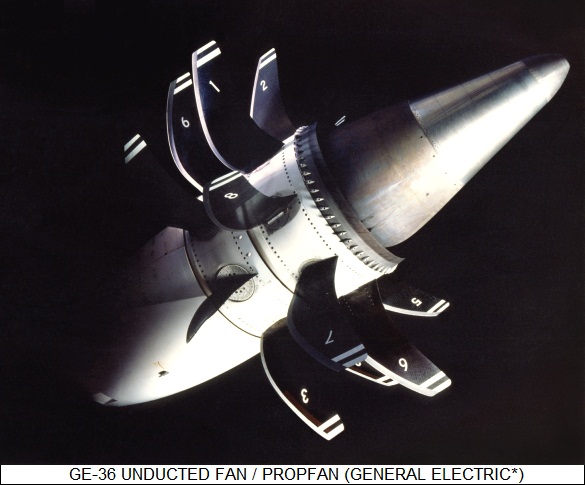

* FUTURE FLIGHT (2): Of course, new airframes aren't the whole story to improved airliners. Engines will play a considerable part as well. Back in the 1980s there was considerable work on more fuel-efficient engines, most prominently "propfans" AKA "unducted turbofans", which were conceptually somewhere in between turbofans with the duct removed and turboprops. Improvements in high-bypass ratio turbofans kept propfans off the market, though they did help lead to new fan-style composite-material propellers for traditional turboprop engines.

High-bypass turbofans have continued to be improved, the latest generation being "geared" turbofans with gearing systems to ensure fan rotation at optimum speed -- discussed here a few years back -- along with combustor systems capable of operating at very high temperatures, efficiency and clean combustion being generally proportional to engine temperature. However, propfans appear to be making a comeback of sorts, with several organizations now tinkering with new designs. So far, nobody's operationally flying aircraft powered by them.

Aircraft materials are another frontier of investigation. Much is made of the use of composite materials in the latest generation of aircraft, and indeed composites have proven extremely successful, permitting lighter and sturdier aircraft with improved and elegantly graceful aerodynamics. However, even the most advanced composite aircraft, like the Boeing 787 and Airbus A350, are only half-built from composites, the rest of the airframes being built with aircraft metals technology.

Partly this is due to inertia: aircraft builders are used to metals, have a body of experience with them, and due to safety-liability considerations have good reason to be conservative. The metals industry believes there is no great reason to change that mindset either, with an official of US aluminum giant Alcoa stating: "New alloys fit into the existing metallic infrastructure, and offer less risk and cost versus a new supply chain for composites. We can provide up to 10% weight saving, up to 30% less cost to manufacture, and be ready for the next single-aisle [airliner] entering service after 2015."

He adds that the corrosion and fatigue resistance of third-generation lithium-aluminum alloy is competitive with that of composites. Indeed, while everyone assumes that the next generation of aircraft will feature a mix of composites and metals, metals advocates believe that, at least in some cases, the ratio will shift back towards metals. Composites, while perfectly marvelous materials in themselves, are hobbled by their limited supply: there's simply not the capacity there to push out composite materials at the same rate and low cost as metals, at least for the present.

Some composite advocates still believe that metal use is going to decline, but that it will require rethinking aircraft design on the basis of composite construction, taking advantage of the distinct properties of the material to do things that can't be so easily done in metal. Composites not only suggest new approaches to airframe design, they also could streamline manufacturing, for example by using 3D printing technology. The advocates believe they can overcome industry conservatism by using advanced modeling and "immersive reality" systems to build "virtual aircraft" that would show just what composites can do.

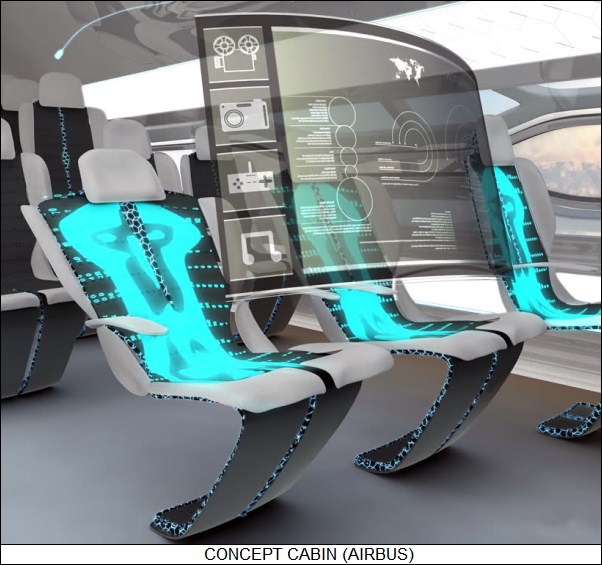

Finally, work continues on improved avionics systems, with future cockpits to feature wide-screen multimode displays with touch-sensitive input, holographic head-up displays, sidestick controllers, and voice input. None of these technologies are new, but there's plenty of room for improvement in capability and integration. Similarly, passenger electronic systems are a mature technology, but there's plenty of room for improvement in cost and capability, giving passengers more variety and options for inflight entertainment and communications.

Airbus Industries has performed an investigation named the "Concept Cabin" to envision next-generation passenger accommodations, featuring such ideas as seats that morph to a passenger to provide optimal comfort, or harvest energy from the heat and movements of the passengers to help power aircraft systems. A more radical suggestion is of walls that could become transparent on command to give passengers a grand view of the skies and landscape -- though that might frighten some passengers. [TO BE CONTINUED]

* GIMMICKS & GADGETS: Multispectral scanning -- observing a scene over multiple wavelength bands -- is nothing new, having been around for a fair amount of time in airborne and space sensor systems. Multispectral scans can provide information not available in a grayscale or straight color image. Now, according to THE ECONOMIST, researchers at the University of Oxford in the UK have developed a multispectral flatbed scanner.

The idea might seem baffling at first, few being able to think of why they'd want to scan pictures from books and magazines using multispectral techniques. Actually, the multispectral scanner was designed for a specific job: scanning ancient texts and the like. A multispectral scan of a classic parchment can reveal details that are almost impossible to see otherwise.

Multispectral scanning has been used on such items for over a decade, but previously it's been done with a camera mounted on a frame and using a rotating set of filters. The flatbed scanner is more compact and easier to use; it has a set of 6 or 12 light-emitting diodes, each tuned to a different wavelength from the infrared to the ultraviolet, with the scanner performing multiple sweeps over the target document, using a different LED each time. It is being commercialized by a firm named Oxford Multi Spectral. Applications include not only examination of classical documents, but also forensics, for example checking out counterfeit bills.

* My photography hobby has pretty much run out of steam; I still like to take pictures, but I ran out of interesting things to shoot. Now the tech blogosphere has been promoting a new camera that might give my photo hobby a bit of a shot in the arm: the "Tamaggo" 14-megapixel 360-degree imager.

I've only seen 360-degree images a few times. The first time I saw one, I didn't understand right away that the image is rotatable, that I could scroll around back and forth around the field of view. I got a little queasy doing it. I don't know if I'd ever take many 360-degree photos, but even a small number of them would be fun.

"Tamaggo" is Japanese for "egg", and that's what the Tamaggo is, a plastic egg with a lens dome on top and flat at the bottom, where there's an adjustable stand. If stood on its base, it takes a 360-degree image, but if held horizontally, it takes a wide-panorama shot. It has wireless and USB links for transferring imagery, and no doubt it comes with the proper viewing software. Or it will, it's not supposed to be available until mid-year. They're asking $200 USD, which I regard as very reasonable for what it's supposed to do.

I'll have to wait until the Amazon reviews give it a looking-over, however, in case it's really a piece of junk. If I do buy it, I can foresee one possible problem: the thing looks like a high-tech grenade, not a camera, and taking shots with it might get some people excited.

* I ran across a comment about the "bookcrossing.org" website from WIRED.com, and was intrigued enough to check it out. Got old books that don't have much resale value? Go to the BookCrossing website, register the book, get a download for a label to print out and stick on the book, and then release it into circulation. It could be released anywhere, but there are "Crossing Zones" in most towns where they can be dropped. When BookCrossers pick up a book, they can log doing so on the website, allowing it to be traced. BookCrossing is not new; it's been around for a decade, and claims to have going on 900,000 "Crossers" supporting a circulation of over 8 million books in 132 countries.

* RECYCLING REFRIGERATORS: The prospect that the chlorofluorocarbons (CFCs) AKA freons used as refrigerants in air conditioners and refrigerators might deplete the Earth's protective ozone layer led to a ban on the use of CFCs in 1995. CFCs also turned out to be very potent greenhouse gases, orders of magnitude more effective than carbon dioxide in trapping heat. However, there are plenty of old appliances out there loaded up with CFCs, posing the problem of how to dispose of them safely.

As discussed in an article from THE NEW YORK TIMES ("Robots Extract Coolant From Old Refrigerators" by Anne Eisenberg, 24 September 2011), a few US companies have set up voluntary recycling programs to capture the CFCs from discarded refrigerators. It's not trivial to do: drawing off the refrigerant itself isn't so tough, but the foam insulation used in old refrigerators was "blown" with CFCs, since they have good insulating properties and are non-toxic. Only about 30% of the CFCs in a refrigerator are in the cooling system; the rest are in the insulation. Such recycling programs use robotic gear to get most of the CFCs out of the appliances before they're sent off to the scrapyard.

Appliance Recycling Centers of America (ARCA), a Minneapolis-based company that operates a chain of recycling depots, recently unveiled a house-sized automatic recycling system that can extract 99.8% of the coolant from a refrigerator. The system, installed at a facility in Philadelphia, uses shredders, magnets, chutes and sluices to convert a junked refrigerator into tidy piles of plastic and metal for recycling. The foam insulation is pelletized for use as fuel or whatever. The shredding and sorting process mostly takes place in a vacuum, to allow the CFCs to be collected.

Jack Cameron, chief executive of ARCA and of ApplianceSmart, a chain of appliance stores, says that the system can digest a refrigerator in a minute and process about 150,000 old refrigerators in a year. It doesn't come cheap, the pricetag being about $5.5 million USD; ARCA could afford it because the company has a six-year contract with appliance giant General Electric (GE). GE supplies new appliances to vendors while hauling off the old ones in twelve Eastern states, with GE having relationships with recycling organizations to deal with the old junk.

The recycling system was built by Untha Recycling Technology of Karlstadt, Germany. Elaborate refrigerator recycling systems like Untha's are rare in the United States for the moment but are common in Europe, thanks to tougher regulations on disposal of CFCs across the Pond. The Untha system installed in Philadelphia had to be scaled up to handle American refrigerators, which are on the average substantially bigger than European refrigerators.

Another robotic system designed to efficiently capture refrigerants is at the Stow, Ohio, facility of JACO Environmental. The system is transportable and was also built in Germany, by SEG of Mettlach. Michael Dunham, director of energy and environmental programs for JACO, said the system separates more than 95% of the materials in the old appliances for recycling. JACO picked up about 480,000 refrigerators for recycling in 2010, the appliances having an average age of 21 years. The company, which participates in a voluntary program to bag and burn old insulating foam in refrigerators, expects the volume of old refrigerators to continue over this decade -- though ultimately antique refrigerators loaded up with CFCs are going to disappear.

While refrigerator recycling runs at a financial loss at present, "cap and trade" regulations promise that the business is likely to be more profitable in the future. California is working on a cap-and-trade regulation, set to start in 2012, that includes credit for pre-1995 refrigerants. Dunham of JACO says the company is already taking one of the refrigerants it destroys, CFC 12, to the carbon offset market. "People are buying the credits and banking them, hanging on to them in hopes they will be more valuable when cap and trade comes into effect."

* HOT WORLD COLD WINTERS: Every time a nasty cold snap occurs somewhere on the planet, climate-change skeptics proclaim it proves global warming is bogus. As reported by an article from AAAS SCIENCE NOW ("Cold Comfort: Frigid Months Will Still Come In A Warming World" by Sid Perkins, 23 November 2011), December 2010 was the coldest December in northwestern Europe for more than a century. Jouni Raeisaenen, a climate scientist at the University of Helsinki, points out that this should not be surprising in a global-warming scenario; statistically, it can be expected.

To show why, Raeisaenen and his colleagues analyzed how various greenhouse gas-emission scenarios would affect Earth's climate during the next four decades, using 24 different climate models to assess monthly global average temperatures for continents outside of Antarctica from 2011 through 2050. Breaking the analysis down by months, 480 in all: if the climate were not changing, statistics suggest that half of the months would be cooler than average, that 48 of them would fall into the coolest 10% of months, and that, on average, five of the months would break records. However, when the Helsinki researchers compared their model results with actual climate data collected during the 20th century, cold periods were much less frequent. Raeisaenen said: "Cold months still occur in a warmer world. They just happen quite infrequently."

Unsurprisingly, not all parts of the world are affected equally. In the tropics, where climate typically doesn't vary much, almost all months in the coming four decades will be warmer than average by 20th century standards. In high-latitude areas of the Northern Hemisphere, where climate is predicted to warm more than in the tropics, cooler-than-average months are still likely to occur, simply because temperatures are more variable, "noisier", in such regions.
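The statistics above can be illustrated with a toy calculation. This is only a rough sketch under loud assumptions, and is not the Helsinki group's method: monthly temperature anomalies are modeled as a straight-line warming trend plus random Gaussian noise, and the trend and noise values are made-up numbers chosen for illustration. The first function gives the counts expected if the climate were not changing; the second counts how many of the 480 months still come in below the old average once a trend is added.

```python
import random

MONTHS = 12 * 40  # January 2011 through December 2050

def baseline_counts(months=MONTHS):
    """Cold-month counts expected if the climate were not changing."""
    return {
        "cooler_than_average": months // 2,
        "coolest_10_percent": months // 10,
    }

def count_cold_months(trend_per_decade, noise_sd, months=MONTHS, seed=1):
    """Count months whose anomaly falls below the old average (zero).

    Toy model: linear warming trend plus Gaussian month-to-month noise.
    Both parameters are hypothetical values in degrees Celsius.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    cold = 0
    for month in range(months):
        anomaly = trend_per_decade * month / 120 + rng.gauss(0.0, noise_sd)
        if anomaly < 0:
            cold += 1
    return cold

print(baseline_counts())
print("no trend:", count_cold_months(0.0, 1.0))
print("warming, quiet climate:", count_cold_months(0.3, 0.3))
print("warming, noisy climate:", count_cold_months(0.3, 2.0))
```

In this toy model, adding a trend can only reduce the number of cold months, and for the same trend a noisier climate keeps more of them, which is the same qualitative pattern described for the tropics versus the high latitudes.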

* A later article from AAAS SCIENCE NOW ("Global Warming May Trigger Winter Cooling" by Sid Perkins, 12 January 2012) reported on a study that suggested global warming could actually lead to cold snaps.

It is fairly well documented that global temperatures have been rising since the late 1800s; the warming over the past 40 years has been particularly rapid. According to Judah Cohen, a climate modeler at the consulting firm Atmospheric and Environmental Research in Lexington, Massachusetts, average temperatures in the Arctic have been rising at twice the global rate -- but winters in the Northern Hemisphere have grown colder and more extreme in southern Canada, the eastern USA, and much of northern Eurasia, with the UK's record-setting cold spell in December 2010 as a case in point.

According to a paper by Cohen and his colleagues, a close inspection of climate data from 1988 through 2010, including the extent of snow cover on land and ice cover on sea, helps explain how global warming can drive regional cooling. The study combined climate and weather data from a variety of sources to estimate Eurasian snow cover, and then considered how that factor might have influenced winter weather elsewhere in the Northern Hemisphere.

First, the strong warming in the Arctic in recent decades, among other factors, has triggered widespread melting of sea ice. More open water in the Arctic Ocean has led to more evaporation, which moisturizes the overlying atmosphere. Previous studies have linked warmer-than-average summer months to increased cloudiness over the ocean during the following autumn. That, in turn, leads to increased snow coverage in Siberia with the coming of winter.

As it turns out, snow cover in October has the most significant effect on climate in subsequent months. That's because widespread fall snow cover in Siberia strengthens a semi-permanent high-pressure system called, duh, the "Siberian High", which reinforces a climate phenomenon called the "Arctic Oscillation" and drives frigid air south to mid-latitude regions throughout the winter.

Other climate researchers find the idea plausible. According to Anne Nolin, a climate scientist at Oregon State University in Corvallis: "Northern Eurasia is the largest snow-covered landmass in the world each winter." She observes that it would obviously have a big influence on the climate of the Northern Hemisphere, and adds that earlier studies have linked Siberian snow cover and climate in the northern Pacific.

* THE CALIFORNIA CONUNDRUM (3): Where the dysfunctional nature of California's governance becomes most obvious is in the state's school system. To be sure, the schools have some demographic problems, mostly due to the large number of Hispanic students, since about two-fifths of them don't speak English well. However, California ranks near the bottom relative to other US states in funding per student, number of teachers for students, and ratio of students who graduate. California has made a decision to disinvest in education, the amount of funding having dropped by almost half since the 1960s.

Who's responsible? That's an interesting question. While the governor does have a secretary of education, actual power over education resides in the superintendent for public instruction, who is directly elected by the voters and so is not beholden to the governor. Californians are peculiarly fond of this division of executive power, taking it to an extreme among US states by individually electing a governor, lieutenant governor, attorney-general, secretary of state, controller, superintendent, and insurance commissioner. Sometimes these officials are at outright war with each other.

Locally-elected officials of school districts are supposed to be ultimately responsible for operating the public schools. In practice, Proposition 13 put the school districts into a financial noose. Since the effect of that noose was visible to citizens, action was taken -- in the form of several voter initiatives to obtain funding for schools from the state government. Of course, the initiatives ran head-on into the restrictions on taxing powers established by Proposition 13, resulting ultimately in a set of monstrous complications to the funding process that made it not merely opaque, but all but incomprehensible. Who's in charge? Who knows?

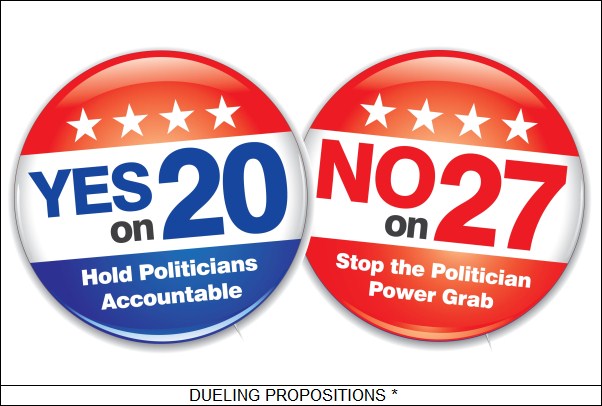

* What is particularly appalling is that, despite the chaotic structure of California's government, polls show the majority of Californians are more or less happy with the state's implementation of direct democracy, and believe they are well-informed on public issues -- while simultaneously demonstrating a general ignorance of basic issues of revenue and funding mechanisms. Californians aren't always even sure of what they're voting for. Initiatives are sometimes worded in such complicated ways that a high proportion of voters find they voted NO when they really wanted to vote YES, and the reverse. Most of what they know about the initiatives comes from advertising campaigns that are light on facts and strong on emotions. Is it any surprise the system works so badly?

California could stumble along somehow as long as the state remained prosperous, but the impact of the recession on the state's economy laid the problems bare. Now it has become evident, at least to the leadership, that something must be done. Some things already have been done, for example killing off gerrymandering and dropping the supermajority requirement to pass a budget, but those are only a start.

California won't dump direct democracy completely, that's not going to happen. Californians like their citizen rights and are not willing to give them up. However, there's no real need to; the principle works well enough in Switzerland. What's needed, as the Swiss example shows, is a change in the rules to implement checks and balances so the government and the citizens end up working together instead of at odds.

One angle is to encourage referendums and discourage initiatives. A referendum does not really work against the legislature; it simply passes judgement on legislative actions, and can provide political cover for politicians daring to push through controversial laws. As far as initiatives go, the legislature should be entitled to come back with a counterproposal, with only one proposition actually going to the vote. California might also restrict initiatives to enacting statutes, as many other states do -- and not, oh please not, allow them to amend the state constitution. Another practice used by some other states is to "sunset" initiatives, ensuring that they lapse in, say, ten years and have to be renewed. Certainly all initiatives must be explicit about their costs and funding, indicating where the revenue to implement them is going to be found.

California should of course fix the state legislature as well. Some steps, such as open primaries and ending gerrymandering, have been implemented. Other useful steps should include increasing the number of seats in the legislature so that voters have closer contact with their legislators, and at least relaxing term limits so that legislators can become more experienced in their jobs. Another interesting idea is a unicameral legislature, as already implemented in the state of Nebraska. The US Federal government has a bicameral system, but it was implemented as a necessary compromise -- the Senate provides equal representation of all states, regardless of size, while the House of Representatives provides representation by size of state populations. There is no comparable necessity in the case of California, and the current bicameral system just compounds the state's legislative chaos.

Of course the executive also needs reform, most particularly in reducing the number of elected statewide officials. Ideally, the governor should be able to appoint all the top-level administrative officials and be accountable for their actions -- an accountability notably lacking in the current balkanized arrangement.

But how to bell the cat? A constitutional convention is tempting, California not having had one since 1879, but in the current state of factionalism it might just noisily go nowhere. The alternative is to rely on the initiative process, which is dicey because it was the source of so many of the problems to begin with. However, the state's power elite are now lining up support for reform initiatives under the "Think Long Committee", which has public stars such as Arnold Schwarzenegger and Condi Rice as members, and which has access to deep-pockets funding. Might reform fail? It might; but the clock has already run out for California, and failure to achieve reform will certainly end in ruin. [TO BE CONTINUED]

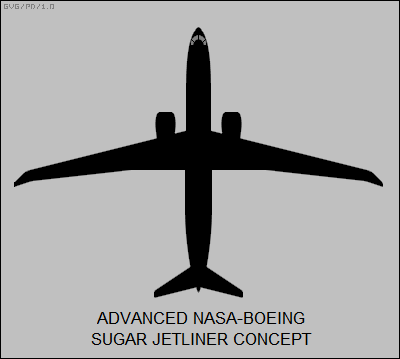

* FUTURE FLIGHT (1): The 18 July 2011 issue of AVIATION WEEK was mostly focused on future directions in civil aviation, in particular the airlines, with a series of articles pointing out that the industry is now at a crossroads, confronted by changing realities while being presented with new technological options. Aircraft configurations and operations have not changed dramatically in the past few decades; any observer from 1972 would not see much strikingly different flying in 2012. That may not be true in 2042.

One of the biggest drivers for change is the increasing price of fuel. It affects all aircraft operators, but it hits airlines worst of all, because they exist in such a brutally competitive environment. All projections indicate that fuel costs are going to grow at a more rapid rate than inflation, faster than airline ticket prices will be able to rise. Few would be willing to pay $2,000 USD for a Los Angeles to New York City flight, but that's where current airliner technology is headed even with technological improvements. Something dramatically new will be required. The problem is that the commercial aviation industry is conservative; airliners are expensive, airlines buy vehicles for the long haul, and cannot change to new technologies overnight.

The US National Aeronautics & Space Administration (NASA) has been investigating new airliner technology through the agency's "N+3" program, with NASA engineers convinced that it is possible to reduce fuel consumption relative to a contemporary Boeing 737 jetliner by 70%. Much of NASA's work has been on the "hybrid wing-body (HWB)" aircraft, essentially a flying wing, as discussed here in 2009. However, the HWB's configuration makes it better as an air freighter than a passenger jet, and it implies an economy of size as well, being better suited for larger aircraft.

For aircraft on the scale of the 737, other configurations are better suited, with current research focused on the "truss-braced wing (TBW)". It has long been known that very long and slender wings -- that is, with a high aspect ratio -- are very aerodynamically efficient. It's hard to build such wings so they're strong, but modern materials help, and there's no great penalty in attaching a strut or truss to provide reinforcement. Boeing conducted a study on such an aircraft, made of lightweight composite materials, and estimated that fuel savings would be almost 40% with the newest high-bypass turbofan technology -- and over 60% with hybrid propulsion, a topic discussed later.

As Boeing envisioned the aircraft, it would have a wingspan of 65.2 meters (214 feet), with the wings folding after landing to a span of 36 meters (118 feet), the same as a contemporary 737, allowing the aircraft to use existing airport facilities. The performance penalty would be less than 10%. Incidentally, the Boeing TBW design is extremely graceful, along the lines of the more elegant airliners of the late 1930s, updated for the 21st century.

More conservatively, Boeing has conducted studies for NASA under the "Subsonic Ultra-Green Aircraft Research (SUGAR)" program, coming up with designs evolved from current 737 configuration to meet improved noise, emissions, and fuel economy standards for service in the 2030 timeframe. Baseline SUGAR concepts generally resemble the 737, though with a set of technology tweaks and a wing aspect ratio of 11.6, compared to 9.45 for the current 737. More radical SUGAR concepts envision an aspect ratio of 16, with a span of 48.7 meters (160 feet), again featuring a wing fold to permit use of existing airport facilities.
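As a rough check on the figures above: aspect ratio is conventionally defined as span squared divided by wing area, so a quoted span and aspect ratio together imply a wing area. The Python sketch below is only an illustration. The function names are invented here, and the implied area is a back-of-envelope number, not a figure from Boeing or NASA.

```python
# Back-of-envelope wing geometry, assuming the conventional definition
#   aspect_ratio = span**2 / wing_area
# Function names are hypothetical, made up for this illustration.

METERS_PER_FOOT = 0.3048  # exact, by definition of the international foot


def implied_wing_area(span_m: float, aspect_ratio: float) -> float:
    """Wing area in square meters implied by a span and an aspect ratio."""
    return span_m ** 2 / aspect_ratio


def meters_to_feet(meters: float) -> float:
    """Convert a length in meters to feet."""
    return meters / METERS_PER_FOOT


# The more radical SUGAR concept: 48.7 meter span, aspect ratio of 16.
sugar_area = implied_wing_area(48.7, 16)  # roughly 148 square meters

# Spans quoted in the text, converted to feet for comparison.
spans_ft = {name: round(meters_to_feet(m))
            for name, m in [("TBW", 65.2), ("TBW folded", 36.0), ("SUGAR", 48.7)]}

if __name__ == "__main__":
    print(f"Implied SUGAR wing area: {sugar_area:.1f} square meters")
    for name, feet in spans_ft.items():
        print(f"{name}: {feet} feet")
```

The same relation shows why a higher aspect ratio on a given wing area means a longer span, which is where the folding wings come in.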

NASA is also investigating "short takeoff and landing (STOL)" technologies, though the current focus is on improvements to existing airliner configurations, such as improved flaps and various "wing blowing" schemes, in which engine airflow is diverted to enhance lift. Wing blowing is nothing new, with aircraft using it in service since the early 1960s, but modern turbofan technology and improved concepts are making it more attractive now.

The NASA focus is not only on permitting airliners to use smaller airports, but also on reducing noise through use of much steeper glide paths into and ascents out of such airports, which shrinks the acoustic footprint over the surrounding area. How much more exciting riding such an aircraft might be to passengers remains to be seen. Obviously, lessons learned with STOL tech for current technology airliners will have their applications to next-generation designs such as the TBW. [TO BE CONTINUED]

* Space launches for December included:

-- 01 DEC 11 / BEIDOU IGSO 5 -- A Long March 3A booster was launched from Xichang in southern China to put the "Beidou IGSO 5" navigation satellite into orbit.

-- 11 DEC 11 / AMOS 5, LUCH 5A -- A Proton Breeze M booster was launched from Baikonur in Kazakhstan to put the Israeli "Amos 5" comsat and Russian Space Agency's "Luch 5A" data relay satellite into geostationary orbit. Amos 5 was built by the Russian Reshetnev organization; the satellite had a launch mass of 1,590 kilograms (3,500 pounds), a payload of 18 Ku band / 18 C band transponders, and a 15-year design life. It was placed in the geostationary slot at 17 degrees East longitude to provide communications services to Africa.

Luch 5A was intended to provide communications support for Russian space activities, using Ku / S-band transponders and steerable antennas. It had a launch mass of 1,135 kilograms (2,500 pounds) and a ten-year design life.

-- 12 DEC 11 / IGS 7A -- A JAXA H-2A booster was launched from Japan's Tanegashima space center to put the "Information Gathering Satellite (IGS) 7A" radar spy satellite into orbit.

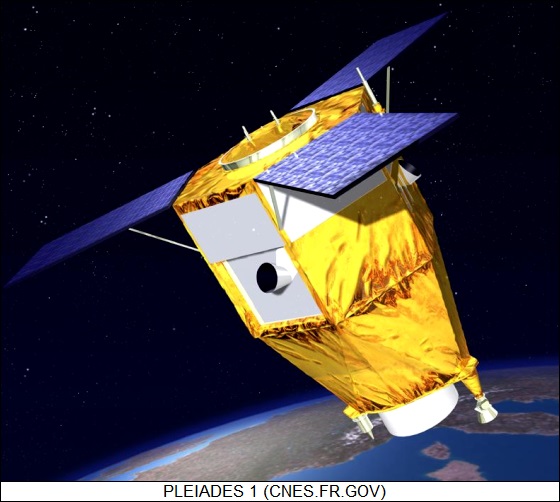

-- 17 DEC 11 / PLEIADES 1, ELISA x 4, SSOT -- A Soyuz Fregat booster was launched from Kourou in French Guiana to put the first French "Pleiades" Earth observation satellite into orbit. The launch also included four French ELISA electronic intelligence (ELINT) satellites and the Chilean SSOT remote sensing satellite. The six payloads were built by EADS Astrium.

This was the second Soyuz launch from Kourou.

-- 19 DEC 11 / NIGCOMSAT 1R -- A Long March 3B booster was launched from Xichang in China to put the Nigerian "Nigcomsat 1R" geostationary comsat into orbit. The satellite was based on the Chinese DFH-4 bus; it had a launch mass of 5,080 kilograms (11,200 pounds), a payload of 4 C / 8 Ka / 14 Ku / 2 L band transponders linked with seven antennas, and a design life of 15 years. It was placed in the geostationary slot at 42.5 degrees East longitude. It replaced Nigcomsat 1, which was launched in 2007 but failed in 2008. The booster was in the Long March 3B/E configuration, with a stretched first stage and liquid-fuel strapon boosters.

-- 21 DEC 11 / SOYUZ TMA-03M -- A Soyuz booster was launched from Baikonur to put the "Soyuz TMA-03M" manned space capsule into orbit on an International Space Station (ISS) support mission. It carried a crew of Oleg Kononenko of Russia (second space flight); Andre Kuipers of the Netherlands / ESA (second space flight); and Donald Pettit of NASA (third space flight). The capsule docked with the ISS Rassvet module on 23 December, its three occupants joining the "ISS Expedition 30" crew of Dan Burbank, Anton Shkaplerov, and Anatoly Ivanishin.

-- 22 DEC 11 / ZIYUAN 1-2C -- A Long March 4B booster was launched from Taiyuan in northern China to put the "Ziyuan 1-2C" remote sensing satellite into Sun-synchronous orbit. The spacecraft was built by the China Academy of Space Technology (CAST) and had a launch mass of 2,100 kilograms (4,630 pounds), with a payload of grayscale and color imagers.

-- 23 DEC 11 / MERIDIAN (FAILURE) -- A Soyuz 2.1b booster was launched from Plesetsk in northern Russia to put a Meridian Molniya-type military comsat into orbit. The booster failed and fell back to earth in Siberia.

-- 28 DEC 11 / GLOBALSTAR 2 x 6 -- A Soyuz 2-1a booster was launched from Baikonur to put six second-generation "Globalstar" comsats into orbit. Each satellite had a launch mass of 700 kilograms (1,545 pounds), with the comsats placed into orbits at an altitude of 1,415 kilometers (880 miles). This was the third of four launches to rebuild the Globalstar constellation.

* OTHER SPACE NEWS: AVIATION WEEK ran a survey on South Korea's space program, the country having two satellites now in orbit and three more on the way. South Korea's "Kompsats" are built by the Korea Aerospace Research Institute, working with Korea Aerospace Industries (KAI); the spacecraft are officially for civil use, but they often have obvious military uses, particularly for keeping an eye on South Korea's mentally unbalanced brother to the north.

The "Kompsat 1" satellite was put into orbit by an Orbital Sciences Taurus booster in 1999. It was an Earth observation satellite with a launch mass of 500 kilograms (1,100 pounds), carrying a payload of an imaging system, a multispectral scanner system, and technology tests. "Kompsat 2" was put into orbit in 2006 by a Russian-built Rockot booster; the satellite had a launch mass of 800 kilograms (1,765 pounds) and an imaging system with grayscale resolution of a meter, about six times better than the imager on Kompsat 1.

"Kompsat 3" will be launched in early 2012 on a Japanese H-2A booster. Kompsat 3 will be another Earth observation satellite, similar to Kompsat 2 but with still further improved imaging and scanning systems, as well as more electrical power. The next spacecraft in the series will be "Kompsat 5", leveraging off the same satellite bus as Kompsat 3, but with a payload of a synthetic aperture radar (SAR) system. It will be followed by an enhanced version of Kompsat 3 designated "Kompsat 3A", and then another SAR satellite, "Kompsat 6".

The Kompsats aren't the end of South Korea's space ambitions, the country having launched the first geostationary "Communications, Ocean, & Meteorological Satellite (COMS 1)" on an Ariane 5 booster in 2010. It was built by EADS Astrium and had a launch mass of 2,450 kilograms (5,400 pounds); as its name suggests, it was basically a comsat with weather observation and ocean survey instruments added. Another civil comsat and a military comsat are in the works.

The "KoreaSat" firm also has launched and operated a series of commercial comsats, with "KoreaSats" launched in 1995, 1996, 1999, 2006, and 2010. The last was "KoreaSat 6"; there was no "KoreaSat 4", the number "4" being seen as unlucky in many Far Eastern cultures, which may have also been the reason why no "Kompsat 4" is planned. All five KoreaSats were purchased from foreign satellite manufacturers. Another South Korean space firm, "Satrec Initiative", has developed smallsats for foreign countries. South Korea has even been working on its own launch vehicle, the "Korea Satellite Launch Vehicle (KSLV)", but test flights in 2009 and 2010 both ended in failure.

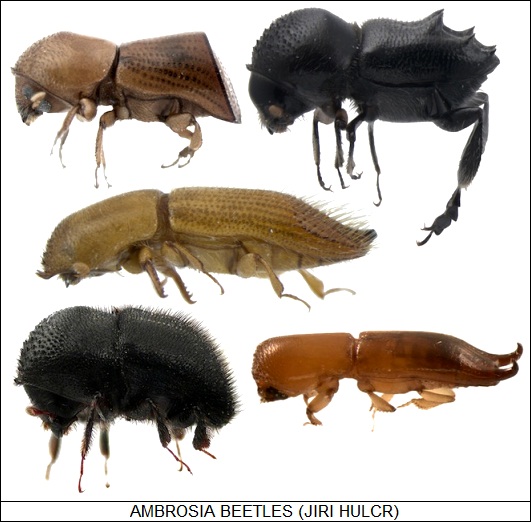

* THE BEETLE SCOURGE: We have an inclination to believe in a tidy "balance of nature", but we have learned the hard way that the balance is dynamic, often shifting, and can be easily upset. For example, as discussed by WIRED.com, consider the "bark / ambrosia" beetles, a group of about 7,000 species of beetles found worldwide that has turned out to be a global threat to trees. The irony is that these beetles don't actually feed on trees: they bore into the bark to make "farms" for symbiotic fungi that they feed on instead.

Says biologist Jiri Hulcr of North Carolina State University: "You know how famous leafcutter ants are because they grow fungus? Those groups evolved this just once. In the bark beetles, there are at least 11 independent emergences. Go into the rain forest in South America, and you see the power and diversity of these fungus farmers. You'll barely see a tree without sawdust falling off. That's the fungus farmers at work, drilling through the trees and planting their fungus gardens."

Each group of bark and ambrosia beetles has its own special collection of fungi, carried in specialized pockets on their bodies, in their armpits and on their backs and in their mouths, always ready to be seeded. Says Hulcr: "Chop a beetle's head off, grind it up, spread it on agar and you will see the most marvelous organisms growing from it. Those are the fungal symbionts."

Hulcr adds that nobody knows much about the fungi, which is not surprising since only a few people have had much interest in these beetles; Hulcr is one of the world's few specialists in the subject. Nobody found them all that intriguing because they traditionally only attacked dead trees and didn't draw too much attention to themselves. That changed when bark and ambrosia beetles started inadvertently traveling around the world and acquiring different habits.

The first known incidents of a bark beetle attacking living trees involved a beetle species called Scolytus multistriatus and its fungal symbionts Ophiostoma ulmi and Ophiostoma novo-ulmi. They're better known as the timber import-riding agents of Dutch elm disease, which in a few decades from the middle of the 20th century almost completely eradicated the elm from North America and Europe. Later arrivals have included the "redbay" beetle, an east Asian native first found in Georgia in 2005. It has a taste for trees of the Lauraceae family, of which avocado is a member; redbay hasn't hit avocado farmers yet, but many suspect it's only a matter of time. Other beetles are afflicting poplars, mangoes, and oak trees, and there's a fear that we're only seeing the tip of the iceberg.

Nobody knows why the beetles are suddenly attacking living trees, but Hulcr suspects they're just doing what comes naturally to them: whatever cues the beetles use to target dead trees in their normal habitats don't work elsewhere, and so living trees look just like dead ones to them. The target trees aren't well adapted to the beetles, either; the unfamiliar beetle attack can cause a violent immune reaction in a tree that kills the tree. Hulcr doesn't think there's much that can be done to stop the beetles, saying with a certain excess of enthusiasm for the subject: "Another amazing feature of these beetles is their amazing reproductive strategies." Many species can reproduce parthenogenically, with females churning out more females without the help of a male. They can mature in two weeks.

Hulcr has an equal enthusiasm for the fungal symbionts: "They smell like white fruit. They look like puffy clouds. Sometimes they look like brown sludge. They often taste like mushrooms. So no wonder the beetles like them." He's tried them himself and liked them: "Wouldn't it be fascinating to grow beetle symbiotic fungus on a large scale, so we could turn wood into fruit? There are so many opportunities."

* In other news of beetles, the seed beetle, Mimosestes amicus, is an inhabitant of the deserts of the US Southwest, where it lays its eggs on the seed pods of acacia and mesquite trees of the region. The seed beetle would seem uninteresting, except for a peculiarity: unlike the norm for other beetles, it often lays its eggs in stacks, from two to four eggs deep.

Joseph Deas, a graduate researcher in entomology at the University of Arizona in Tucson, decided to look into the seed beetle in more detail. He was a little surprised to find out that he wasn't the first to investigate the insect; the mystery of the beetle's stacked eggs had been considered back in the 1920s, the conclusion then being that it was competition between female beetles at work, with one beetle laying eggs on top of another to prevent rivals from hatching. However, Deas realized that the eggs were being laid in stacks by one beetle, and so that answer wasn't right.

What was really going on? Deas determined that the real reason was the threat of parasitic wasps that lay their own eggs in the beetle eggs; the wasp larvae then hatch and gobble up the egg yolk, leaving none for the beetle larvae, which never come to term. The seed beetle has acquired a defense against parasitic wasps, since the wasps will parasitize eggs on the top of the stack, but won't be able to get to the eggs on the bottom. What makes the adaptation more interesting is that the eggs on top are small and infertile, in fact too small to allow wasp larvae to always come to term -- in simple terms, they're decoys. Deas has observed that the seed beetles generally lay more eggs in stacks in environments where parasitic wasps are common.

* FECAL TRANSPLANTS: Comments have often been run here on the microorganisms that cohabit with us, intestinal flora being a leading-edge subject for biomedical research. A note from WIRED.com by Maryn McKenna, who comments on health issues with an emphasis on pathogens, discussed how a medical treatment based on intestinal flora known as a "fecal transplant" is already in use, sort of.

Lara T, a 20-something living in the state of Rhode Island, came down with a gastrointestinal (GI) ailment in early 2008. She thought it was just from holiday overindulgence and, as nasty as it was, figured it would pass. It didn't; it just got nastier. Food would go right through her, and she lost almost 9 kilos (20 pounds) in three weeks. When she went to the doctor to find out what was going on, she found out her GI tract had been all but taken over by the Clostridium difficile pathogen, discussed here in 2006. C. difficile, as its name suggests, is hard to treat, particularly since it's becoming increasingly resistant to medication. Nothing worked for Lara, with one treatment spreading yeast infections through her body. By the summer of 2008, she had dropped a total of 18 kilos (40 pounds). She was too sick to work and wasn't likely to survive much longer.

Desperate, Lara scoured the internet for help, and found out about fecal transplants. They're just what the name says -- inserting strained, diluted feces harvested from someone with a healthy gut into a sick person's large intestine, in hopes of revitalizing the devastated colony of bacteria there. Lara had nothing to lose and found a specialist in Providence, who performed the treatment in October, using Lara's boyfriend as a donor. Lara was feeling better in a day, and fully recovered in weeks.

The problem with fecal transplants is that they're effectively a hobby practiced by a few specialists whose employers are willing to turn a blind eye. A fecal transplant has very little resemblance to normal medical procedures, and so it just doesn't fit into practices approved by professionals, insurers, and regulators. Fecal transplants are, surprisingly, nothing new, having been used on humans for over half a century and on horses for longer than that. Advocates say the procedure is simple and cheap -- noninvasive, requiring no medications -- and it's 90% effective, but it can only be used on humans with a waiver since it's completely out of the box as far as the medical establishment is concerned. Papers on fecal transplants are becoming more common, but the procedure still remains officially on the fringe. Given the rising tide of pathogens resistant to traditional drugs, however, fecal transplants seem likely to become more important -- if they can get over the organizational obstacles in their way.

* THE CALIFORNIA CONUNDRUM (2): The California initiative system remained a silent time bomb until 1978, when it was set off by "Proposition 13". Californians were irate over high property taxes; their anger was focused by Howard Jarvis, head of an association of property owners. Jarvis proposed via Proposition 13 that property taxes be slashed; that increases in property assessments be strongly limited; and that a two-thirds "supermajority" in the legislature would be required to implement any new taxes. California's leadership was not at all happy with Proposition 13, fearing with good reason that it would financially cripple local governments, and hogtie the state government's ability to obtain operating revenue. Governor Jerry Brown lobbied against it, but it did no good: Proposition 13 was passed by the voters by almost 2 to 1.

There were fears at the outset that local governments would go broke, but Governor Jerry Brown, having seen the will of the people, embraced the pain, helping to push through a scheme where the flush budget of the state government would be redistributed to local governments. Problem solved? Only in a sense, since local governments then became dependent on state money. Conservatives like to complain about centralization of power, but Proposition 13 forced on California a lopsided centralization of power in Sacramento, the state capital. The result was opaque budgeting, along with an associated diffusion of authority and responsibility that almost inevitably bred inefficiency and bureaucracy. Some scholars identify California's funding arrangement as the state's "distinctively dysfunctional element."

The trouble was only getting started. Jarvis died in 1986, but even by that time the Frankenstein monster he had set loose was on a rampage. Up to Proposition 13 Californians hadn't given much thought to initiatives, but now they became an "industry and a circus". Every faction in the state jumped into the fray to push laws they wanted on even the most trivial issues. Signature gathering for launching initiatives became an industry, with businesses established to obtain signatures using various tricks and pressure tactics. An interest group could pay a given amount and get a specified number of signatures. Even more perversely, the signature gatherers began to promote initiatives of their own just to encourage clients to buy more signatures from them.

The process not only wound up the initiative mill, it wound up the cost of initiatives -- the cost aggravated because the hard-sell tactics used to obtain the signatures tended to produce low-quality signatures that had to be screened by the authorities at considerable expense. It was nothing unusual for people to sign as "Mickey Mouse". Some states have tried to ban paid signature collection, but the US Supreme Court ruled in favor of it in 1988, citing the right of free speech. The result is that schemes originally devised to protect the public from special interests ended up empowering special interests. As one California politician put it, "any billionaire can change the state constitution. All he has to do is spend money and lie to people."

* The problems with the initiative process tend to interact with the problems of the California state legislature. It wasn't so many decades ago that the state legislature was much admired; now the citizens see the legislature as generally dysfunctional. That helps drive the initiative frenzy. If the legislators can't do the job, so the thinking goes, then the people will have to do it instead.

Sorting out cause and effect in the matter is difficult, but it is evident that the California state legislature has structural problems. One is that it's simply too small, with 120 legislators representing 37 million people. That means a California state legislator represents three times as many constituents as a counterpart in New York or Illinois. The result is that legislators find it difficult to be close to their constituents. The other side of that coin is that politicians need to spend lots of money to promote themselves to the voters, which tends to make those politicians beholden to special interests with deep pockets.
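The arithmetic behind that complaint is simple enough to sketch. The Python below uses only the two figures quoted above (120 legislators, 37 million people); the larger seat counts are hypothetical values picked for illustration, not proposals from any source, and the function name is invented here.

```python
# Average constituents per legislator, from the figures quoted in the text.
# Larger seat counts below are hypothetical, chosen only for illustration.

CALIFORNIA_POPULATION = 37_000_000  # figure quoted in the text
CURRENT_SEATS = 120                 # figure quoted in the text


def constituents_per_seat(population: int, seats: int) -> float:
    """Average number of residents represented by each legislator."""
    return population / seats


current_ratio = constituents_per_seat(CALIFORNIA_POPULATION, CURRENT_SEATS)

if __name__ == "__main__":
    for seats in (CURRENT_SEATS, 240, 360):  # 240 and 360 are hypothetical
        ratio = constituents_per_seat(CALIFORNIA_POPULATION, seats)
        print(f"{seats} seats: about {ratio:,.0f} people per legislator")
```

This lumps both chambers together, so it is only an average; the point is that each legislator ends up speaking for several hundred thousand people.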

The state legislature is notoriously hyper-partisan, though to an extent that's due to geography and demography: the state's coastal regions are strongly liberal, the inland regions strongly conservative, and in an era where partisanship is on the ascendant it's not surprising there's a good deal of political confrontation. However, as mentioned here in the past, the confrontation has traditionally been enhanced by "gerrymandering" due to successive state governments setting up "safe" electoral districts where Democrats or Republicans would almost always be elected -- one of the results being that local elections became competitions in extremism. Now, thanks to voter initiatives, gerrymandering is going away, with electoral districting to be determined by an independent commission. In addition, the voters pushed through "open primaries" in which anyone, regardless of party, could vote for any candidate in primary elections, helping to ensure that the most alarming candidates are blown out of the sky.

Another problem has been the requirement for a two-thirds supermajority to pass new taxes -- as per Proposition 13 -- or even pass a budget -- as established by an initiative passed in the 1930s. That meant a minority party could easily jerk around a majority party by controlling the swing vote, with the more specific results of a weak tax system and chronically late budgets. Voter initiatives have returned budgeting to a simple majority, though passing new taxes remains dependent on supermajorities.

The flood of voter initiatives that followed Proposition 13 also did much to undermine the legislature's control over the budget. Initiatives were pushed through with little concern about how they were to be funded or whether there were better uses for funds. Some politicians say the state legislature only controls 10% of the budget; the rest has been locked up by initiatives.

One initiative, Proposition 140 of 1990, created yet another trap for the legislature by establishing term limits. Term limits are not unusual among US state governments, but California's term limits are unusually severe: six years in the assembly, eight years in the senate. Term limits do have the advantage of preventing legislators from establishing themselves as fossilized centers of power, but unfortunately that turns out to be a disadvantage as well. There's a certain attraction to voters in having a powerful, well-established advocate for their interests, and mandating that legislators are always going to be more or less novices at their jobs ensures they will never be all that competent at them. Term limits end up being another recipe for extremism, since legislators don't need to worry about the long term: there isn't one.

If the California state legislature is a Frankenstein monster, the voters have done much to create it. The bottom line is that if there are things wrong with the legislature, they need to be fixed; but it makes absolutely no sense to elect a legislature and then go beyond placing checks and balances on it to do everything possible to cripple it. [TO BE CONTINUED]

* THE KILLING OF JFK -- A SUMMING UP: As the previous installments in this series demonstrated, the Warren Report, though not without faults, was in all significant conclusions correct: Lee Harvey Oswald killed John F. Kennedy and J.D. Tippit, operating on his own, with no substantial evidence that Oswald was part of a conspiracy, or would have even been capable of working in one. None of the attempts to demonstrate otherwise have proven credible under examination. In the decades following the Warren Report, many have come forward claiming they were going to "solve the mystery", but the mystery was resolved in 1964, leaving nothing more to do than provide clarifications, a few tweaky corrections, and some additional footnotes.

Conspiracy theorists insist that such a conclusion is biased -- but the fact remains that there is no credible evidence for a conspiracy. The "proofs" for a conspiracy focus on discrepancies, some of which have straightforward explanations, some of which remain discrepancies no matter what scenario is assumed; selective use and exaggeration of evidence; nitpicking, red herrings, and evasions; unsupported speculations; and outright fabrications. What is true is trite, what isn't trite isn't true, or at least not supported by the evidence. There was never any persuasive reason to conclude there was a conspiracy, and to the extent the evidence seemed to hint there was, it was investigated to show nothing there.

The conspiracy community hasn't been able to provide a consistent story that could hold up a fraction as well to a fraction of the criticism flamed onto the Warren Report. To the substantial extent that the conspiracy theorists have accused various groups of complicity in JFK's assassination, they also have the burden of proof of being the accuser, and if they are unable to supply proof, their accusations are slander -- though conspiracy theorists seem proud of their slanders as long as they're against public officials and people skeptical of the conspiracy case. The conspiracy theorists have had half a century to make their case, and produced nothing but an urban mythology. They've been crying wolf for decades and haven't been able to produce a wolf; they were entitled to a fair hearing for a time, but that time has passed, and nobody can now be faulted for concluding there never was a wolf.

Conspiracy theorists proclaim that inconvenient evidence was faked by an all-powerful conspiracy that has always been able to suppress the supposed "truth". Assuming that such a huge conspiracy could actually be kept secret from the public, if there really is an overpowering conspiracy in power that will always suppress the truth -- then what is the point of the conspiracy movement? Is it anything more than a hobby?

Why is it supposed to matter? Yes, when people are asked if there was a conspiracy to kill JFK, most will say YES, but what of it? They give their opinion, go on about their business, and that's the end of it. The event is vanishing into the past. By 2063, the history books will report no more than that JFK was killed by a solitary lunatic named Lee Harvey Oswald, adding that there were many suspicions of a conspiracy that were never actually validated. What more could be said? Who would care? Once JFK's murder is beyond living memory, people will have no more interest in Lee Harvey Oswald than they do in John Wilkes Booth, if they even have a clear idea of who Oswald was. "Oswald? Didn't he shoot Abraham Lincoln?"

The assassination conspiracy movement will disappear in time, leaving nothing of substance behind, having nothing of substance to leave behind. The Warren Commission formally closed the case on the JFK assassination and the HSCA effectively did nothing but endorse the Warren Report, with the "acoustic evidence" turning out an embarrassment that only further discredited the conspiracy case. Nobody, not even conspiracy theorists, honestly believes there is any prospect of the case being officially reopened. It never will be. It's been half a century; it's time for everyone to move on.

* Although this series began as an outline of Gerald Posner's CASE CLOSED, it changed direction during its implementation, mostly thanks to Vincent Bugliosi's RECLAIMING HISTORY. I also ended up scouring the internet on the subject, with John McAdams's website being a particularly important source. I found a lot of comments by conspiracy theorists on various websites and in particular forums, though these were more sources of claims to be checked out than sources of information.

I wrote all this just to get it straight in my head. Hopefully some readers have found it informative as well. I've had enough of it. I actually had more installments to run in the blog, but I cut them down so I could finish the blog postings a few months early and get the full document, which is about twice as big as the blog postings, up on the website.

It's on the website and now I can forget about it. It is neither unusual nor unexpected to weary of a large project at the tail end of the effort, but this one became downright nasty. The final product seems to hang together well enough, but exerting so much effort on the JFK assassination was unrewarding. I spent two years of work to find out what I knew before I started, taking a trip to nowhere on the conspiracy crazy train. At the end, all I got from it was headaches and indigestion, popping painkillers and gulping down brews of seltzer tablets.

I have doubts the document serves a useful purpose. The JFK assassination is old news; the squabbling continues, but it's a hurricane-force tempest in a teacup, sound and fury amounting to nothing. I'm keeping the document on the website for the next few years, but when it comes time for review, I may say "enough of it" and archive it in my private notes. One decision's been made: no more serious work on conspiracy theories for me -- ever. [END OF SERIES]

* SCIENCE NOTES: The work of Eric Alm and colleagues at the Massachusetts Institute of Technology (MIT) on genomically backtracking the roots of life back into the distant Archaean age was discussed here last summer. Now an MIT team led by Alm has performed another large-scale genomic analysis to investigate "horizontal gene transfer" among microorganisms. Prokaryotic organisms, the bacteria and the archaeans, easily swap genes with each other, and that sort of gene transfer also happens, if to a much smaller extent, with eukaryotic organisms. The MIT group compared 2,235 different prokaryotic genomes in hopes of spotting horizontal gene transfers. Alm said: "I was hoping to find five to ten examples of recent gene transfers. My students came back in a week with 10,000 different genes that had been transferred."

The researchers compared transfers by several criteria. Transfers sorted by genetic similarity of the subjects and by the geographic distance separating them didn't reveal many correlations, but comparison by ecological niches did. Instead of swapping genes with microbes genetically related to them or in the vicinity of them, prokaryotes seem inclined to swap with microbes fulfilling similar roles. Alm's team also found that genes especially significant to humans -- those found in our intestinal flora, for example, or genes conveying antibiotic resistance -- are particularly inclined towards wide-range jumps between different microorganisms.

Alm's computational biology lab is only getting started on the effort, with one follow-on goal being to track gene transfer in dangerous microbes, which could have significant application to global health efforts. Alm more generally wants to find out exactly how transactions are conducted in what he called the genomic "black market." DNA can travel on dead cell fragments, viruses, and other sub-cellular vehicles; the exact routes remain unknown, and Alm says: "I would love to know."

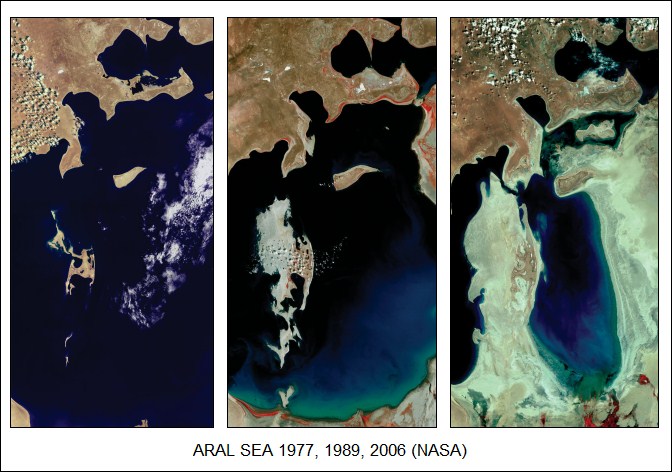

* As reported by AAAS SCIENCE, there was a time when the Aral Sea, in what is now Kazakhstan, was one of the world's biggest inland bodies of water. That was before Soviet-era planners dammed the water flowing into the sea for irrigating cotton fields, and now the Aral Sea is three separate lakes, with a total area only a tenth that of the original Aral Sea and too salty to support most fish. Fish catches plummeted.

Now a 13-kilometer (8-mile) long dike has been built by the Kazakh government on the Northern Aral Sea, raising the water level by 2 meters (6.5 feet) and dropping the salinity by a third. Local species of fish and plants that had been driven to other waters are now returning, and this year's fish catch is about five times greater than it was in 2005 -- a recovery far quicker than anyone expected. The Kazakh government is considering raising the dike to make the lake even bigger, but there is not only competition for funds, there's competition for use of the water.

* Regarding the ongoing "ClimateGate" controversy, involving supposedly incriminating emails hacked from the Climatic Research Unit at the University of East Anglia in the UK: complaints from researchers and the media finally prodded the authorities into action, with Norfolk police descending on the home of climate skeptic blogger Roger Tattersall and seizing his computers. Tattersall was not named as a suspect. The US Department of Justice is cooperating with British authorities in the matter, zeroing in on suspicious bloggers in America to see if leads can be turned up.

This is a clear escalation in the war over climate science, though it was inevitable given the provocation: hacking into other people's computers is a real crime, not at all different from smashing in a window and taking off with a filebox of records, and doing so as a partisan vigilante exercise hardly amounts to an exoneration. Counter-escalation seems equally inevitable: prominent British climate skeptic Lord Monckton says he intends to pursue fraud cases against climate researchers. Monckton points out there's no way to go after the IPCC since it's beyond national jurisdiction, but "individual scientists can be brought to book."

Although that would obviously be a nuisance to the researchers targeted, it's unclear that it would do the climate skeptic movement much good. Skepticism over climate science is within the bounds; accusing the climate research community of conspiracy to commit fraud is far outside of them, into the slanderous and foolish, and would certainly be very difficult to prove. The courts would have to rely on expert testimony to come to a judgement, few if any climate experts think their colleagues are involved in a criminal fraud, and the likelihood of the skeptics winning such a frivolous case is slight. Would that be an embarrassment to the climate skeptic community? Or do they just want to cause trouble for its own sake, hang the consequences?

* NO CARS: The idea of reserving parts of downtown areas for pedestrians by banning cars and turning streets into plazas is not new, but as reported by an article from THE NEW YORK TIMES ("Across Europe, Irking Drivers Is Urban Policy", by Elisabeth Rosenthal, 26 June 2011), a number of European cities are taking the concept a big step farther, creating urban environments downright hostile to cars. The methods vary, but the goal is the same in all cases: to make car use expensive and just plain miserable enough to encourage citizens to use more environmentally friendly means of transport.

Cities from Vienna to Munich and Copenhagen have closed vast swaths of streets to car traffic. Barcelona and Paris have diminished car use by setting up popular bike-sharing programs. Drivers in London and Stockholm pay hefty congestion charges just for entering the heart of the city. And over the past two years, dozens of German cities have joined a national network of "environmental zones" where only cars with low carbon dioxide emissions may enter.

Like-minded cities welcome new shopping malls and apartment buildings, but severely restrict the allowable number of parking spaces. On-street parking is vanishing. Says Peder Jensen, head of the Energy and Transport Group at the European Environment Agency: "In the United States, there has been much more of a tendency to adapt cities to accommodate driving. Here there has been more movement to make cities more livable for people, to get cities relatively free of cars."

The Municipal Traffic Planning Department in Zurich has been dedicated to the principle of making life troublesome for drivers. Streets feeding downtown feature closely spaced red lights, and operators of the city's tram system can turn lights green so they can pass at the expense of car traffic. Pedestrian underpasses were removed so that pedestrian crossings block traffic. Around Loewenplatz, one of the city's busiest squares, cars are banned on many blocks; where cars are permitted, they have to inch through at the mercy of pedestrians who cross where they like. As in many European cities, parking is being made scarce and expensive. European building codes increasingly cap the number of available parking places for new building construction; American building codes, in contrast, tend to specify a minimum number.

A few US cities -- notably San Francisco, which has "pedestrianized" parts of Market Street -- have taken similar measures, but that's still far more the exception than the norm Stateside. European cities generally have stronger incentives to act: the cities tend to be older, meaning their streets weren't designed for auto traffic originally, while fuel costs are higher and public transport better. There's also a push to reduce emissions, as well as just make cities more pleasant places to live, with cleaner air and less traffic.

Driving a car puts a burden on a city. Calculations by Zurich urban planners show that a person using a car takes up 115 cubic meters (roughly 4,000 cubic feet) of urban space in Zurich while a pedestrian only takes up 3 cubic meters. European cities also realized they could not meet increasingly strict World Health Organization guidelines for fine-particulate air pollution if car use wasn't curtailed.

In Zurich, carless households have increased from 40% to 45% in the past decade, and those who retain their cars are using them less. 91% of the delegates to the Swiss Parliament take the tram to work. There is grumbling among those who at least occasionally want to or need to drive and find themselves running an obstacle course, but most citizens like the new order. Store owners in Zurich had worried that the closings would mean a drop in business, but banning cars increased foot traffic by 30% to 40%, with business rising proportionately. With politicians and most citizens still largely behind them, Zurich's planners continue their determination to drive cars out of the city and give it back to pedestrians.

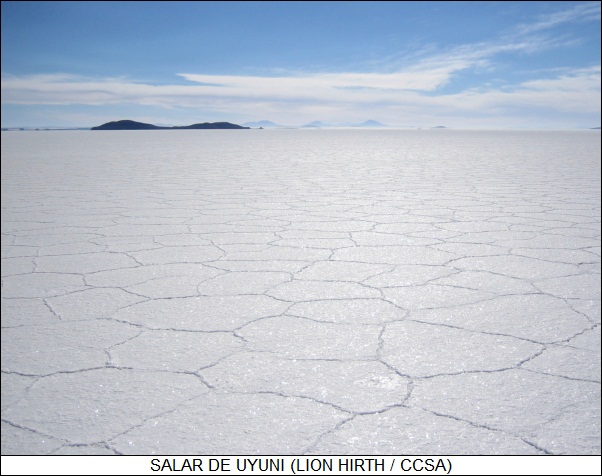

* LITHIUM RUSH: The South American nation of Bolivia isn't the richest country on the planet, but as reported by an article from AAAS SCIENCE ("Dreams Of A Lithium Empire" by Jean Friedman-Rudovsky, 18 November 2011), in an age of booming demand for lithium batteries, it does have a potential treasure: the world's biggest lithium deposit. The catch is extracting the metal from it.

Bolivia's lithium treasure trove is not at the bottom of a mine; instead it's in the form of Salar de Uyuni, a huge salt flat -- over 10,000 square kilometers (4,000 square miles) in extent, dwarfing America's Bonneville Salt Flats -- at an altitude of 3,500 meters (12,000 feet) in the Andes. It's all that's left of a prehistoric lake that evaporated away, leaving behind salts full of minerals, including an estimated 100 million tonnes of lithium. Getting at those minerals is not a trivial task, with the Bolivian government relying on the skills of Guillaume Roelants -- a Belgian-born nuclear engineer, now a Bolivian citizen, who has been thinking about exploiting Salar de Uyuni for decades.

Roelants began his quest in the 1980s, obtaining funding from the Belgian government in 1989 to set up a pilot plant to extract lithium from the Salar de Uyuni, the market for the metal at that time being in ceramics and aluminum production. The Bolivian government wasn't interested, so Roelants went on to set up Bolivia's borax industry and work as an agricultural adviser in the Salar de Uyuni region. In the meantime, Chile's Sociedad Quimica y Minera (SQM / Society of Chemicals & Minerals) became the world's biggest producer of lithium carbonate, the company obtaining it from salt flats in Chile's high Atacama Desert.

In 2005, Evo Morales, a socialist, became president of Bolivia; communities around Salar de Uyuni approached the government with proposals for extracting lithium from the salt flats, and in 2008 the government provided funding for setting up a pilot plant, with Roelants in charge. The pilot plant is now coming up to speed. Although lithium can be mined out of the ground, it's more commonly obtained by solar evaporation of salt flat brines. The Salar de Uyuni plant consists of about ten evaporation ponds in a series, with salt-laden brine pumped in one end. The evaporation of the brine isolates unwanted ions -- sulfates, magnesium, and potassium -- in stages, with lithium chloride produced at the output, to then be turned into marketable lithium carbonate.

SQM actually looked over the Salar de Uyuni and gave it a thumbs-down. While there's an enormous amount of lithium at Salar de Uyuni, the problem is that there's even more magnesium, and the big trick in lithium production is sorting it out from chemically similar magnesium. The ratio of magnesium to lithium at Salar de Uyuni is 20:1, while at Atacama it's only 6:1. Evaporation rates in the Andes highlands can also be slow, reducing production throughput, and of course rain shuts everything down for the duration -- though the region is normally dry.

Some observers see the Bolivian government's nationalistic insistence on going it alone in extracting the lithium from Salar de Uyuni as wrongheaded, suggesting that the Bolivians would have been better off setting up a partnership with a mining company that had the expertise. Indeed, Roelants' operation runs on a shoestring budget -- once again, Bolivia is a poor country -- and the program is behind schedule. Even more discouraging, the global market for lithium carbonate is saturated for the moment.

Roelants, having spent decades pushing for lithium production from Salar de Uyuni, is not discouraged, believing that things can be made to work. As far as the saturated market goes, with rising demand for lithium batteries it's a fair bet that's a temporary condition. Although SQM's Atacama production can support the market now, that may not be the case in ten years, and Atacama's supply of lithium is not unlimited. Roelants has a reasonable case for believing that Bolivia will become a lithium powerhouse.

* THE CALIFORNIA CONUNDRUM (1): The difficult state of California politics has been discussed briefly here in the past. A survey in THE ECONOMIST ("The People's Will", 20 April 2011) took a detailed look at how California's experiment in "direct democracy" went so badly wrong.