* 22 entries including: torpedoes, human microbiome, responsive space revisited, manufacturing coming back to USA, Seattle cop tweets / digital gear for convicts, smart irrigation / multicropping, open-source medtech, Chinese fossil forest, EcoATM for cellphone recycling, techshops, and printed electronics.

* NEWS COMMENTARY FOR NOVEMBER 2012: Barack Obama was, as all now know, re-elected President of the United States on 6 November -- as predicted by the bookmakers, with a slender margin in the popular vote but a comfortable margin in the electoral vote. A video taken by one of the Obama campaign staffers showed the president, seemingly overwhelmed by the "tension release" of the end of campaign pressure, thanking his people with tears streaming down his face, a human touch for a man often seen as emotionally remote. The public could share a bit of that relief, given the entirely negative tone of the political race. The fact that negative campaigns are nothing unfamiliar makes them no less wearisome.

The demographics behind the election were interesting. According to BLOOMBERG BUSINESSWEEK, in 1980 whites cast about 90% of the total votes; in 2012 the proportion had fallen to 72%. Of those who voted for Romney, 89% were white, while 56% of those who voted for Obama were white -- in effect, they put Obama into the White House, just as they had in 2008, more whites voting for Obama than all other groups combined. From those other groups, 71% of Latinos cast their vote for Obama, while 73% of Asians and 93% of blacks favored Obama. The demographics suggest that the closeness of the popular vote between Democrat and Republican is a misleading indicator for future elections: given that the proportion of white voters is going to fall further, the Republicans will either become marginalized, or more hopefully read the writing on the wall to stop their shift to the extreme and seek moderation instead.

* In any case, as discussed in TIME.com, one immediate consequence is that the Affordable Care Act of 2010, AKA "ObamaCare", is going to go forward. ObamaCare had already survived a "near-death crisis" with US Supreme Court approval, Chief Justice John Roberts tipping the scales in favor of it with his vote, as reported here in June. However, during the presidential campaign Republican presidential hopeful Mitt Romney promised to kill ObamaCare, saying he would set up some undefined program in its place. The uncertainty over the survival of ObamaCare had the health-care system walking on eggshells, and some Republican governors said they weren't going to implement it -- yes, partly for ideological reasons, but also partly because the governors rightly didn't know if ObamaCare was going to be around after 2012.

Now ObamaCare is on track towards implementation of the "individual mandate" in 2014. Assuming that things work out reasonably well with the program -- nobody could sensibly expect perfection -- ObamaCare should be solidly entrenched by the next election season in 2016 and not such a political football to toss around. Of course, the politicians will tweak the system in the future, it would be absurd to think they won't, but with experience the tweaks may get the system working better. We'll see.

Over the short term, Congress and the White House have to hammer out a preliminary budget agreement before the end of the year, or the government will fall off a "fiscal cliff" -- first a collision with the mandated debt ceiling, then the end of the Bush II tax cuts, and finally a steep set of automatic government funding cuts -- that experts on both sides of the political aisle see as disastrous. Obama is entirely agreeable to budget cuts, though exactly what will be cut is negotiable, but wants to raise taxes on the wealthy.

Although Republicans have been insistent on "no new taxes", some Republican politicians are demonstrating spine and publicly breaking ranks on the issue, and more generally senior Republican politicians are sounding agreeable on closing loopholes and other tweaks. There's a general sense of optimism that a deal can be worked out -- but that was the general sense in the last round of budget talks before the election, and they were a fiasco. However, in this case we'll see what happens quickly.

* Also as reported by BUSINESSWEEK, the US Federal Emergency Management Agency (FEMA) came out of Hurricane Sandy, which trashed the US Northeast in October, with considerable shine -- a far cry from FEMA's lamentable showing in response to Hurricane Katrina in 2005. New Jersey Governor Chris Christie, not known as a toady to the White House, told ABC's GOOD MORNING AMERICA: "I have to say the administration, the president himself, and FEMA Administrator Craig Fugate have been outstanding. We have a great partnership with them."

FEMA has been basking in praise for its coordination with relief organizations, local government, and state government, with the agency efficiently bringing in Federal personnel and resources. As of the end of October, 2,276 FEMA personnel had been deployed to the disaster area, with the agency directing hundreds of rescues, bringing in 2.5 million liters of water along with 1.5 million meals, with almost 11,000 people in FEMA shelters.

FEMA's difficulties in the past were partly due to the facts that it is a fairly young agency, its support from the top has sometimes been uncertain, and its mandate has not always been clear. Traditionally, when a disaster struck, the Federal government took ad-hoc relief measures, providing emergency funding and sending in whatever agencies seemed appropriate. No organization was fully tasked with preparation for disaster response. During the Carter Administration, state governors complained to Washington DC that the Federal government needed to clean up its confused act on disaster relief, and so President Jimmy Carter signed FEMA into existence in 1979.

Even then, FEMA wasn't a hands-on operation, being mostly focused on disbursing funds for disaster relief, and in reflection of the Cold War mindset of the era, spent much of its time planning for relief in response to a nuclear attack on the USA -- which sounds absurd now, but at the time it was taken much more seriously. However, FEMA was the target of public criticism in its poor handling of Hurricane Andrew, which hit Florida in 1992, one Florida official demanding to know: "Where the hell is the cavalry?"

The bad taste left by Hurricane Andrew was one of the factors that led to George H.W. Bush's defeat in the election of 1992. The new president, Bill Clinton, recognized this and decided to boost FEMA, raising it to a cabinet-level agency and assigning James Lee Witt, Arkansas' emergency manager, to run it. With the Cold War over, Witt de-emphasized the nuclear conflict mission and turned FEMA into a more active organization.

Unfortunately, FEMA lost altitude again during the Bush II Administration, with the agency folded into the Department Of Homeland Security and refocused on dealing with terrorist attacks. President George W. Bush appointed administrators who had no emergency management experience; in the wake of Hurricane Katrina, FEMA's well-publicized bumbling reduced the agency to a national joke. President Obama put FEMA back on the front burner, bringing in Fugate, the head of Florida's emergency management, who proved an able administrator.

Whether FEMA's efficient response to Hurricane Sandy actually gave Obama an edge in his re-election is impossible to say, but it certainly couldn't have hurt. It also shines a light on the national question of the role of the Federal government, suggesting the notion that the government is useless at best and malign at worst is phrasing a legitimate debate on the functions of government in the most simple-minded terms. We have organizations such as FEMA because they are needed, and it is difficult to see how its functions could be effectively performed by a private organization. Even to the extent that non-governmental organizations such as the Red Cross assist in disaster relief, they would be hard-pressed to have the resources or authority to be as effective as a properly run and funded government agency.

* Incidentally, in response to Hurricane Sandy, the 5 November issue of BUSINESSWEEK firmly grasped the nettle of climate change by running a cover marked with the title: IT'S GLOBAL WARMING, STUPID! -- in bold black letters on a red background. The magazine's editors had slapped global warming denialists up against the side of the head.

I felt satisfaction. I had hedged my bets on global warming, realizing how difficult climate forecasting is, but I finally had to give up equivocation. It wasn't so much because the science seems to be consistently pointing to warming, though I judge that as the case; it was more because the denialists could produce little but ranting, sniping, and jeering in reply, so in the end I felt like an idiot saying anything that seemed to endorse them: "Man, these guys sound like creationists!" "That's because a lot of them are creationists."

It's a little awkward to see the failure of the critics as a convincing factor in the case for global warming -- but if the critics can't offer me a credible alternative, what else could I conclude? Still, the problem remains of what to do about climate change; although the science keeps getting clearer, the politics remain just as murky.

* Also according to BUSINESSWEEK, the US Supreme Court is now considering a case on whether the University of Texas can favor ethnic minorities in admissions. Of course, there are people willing to intensively argue the matter one way or another; as it turns out, big business is one of the factions arguing for it. An "amicus curiae / friend of the court" document relevant to the case was presented by a group of over fifty companies including Aetna, Dow Chemical, General Electric, Halliburton, Merck, Microsoft, Northrop Grumman, Procter & Gamble, Walmart, and Xerox.

The brief made its case on business grounds, the firms arguing that they wanted access to a wide labor force, and that having minority employees helped the companies sell to minority groups. An official at Exelon, a power company involved in the brief, said that Exelon wasn't "in favor of racial preferences, per se, let alone quotas" but that universities should be able to consider "race as one factor among many." That echoes a 2003 ruling by the Supreme Court. One reason for fearing a judgement against affirmative action, which the businesses have downplayed, is that it may leave companies only too vulnerable to reverse-discrimination suits. In short, businesses are hanged if they do, hanged if they don't -- but see diversity as the way of the future, and so they prefer "do" over "don't".

* GIMMICKS & GADGETS: According to WIRED.com, a Dutch engineering designer named Daan Roosegaarde has come up with a distinctly novel idea: roads that glow in the dark. His studio has developed a photoluminescent powder to create road markings that, after charging up in the sunlight during the day, will glow for up to ten hours after dark. Roosegaarde said: "It's like the glow in the dark paint you and I had when we were children, but we teamed up with a paint manufacturer and pushed the development. Now, it's almost radioactive."

Special paint will also be used to paint markers like snowflakes across the road's surface. When temperatures fall to a certain point, the markers will become visible, indicating that the surface will likely be slick. Roosegaarde says this technology has been around for years, on things like baby food packaging; the studio has just upscaled it.

A few hundred meters of glow-in-the-dark road will be set up in the Netherlands in 2013, with this experimental "smart highway" then being fitted with more of Roosegaarde's ideas, such as induction recharger lanes for electric vehicles; interactive lights that switch on as cars pass by; and wind-power lighting systems. Roosegaarde believes that, since cars are getting smarter, roads need to get smarter, too, and he's come up with almost two dozen ideas to give them smarts. He's received inquiries from all over the world, commenting that India, prone to power blackouts and traffic accidents, is very interested in his glow-in-the-dark road markings.

* As reported by WIRED.com, an Austrian firm named IPTE Schalk has unveiled a new version of "DeerDeter", a system to reduce collisions between cars and wild animals such as deer that's been tested in Europe and the USA over the last five years. It has demonstrated reductions in collisions by two-thirds or more.

The DeerDeter system consists of a set of posts, each mounting a module at top, planted along stretches of road where such collisions are common. When a car approaches in the dark, its headlights activate the module on the first post, which emits a wail and blinks lights to alarm deer or other animals intending to cross the road. Each module in the line of posts is similarly activated as the car passes down the road. When there are no cars, animals can cross the road unhindered. The modules are solar-powered and have a wireless link for reporting their operating status.

* As discussed in BUSINESS WEEK, in the late 1980s Jeff Brennan was a student at the University of Michigan, working towards a master's degree under the direction of Noboru Kikuchi -- an engineer who was investigating methods to encourage bone growth to repair damage from diseases such as osteoporosis. Bones have an elaborate internal structure that makes them light as well as strong; Brennan got to thinking that the same sort of ideas could be applied to the structural elements of machines.

When Brennan completed his master's program in 1992, he went to Altair Engineering, an international engineering services firm with roots in Michigan. Altair officials liked Brennan's ideas and hired him on, with Brennan tapping Kikuchi to help develop an engineering design software package named "OptiStruct" -- which used algorithms derived from bone structures to trim away weight from structural elements. For example, OptiStruct showed how to cut out a support bracket in a racecar to reduce weight by 37%.

There was some resistance to the notion at the outset, customers not being comfortable with the curvy, porous structures generated by OptiStruct. People tend to see solid parts as reassuringly solid and may not be quick to appreciate that an OptiStruct-optimized part is every bit as strong, as well as lighter. Now manufacturers from Airbus to Volkswagen are on board, and OptiStruct doesn't seem to have anywhere to go but up. Other firms are also investigating such technologies, finding biomimicry a profitable ground for further exploitation.

* MADE IN THE USA? While America is still struggling with the global economic slowdown, the crunch is leading to positive changes in the nation's economy. As reported by an article from BUSINESS WEEK ("Made In China? Not Worth The Trouble" by David Rocks & Nick Leiber, 28 June 2012), one of these positive changes is the migration of jobs that were once outsourced to China back to the USA.

When Lightsaver Technologies, a US manufacturer of household emergency lighting, was set up in 2009 by Sonja Zozula and Jerry Anderson, it seemed the logical decision to manufacture in China, Anderson saying that on a simple analysis it was "30% cheaper". Lightsaver gradually found out that the simple analysis was misleading, Anderson saying: "But factor in shipping and all the other BS that you have to endure. It's a question of: How do I value my time at three in the morning when I have to talk to China?"

Sheer distance and national barriers imposed significant costs of their own on Lightsaver. Shipments could get stuck in customs for weeks, and communications with the Chinese were always a bottleneck. Anderson said: "If we have an issue in manufacturing, in America we can walk down to the plant floor. We can't do that in China."

According to Anderson, once all the true costs are factored in, manufacturing in the USA is 2% to 5% cheaper. This last winter, Lightsaver moved manufacturing to Carlsbad, California, only a short drive down the road for Anderson. Lightsaver is not alone; Harry Moser -- founder of the Reshoring Initiative, a group of companies and trade associations pushing to bring factory jobs back to America -- says that American manufacturing productivity is "increasingly competitive" on the global scale, with a "dramatic increase" in the amount of work coming home over the past two years. Says the boss of one California manufacturing firm: "Now people are trying to come back. Everyone knows they're miserable."

Since 2008, Ultra Green Packaging, which makes plates and containers from wheat straw and other compostable materials, has been manufacturing in China, but by the end of 2012 the ten-person company will be manufacturing in North Dakota. That will not only cut shipping costs, it will protect the company's intellectual property, which the company's boss, Phil Levin, says is only too easy to steal: "All anybody needs to do is find a different factory and make a mold."

Indeed, the less regulated environment in China is by no means necessarily a benefit to businesses. Unilife, which makes prefilled syringes with retractable needles that protect medical personnel from accidentally sticking themselves, originally manufactured in China -- but found out that made it more difficult to meet stringent US Food & Drug Administration (FDA) rules. From March 2011, Unilife has been pumping out syringes from a plant the company set up in York, Pennsylvania. Says Unilife boss Alan Shortall: "The very thing in the USA that oftentimes we complain about -- the complexity of the rules and regulations -- works for us. FDA compliance is the main reason we're here."

Pigtronix, a maker of electronic guitar pedals, found it almost impossible to maintain good quality standards with Chinese production, with about a third of their pedals made there not meeting spec. The company moved manufacturing back to Long Island; manufacturing costs there are three to six times higher in themselves, but all the product is carefully tested, with a guitarist checking out each pedal before it goes out the door. One big problem for Pigtronix was that Chinese manufacturers would only build in fairly large minimum quantities, which gave the small firm serious inventory problems. Now Pigtronix only builds what the company believes can be sold.

The movement of manufacturing back from China hasn't got a great deal of momentum yet; it is generally the small firms that are coming back home. Big companies that are used to dealing in the global marketplace can handle working in China, with those doing big business in China having a strong incentive to manufacture there. For the moment, all the shift back to the USA has accomplished is to partly balance the loss of jobs to China. However, with China gradually becoming a more expensive place to do business, American businesses will have growing incentives to come back home, such incentives growing further as advanced automation techniques, such as 3D printing, become more common in manufacturing and eliminate the advantage of offshoring.

* TWEETS BY BEAT: As reported by ECONOMIST.com, the Seattle police force has had some rough flying over the past few years, taking a beating from complaints about heavy-handedness and, much worse, a spate of officers killed in the line of duty. On the principle that better public communications would mean more harmonious policing, the department has adopted Twitter as a tool. The scheme is named "Tweets By Beat", with a common tweet feed sorted out by software into reports on 51 distinct cop beats, and a map interface allowing citizens to zero in on tweets from a particular street. The sorting software is designed with several constraints in mind:

A department spokesman says it is the combination of the ordinary and escalated incidents that provides residents with a sense of what police do in the course of their work. Citizens can also get personally useful information from the tweets, such as avoiding the scene of a traffic accident -- Seattle traffic is bad enough normally. The police have found that the tweets get much more attention than the department website, very likely because tweets are much easier to inspect on a smartphone.
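
The article doesn't say how the sorting software actually works, so the following Python sketch is only a guess at the general idea: reports from one common feed are grouped by beat, so that each beat ends up with its own stream. The beat codes, the Incident fields, and the function name are all hypothetical, made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    beat: str     # hypothetical beat code, not a real Seattle beat
    summary: str  # short text suitable for a tweet


def sort_by_beat(feed):
    """Group a common incident feed into per-beat lists, keeping feed order."""
    by_beat = defaultdict(list)
    for incident in feed:
        by_beat[incident.beat].append(incident.summary)
    return dict(by_beat)


# A made-up three-item feed covering two beats.
feed = [
    Incident("B1", "Traffic accident reported"),
    Incident("K3", "Noise complaint"),
    Incident("B1", "Burglary report"),
]
streams = sort_by_beat(feed)
```

A real system would also have to map each incident's location to a beat and respect whatever constraints the department set, neither of which is attempted here.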

* INMATE TECH: The US prison system is a major operation, and to no surprise there are industries dedicated to supporting it. According to BUSINESS WEEK, one of the firms that supports the prisons is JPay of Miami, which employs about 200 people. JPay has traditionally handled inmate money transfers, email communications, and video visitations, all set up to make sure corrections officers can monitor them. The company provides services for more than a million prisoners in 35 US states.

JPay is now branching out with its "JP3" MP3 player, which family or friends of a prison inmate can buy for $40 USD. Tech designed for prison use has a few unique requirements: it can't be used as a weapon, it can't be used to hide contraband, and it can't communicate with the outside world. The JP3 is too small to be used as a bludgeon, doesn't include any substantial metal elements, and has a transparent case so nothing can be hidden in it. As far as downloads go, inmates can buy tunes for a buck or two each from a kiosk set up in a prison common area. The prices are a bit steep, but JPay officials insist they're not looting a captive (so to speak) market, that they typically share some of the revenue with a prison.

JPay didn't invent the prison MP3 player, the first having been introduced by the Keefe Group, a Saint Louis-based supplier of food and personal-care products to prison commissaries. Keefe officials wouldn't comment to BUSINESS WEEK; JPay officials, much less reticent, say they are now getting ready to introduce the "JP4", roughly equivalent to a small kiddie tablet in clear plastic, which will sell for $50 USD. Like the JP3, the JP4 will have no internet access, with prisoners downloading content from the kiosks.

Small tablets could have a big impact in the prison system, giving prisoners access to news from the outside world and a list of e-books, of course available only through the one-way portal of a prison server. Corrections officials don't generally see such tech as coddling prisoners: inmates have to pay their debt to society, but there's no sense in letting boredom squeeze them into bombs waiting for an occasion to go off, and any upward education they can get is all for the good.

* HUMAN MICROBIOME (3): As a complement to the SCIENTIFIC AMERICAN article on the human microbiome, Carl Zimmer published an article in THE NEW YORK TIMES ("Tending the Body's Microbial Garden", 18 June 2012) that also examined the microbiome research effort.

Julie Segre, a senior researcher at the National Human Genome Research Institute, has been studying the human microbiome, and has become something of a "believer" in its importance to its host. She says: "I would like to lose the language of warfare. It does a disservice to all the bacteria that have co-evolved with us and are maintaining the health of our bodies."

For example, Segre points out how essential our skin bacteria can be: "One of the most important functions of the skin is to serve as a barrier." Bacteria feed on the waxy secretions of skin cells and then produce a moisturizing film that keeps our skin supple and prevents cracking, preventing the entry of unwelcome pathogens. Segre believes we should be cultivating our useful bacterial commensals. For example, to ward off dangerous skin pathogens like Staphylococcus aureus, Segre envisions applying a cream loaded with nutrients for harmless skin bacteria to feed on. Segre says that it would promote "the growth of the healthy bacteria that can then overtake the staph."

Segre's views are reflected in a new concept of health care known as "medical ecology". Instead of conducting indiscriminate "carpet bombing" on microorganisms with antibiotics, Segre and others want to become microbial "wildlife managers". They don't believe antibiotics are obsolete, they just suggest that tweaking the microbiome offers potential benefits of its own, dealing with problems with fewer side effects.

Those involved in microbiome research often find surprises. A recent study published by Dr. Kjersti Aagaard-Tillery, an obstetrician at Baylor College of Medicine, and her colleagues described the vaginal microbiome in pregnant women. Aagaard-Tillery didn't think there would be much change from women who weren't pregnant, but what the group discovered was the exact opposite.

Early in the first trimester of pregnancy the diversity of vaginal bacteria changes significantly, with common species dwindling and uncommon species growing. One of the dominant species in the vagina of a pregnant woman, it turns out, is Lactobacillus johnsonii. It is usually found in the gut, where it produces enzymes that digest milk -- so why would it then be found in the vagina of pregnant women? Aagaard-Tillery doesn't know the specifics, but strongly suspects that the vagina encourages its growth, cultivating the bacterium so the infant will get a dose of it at birth, helping it to digest mother's milk.

The same sort of processes take place during breast feeding. In a recent study of 16 lactating women, Katherine M. Hunt of the University of Idaho and her colleagues reported that the women's milk had up to 600 species of bacteria, as well as sugars named "oligosaccharides" that babies cannot digest. The sugars are certainly not for the baby; it appears they are to nourish certain beneficial gut bacteria in the infants, the scientists said. The more the good bacteria thrive, the harder it is for harmful species to gain a foothold.

As babies develop, an elaborate microbiome develops along with them, resulting in the great diversity of microorganisms that reside in adults. That diversity is hard to appreciate. In any one person's mouth, for example, researchers found there are about 75 to 100 species of bacteria, and some that predominate in one person's mouth may be rare in another person's. Even that number may be a gross underestimate, with the rate of discovery suggesting that the human mouth is normally home to thousands of species of bacteria. Susan M. Huse of the Marine Biological Laboratory in Woods Hole, Massachusetts, who has worked on this research, commented: "The closer you look, the more you find." [TO BE CONTINUED]

* THE TORPEDO (18): With the end of World War II, American torpedo development was scaled back but not abandoned, the focus being on the most promising technologies -- most significantly including wire guidance, targeting discrimination, and onboard attack logic. Once the electronics revolution began in earnest in the 1960s, the capability of torpedoes increased dramatically, along with their cost. Incidentally, unguided torpedoes remained in service for several decades after the war, with the US Navy finally giving them up in 1980. Ironically, the last unguided torpedo in US Navy service was the notorious Mark 14.

The US Navy focused on two primary classes of torpedoes: heavyweight submarine / surface vessel launched torpedoes, and lightweight air / surface vessel launched torpedoes. The first of the heavyweights -- the lightweights are discussed later -- was the GE-developed "Mark 35", a 53.3-centimeter (21-inch) torpedo with active / passive acoustic homing, a gyro-controlled runout capability, and a seawater battery. It had a range of 13,750 meters (15,000 yards) at 48 KPH (30 MPH / 26 KT). A batch of about 400 was built in 1949, with this torpedo serving for about a decade.

The Mark 35 was followed late in the 1950s by the "Mark 37", though the Mark 37 was a 48.3-centimeter (19-inch) torpedo, and more specifically a replacement for the Mark 27 Mod 4. In any case, the Mark 37 has been described as the first truly modern homing torpedo, its guidance system featuring:

The Mark 37 also featured a new propulsion system and body. It had a weight of 648 kilograms (1,430 pounds), including a 150 kilogram (330 pound) warhead; and electric propulsion with two selectable speeds -- 31 KPH (20 MPH / 17 KT) for a range of 21,100 meters (23,000 yards), or 48 KPH (30 MPH / 26 KT) for a range of 9,175 meters (10,000 yards).
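
As a quick check on the figures above, the following Python sketch converts the quoted speeds between units and estimates the running time at each speed setting. The function names are illustrative only, and the run time is simply range divided by speed, an idealization that ignores acceleration and any search pattern.

```python
# Conversion factors, exact by definition.
KM_PER_NAUTICAL_MILE = 1.852
KM_PER_STATUTE_MILE = 1.609344
METERS_PER_YARD = 0.9144


def kph_to_knots(kph):
    """Kilometers per hour to knots."""
    return kph / KM_PER_NAUTICAL_MILE


def kph_to_mph(kph):
    """Kilometers per hour to statute miles per hour."""
    return kph / KM_PER_STATUTE_MILE


def yards_to_meters(yards):
    """Yards to meters."""
    return yards * METERS_PER_YARD


def run_time_minutes(range_m, speed_kph):
    """Idealized time in minutes to cover range_m at a constant speed."""
    return (range_m / 1000.0) / speed_kph * 60.0


# Mark 37 speed settings as quoted in the text: (speed in KPH, range in meters).
MARK_37_SETTINGS = [(31, 21100), (48, 9175)]

if __name__ == "__main__":
    for kph, range_m in MARK_37_SETTINGS:
        print(f"{kph} KPH = {kph_to_mph(kph):.1f} MPH = {kph_to_knots(kph):.1f} KT; "
              f"{range_m} m takes about {run_time_minutes(range_m, kph):.0f} minutes")
```

On these numbers the slow setting works out to roughly 41 minutes of running time, against about 11 minutes at the fast setting.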

The Mark 37 became the US Navy's primary submarine-launched antisubmarine warfare torpedo. Following launch, it performed a gyro-controlled runout, followed by a snaking or circular search pattern using passive acoustic search; once a target was acquired, the torpedo closed to about 640 meters (700 yards) under passive homing and then turned on active homing for the terminal attack.

* The problem with the straight runout is that the target might get wise before the runout was completed and take effective evasive action. To deal with this problem, the Navy developed the "Mark 39", which was a Mark 27 Mod 4 fitted with a wire guidance system. A small batch was fielded from 1956, mostly for training and evaluation. Of course wire guidance required that a submarine be fitted with a control system and that torpedo tubes be modified to handle the wiring connection.

The Mark 39 was a "bearing rider", with the torpedo steered to stay strictly on the bearing of the launch submarine. This was a simple approach, but it had a number of limitations:

However, the Mark 39 did demonstrate just how effective wire guidance could be in dealing with a maneuvering target. The Mark 39 led to the "Mark 37 Mod 1", a wire-guided version of the Mark 37, which went into service in 1960 and became a very important US Navy weapon. The Mod 1 was longer, heavier, and slower than the Mod 0, but it was more effective against agile adversary submarines. All Mark 37 Mod 0s were updated to the Mod 1 guided spec and redesignated "Mark 37 Mod 3".

The improved "Mark 37 Mod 2" was delivered from 1967 and featured many small changes, one being improved transducers that increased target acquisition range and avoided loss of homing sensitivity with depth. The Mark 37 was also the basis for the "Mark 67 Submarine Launched Mobile Mine (SLMM)", in which the torpedo was launched into an inaccessible area, to come to rest on the bottom and wait for a vessel to pass by. Although the Mark 37 is now long out of service, the Mark 67 SLMM remains in the US Navy's inventory. Ironically, the torpedo had started out its history as what we now call a mine; with the Mark 67, it had come full circle back to being a mine of sorts again. [TO BE CONTINUED]

* Space launches for October included:

-- 04 OCT 12 / GPS 2F-3 (USA 239, NAVSTAR 67) -- A Delta 4 booster was launched from Cape Canaveral to put the "GPS 2F-3" AKA "USA 239" AKA "Navstar 67" navigation satellite into orbit. It was the third Block 2F spacecraft, with the Block 2F series featuring a new "safety of life" signal for civilian air traffic control applications. The Delta 4 was in the "Medium+ (4,2)" configuration, with a payload shroud 4 meters (13.1 feet) in diameter and two solid rocket boosters. There was a booster anomaly during ascent, but the payload made its proper orbit.

-- 08 OCT 12 / DRAGON CRS-1, ORBCOMM -- A SpaceX Falcon 9 booster was launched from Cape Canaveral on its third flight, carrying the first operational "Dragon" cargo capsule with 400 kilograms (880 pounds) of cargo to the International Space Station (ISS). The capsule docked to the ISS on 10 October. It returned to Earth on 28 October, landing in the Pacific Ocean off the coast of Mexico and being recovered.

The launch also included a prototype for a second-generation Orbital Sciences Orbcomm low-orbit data relay comsat, with a launch mass of 165 kilograms (363 pounds). Unfortunately, one of the Falcon 9's first stage engines failed on ascent; the Dragon capsule made proper orbit, but the Orbcomm satellite did not, and fell back to Earth the next day. Some test data was returned from the Orbcomm, but the mission was otherwise written off as a total loss.

-- 12 OCT 12 / GIOVE FM3, FM4 -- A Soyuz 2-1b Fregat booster was launched from Kourou in French Guiana to put two "Galileo In-Orbit Validation Experiment (GIOVE)" navigation satellites into orbit. Each spacecraft had a launch mass of 700 kilograms (1,545 pounds); they were built by Thales Alenia Space in Italy. They joined two other Galileo test satellites launched in 2011, with the quartet being used for early validation tests of the Galileo system.

-- 14 OCT 12 / INTELSAT 23 -- A Proton M Breeze M booster was launched from Baikonur in Kazakhstan to put the "Intelsat 23" geostationary comsat into space. The spacecraft was built by Orbital Sciences and was based on the Orbital Star-2 comsat platform. Intelsat 23 had a launch mass of 2,680 kilograms (5,910 pounds), a payload of 15 Ku-band / 24 C-band transponders, and a design life of 18 years. It was placed in the geostationary slot at 53 degrees West longitude to provide communications services to the Americas, as well as western reaches of Europe and Africa, plus islands in the eastern Pacific. Intelsat 23 replaced Intelsat 707 at that geostationary position; Intelsat 707 had been launched in 1996 and was beyond its design life.

-- 14 OCT 12 / SHIJIAN 9A, 9B -- A Chinese Long March 2C booster was launched from Taiyuan to put the "Shijian 9A" and "Shijian 9B" technology test satellites into space; "shijian" is Chinese for "practice". They were placed into a Sun-synchronous orbit, suggesting they were actually surveillance satellites or testing surveillance gear.

-- 23 OCT 12 / SOYUZ ISS 32S (ISS) -- A Soyuz Fregat booster was launched from Baikonur to put the "Soyuz ISS 32S" AKA "Soyuz TMA-06M" manned space capsule into orbit on an International Space Station (ISS) support mission. It carried commander Oleg Novitskiy (first space flight) and flight engineer Evgeny Tarelkin (first space flight) of the Russian RKA space agency, along with NASA astronaut Kevin Ford (second space flight). The capsule docked with the Poisk compartment of the station's Russian Zvezda module two days later, its occupants joining the ISS "Expedition 33" crew of commander Sunita Williams, Japanese astronaut Akihiko Hoshide and cosmonaut Yuri Malenchenko.

-- 25 OCT 12 / BEIDOU G6 -- A Chinese Long March 3B booster was launched from Xichang to put the "Beidou G6" geostationary navigation satellite into orbit.

-- 31 OCT 12 / PROGRESS 49P (ISS) -- A Soyuz-U booster was launched from Baikonur to put the "Progress 49P" AKA "Progress-M 17M" tanker-freighter spacecraft into orbit on an International Space Station (ISS) supply mission. It was the 49th Progress mission to the ISS. It was launched on a fast-track trajectory, docking to the ISS Zvezda module less than six hours after launch.

* OTHER SPACE NEWS: The US Air Force's effort to develop a reusable space-launch system was last mentioned here in late 2011. Latest news flash: the program's dead. It appears that it was not merely a casualty of general military budget cuts, but also of the fact that analysis suggested the reusable booster system was unlikely to be cost-effective. It's the same old story for reusable space launch systems: they're a great idea in theory, but nobody can figure out a good way to get from here to there. The real irony is that reduced-cost expendable boosters seem to be undergoing a bit of a boom. The Air Force is continuing to perform technology investigations, however, in hopes of eventually finding the magic formula.

* SMART IRRIGATION: Anybody living around America's Great Plains knows about "center pivot irrigation" -- involving a long pipe on wheels decorated with sprinkler heads that pivots around a water head, resulting in neatly circular fields of crops as seen from the air. Center pivot irrigation is effective, but as discussed in Babbage, THE ECONOMIST's technology blogger, some think it could be made more intelligent and more efficient.

In 1999 Craig Kvien of the University of Georgia got to thinking about the advantages of focusing water on where it's needed in the field instead of just blasting it out as the center pivot rig rolls around. Kvien got in touch with FarmScan AG, an Australian manufacturer of agricultural equipment, to push development of what he calls "variable-rate irrigation (VRI)". Dozens of Georgia farmers are now using VRI, with inquiries coming in from around the world.

VRI demands that a farmer generate a topographic map of his land registered with GPS coordinates and with a resolution of less than a meter, with an eye to finding low spots where water collects and high spots where it runs off. Fallow areas, uncropped parts, watercourses, dirt tracks and wetlands also need to be factored into the plot, and it can be useful to perform soil moisture probes to determine which parts of the field have soil that retains water better than others. Once the map is produced, it drives a control system that controls the water flow to the sprinkler heads on the center-pivot rig.
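As a rough illustration of how such a map might drive the sprinkler heads, here is a minimal Python sketch. It is not FarmScan's or Kvien's actual control scheme: the `Zone` layout (arcs and rings around the pivot), the function names, and the flow fractions are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """One cell of a hypothetical prescription map, in pivot-centered coordinates."""
    start_deg: float  # arc start angle (inclusive)
    end_deg: float    # arc end angle (exclusive)
    inner_m: float    # inner radius from the pivot (inclusive)
    outer_m: float    # outer radius from the pivot (exclusive)
    rate: float       # fraction of full flow: 0.0 = off, 1.0 = full


def flow_fraction(zones, angle_deg, radius_m, default=1.0):
    """Return the flow fraction for a sprinkler at the given pivot angle and radius."""
    angle = angle_deg % 360.0
    for zone in zones:
        in_arc = zone.start_deg <= angle < zone.end_deg
        in_ring = zone.inner_m <= radius_m < zone.outer_m
        if in_arc and in_ring:
            return zone.rate
    # Anything not covered by the map gets the default (full) flow.
    return default


def sprinkler_settings(zones, angle_deg, sprinkler_radii_m):
    """Flow fractions for every sprinkler head along the pipe at one pivot angle."""
    return [flow_fraction(zones, angle_deg, r) for r in sprinkler_radii_m]


# Hypothetical map: a dirt track near the pivot gets no water,
# and a low spot farther out that collects water gets half flow.
example_zones = [
    Zone(0.0, 90.0, 0.0, 100.0, 0.0),
    Zone(90.0, 180.0, 100.0, 200.0, 0.5),
]
```

A real system would build the zones from the surveyed topographic map and soil moisture data; the point here is only that the controller reduces to a lookup from rig position to a per-sprinkler flow setting.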

VRI installations are not cheap. Depending on the size of the pivot and the number of bells and whistles involved, it can cost between $5,000 and $30,000 to smarten up a single irrigation system in this way. The payoff, though, is an average 15% reduction in water consumption and also a reduction in fertilizer use, because less is washed away by runoff. Agriculture is by far the biggest user of fresh water -- and as fresh water supplies start to run into limits, anything that can be sensibly done to reduce water usage is going to pay for itself.

* MULTICROPPING: In other agritech news, one of the features of most modern large-scale crop plants, such as wheat or corn, is that a crop is planted and then harvested, with a new crop planted in turn. As discussed in SCIENTIFIC AMERICAN, there are advantages to instead growing "perennials" that don't have to be replanted again and again: the deep roots of perennials help reduce erosion, and they require less fertilizer and water to grow, since they don't have to grow from a seed over and over again. They are also more effective at sequestering carbon.

Farmers in Malawi are already planting pigeon peas, a perennial, between their rows of corn. The pigeon pea plants not only produce nutritious plant protein, they also improve water retention and fix nitrogen into the soil, actually improving the yield of the corn. The really big payoff, however, would come from genetically modifying crops like wheat and corn into perennials. Nobody knows how that could be done right now, and plant geneticists don't believe anyone will figure it out for a few decades. They do believe it can be done, with some claiming that such perennial crops would not only improve agricultural productivity but also sequester enough atmospheric carbon to put climate change on hold. That, however, might be taking an overly optimistic read on things.

* OPEN-SOURCE MEDTECH: We have a tendency to think of "open source" software as the hobbyhorse of tech geeks and not necessarily very professionally constructed. However, as discussed in an article from THE ECONOMIST ("When Code Can Cure Or Kill", 2 June 2012), that mindset tends to overlook the deficiencies of proprietary software.

The case in point is medical technology, which uses an ever-increasing amount of software: 80,000 lines of code in a pacemaker, more than seven million lines of code in a magnetic resonance imaging (MRI) scanner. Anyone familiar with software knows it always has bugs, and the number of bugs tends to increase with the size of the program -- not by a linear factor, either, since the number of possible interactions, and possibilities for errors, tends to increase nonlinearly as programs grow in size.
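One simple way to picture that nonlinear growth, as a toy model rather than anything taken from the article: if a program is treated as a set of components, any two of which might interact, the number of possible pairwise interactions grows roughly with the square of the number of components. The function name below is made up for the sketch.

```python
def pairwise_interactions(components: int) -> int:
    """Number of distinct pairs among `components` parts: n * (n - 1) / 2."""
    if components < 0:
        raise ValueError("component count cannot be negative")
    return components * (components - 1) // 2


# Ten times as many components gives about a hundred times as many pairs.
small = pairwise_interactions(10)
large = pairwise_interactions(100)
```

Real software does not interact all-to-all, so this overstates things, but it shows why doubling the size of a program can much more than double the opportunities for error.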

Much of the time the bugs are not any big deal, just hiccups, but with medical technology there's a real potential for harm. One of the most ghastly known cases was the Therac-25 radiotherapy machine, which back in the 1980s overdosed a number of patients with radiation, killing five of them. Defects in implanted drug-infusion pumps, mostly due to software bugs, have killed hundreds over the last five years. Matters are becoming more menacing as implants and other medical technology become wireless-enabled, raising the possibility of murderous malicious hacking.

Add to this the fact that software projects are often poorly managed, particularly by firms that aren't really in the software business as such, and that supposedly professional programmers can be -- not necessarily are, of course, but can be -- staggeringly incompetent. As the saying goes, if buildings were constructed the way most software is, the first woodpecker that came along would destroy civilization.

The software produced by medical technology companies is as a rule proprietary -- which of course protects it from being stolen by rivals, but also prevents it from being inspected for bugs by outsiders. The US Food & Drug Administration (FDA) could in principle demand to inspect a manufacturer's software, but the FDA isn't really in a good position to perform software inspections, and generally does not do so.

A faction of academics thinks that open-source software is the better idea, since bugs won't be concealed and many hands can help fix them. As a pilot project, the University of Pennsylvania and the FDA collaborated on the "Generic Infusion Pump", the specification of which was based not just on desired functionality but also on specifying every imaginable thing that could go wrong with a drug-infusion pump. The software was then implemented as per specification for an existing drug-infusion pump mechanism. The project also had industrial partners; medical technology companies that don't do software for a living, particularly startups trying to get a fingerhold in the market, have sensible reasons to like open-source software themselves. They can always add special features to the software to differentiate their product from the competition.

University of Wisconsin at Madison researchers are working on a much more ambitious project, the "Open Source Medical Device", which is envisioned as a radiotherapy machine with computed tomography (CT) and positron emission tomography (PET) capability built in. That's a very ambitious goal, but as mentioned here earlier this year, an open-source surgical robot named the "Raven" is already being put through trials. Alas, for the time being no open-source medical technology can be used on humans, due to FDA regulations. Although the FDA doesn't make much effort to inspect software, it does require that the development of medical technology be accompanied by a paper trail that just isn't practical for open-source. For this reason, the Raven is only intended at present for use on animals and cadavers.

Change does seem to be coming to the FDA's attitude. The FDA is supporting an effort being run by the US National Institutes of Health named "Medical Device Plug-&-Play" to create specs that would permit interoperability of medical technology made by different vendors. The FDA is also working with John Hatcliff of Kansas State University on the "Medical Device Coordination Framework", the goal of this effort being an open source hardware platform including elements common to many medical devices, such as displays, buttons, processors and network interfaces, along with the software to run them. By connecting different sensors or actuators, this generic core could then be made into dozens of different medical devices, with the relevant functions programmed as downloadable apps.

That's cracking the door open to open source, though it still leaves open the question of how the FDA can assure the safety of open source medical technology. However, given the rising complexity of medical technology in general, the FDA is presented with problems along such lines whether it embraces open source or not.

[ED: This article cited one researcher working on the Generic Infusion Pump who would like to ultimately see the hardware as open-source, as well as the software: "My dream is that a hospital will ultimately be able to print out an infusion pump using a rapid prototyping machine, download open-source software to it, and have a device running within hours."

As stated that's not realistic, since a 3D printer can't turn out anything that can run software. However, that leads to the interesting vision of rapid prototyping systems that can incorporate standard CPU modules, for example a packaged version of the popular cheap Arduino board, along with sensors and actuators. Such a notion is perfectly practical, but implies a level of standardization that's well over the horizon. Indeed, I had some familiarity with "Plug & Play" standardization efforts during my corporate days, and the phrase still fills me with a vague sense of dread.]

* HUMAN MICROBIOME (2): We already knew a little about the operation of the human microbiome before metagenomic studies of it began. In the 1980s it was learned that vitamin B-12 is critical for a number of cellular functions, and that the enzymes required to synthesize vitamin B-12 were provided by bacterial commensals. Similarly, it has been long known that gut bacteria break down some components of food that we can't digest on our own. We know a lot more about our commensals now -- two of them being known to play significant roles in both digestion and regulation of appetite.

The bacterium Bacteroides thetaiotaomicron is well adapted to breaking down complex carbohydrates into glucose and other small, easily digested sugars. The human genome lacks most of the genes for enzymes that could "crack" complex carbohydrates; B. thetaiotaomicron, in contrast, has genes coding for more than 260 enzymes that digest plant matter. Details of the operation of B. thetaiotaomicron were obtained from studies of mice raised in a completely sterile environment, ensuring they had no microbiome, and then exposing them to this bacterium. The bacterium, it turns out, makes its living by digesting complex carbohydrates known as polysaccharides, excreting short-chain fatty acids that the mice would then use as fuel. The research showed that rodents without a microbiome had to eat 30% more calories than those with a microbiome to gain the same amount of weight.

The other bacterium, Helicobacter pylori, has something of a bad reputation it doesn't completely deserve. H. pylori is a borderline "extremophile", being one of the few microorganisms that can survive in stomach acid. In the 1980s, the Australian researchers Barry Marshall and Robin Warren fingered it as a cause of peptic ulcers -- a surprise to the biomedical community, there having been little thought given to the idea that peptic ulcers might be caused by a pathogen. Doctors began to treat peptic ulcer patients with antibiotics, to find the treatment very effective.

But was H. pylori really a pathogen? A researcher named Martin Blaser, now a professor of internal medicine and microbiology at New York University, certainly thought so when he began studying the bacterium, but by 1998 he had determined it was a commensal, publishing a paper in that year that showed H. pylori helps its host regulate stomach acid. The bacterium finds its environment most congenial when the stomach acidity is at a certain level; if the stomach grows too acid, strains of H. pylori that include a gene named cagA begin producing proteins to restrain the production of stomach acid. Unfortunately, in susceptible individuals the result is production of ulcers as well.

A decade later, Blaser published a study that discussed how H. pylori also helps control our appetite. The stomach generates two hormones that help direct appetite: ghrelin, which is an "I'm hungry" signal to the brain, and leptin, which is (among other things) an "I'm full" signal to the brain. Typically ghrelin goes down after eating, but Blaser and his colleagues discovered it doesn't in test subjects without H. pylori. Of course such test subjects tended to gain more weight as a result.

Blaser has no idea of how H. pylori pulls off that trick and, in the absence of a known mechanism, the correlation between ghrelin and the bacterium might be misleading. However, while H. pylori was common in Americans a century ago, it is rare now, with only about 6% of American children testing positive for it; American kids are being dosed with antibiotics from an early age, and of course that influences the microbiome. Does the loss of H. pylori lead to childhood obesity? Blaser isn't sure it's a significant factor, but he's inclined to suspect so.

Blaser points out that other factors are limiting human microbiome diversity. The increase in the number of births by cesarean section results in babies who haven't picked up a dose of bacteria from their mothers. Smaller families also mean less exposure to bacteria via siblings, and cleaner water similarly reduces exposure to microorganisms. That of course isn't an entirely bad thing, but it does mean that we are living with increasingly limited microbiomes.

* Studies of B. thetaiotaomicron and H. pylori are only scratching the surface of the mysteries of human-microbiome interactions. One major puzzle is how microbial commensals live happily in our bodies when our immune system should be attacking them. That is related to the question of why our immune system doesn't attack our own body; such "autoimmune" reactions can occur, with unpleasant results, and there are hints that one of the reasons it doesn't do that normally is because of commensals.

Biologist Sarkis K. Mazmanian of the California Institute of Technology and his colleagues have been zeroing in on a common microorganism named Bacteroides fragilis, found in about three quarters of the population. Working with mice lacking a microbiome, the Caltech researchers found that such mice tended to have dangerously overactive immune systems. Introducing B. fragilis restored the immune system balance. It turns out that B. fragilis usually has a surface sugar molecule named polysaccharide A; if it's missing, the immune system targets B. fragilis as an invader. The Caltech researchers chased down the chain of interactions derived from polysaccharide A and determined that its presence helped regulate the immune system. Mazmanian believes that other of our commensals have similar interactions with the immune system, saying: "This is just the first example. There are, no doubt, many more to come."

As with other commensals, our modern lifestyle is reducing the population of B. fragilis in the microbiome -- and Mazmanian suspects that its decline may be reflected by higher rates of autoimmune disorders. As with the correlation between loss of H. pylori and childhood obesity, the connection is merely suggestive, Mazmanian saying that "the burden of proof is on us, the scientists, and prove there is cause and effect by deciphering the mechanisms underlying them." [TO BE CONTINUED]

* THE TORPEDO (17): Along with the Mark 24 / FIDO, two other passive homing torpedoes saw service with the US Navy in World War II. The Mark 27 torpedo, AKA "CUTIE", was a submarine-launched anti-escort-vessel weapon based on the Mark 24. The original "Mark 27 Mod 0" was a minimally modified Mark 24 fitted with wooden rails to permit loading into 53.3-centimeter (21-inch) torpedo tubes; a floor switch instead of a ceiling switch so it would not attack the launching submarine; and various arming, warm-up and starting controls to suit torpedo tube / swim-out launch mode.

Deliveries of the Mark 27 began in June 1944, with over a hundred launched, scoring 33 hits that resulted in 24 sinkings. A "Mod 3" was developed that was longer, had a heavier warhead, and a gyro for a straight runout to get it out of range of the launch platform. It was not put into production, but it did lead to a "Mod 4" that was produced in the postwar period. A 53.3-centimeter (21-inch) "Mark 28" homing torpedo for submarine launch was produced late in the war, though it saw little operational use. It remained in service up to 1960.

Passive homing was tricky enough; developing an active sonar homing system that could fit into a torpedo was far more challenging. US Navy work on active homing torpedoes paralleled work on passive homing, but it wasn't until 1944 that an active homing torpedo was demonstrated. Since an active homing torpedo only got a control input when it heard an echo from a sonar "ping", the torpedo had to have a gyro system to keep it on track between course corrections. It also had to discriminate against "clutter", or sonar reflections from the seafloor and the like; that was done by disabling the sonar receiver system for a very brief interval after the generation of a ping -- meaning echoes from nearby surfaces were ignored -- as well as use of some other tricks. The end result was the "Mark 32", which was effectively a Mark 24 / FIDO with an active homing head. A few hundred were manufactured after the end of the war; the Mark 32 didn't amount to much in service, but it did point the way to better postwar torpedoes.
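The effect of switching off the receiver after each ping can be sketched with a little arithmetic: an echo from range R arrives after a round trip of 2R divided by the speed of sound, so a blanking interval sets a minimum range inside which reflections are ignored. The Python below is only an illustration; the nominal sound speed of 1,500 meters per second, the blanking times, and the function names are assumptions, not Mark 32 specifications.

```python
SOUND_SPEED_SEAWATER_M_S = 1500.0  # nominal value, assumed for illustration


def echo_range_m(round_trip_s, sound_speed_m_s=SOUND_SPEED_SEAWATER_M_S):
    """Range to a reflector whose echo arrives round_trip_s after the ping."""
    # The sound travels out and back, so the one-way range is half the path.
    return sound_speed_m_s * round_trip_s / 2.0


def gated_echoes(echo_times_s, blanking_s):
    """Keep only echoes that arrive after the receiver has been re-enabled."""
    return [t for t in echo_times_s if t >= blanking_s]


# Hypothetical case: receiver disabled for 20 milliseconds after each ping.
blanking_s = 0.020
minimum_range_m = echo_range_m(blanking_s)  # nearer reflectors are ignored
heard = gated_echoes([0.005, 0.020, 0.100], blanking_s)
```

With these made-up numbers, anything closer than about 15 meters is ignored, which is the trade-off of the scheme: nearby clutter is suppressed, but so is a nearby target.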

Another innovation developed during the conflict that would prove valuable after the war was an improved electric battery. Electric torpedoes with performance along the lines of steam torpedoes finally became practical with the development of the "seawater battery". The seawater battery was based on an electrochemical cell with a magnesium anode, silver chloride cathode, and salt water as the electrolyte. The resulting battery had over three times the energy density of a lead-acid battery -- that is, it could store over three times as much electrical energy for the same weight of battery.

A further advantage of the seawater battery was that it could be easily kept on the shelf. A lead-acid battery has to be filled with sulfuric acid to be used and has a limited lifetime after it has been activated; the seawater battery could be filled when a torpedo was put in the launch tube, making the torpedo much easier to handle and giving it a longer shelf life. The limitation was the use of silver for the cathode, making the battery too expensive for civilian uses. [TO BE CONTINUED]

* SCIENCE NOTES: As reported by BBC.com, hermit crabs are well-known for acquiring mollusc shells to make mobile homes for themselves. The shells do pose a dilemma to the crabs, however, in that too heavy a shell is a burden to haul around. As a result, hermit crabs have evolved behaviors to trim the weight of the shells.

A study of Ecuadorian hermit crabs shows that the modifications are selective, in that they do not compromise the integrity of the shell. Tests showed such modified shells could still resist the attempts of the hermit crab's predators -- raccoons, coatis, and opossums -- to bite through them. Smarts on the part of the crab? Not really; it's pretty much instinct, with the crabs born to do the job right. It's just that those crabs that weren't born to do the job right were winnowed out over the generations.

* It is nothing unusual to find tiny ancient beasts encased in amber, the creatures having become trapped in plant pitch that eventually turned to stone. As reported by BBC.com, a team of researchers has turned up particularly ancient remains from millimeter-sized amber droplets dug up from outcrops near the village of Cortina in the Dolomite Alps of northeastern Italy. After careful analysis of tens of thousands of droplets, the research team found the partial remains of a midge fly and the complete remains of two fossil mites. The fossils date from the late Triassic period, about 230 million years ago, making them about 100 million years older than any other fossil arthropods found to date.

The new mite species are the oldest fossils of an extremely specialized group, the Eriophyoidea, containing about 3,500 living species, all of which feed on plants and sometimes on the plant tumors known as "galls"; as a result, they are sometimes called "gall mites". The ancient mites most likely fed on the leaves of an extinct conifer tree that ultimately preserved them. Today, only about 3% of the species of gall mites feed on conifers, most of them now being adapted to feeding on flowering plants, which weren't around in the late Triassic. However, the fossil gall mites are effectively identical to modern gall mites; having evolved to a configuration that works, the mites have been under no evolutionary pressure to change in any visible way.

* As reported by SCIENCEMAG.org, although we tend to find ladybug beetles cute, they're actually ravenous predators. In Latin America, one of their preferred prey species is the green coffee scale insect (Coccus viridis), and so ladybugs like to lay their eggs in locations where the scale insects are common, to give their offspring something to eat after they hatch. There's one big problem with that arrangement from the point of view of a ladybug: the scale insects are "farmed" by tree-nesting Azteca instabilis ants, who collect the sweet honeydew produced by the scale insects. The ants protect their scale insects, attacking ladybugs and cleaning out their egg clutches.

Since the ladybugs persist in their attention to the scale insects, it seemed likely to entomologists that the beetles had countermeasures. One possible option for countermeasures would be odors, since ants tend to communicate by smell. Researchers working on a coffee plantation in Mexico investigated using olfactometers -- smell measuring instruments -- and found out that was in fact the case, though the details were elaborate.

The ants are attacked themselves by parasitic phorid flies that lay eggs on the ants, with the eggs hatching and devouring the ants. The phorid flies hunt by motion and when the ants detect them about, they emit a pheromone -- smell signal chemical -- to alert the nest, with all the ants going into a protective freeze for up to two hours. It turns out that female ladybugs, especially pregnant ones, are sensitive to that pheromone as well, and when they smell it, they hunt out areas rich in scale insects until the ants revive. Nobody's ever observed any interspecies interaction exactly like it; nature, as always, continues to surprise.

* PRIMEVAL FOREST: China has proven a new frontier in fossil discovery, with new and interesting fossils turned up every year. As reported by an article from AAAS SCIENCE ("Primeval Land Rises From The Ashes" by Mara Hvistendahl, 11 May 2012), China has now produced a primeval forest, buried by a volcanic eruption 298 million years ago, in the Permian period.

In 1999, Wang Jun, now a paleobotanist with the Chinese Academy Of Sciences' Nanjing Institute of Geology & Paleontology, was a postgrad researcher when an adviser presented him with a plant fossil dug up at Wuda, in Inner Mongolia. Wang traveled to Wuda to see if he could find out more about the fossil, only to find a wealth of fossils there. In 2000, Wang attended a meeting of the International Organization of Paleobotany, where he managed to get geologist Hermann Pfefferkorn interested in the Wuda site. The two visited Wuda in 2003 and began to realize the grand extent of the find.

When Wang found it, the Wuda forest deposit covered about 20 square kilometers. The entire forest was covered by volcanic ash in a geological instant, covering it with a layer of "tuff" -- an appropriately-named material resembling glassy chalk -- over 60 centimeters (two feet) thick. Wang calls the site a "vegetational Pompeii". At the time, the area was wetland; the base of the forest was covered by ferns, there being no grasses at the time, while Sigillaria trees, something like giant cattails, rose 20 meters (66 feet) above. The site has not just yielded remains of many obscure fossil plants, it provides a snapshot of an entire ancient ecology. One paleobotanist calls the Wuda material "nothing short of spectacular."

However, Wang is now faced with the destruction of the site, through a simple stroke of bad luck. China is plagued by underground coal fires, which as discussed here in 2005 can go on for decades as a noxious nuisance, and are very hard to extinguish. The ground is burning in the Wuda area, and so in 2006 the Chinese government came up with a straightforward and effective scheme to put out the fire: dig up all the coal as quickly as possible. That means digging up the Wuda fossil deposits.

Wang and Pfefferkorn have been trying to retrieve specimens one step ahead of the excavators, but often they have only been able to sketch out a general map of a forest area and take some samples before the deposit was dug out. Wang is lobbying local officials to leave a square kilometer of the fossil forest intact for long term research, and is also proposing that a museum be constructed to support studies and public interest in the site. He has an ally in the form of Zhang Haiwang, a local coal official who has an interest in fossil studies, and who has temporarily delayed the excavation on occasion to help the researchers. It would be a major loss to science if the very last of an extraordinary find were to be gutted by the earth-movers.

* ECOATM: As reported by an article from BUSINESS WEEK ("The Automated iPhone Pawn Shop" by Caroline Winter, 1 October 2012), the San Diego-based firm EcoATM has come up with a clever business scheme, based on the "recycling for cash" of cellphones and other small electronics gadgets through kiosks, of course named "EcoATMs", sited in malls and supermarkets.

Over 150 EcoATMs have been installed to date, with two or three more going to work every day. The kiosks use cameras and clever software to recognize over 4,000 phones, MP3 players, and tablets with 97.5% accuracy. They assess the physical and operational condition of the device and then offer a payment. The typical payment rate for an iPhone 4/4S is a hefty $175 USD, while a beat-up Samsung Galaxy S yields about $60 USD.

EcoATM was founded in 2008 by a serial entrepreneur named Mark Bowles, who was inspired when he read a statistic that only about 3% of phones worldwide were recycled. He didn't even know where he could recycle his own phones, so he recognized a business opportunity. The firm built an operational prototype in a wooden frame and featuring a touchscreen interface, then placed it in a Nebraska shopping center to see how it would fly. It flew very well, with people lining up to use it and 2,300 phones collected in 30 days.

EcoATM then got $650,000 USD in grants from the US National Science Foundation, followed up by tens of millions of dollars from venture capital firms. EcoATM engineers spent over two years developing a production machine, first focusing on building a library of 3D models for all the phones the kiosk was to recycle; the library not only permits recognition of phone models, but also provides a reference to determine their level of physical damage. The next task was to figure out how to test the phones for functionality, which involved equipping the kiosks with a carousel of different plugs to allow interface with a wide range of devices, along with test routines for the devices.

Of course, it was entirely obvious that the EcoATMs would be magnets for scammers, and in fact unscrupulous users have been able to pawn off several dozen different cheap knockoffs for more than their selling price. EcoATM engineers have been quick to adapt the system to deal with trickery. Thieves do also make use of the kiosks, but a customer has to have a driver's license number and a thumbprint to close a transaction. The article didn't mention it, but one might suspect the kiosk has a camera to photograph the user in each transaction, and might well be sensitive to users wearing disguises.

Phones that are new enough and in good condition are sold to refurbishers, with some ending up in emerging markets but most ending up in the hands of insurance and warranty companies. Tens of millions of people have mobile phone insurance; when a client loses a phone, the insurer sends out a refurbished replacement, which will cost the insurer about half as much as a new item. About a quarter of the phones dropped into EcoATMs are too old or beaten-up to re-use, but the kiosks buy them for a few bucks each, with the phones sold to certified recyclers who reclaim the metals from them.

So far, EcoATM has collected over a half-million phones and other devices, and wants to haul in 20 million or more by 2014. Although wireless carriers often have buy-back programs and users can also sell their phones on Amazon.com or eBay, they don't compete with the EcoATMs for convenience and immediate payment. Bowles commented: "We've had churches where the preacher was passing the plate on Sunday, collecting phones to sell."

* HUMAN MICROBIOME (1): As reported by an article from SCIENTIFIC AMERICAN ("The Ultimate Social Network" by Jennifer Ackerman, June 2012), we've long known that the human body plays host to large numbers of microorganisms -- but until the 21st century, not very much was made of the fact. The perception was that those microorganisms, when they weren't causing disease, were more or less passive residents. Now we are increasingly understanding that the human body is an interactive colony of many organisms -- indeed, we carry around roughly ten times as many microorganisms as there are cells in our body, though typically any one of those microorganisms is far smaller than one of our cells. Biologists are gradually finding out that our body functions are in many ways dependent on our "microbiome".

We've long tended to see microorganisms, "bugs", as nuisances at best and threats at worst. That's partly because microorganisms don't attract our attention if they're not causing problems, and partly because pathogens are generally easier to study than other microorganisms. Symbiotic microorganisms are usually dependent on the host environment and so are hard to culture -- for example, the microorganisms that live in our lower intestine don't like oxygen and have mutual interdependencies. In contrast, a host environment tends to be strongly hostile to pathogens, and so they are more flexible in their ability to deal with unfriendly environments.

A human baby in the womb does not have a microbiome, but begins acquiring a community of microbial "commensals" -- that term being from Latin and meaning "sharing a table" -- at birth, obtaining a "seed stock" from the mother's birth canal. Breast feeding and handling by relatives, as well as contact with bedding and pets, augment the initial stock, and by the time babies are walking they are carrying around a fully operational microbiome. Given the different circumstances under which any one infant can pick up commensals, it's not surprising that the human microbiome can vary substantially between individuals, even between identical twins -- and given the influence of the microbiome, that ensures identical twins are unlikely to have identical life histories.

Analysis of the microbiome has been boosted by the emergence of "metagenomics", a subject discussed at length here in the spring. Trying to culture all the many commensals we carry around would be very hard, but with metagenomic analysis it's relatively straightforward to at least get a survey of what's there. The key to such a survey is the "16S ribosomal RNA gene", which codes for a particular RNA molecule associated with the ribosome, the protein-manufacturing organelle of the cell. That gene amounts to a "key" that allows all the different players to be listed and tagged.

All we get out of that, however, is a list, telling us little about what those players actually do. The next step, figuring out the operation of the microbiome, is much more difficult, involving doping out all the genes; matching the genes to the appropriate microorganisms; and figuring out what the genes are doing. The task would be flatly impossible without modern fast gene sequencers and lots of processing power. In 2010, a European research group published a listing of the genes of the human microbiome in the lower digestive tract, the total coming to 3.3 million genes from more than a thousand species. That's about 150 times bigger than the human genome, which has about 20,000 to 25,000 genes. [TO BE CONTINUED]

* THE TORPEDO (16): The British worked on homing torpedoes during World War II, but they never fielded any such weapons during the conflict. However, they did pass on their research to the Americans, who proved energetic in developing the technology.

The first US homing torpedoes used passive homing systems that detected ship noise, primarily cavitation noise from the screws. Only days after Pearl Harbor, at a time when German U-boats were raising complete hell along America's East Coast, the Navy put out a requirement for an electric-powered homing torpedo for carriage by aircraft to attack submarines. Due to the aircraft carriage requirement, it necessarily had to be a relatively light weapon, and due to electric propulsion as well as noise issues, a low-speed weapon.

It was indicative of the energetic wartime industrial culture that the job was tackled by a multi-organization team involving General Electric (GE), Harvard, Bell Labs, and others. Even more intriguing, the group developed two competitive designs, and had the final selection, the "Mark 24 / FIDO", in production by Western Electric by May 1943, less than a year and a half from the green light, an impressive engineering achievement. When FIDO entered service, the Navy was still struggling with the Mark 14. The FIDO worked, too, performing its first kill on 14 May 1943, when a US Navy Consolidated PBY Catalina flying boat sank the U-boat U-640, the first of dozens of German submarines the Mark 24 would send to the bottom. It must have seemed like pulp science fiction to the aircrews that used it in combat.

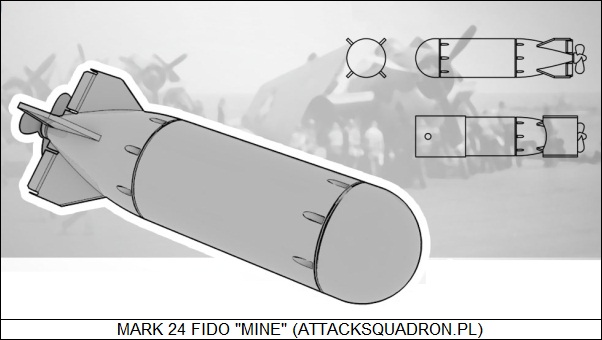

The Mark 24 that emerged had a weight of 308 kilograms (680 pounds) and a form factor of 48 x 213 centimeters (19 x 84 inches) -- absurdly stubby in appearance, which may have been one of the reasons it was formally designated a "mine". It was powered by a lead-acid battery and had a warhead containing 42 kilograms (92 pounds) of high explosive. FIDO solved many of the basic problems of homing torpedo design. It used a differential homing scheme, with four hydrophones spaced around the torpedo midsection to create "crosshairs", the homing system acting to keep the sound balanced in all four hydrophones. The midsection positioning improved homing through use of "body shadowing", ensuring that a hydrophone on one side and the hydrophone on the opposite side didn't pick up sounds from each other's "quadrant".

The idea sounds simple in concept, but it was tricky in practice; one difficulty was that the sound input had to be amplified, and it was hard to build two amplifiers that had the same amplification. FIDO got around that issue by using a single amplifier and switching it between hydrophones. It seems that FIDO also had to be calibrated by service crews before use, by turning potentiometers to ensure the homing system was properly "boresighted". The control system also had to be designed with the proper sensitivity: if it was too insensitive, it wouldn't be able to track a target; if it was too sensitive, it would "overshoot" and weave on the way to a target, possibly losing target lock. Incidentally, the torpedo operated at a fixed depth using a classic torpedo hydrostat / pendulum control system until it acquired a target, when the homing system took over.
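The balancing scheme and the sensitivity tradeoff can be illustrated with a toy model. The following Python sketch is an illustration only, not a reconstruction of FIDO's actual circuitry: all of the names (`HydrophoneLevels`, `steering_command`, `simulate_bearing_error`, and so on) are hypothetical, and the model assumes that only the hydrophone on the target's side hears the target, at a level proportional to the bearing error, and that the torpedo turns by exactly the commanded amount at each step.

```python
from dataclasses import dataclass


@dataclass
class HydrophoneLevels:
    """Sound level heard by each of the four hydrophones (arbitrary units)."""
    up: float
    down: float
    left: float
    right: float


def clamp(value, limit):
    """Restrict value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def read_through_shared_amplifier(raw, amp_gain):
    """Amplify each raw hydrophone signal in turn with one shared gain.

    Switching a single amplifier between hydrophones means every channel
    sees exactly the same amplification, so the balance is not upset by
    mismatched amplifiers.
    """
    return HydrophoneLevels(
        up=raw.up * amp_gain,
        down=raw.down * amp_gain,
        left=raw.left * amp_gain,
        right=raw.right * amp_gain,
    )


def steering_command(levels, sensitivity, max_deflection=1.0):
    """Return (yaw, pitch) commands that turn toward the louder side.

    Positive yaw means turn right; positive pitch means nose up.
    """
    yaw = clamp(sensitivity * (levels.right - levels.left), max_deflection)
    pitch = clamp(sensitivity * (levels.up - levels.down), max_deflection)
    return yaw, pitch


def simulate_bearing_error(sensitivity, steps=10, initial_error=0.5):
    """Toy one-axis model: track the target's bearing error over time.

    Assumption (not from the source): body shadowing means only the
    hydrophone on the target's side hears it, at a level proportional to
    the bearing error, and each step the torpedo turns by exactly the
    commanded yaw.
    """
    error = initial_error
    history = [error]
    for _ in range(steps):
        raw = HydrophoneLevels(up=0.0, down=0.0,
                               left=max(-error, 0.0), right=max(error, 0.0))
        yaw, _pitch = steering_command(
            read_through_shared_amplifier(raw, amp_gain=1.0), sensitivity)
        error -= yaw
        history.append(error)
    return history


def count_weaves(history):
    """Count how often the bearing error flips from one side to the other."""
    return sum(1 for a, b in zip(history, history[1:]) if a * b < 0)
```

In this toy model, a very low sensitivity such as 0.05 leaves most of the bearing error uncorrected after ten steps; a moderate value such as 0.5 closes on the target without ever crossing over; and a high value such as 1.8 swings past the target on every step, which is the "overshoot and weave" behavior described above.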

Another issue was to protect friendly vessels under attack by a U-boat from being hit by a FIDO. As a safety feature, homing was disabled and hydrostat / pendulum control re-established if the torpedo rose above a ceiling set at about 12 meters (40 feet). When the Navy introduced submarine-launched homing torpedoes to attack surface ships, such weapons had a reversed limiting system, making sure they turned off homing below a certain depth so they didn't home in on the launch submarine. Another trick developed for that purpose was to not enable homing until the torpedo had crossed a "run-out" distance, and was out of homing range of the submarine that had launched it. [TO BE CONTINUED]

* GIMMICKS & GADGETS: The troublesome phenomenon of underground coal fires was discussed here in 2005. As discussed in BUSINESS WEEK, there's talk of deliberately starting underground coal fires, as a means of tapping deeply-buried coal seams for energy.

"Underground coal gasification (UCG)" is not a new idea, Sir William Siemens having pioneered UCG in the 1860s to light the streets of London. However, the scheme was expensive and never really caught on, at least before energy prices skyrocketed and made UCG more competitive. Improved seismic mapping of underground structures and techniques developed for "fracking" natural gas out of shales have also helped raise the visibility of UCG.

The idea behind UCG is to pump oxygen and steam down a borehole into a deep coal seam, then ignite the coal. The burning coal generates "synthesis gas / syngas", made up of hydrogen and carbon monoxide, mixed with carbon dioxide. The gas is pumped to the surface, with the syngas used as a fuel feedstock -- the hydrogen can be directly burned for energy -- and the carbon dioxide pumped back down into the coal deposit. It sounds dodgy, presenting the risk of starting an uncontrolled underground coal fire, and it certainly requires a good understanding of the layout of the underground coal seam. However, the fire is only sustained by the oxygen pumped in from topside; shut off the oxygen, and the fire goes out.

On the plus side, UCG avoids digging deep into the earth, and when the seam is burned out the coal ash, loaded with toxic heavy metals, remains trapped deep in the ground. UCG also isn't as dangerous as conventional coal mining, which kills scores of miners in China every year. Environmental groups are cautiously supportive. In an era of relatively cheap natural gas, UCG still remains expensive; however, it's being seriously considered in places that are not particularly blessed with underground gas reserves but are loaded with coal deposits, such as Canada, South Africa, China, New Zealand, and Uzbekistan. UCG's not on the front burner in the USA, though American mining companies do recognize its potential, some analysts suggesting that UCG could provide five times the energy of America's accessible coal deposits.

* The laser -- "light amplification by stimulated emission of radiation" -- burst onto the scene with a splash in the early 1960s, but the basic concept wasn't new, having been preceded by a similar microwave device, the "maser", which was introduced in 1953. Masers have never attracted much attention, mostly because they have always been expensive, fiddly devices that require cryogenic cooling or intensive magnetic fields; they are mostly used as ultra-low-noise amplifiers for radio telescopes and other sensitive instruments.

Now a team of British researchers at the National Physical Laboratory and Imperial College London have developed a (potentially) low-cost maser based on a crystal of a material named "p-terphenyl", seeded with chains of molecules named "pentacene". It is "pumped" by a commercial yellow-light medical laser, with the crystal then shedding the energy when hit by microwaves that stimulate it into emitting a stronger version of the input signal. It doesn't require cryogenic cooling or strong magnetic fields. The researchers believe that a convenient, cheap maser could find a wide range of applications in communications and instrumentation.

* BUSINESS WEEK zeroed in on Woodman Labs and its "GoPro" miniature ruggedized videocam, which action sports enthusiasts can strap onto a helmet or across the chest to generate thrilling HD videos of wild rides. GoPro has caught on; the company sold about a million HD Hero2 videocams in 2011. To no surprise, the little cameras are being used for plenty of other applications; fire departments have used them for training, marine biologists have used them for their research, and the US Army has used them in field tests.

Woodman Labs has now introduced the HD Hero3, which is 25% lighter and 30% smaller than its predecessor. It comes in a range of models, priced from $200 USD to $400 USD; the high-end model comes with wi-fi capabilities to allow the camera to be controlled from a smartphone. The next generation will be able to generate streaming video that can be viewed on a smartphone. Competition is popping up, of course, with Sony of Japan introducing their "Action Cam" line, which has been judged entirely competitive in capability and price. Lower-end Chinese vendors are expected to jump in soon as well. Rugged baby videocams appear to be more than a flash in the pan; it might not be too long before they're ordinary, universal, and no doubt often denounced by the grumpy as yet another public nuisance.

* TECHSHOPS ON A ROLL: There's been talk that in the era of software fiddling the urge to tinker with hardware has been on the fade, but as discussed in an article from BUSINESS WEEK ("The Builders", by Ashlee Vance, 28 May 2012), a movement is underway that shows that's not the case: TechShops. A TechShop is a club, organized around a facility loaded up with tools ranging from sewing machines to computer-controlled milling stations. Members sign up for about a hundred bucks a month to obtain free use of the facility, paying a little more to take classes as they see the need.

TechShops are the brainchild of Jim Newton, a Silicon Valley hardware engineer who has done consulting for the popular MYTHBUSTERS TV series. He got into BattleBots, the home-built robots that fight each other in contests, and eventually started teaching a class on them. The pupils had a clear need for access to tools, and so he came up with the idea of the TechShop. He announced the scheme at the 2006 Maker Faire, a yearly gathering of hardware tinkerers in the San Francisco Bay Area, and people handed him dues on the spot. He quickly obtained more backers with deeper pockets, and soon he had $350,000 USD to open up the first TechShop, in Menlo Park, California.